Is It Time to Learn About Deep Learning?

Artificial intelligence (AI) is one of those hyped topics that often comes and goes with little understanding of what’s involved or how it can be monetized. It’s not unique in that respect; robotics and a host of other topics come to mind. They typically peak as research turns into products that ride the wave and then disappear, or at least it seems that way.

In actuality, though, they really do become products. At this juncture, the practical applications hide from the spotlight but continue to grow in acceptance. This is true even for AI, whose techniques drive everything from financial transactions to Roombas.

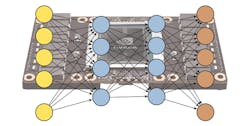

The latest idea to bring AI to the forefront is “deep learning” technology, which is based on deep neural nets (DNNs). Deep learning has moved AI into the spotlight again because of improved performance enabled by platforms like GPUs (see “GPU Targets Deep Learning Applications”). This has turned many applications of DNNs into practical concepts. Keep in mind that some applications may use GPU clusters to train a DNN, while deployment may require only a microcontroller.

So what is a DNN, and how does it apply to your application area?

In the simplest sense, a neural network is an array of interconnected nodes, arranged in layers, that connect a set of inputs to a set of outputs. The nodes have weights associated with them, and the inputs generate a set of outputs based on those weights. The challenge is determining the weights, the number of nodes in each layer, and the number of layers (the depth) of the system. The weights are usually determined by “training” the network on a set of inputs, such as photos of a particular type of object, along with an indication of whether each one should be recognized as positive or negative. DNNs are actually much more complex, but this is the 10,000-ft view.
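To make the 10,000-ft view concrete, here is a minimal sketch in plain Python of the two ideas above: inputs flowing through layers of weighted nodes, and weights being adjusted from examples labeled positive or negative. The names `forward`, `train_node`, and `OR_SAMPLES`, the sigmoid activation, and the learning-rate and epoch values are all illustrative assumptions. The training loop handles a single node only; real DNNs train many layers at once with backpropagation, usually through a library.

```python
import math
import random


def sigmoid(x):
    """Squash a weighted sum into the range 0..1."""
    return 1.0 / (1.0 + math.exp(-x))


def forward(inputs, layers):
    """Propagate inputs through the network.

    Each layer is a list of nodes; each node is a (weights, bias) pair
    with one weight per value coming from the previous layer.
    """
    activations = inputs
    for layer in layers:
        activations = [
            sigmoid(sum(w * a for w, a in zip(weights, activations)) + bias)
            for weights, bias in layer
        ]
    return activations


def train_node(samples, epochs=2000, rate=0.5, seed=0):
    """Fit one node to (inputs, label) samples, where label is 1 or 0.

    Each pass nudges the weights in the direction that shrinks the gap
    between the node's output and the positive/negative label.
    """
    rng = random.Random(seed)
    weights = [rng.uniform(-1.0, 1.0) for _ in samples[0][0]]
    bias = 0.0
    for _ in range(epochs):
        for inputs, label in samples:
            out = sigmoid(sum(w * x for w, x in zip(weights, inputs)) + bias)
            error = label - out
            weights = [w + rate * error * x for w, x in zip(weights, inputs)]
            bias += rate * error
    return weights, bias


# Toy stand-in for "photos plus a positive/negative indication":
# the label is positive when either input is on (logical OR).
OR_SAMPLES = [([0.0, 0.0], 0), ([0.0, 1.0], 1), ([1.0, 0.0], 1), ([1.0, 1.0], 1)]

weights, bias = train_node(OR_SAMPLES)
network = [[(weights, bias)]]  # one layer containing one trained node
for inputs, label in OR_SAMPLES:
    print(inputs, label, round(forward(inputs, network)[0], 3))
```

Adding more `(weights, bias)` pairs to a layer widens the network, and adding more layers to the list deepens it, which is the "number of nodes" and "number of layers" choice described above.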

The complexity of deep-learning systems can be high, so it often helps to have a PhD in the area to develop and tune a DNN for an application. On the plus side, there are significantly more tools available, such as Nvidia’s CUDA Deep Neural Network library (cuDNN) and the TensorFlow Playground.

So back to the question: Is it time to learn about deep learning?

I would say yes, at least to get enough of an understanding to decide whether it’s worth it to learn more. Take a look at some of the applications beyond facial recognition and the other more-common DNN uses to see how they might impact your application area. Recurrent neural networks, for example, take time into account, allowing them to be used in applications like speech recognition.
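As a rough illustration of what "taking time into account" means, the sketch below feeds a sequence through a single node that also receives its own previous output. The function names and the weight values are assumptions chosen for illustration, not taken from any particular library or trained model.

```python
import math


def rnn_step(x, h_prev, w_in, w_rec, bias):
    """One time step: the new state mixes the current input with the previous state."""
    return math.tanh(w_in * x + w_rec * h_prev + bias)


def run_sequence(xs, w_in=1.0, w_rec=0.5, bias=0.0):
    """Feed a sequence through the node, carrying state from step to step."""
    h = 0.0  # no history before the first input
    states = []
    for x in xs:
        h = rnn_step(x, h, w_in, w_rec, bias)
        states.append(h)
    return states


# The second input is 0.0 in both sequences, but the node's response
# differs because it remembers what came before.
print(run_sequence([1.0, 0.0]))
print(run_sequence([0.0, 0.0]))
```

That carried-over state is what lets such a network treat the same sound differently depending on what preceded it, which is why the approach suits speech.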

Most applications will not benefit from DNNs or other AI techniques, such as rule-based or behavior-based systems. However, you won’t know until you at least understand the basics. From there, it might warrant the inclusion of a library or two, or something more extensive. The advantages can often be significant, providing an edge over the competition that may not know about this secret sauce.

About the Author

William Wong Blog

Senior Content Director

Bill Wong covers Digital, Embedded, Systems and Software topics at Electronic Design. He writes a number of columns, including Lab Bench and alt.embedded, plus Bill's Workbench hands-on column. Bill is a Georgia Tech alumnus with a B.S. in electrical engineering and a master's degree in computer science from Rutgers, The State University of New Jersey.

He has written a dozen books and was the first Director of PC Labs at PC Magazine. He has worked in the computer and publication industry for almost 40 years and has been with Electronic Design since 2000. He helps run the Mercer Science and Engineering Fair in Mercer County, NJ.
