This file type includes high resolution graphics and schematics when applicable.

Choosing an Electronic Design Best of Award winner is always a challenge, given how many new technologies show up every year. This year, however, AMD’s High Bandwidth Memory (HBM) caught my eye. The technology allows multiple 3D DRAM stacks to be included in the same package as AMD’s latest GPU (Fig. 1).

AMD has been working on HBM for seven years, according to Bryan Black, the firm’s senior fellow. The project got its start when AMD realized that GDDR5 was not going to scale to match growing GPU performance.

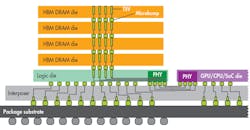

Placing the memory chips on a silicon interposer not only reduces the system footprint. It also significantly increases the memory bandwidth, since HBM does not have the pinout limitations of multiplexed off-chip memory. An HBM stack has a 1,024-bit bus width versus 32 bits for a GDDR5 chip. HBM can deliver 100 Gbytes/s per stack. There are four stacks in the Radeon R9 Series GPU boards, including the R9 Nano (Fig. 2). The HBM approach is also more power efficient. Bandwidth per watt for GDDR5 is 10.66 Gbytes/s/W, while HBM delivers over 35 Gbytes/s/W.
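To put those figures together, here is a rough back-of-the-envelope sketch in Python. It uses only the numbers quoted above (100 Gbytes/s per stack, four stacks, a 1,024-bit bus per stack, and the two bandwidth-per-watt figures). The function and variable names are made up for illustration, and the power estimate assumes the bandwidth-per-watt figures scale linearly, which is a simplification rather than anything AMD has stated.

```python
# Figures quoted in the article.
HBM_GBYTES_PER_S_PER_STACK = 100      # per-stack bandwidth
HBM_STACKS = 4                        # stacks on the R9 boards
HBM_BUS_BITS_PER_STACK = 1024         # bus width per stack
GDDR5_GBYTES_PER_S_PER_WATT = 10.66   # GDDR5 bandwidth per watt
HBM_GBYTES_PER_S_PER_WATT = 35        # HBM bandwidth per watt (article says "over 35")


def aggregate_bandwidth(per_stack_gbytes_per_s, stacks):
    """Total memory bandwidth when every stack runs at its quoted rate."""
    return per_stack_gbytes_per_s * stacks


def memory_power_watts(bandwidth_gbytes_per_s, gbytes_per_s_per_watt):
    """Power needed for a given bandwidth, assuming linear scaling (a simplification)."""
    return bandwidth_gbytes_per_s / gbytes_per_s_per_watt


total_bw = aggregate_bandwidth(HBM_GBYTES_PER_S_PER_STACK, HBM_STACKS)
total_bus_bits = HBM_BUS_BITS_PER_STACK * HBM_STACKS
hbm_watts = memory_power_watts(total_bw, HBM_GBYTES_PER_S_PER_WATT)
gddr5_watts = memory_power_watts(total_bw, GDDR5_GBYTES_PER_S_PER_WATT)

print(f"Aggregate HBM bandwidth: {total_bw} Gbytes/s over {total_bus_bits} bits")
print(f"Estimated memory power at that bandwidth: "
      f"HBM {hbm_watts:.1f} W vs. GDDR5 {gddr5_watts:.1f} W")
```

With these inputs, the four stacks add up to 400 Gbytes/s across a 4,096-bit interface, and GDDR5 would need roughly three times the memory power to match it.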

Packaging is only part of the puzzle. “The DRAM is only part of the equation,” Black notes. “Building the entire solution required contributions from a number of ecosystem partners in packaging, assembly, and test, as well as DRAM. AMD found highly capable partners to develop the fundamental technologies required to bring the final product to market.”

While silicon interposer use has increased, it has been relegated to high-end solutions like Xilinx’s Virtex-7 2000T. The advantage of the interposer is the ability to mix chip technologies, allowing optimized designs for each die in the system. In Xilinx’s case, it was the addition of SERDES. In AMD’s case, it is the HBM memory stacks.

AMD’s implementation is actually more complex because the HBM is a 3D stack of memory dies on top of a logic interface die. These dies employ through-silicon vias (TSVs) to provide the vertical interconnects.

The R9 Series’ 4 Gbytes of HBM provides storage for AMD’s Fiji GPU. The platform supports Microsoft DirectX 12, OpenGL, Mantle, and Vulkan, and it can easily handle 4K displays for high-end gamers. The Fiji GPU has 4096 stream processors, 64 compute units, and 256 texture units. It has a compute performance of 8.19 TFLOPS. The R9 Nano board uses only 175 W of power and plugs into an x16 PCI Express Gen 3 slot.
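The 8.19-TFLOPS figure can be sanity-checked with a short Python sketch. The stream-processor count, compute-unit count, and board power come from the paragraph above. The 1.0-GHz engine clock and the two floating-point operations per stream processor per cycle (one fused multiply-add) are assumptions that do not appear in this article, and the variable names are illustrative only.

```python
# Figures quoted in the article.
STREAM_PROCESSORS = 4096
COMPUTE_UNITS = 64
BOARD_POWER_WATTS = 175           # R9 Nano board power

# Assumptions, not taken from the article.
ASSUMED_CLOCK_HZ = 1.0e9          # assumed 1.0-GHz engine clock
ASSUMED_FLOPS_PER_SP_PER_CYCLE = 2  # assumed: one fused multiply-add per cycle

# Peak single-precision throughput = processors x operations per cycle x clock.
peak_tflops = (STREAM_PROCESSORS * ASSUMED_FLOPS_PER_SP_PER_CYCLE
               * ASSUMED_CLOCK_HZ) / 1e12

# How the stream processors divide across the compute units.
sp_per_cu = STREAM_PROCESSORS // COMPUTE_UNITS

# Rough efficiency figure at the quoted board power.
gflops_per_watt = peak_tflops * 1000 / BOARD_POWER_WATTS

print(f"Peak compute: {peak_tflops:.2f} TFLOPS")
print(f"Stream processors per compute unit: {sp_per_cu}")
print(f"Compute efficiency: {gflops_per_watt:.1f} GFLOPS/W")
```

Under those assumptions, the result lines up with the quoted compute performance and works out to 64 stream processors per compute unit.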

The R9 Series supports other AMD technologies, including FreeSync, LiquidVR, Eyefinity, and CrossFire. FreeSync synchronizes the display’s refresh rate with the GPU’s frame output to reduce tearing and stutter. LiquidVR assists virtual reality displays by providing services to reduce motion-to-photon latency to less than 10 milliseconds. Eyefinity provides multiple display support, while CrossFire allows multiple GPUs to be linked for even higher performance.
