Embedded Tech at CES—From the Sublime to the…Invasive?

Support for Amazon Alexa and Google Home/Assistant was plastered all over booths and products at this year’s Consumer Electronics Show (CES). “Hey Google” signs were larger than life. These services were in everything from smart TVs to smart refrigerators. It was hard to find a product that lacked a speaker and microphone array tied to the cloud.

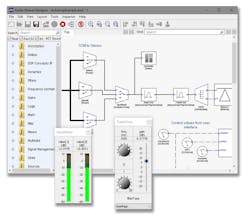

DSP Concepts was highlighting its partnership with Amlogic and Ambiq Micro. These two chip companies have low-power ARM Cortex families that mesh well with DSP Concepts’ Audio Weaver (Fig. 1). Audio Weaver is a tool that builds a code base from a graphical model using a graphical interface similar to National Instruments’ LabVIEW or MathWorks’ Simulink.

1. DSP Concepts’ Audio Weaver builds a code base from a graphical model using a graphical interface similar to National Instruments’ LabVIEW or MathWorks’ Simulink.

Audio Weaver is used to build custom audio-processing systems that are needed to support voice platforms like Alexa, Google Assistant, Apple Siri, and Microsoft Cortana. The code can run on something as small as a Cortex-M4 with plenty of headroom left over for applications. This support becomes very interesting when considering battery-powered devices like smart ear buds or other wearable devices.

Amazon has already qualified the Amlogic A113X reference design that uses DSP Concepts software. I also saw a compact, battery-powered device running an Ambiq Micro Apollo2 chip that would operate full-time for a week using a CR2032 battery. Its longevity is courtesy of Ambiq Micro’s use of Subthreshold Power Optimized Technology (SPOT). The system also integrated DSP Concepts’ Quiescent Sound Detection, Beamforming, and Noise Reduction, along with Sensory’s TrulyHandsfree Low Power Sound, Keyword, and Command Phrase.
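To put a week of full-time operation on a CR2032 in perspective, here is a rough back-of-the-envelope sketch in Python. The 225-mAh capacity is an assumed nominal figure for a CR2032, not a number from the demo, and real capacity varies with vendor, load, and temperature. The function name is purely illustrative.

```python
# Back-of-the-envelope battery budget. The capacity below is an ASSUMPTION
# (a commonly quoted nominal value), not a figure from the demo.
CR2032_CAPACITY_MAH = 225.0


def average_current_ma(capacity_mah: float, runtime_hours: float) -> float:
    """Return the average current (mA) that drains the cell in runtime_hours."""
    if runtime_hours <= 0:
        raise ValueError("runtime_hours must be positive")
    return capacity_mah / runtime_hours


one_week_hours = 7 * 24  # 168 hours
budget_ma = average_current_ma(CR2032_CAPACITY_MAH, one_week_hours)
print(f"Average current budget for one week: {budget_ma:.2f} mA")
```

Under that assumption, the whole system, including always-on listening, would need to average roughly 1.3 mA. That is only an estimate, but it shows why subthreshold operation matters for this class of device.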

Into the Car

Next we switch over to Media Oriented Systems Transport (MOST) automotive networks with Microchip’s INIC chip demo (Fig. 2). It was playing music, streaming video, and bridging a pair of Raspberry Pi systems via Ethernet connections.

2. Microchip’s INIC chips support the MOST synchronous network. In this demo, the two Raspberry Pi boards (left and right) are linked via Ethernet that’s bridged over the MOST network.

MOST has been around for decades and is employed in many of the latest cars. It’s getting a lot of competition from automotive Ethernet and systems like Maxim’s GMSL. Still, it has many advantages, such as the ability to use nodes that don’t require a host processor. Digital and streaming data can be exchanged via the controller chip, allowing for very simple nodes like amplified speakers. One idea might be to use this in embedded applications like robotics, where its synchronous nature makes timing easier. It can also operate over twisted pair for simplified wiring.

Sticking with the automotive theme, artificial intelligence (AI) is finding its way into more systems. Elektrobit, like many others, is into AI and machine learning (ML) in a big way. It was showing a self-driving car demo (Fig. 3) using small models so that it could run continuously on the show floor. There were kiosks next to the demo area where I could request a virtual ride à la Lyft or Uber. The model car would arrive, stop, and then proceed to the destination—all without hitting another car or running into any of the attendants in the area talking with people like me. This demo highlighted Elektrobit’s EB robinos system.

3. Elektrobit was showing a self-driving car demo using small models that would go to a kiosk when a person requested it. It would then drive to the selected destination.

You get to see all sorts of things at CES. Intel had dancing and flying acrobats with LED uniforms to complement their drones. Plenty of self-driving car demos were taking place, although some had to be canceled due to weather for a time.

What was unexpected was seeing the real Aflac duck (Fig. 4), which was very tame. He was there to highlight another duckling, the My Special Aflac Duck. It will be given to 16,000 children who have been diagnosed with cancer. The average treatment for these children is more than 1,000 days. A web-based app enables children to mirror their care routines. The special duck can emulate the young patients' moods. It can also dance, quack and nuzzle to help comfort children when they need it most.

4. I wasn’t expecting to see the real Aflac duck (left) at CES, but he was there to highlight another duckling (right) that will be given to 16,000 children diagnosed with cancer.

Now back to the embedded stuff.

Collective Advances

The Z-Wave Alliance, Zigbee Alliance, and the Open Connectivity Foundation (OCF) had large booths with lots of products on display (Fig. 5). They really underscored the range of options available to developers. Sigma Designs also released its 700-Series chipset. This chipset increases range and uses less power. A sensor can run on a coin cell for 10 years. It supports the latest Security 2 (S2) framework that we covered earlier, and has an ARM Cortex-M at its core.

The 700-series also supports SmartStart, a deployment methodology that streamlines installation. It uses QR codes that can be read by a smartphone’s camera. Scan the code for a new device into the local gateway and then turn on the device. It will be automatically incorporated into the network with all of the security features enabled.
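The scan-then-power-on flow can be pictured with a toy model in Python. This is only a sketch of the idea as described here, not the Z-Wave API: the `Gateway` class, its method names, and the device codes are all hypothetical, and the real inclusion and S2 key exchange are far more involved.

```python
from dataclasses import dataclass, field
from typing import Dict, Set


@dataclass
class Gateway:
    """Toy gateway (hypothetical): remembers scanned codes, admits matching devices."""
    provisioning_list: Set[str] = field(default_factory=set)
    network: Dict[str, bool] = field(default_factory=dict)  # code -> security enabled

    def scan_qr(self, device_code: str) -> None:
        # Step 1: the installer scans the device's QR code into the gateway.
        self.provisioning_list.add(device_code)

    def on_device_power_on(self, device_code: str) -> bool:
        # Step 2: a device that powers on is admitted only if it was scanned first.
        if device_code not in self.provisioning_list:
            return False
        self.network[device_code] = True  # joined with security enabled
        self.provisioning_list.discard(device_code)
        return True


gateway = Gateway()
gateway.scan_qr("sensor-001")                       # hypothetical device code
joined = gateway.on_device_power_on("sensor-001")   # scanned earlier, so it joins
ignored = gateway.on_device_power_on("sensor-999")  # never scanned, so it is ignored
print(joined, ignored)
```

The point of the design is that the installer never has to put the gateway into a pairing mode by hand. The scan establishes trust ahead of time, and the device joins on its own when powered.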

5. The Z-Wave booth was chock full of products and visitors, as were the Zigbee and Open Connectivity Foundation (OCF) booths around the corner.

The Zigbee Alliance was highlighting the Zigbee PRO 2017 platform. It supports two ISM frequency bands simultaneously. This includes the sub-gigahertz, 800-900 MHz band for regional requirements and the 2.4 GHz band.

The OCF’s IoTivity platform now has a number of certified devices. These include the Haier Group’s washer and Technicolor’s home gateway. The OCF product registry currently has 2,392 certified devices, although this also includes UPnP and AllJoyn certifications.

Finally, I leave you with one item that’s both amazing and disconcerting.

Amazing Advances and Privacy Concerns

Horizon Robotics’ multichannel, advanced perception solution was showing how it can recognize multiple faces from a real-time video stream (Fig. 6). It runs on Intel FPGA technology. Horizon Robotics is delivering a range of AI solutions for everything from autonomous vehicles to smart cities. It also offers the Sunrise and Journey processors. The Sunrise processor targets smart cameras that enable face recognition and tracking, while the Journey processor pushes toward ADAS applications.

6. Horizon Robotics’ multichannel, advanced perception solution was built on Intel FPGA technology (right). It can identify multiple faces in real time.

The demo was using a recorded video stream, but a number of other vendors at CES were doing real-time recognition of people individually and in the crowd. These were for demonstration purposes, and the technology continues to improve in both speed and accuracy.

This type of image recognition is being built into almost anything that has a camera, from a smartphone to a smart refrigerator. A similar trend is occurring with audio input and the smart speaker craze. It’s going to be difficult to avoid this technology, even if you don’t purchase it. Such technology will be watching and listening in every electronics store, as well as in the homes and businesses of others who purchase the products. Security and privacy concerns were discussed at CES, but they were rarely at the top of anyone’s list.

About the Author

William G. Wong

Senior Content Director - Electronic Design and Microwaves & RF

I am Editor of Electronic Design focusing on embedded, software, and systems. As Senior Content Director, I also manage Microwaves & RF and I work with a great team of editors to provide engineers, programmers, developers and technical managers with interesting and useful articles and videos on a regular basis. Check out our free newsletters to see the latest content.

I earned a Bachelor of Electrical Engineering at the Georgia Institute of Technology and a Master’s in Computer Science from Rutgers University. I still do a bit of programming using everything from C and C++ to Rust and Ada/SPARK. I do a bit of PHP programming for Drupal websites. I have posted a few Drupal modules.

I still get hands-on with software and electronic hardware. Some of this can be found on our Kit Close-Up video series. You can also see me on many of our TechXchange Talk videos. I am interested in a range of projects from robotics to artificial intelligence.
