Industrial CES: More Than HDTVs and IoT

This year’s Consumer Electronics Show (CES) wasn’t all IoT and large-screen TVs. I saw a wide range of industrial devices on display, both on the show floor and in private demos. Here are a few of the more interesting ones, many of which have both industrial and consumer applications.

FLIR is well known for its thermal imaging technology. It offers standalone devices as well as many handheld sensors for commercial and industrial use. At CES, the company showed a number of new devices, including augmented reality (AR) glasses (Fig. 1) that integrate a thermal imaging camera. This type of device could easily replace handheld units while freeing up the user’s hands for other tasks.

1. FLIR does Google Glass one better with a heat imaging sensor instead of a conventional camera.

FLIR also had an automotive development kit. The idea is that thermal imaging provides superior detection compared to LIDAR and conventional imaging cameras, particularly in low-light and bad-weather conditions.

Ricoh updated its Theta V line of 360-deg. cameras and was showing off a development platform that can be used to create some interesting products (Fig. 2). A 360-deg. camera is typically built from a pair of high-resolution cameras with fisheye lenses mounted back to back. Software stitches the two images together, and the result can be viewed on a screen or with virtual reality glasses. The camera also has several MEMS microphones to record sound for 360-deg. movies.

2. Ricoh’s Theta V (right) is available as separate sensors that can be used to develop interesting 360-deg. camera applications.
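
The stitching step can be sketched in a few lines. The function below maps one pixel of an equirectangular 360-deg. output image back to a pixel in one of two back-to-back fisheye images. It is a minimal sketch under stated assumptions, not Ricoh’s pipeline: it assumes an ideal equidistant fisheye model (image radius proportional to the angle off the optical axis), lenses slightly wider than 180 deg. so the two images overlap (the hypothetical `fov_deg` default), and perfectly aligned optics. The name `equirect_to_fisheye` is hypothetical.

```python
import math


def equirect_to_fisheye(u, v, out_w, out_h, fish_radius, fov_deg=190.0):
    """Map equirectangular output pixel (u, v) to (lens, x, y) in a fisheye image.

    Hypothetical model: lens 0 looks along +z, lens 1 along -z, both follow an
    ideal equidistant projection (r = f * theta), and each fisheye image circle
    is centred at (fish_radius, fish_radius) in its own image.
    """
    # Output pixel -> longitude in [-pi, pi) and latitude in [-pi/2, pi/2].
    lon = (u / out_w) * 2.0 * math.pi - math.pi
    lat = math.pi / 2.0 - (v / out_h) * math.pi
    # Longitude/latitude -> unit direction vector (y points up).
    x = math.cos(lat) * math.sin(lon)
    y = math.sin(lat)
    z = math.cos(lat) * math.cos(lon)
    # Use the lens whose optical axis is closer to this ray.
    lens = 0 if z >= 0.0 else 1
    if lens == 1:
        x, z = -x, -z  # rotate 180 deg. about y into lens 1's frame
    theta = math.acos(max(-1.0, min(1.0, z)))  # angle off the optical axis
    phi = math.atan2(y, x)                     # angle around the optical axis
    # Equidistant fisheye: the image-circle edge sits at theta = fov / 2.
    f = fish_radius / math.radians(fov_deg / 2.0)
    r = f * theta
    # Image y grows downward, so subtract the vertical component.
    return lens, fish_radius + r * math.cos(phi), fish_radius - r * math.sin(phi)


# The centre of a 1920 x 960 panorama lands at the centre of lens 0's image.
print(equirect_to_fisheye(960, 480, 1920, 960, fish_radius=500.0))
```

A real stitcher would run this mapping (or its inverse) for every output pixel, correct each lens’s measured distortion and misalignment, and blend the two images where their fields of view overlap near the seam.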

Ricoh already offers an API that developers can use to connect to the Theta V via USB, but there is more. A headless version of Android runs inside the camera, and Ricoh now allows third parties to install Android applications on the device. The processor has enough headroom and storage to handle applications beyond basic 360-deg. capture. The Theta V can also stream live 4K video, and it supports Wi-Fi and Bluetooth wireless connectivity.

Haptic feedback is all around us, and these days it is a relatively easy task for designers of small devices like smartphones. It is more challenging with large screens or other large surfaces.

It turns out that our sense of touch doesn’t really care whether vibrations are parallel or perpendicular to the surface. This allowed TDK to develop heavy-duty piezo haptic actuators (Fig. 3). Its PowerHap line is available in 2.5G, 7G, and 15G versions. The feedback I got from buttons and controls on a large screen was as good as or better than what a smartphone delivers. TDK also makes very small piezo haptic actuators.

3. TDK gives large displays haptic feedback. The piezo device is mounted on the right of the display.

TDK had half a dozen interesting technology demos, but I specifically wanted to mention its smart crystals (Fig. 4). There are myriad applications for these crystals, ranging from medical to automotive, and the way they are grown is particularly interesting. The crystals are pulled downward as they grow, so they can be formed in arbitrary shapes such as hollow tubes and star-shaped rods. The crystal growth technology was developed in conjunction with Tohoku University.

4. TDK’s smart, shape-controlled crystals have interesting applications ranging from medical to automotive.

Virtual reality (VR) and augmented reality (AR) were everywhere at CES, but resolution and field of view (FOV) remain challenges for both. Realmax was showing off prototype AR glasses with a Leap Motion gesture sensor mounted on top (Fig. 5). They have a wide 100-deg. FOV, which let me play some visually interesting 3D games. By comparison, many AR platforms, like Microsoft’s HoloLens, have a 35-deg. FOV. This is definitely a game-changer for AR.

5. Realmax augmented reality technology has a very wide field of view.

Ricoh wasn’t the only company with 360-deg. camera technology. Detu’s F4 Plus (Fig. 6) aims to kick the technology up a notch with 8K resolution. The F4 Plus can record at 7,680 by 3,840 pixels at 30 frames/s. It has a large f/2.2 aperture and uses a 1/2.3-in. Sony sensor. A number of samples can be viewed online.

6. The Detu F4 Plus records 8K 360-deg. images and video.
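
Some quick arithmetic shows why 8K 360-deg. video is demanding. The sketch below computes pixel counts and an uncompressed data rate for the F4 Plus’s stated 7,680 by 3,840 resolution at 30 frames/s. The 24 bits/pixel figure (8-bit RGB) is an assumption, and the camera’s actual recorded bit rate will be far lower because the video is compressed. The 2:1 frame also maps neatly onto 360 by 180 deg., giving the same angular pixel density in both directions. The function name `video_budget` is hypothetical.

```python
def video_budget(width, height, fps, bits_per_pixel=24):
    """Return pixel counts and raw data rate for an uncompressed video stream.

    bits_per_pixel=24 assumes 8-bit RGB; real cameras compress heavily.
    """
    pixels_per_frame = width * height
    pixels_per_second = pixels_per_frame * fps
    raw_gbit_per_s = pixels_per_second * bits_per_pixel / 1e9
    return pixels_per_frame, pixels_per_second, raw_gbit_per_s


# Equirectangular 360-deg. frame: width spans 360 deg., height spans 180 deg.
WIDTH, HEIGHT, FPS = 7680, 3840, 30
frame, rate, gbps = video_budget(WIDTH, HEIGHT, FPS)
print(f"{frame:,} pixels/frame")          # 29,491,200 pixels/frame
print(f"{rate:,} pixels/s")               # 884,736,000 pixels/s
print(f"{gbps:.1f} Gbit/s uncompressed")  # 21.2 Gbit/s uncompressed
print(f"{WIDTH / 360:.2f} px/deg horizontal, {HEIGHT / 180:.2f} px/deg vertical")
```

At roughly 21 pixels per degree, the full sphere is sharper than lower-resolution 360-deg. cameras, but any single view the user looks at still uses only a slice of those pixels.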

I had to stop by to see the New Bee Drone buzzing around (Fig. 7). This tiny VR racing drone streams video in real time to VR glasses. It was merrily zooming around the booth without hitting anyone, which is more a testament to the pilot, since spotting and avoiding obstacles is entirely under pilot control. I didn’t get to try it out, but it looks like a lot of fun.

7. New Bee Drone is a tiny racing drone flown with virtual reality glasses.

Speaking of fun, this person was definitely having too much of it with one of the Funin VR systems, the Eagle Flight VR (Fig. 8), from Guangzhou Zhuoyuan Virtual Reality Tech. The company has a wide range of VR simulators and gaming platforms. This one rotates and pivots, providing VR helmet-wearing players with an even-more-lifelike VR environment.

8. This person is having too much fun in the Eagle Flight VR virtual reality system from Funin VR.

Texas Instruments’ DLP technology is looking for a home in smart automotive headlights (Fig. 9). DLP works with any light source, including lasers and LEDs. It can provide a high-resolution display or just pump out lots of light, and it can also target that light. In the example, a camera detects the position of a person’s head, and the system then emits light only in the areas around the face so as not to blind the driver of an oncoming car. It could just as easily blank out the oncoming car’s entire windshield. It could also take the heads-up display a step further by projecting information in front of the car or providing information for people outside the car.

9. Texas Instruments’ DLP technology is being employed with smart headlights that do not project light in areas like an oncoming driver’s face (left) or project informational images on the ground (right). A forward-facing camera is used to detect oncoming objects.

DLP allows light to be projected into many different areas, not just straight ahead of the car. This would let the headlights swing farther into a turn as the driver steers. It could also adjust the beam’s height relative to the horizon depending on the intensity. DLP headlights are still at the experimental stage and not yet approved for road use.
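
The face-masking idea can be illustrated with a toy model. A DLP chip steers light with an array of micromirrors, each tilted either toward the projection lens (light on) or away from it (light off), so the sketch below builds an on/off pattern that darkens a padded box around each detected face. It assumes a detector has already produced bounding boxes mapped from camera coordinates into mirror-array coordinates. `Box`, `headlight_mask`, and the `margin` padding are hypothetical names, not part of any TI API.

```python
from dataclasses import dataclass


@dataclass
class Box:
    """Hypothetical face bounding box, already in mirror-array coordinates."""
    left: int
    top: int
    right: int   # exclusive
    bottom: int  # exclusive


def headlight_mask(cols, rows, boxes, margin=2):
    """Return a rows x cols grid of mirror states: 1 = light on, 0 = off."""
    mask = [[1] * cols for _ in range(rows)]
    for b in boxes:
        # Grow each box by a safety margin, then clip it to the array.
        top, bottom = max(0, b.top - margin), min(rows, b.bottom + margin)
        left, right = max(0, b.left - margin), min(cols, b.right + margin)
        for r in range(top, bottom):
            for c in range(left, right):
                mask[r][c] = 0  # mirror tilted away: no light on this spot
    return mask


# A 10 x 6 toy array with one detected face.
for row in headlight_mask(10, 6, [Box(4, 2, 6, 4)], margin=1):
    print("".join("#" if on else "." for on in row))
```

A production system would recompute the pattern every camera frame and would also have to deal with detection latency, vehicle motion, and dimming via pulse-width modulation of the mirrors rather than a simple on/off mask.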

Sensors are interesting, but showing off their functionality normally requires an application. mCube highlighted its MEMS motion sensors with a full body suit lined with sensors (Fig. 10). The position and movement of the young lady wearing it were displayed on the large screen behind her, showing the sensor outputs as well as an animated kinematic model. The company’s ultra-low-power MC3672 3-axis accelerometer is found in applications ranging from fitness wearables to hearing aids.

10. mCube wanted to highlight its range of MEMS sensors, so it equipped a body suit that tracks movement. The data is shown on the screen in the background.
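
Turning raw accelerometer readings into segment orientation is a first step toward a kinematic model like the one on mCube’s screen. The sketch below uses one common convention to estimate pitch and roll from the gravity vector measured by a stationary 3-axis accelerometer. It does not use any mCube or MC3672 API, the function name is hypothetical, and how the suit actually fuses its sensors isn’t described here.

```python
import math


def tilt_from_accel(ax, ay, az):
    """Estimate (pitch, roll) in degrees from one 3-axis accelerometer sample.

    Assumes the sensor is at rest, so the reading is dominated by gravity,
    and that z reads +1 g when the sensor lies flat. Units only need to be
    consistent (g or m/s^2). Yaw cannot be recovered from gravity alone.
    """
    roll = math.atan2(ay, az)
    pitch = math.atan2(-ax, math.hypot(ay, az))
    return math.degrees(pitch), math.degrees(roll)


print(tilt_from_accel(0.0, 0.0, 1.0))  # lying flat: no pitch, no roll
print(tilt_from_accel(0.0, 1.0, 0.0))  # rolled 90 deg. onto its side
```

Because this only works when gravity dominates, motion-capture systems typically combine accelerometers with gyroscopes (and often magnetometers) to track fast movement and heading.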

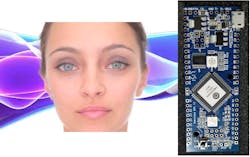

Finally, we come to Emoshape. The firm’s Emotion Processing Unit (EPU) II chip is found in its dev kit (Fig. 11): “The EPU is based on Patrick Levy-Rosenthal’s Psychobiotic Evolutionary Theory extending the Ekman’s theory by using not only 12 primary emotions identified in the psycho-evolutionary theory, but also pain/pleasure and frustration/satisfaction.” The 12 primary emotions are excitement, confidence, happiness, trust, desire, fear, surprise, inattention, sadness, regret, disgust, and anger. The input is conversational text, which could be obtained via voice recognition and natural language processing software.

11. Emoshape’s dev kit (right) is designed to bring emotions to artificial constructs from avatars (left) to robots.

The main difference between this approach and what smart speaker systems like Amazon Alexa and Google Home do is that those systems evaluate speech for commands or requests. They do not analyze the emotions involved. The EPU can also take additional inputs when available, such as a person’s visible state. The EPU’s classifiers achieve up to 86% accuracy in a typical conversation. The EPU II USB dongle allows easy integration with everything from a Raspberry Pi to a robot running Microsoft Windows.

About the Author

William G. Wong

Senior Content Director - Electronic Design and Microwaves & RF

I am Editor of Electronic Design, focusing on embedded, software, and systems. As Senior Content Director, I also manage Microwaves & RF and I work with a great team of editors to provide engineers, programmers, developers and technical managers with interesting and useful articles and videos on a regular basis. Check out our free newsletters to see the latest content.

You can send press releases for new products for possible coverage on the website. I am also interested in receiving contributed articles for publishing on our website. Use our template and send it to me along with a signed release form.

Check out my blog, AltEmbedded on Electronic Design, as well as my latest articles on this site.

You can visit my social media via these links:

- AltEmbedded on Electronic Design

- Bill Wong on Facebook

- @AltEmbedded on Twitter

- Bill Wong on LinkedIn

I earned a Bachelor of Electrical Engineering at the Georgia Institute of Technology and a Master’s in Computer Science from Rutgers University. I still do a bit of programming using everything from C and C++ to Rust and Ada/SPARK. I also do some PHP programming for Drupal websites and have posted a few Drupal modules.

I still get hands-on with software and electronic hardware. Some of this can be found in our Kit Close-Up video series. You can also see me in many of our TechXchange Talk videos. I am interested in a range of projects, from robotics to artificial intelligence.
