Understanding The Protocols Behind The Internet Of Things

The Internet revolutionized how people communicate and work together. It ushered in a new era of free information for everyone, transforming life in ways that were hard to imagine in its early stages. But the next wave of the Internet is not about people. It’s about intelligent, connected devices.

To interact successfully with the real world, these devices must work together with speeds, scales, and capabilities far beyond what people need or use. The Internet of Things (IoT) will change the world, perhaps more profoundly than today’s human-centric Internet.

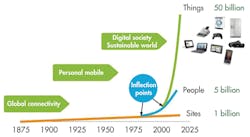

Today’s Internet connects Web sites and workstations. There are approximately a billion sites on the Internet, a huge number that has delivered profound results (Fig. 1).

With alarming speed, the mobile revolution added handsets for most of the world’s population in only a few years. With more than 5 billion smart phones out there, most people have or soon will have access to mobile connectivity.

There also are 50 billion smart devices. The IoT’s opportunity and challenge will be to connect them in a meaningful way to deliver truly distributed machine-to-machine (M2M) applications. The IoT will dwarf the Internet by a factor of 50. It will connect 10 times as many devices as the mobile revolution and be the driving transformation of our time.

Where are all these devices? They are part of the fabric of everyday life. In fact, you own many of them! Recent cars use more than 100 processors. Smart devices pervade industrial systems, hospitals, houses, transportation systems, and more. Today, these systems are weakly connected, but that will quickly change.

Connection on this scale enables applications beyond imagination. The IoT will do fundamental things that have not been done before. The inklings of the possibilities are already quite visible and growing quickly.

What Is The Internet Of Things?

In the early 1990s, every technology was summarized as the onramp to the information superhighway. These types of analogies didn’t really help define the Internet. In reality, the Internet turned out to be a new way to share your life with others, a new business model for shopping, and a different way to educate your children. The Internet is defined more by what you can do than what it does. The Internet of Things suffers from similar confusion.

The big information players are pushing out vision statements trying to define the IoT. Cisco calls it the “Internet of Everything” and says it will be the “latest wave of the Internet—connecting physical objects… to provide better safety, comfort and efficiency.” IBM describes it as “a completely new world-wide Web, one comprised of the messages that digitally empowered devices would send to one another. It is the same Internet, but not the same Web.”

General Electric’s “Industrial Internet” is perhaps the most exciting vision because it directly envisions new applications. At GE, the industrial Internet represents “the convergence of machine and intelligent data… to create brilliant machines.”

At RTI, our company slogan is, “Your Systems. Working as One.” We contend that the IoT will be an entirely new utility. It will be as profound as the cell network, GPS, or the Internet itself.

Everyone describes the future differently, but all agree that the IoT and the intelligent systems it enables will fundamentally change our world.

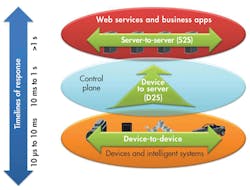

Devices must communicate with each other (D2D). Device data then must be collected and sent to the server infrastructure (D2S). That server infrastructure has to share device data (S2S), possibly providing it back to devices, to analysis programs, or to people. From 30,000 feet, the protocols can be described in this framework as:

• MQTT: a protocol for collecting device data and communicating it to servers (D2S)

• XMPP: a protocol best for connecting devices to people, a special case of the D2S pattern, since people are connected to the servers

• DDS: a fast bus for integrating intelligent machines (D2D)

• AMQP: a queuing system designed to connect servers to each other (S2S)
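As a quick reference, the positioning in the list above can be written down as a small lookup table. This is only a sketch of the article's taxonomy; the names `Pattern`, `PROTOCOL_PATTERN`, and `protocols_for` are invented for illustration, and real deployments overlap more than a table suggests.

```python
from enum import Enum
from typing import List


class Pattern(Enum):
    D2D = "device-to-device"
    D2S = "device-to-server"
    S2S = "server-to-server"


# Positioning copied from the list above; hypothetical names, not a standard API.
PROTOCOL_PATTERN = {
    "MQTT": Pattern.D2S,
    "XMPP": Pattern.D2S,  # special case: people sit behind the servers
    "DDS": Pattern.D2D,
    "AMQP": Pattern.S2S,
}


def protocols_for(pattern: Pattern) -> List[str]:
    """Return the protocols positioned for a pattern, sorted by name."""
    return sorted(name for name, p in PROTOCOL_PATTERN.items() if p is pattern)


print(protocols_for(Pattern.D2S))  # ['MQTT', 'XMPP']
print(protocols_for(Pattern.D2D))  # ['DDS']
```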

Each of these protocols is widely adopted. There are at least 10 implementations of each. Confusion is understandable, because the high-level positioning is similar. In fact, all four claim to be real-time publish-subscribe IoT protocols that can connect thousands of devices. And it’s true, depending on how you define “real time,” “things,” and “devices.”

Nonetheless, they are very different indeed! Today’s Internet supports hundreds of protocols. The IoT will support hundreds more. It’s important to understand the class of use that each of these important protocols addresses.

The simple taxonomy in Figure 2 frames the basic protocol use cases. Of course, it’s not really that simple. For instance, the “control plane” represents some of the complexity in controlling and managing all these connections. Many protocols cooperate in this region.

MQTT, the Message Queue Telemetry Transport, targets device data collection (Fig. 3). As its name states, its main purpose is telemetry, or remote monitoring. Its goal is to collect data from many devices and transport that data to the IT infrastructure. It targets large networks of small devices that need to be monitored or controlled from the cloud.

MQTT makes little attempt to enable device-to-device transfer, nor to “fan out” the data to many recipients. Since it has a clear, compelling single application, MQTT is simple, offering few control options. It also doesn’t need to be particularly fast. In this context, “real time” is typically measured in seconds.

A hub-and-spoke architecture is natural for MQTT. All the devices connect to a data concentrator server, like IBM’s new MessageSight appliance. You don’t want to lose data, so the protocol works on top of TCP, which provides a simple, reliable stream. Since the IT infrastructure uses the data, the entire system is designed to easily transport data into enterprise technologies like ActiveMQ and enterprise service buses (ESBs).

MQTT enables applications like monitoring a huge oil pipeline for leaks or vandalism. Those thousands of sensors must be concentrated into a single location for analysis. When the system finds a problem, it can take action to correct that problem. Other applications for MQTT include power usage monitoring, lighting control, and even intelligent gardening. They share a need for collecting data from many sources and making it available to the IT infrastructure.
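The hub-and-spoke collection pattern described above can be sketched in a few lines. This is a toy in-memory model, not an MQTT client or broker: the `Concentrator` class, the sensor names, and the pressure threshold are all assumptions made up for the example.

```python
from collections import defaultdict


class Concentrator:
    """Toy stand-in for a data concentrator server (hypothetical, not MQTT itself)."""

    def __init__(self, min_pressure):
        self.min_pressure = min_pressure
        self.history = defaultdict(list)  # sensor id -> readings in arrival order

    def publish(self, sensor_id, pressure):
        # Every sensor reports to the hub; sensors never talk to each other.
        self.history[sensor_id].append(pressure)

    def suspected_leaks(self):
        # Analysis happens centrally: flag sensors whose latest reading is too low.
        return sorted(
            sensor for sensor, readings in self.history.items()
            if readings[-1] < self.min_pressure
        )


hub = Concentrator(min_pressure=90.0)
hub.publish("km-001", 101.5)
hub.publish("km-002", 84.0)
hub.publish("km-003", 99.2)
print(hub.suspected_leaks())  # ['km-002']
```

The point of the sketch is the shape of the data flow: many small publishers, one place where the data is gathered and acted on.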

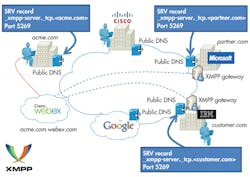

XMPP was originally called “Jabber.” It was developed for instant messaging (IM) to connect people to other people via text messages (Fig. 4). XMPP stands for Extensible Messaging and Presence Protocol. Again, the name reveals the targeted use: presence, meaning people are intimately involved.

XMPP uses the XML text format as its native type, making person-to-person communications natural. Like MQTT, it runs over TCP, or perhaps over HTTP on top of TCP. Its key strength is a “user@domain” addressing scheme that helps connect the needles in the huge Internet haystack.
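To make the addressing and the XML format concrete, here is a minimal sketch using only Python's standard library. XMPP addresses may also carry an optional `/resource` suffix, which the article does not cover; the device address, the message text, and the helper names `split_jid` and `message_stanza` are hypothetical, and no connection is opened.

```python
import xml.etree.ElementTree as ET


def split_jid(jid):
    """Split 'local@domain/resource' into (local, domain, resource)."""
    bare, _, resource = jid.partition("/")
    local, sep, domain = bare.partition("@")
    if not sep:  # no '@' present: the whole bare part is a domain
        local, domain = None, bare
    return local, domain, resource or None


def message_stanza(to, body):
    """Build a simple XMPP-style <message/> element as text."""
    msg = ET.Element("message", {"to": to, "type": "chat"})
    ET.SubElement(msg, "body").text = body
    return ET.tostring(msg, encoding="unicode")


print(split_jid("thermostat@home.example/livingroom"))
# ('thermostat', 'home.example', 'livingroom')
print(message_stanza("thermostat@home.example", "set 21C"))
# <message to="thermostat@home.example" type="chat"><body>set 21C</body></message>
```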

In the IoT context, XMPP offers an easy way to address a device. This is especially handy if that data is going between distant, mostly unrelated points, just like the person-to-person case. It’s not designed to be fast. In fact, most implementations use polling, or checking for updates only on demand. A protocol called BOSH (Bidirectional-streams Over Synchronous HTTP) lets servers push messages. But “real time” to XMPP is on human scales, measured in seconds.

XMPP provides a great way, for instance, to connect your home thermostat to a Web server so you can access it from your phone. Its strengths in addressing, security, and scalability make it ideal for consumer-oriented IoT applications.

In contrast to MQTT and XMPP, the Data Distribution Service (DDS) targets devices that directly use device data. It distributes data to other devices (Fig. 5). While interfacing with the IT infrastructure is supported, DDS’s main purpose is to connect devices to other devices. It is a data-centric middleware standard with roots in high-performance defense, industrial, and embedded applications. DDS can efficiently deliver millions of messages per second to many simultaneous receivers.

Devices demand data very differently than the IT infrastructure demands data. First, devices are fast. “Real time” is often measured in microseconds. Devices need to communicate with many other devices in complex ways, so TCP’s simple and reliable point-to-point streams are far too restrictive. Instead, DDS offers detailed quality-of-service (QoS) control, multicast, configurable reliability, and pervasive redundancy. In addition, fan-out is a key strength. DDS offers powerful ways to filter and select exactly which data goes where, and “where” can be thousands of simultaneous destinations. Some devices are small, so there are lightweight versions of DDS that run in constrained environments.

Hub-and-spoke is completely inappropriate for device data use. Rather, DDS implements direct device-to-device “bus” communication with a relational data model. RTI calls this a “DataBus” because it is the networking analog to a database. Similar to the way a database controls access to stored data, a data bus controls data access and updates by many simultaneous users. This is exactly what many high-performance devices need to work together as a single system.
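The filtering and fan-out ideas above can be illustrated with a toy in-process bus. This is not the DDS API and involves no networking or discovery; `DataBus`, the topic name, and the sample fields are assumptions chosen for the sketch.

```python
class DataBus:
    """Toy bus: each reader registers a filter and gets only matching samples."""

    def __init__(self):
        self._readers = {}  # topic -> list of (content_filter, callback)

    def subscribe(self, topic, callback, content_filter=lambda sample: True):
        self._readers.setdefault(topic, []).append((content_filter, callback))

    def write(self, topic, sample):
        # Fan out one write to every reader whose filter accepts the sample.
        delivered = 0
        for content_filter, callback in self._readers.get(topic, []):
            if content_filter(sample):
                callback(sample)
                delivered += 1
        return delivered


bus = DataBus()
all_temps, hot_only = [], []
bus.subscribe("Temperature", all_temps.append)
bus.subscribe("Temperature", hot_only.append, lambda s: s["celsius"] > 80)

print(bus.write("Temperature", {"id": "turbine-1", "celsius": 72}))  # 1
print(bus.write("Temperature", {"id": "turbine-2", "celsius": 95}))  # 2
print(len(all_temps), len(hot_only))  # 2 1
```

Note that the writer never names its recipients. The bus decides where each sample goes, which is the data-centric idea in miniature.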

High-performance integrated device systems use DDS. It is the only technology that delivers the flexibility, reliability, and speed necessary to build complex, real-time applications. Applications include military systems, wind farms, hospital integration, medical imaging, asset-tracking systems, and automotive test and safety. DDS connects devices together into working, distributed applications at physics speeds.

Finally, the Advanced Message Queuing Protocol (AMQP) is sometimes considered an IoT protocol. AMQP is all about queues (Fig. 6). It sends transactional messages between servers. As a message-centric middleware that arose from the banking industry, it can process thousands of reliable queued transactions.

AMQP is focused on not losing messages. Communications from the publishers to exchanges and from queues to subscribers use TCP, which provides strictly reliable point-to-point connection. Further, endpoints must acknowledge acceptance of each message. The standard also describes an optional transaction mode with a formal multiphase commit sequence. True to its origins in the banking industry, AMQP middleware focuses on tracking all messages and ensuring each is delivered as intended, regardless of failures or reboots.
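The acknowledgment requirement is what lets a queue survive a consumer failure. The sketch below models that behavior in memory; it is not an AMQP client, and `AckQueue`, its method names, and the message bodies are hypothetical.

```python
from collections import deque


class AckQueue:
    """Toy queue: a message is only forgotten once the consumer acknowledges it."""

    def __init__(self):
        self._ready = deque()
        self._unacked = {}  # delivery tag -> message body
        self._next_tag = 1

    def publish(self, body):
        self._ready.append(body)

    def get(self):
        if not self._ready:
            return None
        tag = self._next_tag
        self._next_tag += 1
        body = self._ready.popleft()
        self._unacked[tag] = body  # held until acknowledged
        return tag, body

    def ack(self, tag):
        del self._unacked[tag]

    def requeue_unacked(self):
        # Consumer failed: put unacknowledged messages back, oldest first.
        for tag in sorted(self._unacked, reverse=True):
            self._ready.appendleft(self._unacked.pop(tag))


q = AckQueue()
q.publish("debit 100")
q.publish("credit 100")

tag, body = q.get()        # delivered, but the consumer crashes before acking
q.requeue_unacked()
tag2, body2 = q.get()      # the same message is delivered again
q.ack(tag2)
remaining = q.get()
print(body, body2, remaining[1])  # debit 100 debit 100 credit 100
```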

AMQP is mostly used in business messaging. It usually defines “devices” as mobile handsets communicating with back-office data centers. In the IoT context, AMQP is most appropriate for the control plane or server-based analysis functions.

The IoT needs many protocols. The four outlined here differ markedly. Perhaps it’s easiest to categorize them along a few key dimensions: QoS, addressing, and application.

QoS control is a much better metric than the overloaded “real-time” term. QoS control refers to the flexibility of data delivery. A system with complex QoS control may be harder to understand and program, but it can build much more demanding applications.

For example, consider the reliability QoS. Most protocols run on top of TCP, which delivers strict, simple reliability. Every byte put into the pipe must be delivered to the other end, even if it takes many retries. This is simple and handles many common cases, but it doesn’t allow timing control. TCP’s single-lane traffic backs up if there’s a slow consumer.
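The slow-consumer problem is easy to see with two buffers. In this sketch, one buffer never drops anything (the strict, stream-like policy) while the other keeps only the newest sample; the second policy is shown purely as a contrast and is an assumption of the example, not something TCP offers.

```python
from collections import deque

reliable = deque()           # strict reliability: every sample waits its turn
keep_last = deque(maxlen=1)  # contrast policy: only the newest sample is kept

# The producer writes five samples while the consumer is stalled.
for sample in range(1, 6):
    reliable.append(sample)
    keep_last.append(sample)

# The strict buffer has backed up; the consumer must work through stale data.
print(len(reliable), reliable[0])    # 5 1
# The keep-last buffer holds only the freshest value.
print(len(keep_last), keep_last[0])  # 1 5
```

Neither policy is better in general. A billing record must not be dropped, while a stale sensor reading is often worse than a missing one.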

Because it targets device-to-device communications, DDS differs markedly from the other protocols in QoS control. In addition to reliability, DDS offers QoS control of “liveliness” (when you discover problems), resource usage, discovery, and even timing.
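As one illustration of the “liveliness” idea, a reader can treat a writer as lost when it has not been heard from within a lease period. The following is a minimal sketch under that assumption; `LivelinessMonitor`, the lease value, and the writer names are hypothetical, and timestamps are passed in explicitly so the example stays deterministic.

```python
class LivelinessMonitor:
    """Toy lease check: a writer is 'lost' if silent for longer than the lease."""

    def __init__(self, lease_seconds):
        self.lease_seconds = lease_seconds
        self._last_seen = {}  # writer id -> time of last assertion

    def assert_alive(self, writer_id, now):
        self._last_seen[writer_id] = now

    def lost(self, now):
        return sorted(
            writer for writer, seen in self._last_seen.items()
            if now - seen > self.lease_seconds
        )


monitor = LivelinessMonitor(lease_seconds=0.5)
monitor.assert_alive("pump-a", now=0.0)
monitor.assert_alive("pump-b", now=0.0)
monitor.assert_alive("pump-a", now=0.4)  # pump-a keeps reporting, pump-b goes quiet

print(monitor.lost(now=0.7))  # ['pump-b']
```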

Next, finding the data needle in the huge IoT haystack is a fundamental challenge. XMPP shines here for “single item” discovery. Its “user@domain” addressing leverages the Internet’s well-established conventions. However, XMPP doesn’t easily handle large data sets connected to one server. With its collection-to-a-server design, MQTT handles that case well. If you can connect to the server, you’re on the network. AMQP queues act similarly to servers, but for S2S systems. Again, DDS is an outlier. Instead of a server, it uses a background “discovery” protocol that automatically finds data. DDS systems are typically more contained. Discovery across the wide-area network (WAN) or huge device sets requires special consideration.

Perhaps the most critical distinction comes down to the intended applications. Inter-device data use is a fundamentally different use case from device data collection. For example, turning on your light switch (best for XMPP) is worlds apart from generating that power (DDS), monitoring the transmission lines (MQTT), or analyzing the power usage back at the data center (AMQP).

Of course, there is overlap. For instance, DDS can serve and receive data from the cloud, and MQTT can send information back out to devices. Nonetheless, the fundamental goals of all four protocols differ, the architectures differ, and the capabilities differ. All of these protocols are critical to the (rapid) evolution of the IoT. The Internet of Things is a big place, with room for many protocols. Choose the one for your application carefully, and don’t let familiarity with the protocols you already know bias the choice.


Stan Schneider is the founder of Real-Time Innovations (RTI). Previously, he was an independent technical and management consultant, working with companies in medical products, digital signal processing, aerospace, semiconductor manufacturing, video and television, and networking. Before that, he managed one of the largest laboratories at Stanford, focusing on intelligent mechanical systems. At Sperry Computer Systems (now Unisys), he developed networked communications systems and led the software team responsible for Sperry’s personal computer product line. He began his career in automotive impact testing. He completed his PhD in electrical engineering and computer science at Stanford University. He holds a BS in applied mathematics (summa cum laude) and an MS in computer engineering from the University of Michigan. He is a graduate of Stanford’s Advanced Management College as well.

About the Author

Stan Schneider

CEO, Real-Time Innovations

Dr. Stan Schneider is CEO of Real-Time Innovations (RTI), the Industrial Internet of Things connectivity company. Stan serves as Vice Chair of the Industrial Internet Consortium (IIC) Steering Committee. He also serves on the advisory board for IoT Solutions World Congress, and chairs OpenFog's Fog World keynote committee. He received Embedded Computing Design’s Top Embedded Innovator Award in 2015 and was named one of IoTOne’s top-10 most influential in the IIoT in 2017. He holds a PhD in EE/CS from Stanford.
