If you’re a fabless IC company looking to optimize production, or are generally interested in the practical application of big data, then you should definitely stop by and see Marc Jacobs’ presentation at the International Test Conference next week.

Jacobs is vice president of engineering at Marvell, and after you hear him talk, you’ll not only understand the true value and best application of test data, you’ll also find out why a company called Optimal+ just raised $42 million in growth capital and, a week later, was recognized as a best-in-class company by Frost & Sullivan.

In the context of test, it is still the case that many engineers test toward a given requirement: if the part is within the required spec, it’s good to go. The data provides a record that it passed, and it often ends there. That is a frustrating waste of potential.

In the context of the Internet of Things (IoT) and Big Data, some companies string a few chips together and declare themselves IoT companies, while pundits and analysts declare “data” to be the big thing. Both are wrong. Anyone can put chips together, and anyone can generate data.

The “big thing” is the analysis of data over time and applying the results of that analysis toward either predicting outcomes or discovering trends and conditions that would not otherwise have been discernible.

Not to get too “Rumsfeld-y” on you, but finding out something really useful, something you didn’t even know to look for, is a precious moment of enlightenment. That’s what test- and IoT-generated data can provide, and it’s why Optimal+ has gotten so much attention and why it’s now testing 25 billion ICs per year.

So who or what is Optimal+? I had never heard of it until recently. I was following up with National Instruments after NIWeek to find out more about going beyond basic testing to spec and reaching the point where you start seeing those ethereal trends that can provide a competitive advantage, if they are detected and fed back into a design.

That’s when NI’s Adam Foster laid it on me. NI recognized that big data in the production test environment can be used to understand trends in test results and yields in real time, so it recently worked with Optimal+ to bring that company’s technology to NI’s Semiconductor Test System (STS) to do just that.

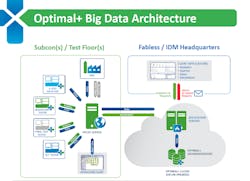

Optimal+ was founded in 2005. Its infrastructure analyzes and processes wafer data once the wafers reach the test stage on the factory floor. It collects, cleans, and aggregates data from multiple manufacturing locations and then analyzes that data to provide insights that help customers improve quality, yield, and processing times, while providing complete supply-chain visibility.

“In short,” wrote Foster in an email, “our STS sends results back to a centralized server running Optimal+ algorithms to determine what test sequences can be safely skipped to reduce test times. In the disruptive and highly competitive semiconductor space, every microsecond counts, so these fine-tuned improvements mean big money for companies.”
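Optimal+ doesn’t publish the algorithms that decide which test sequences to skip, but the general idea Foster describes, using accumulated results to prune a test flow, is usually called adaptive test. The sketch below is a hypothetical illustration of one very simple policy, not Optimal+’s method: a test is skipped once it has passed on every one of the last `window` units, while every `sample_every`-th unit still receives the full sequence so that a drifting process gets caught. The class name, parameters, and thresholds are all assumptions chosen for illustration.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class AdaptiveTestPlan:
    """Hypothetical skip policy (not Optimal+'s algorithm).

    A test becomes skippable once it has passed on every one of the last
    `window` units it ran on. Every `sample_every`-th unit still runs the
    full sequence, so a test that starts failing is re-enabled.
    """

    window: int = 1000          # assumed length of the pass streak required
    sample_every: int = 50      # assumed full-coverage sampling interval
    history: dict = field(default_factory=dict)  # test name -> deque of bools
    unit_count: int = 0

    def tests_to_run(self, sequence):
        """Return the subset of `sequence` to execute on the next unit."""
        self.unit_count += 1
        if self.unit_count % self.sample_every == 0:
            return list(sequence)  # periodic full sample
        selected = []
        for name in sequence:
            h = self.history.get(name)
            # "Stable" means a full window of results, all passing.
            stable = h is not None and len(h) == self.window and all(h)
            if not stable:
                selected.append(name)
        return selected

    def record(self, name, passed):
        """Log the pass/fail result of one executed test."""
        h = self.history.setdefault(name, deque(maxlen=self.window))
        h.append(passed)
```

A production system would weigh much more than a pass streak (parametric margins, lot and wafer context, the cost of a test escape), but even this toy version shows the trade-off: every skipped test saves tester time, at the price of coverage that the periodic full sample only partly restores.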

Time is money when it comes to IC test, or any test for that matter. But it gets even more interesting: the parametric data from test sequences can be stored in the cloud and tied to the serial number of a chip, component, or system.

Now, rather than simply reporting pass/fail, the component under test can be scored, potentially making it possible to predict how a component of one grade will perform when paired with a component of another grade. “This could help consumer electronic device manufacturers optimize their boards by optimizing the pairing of components within their devices,” Foster wrote.

While Foster isn’t yet ready to comment on how to feed that analysis back into the design process for the next iteration or a new design, he did say, “If there is one thing that we have learned, the right data with the right algorithm can unlock tremendous potential in the high-speed test industry.”

David Park, vice president of worldwide marketing at Optimal+, has much to say on this topic and will do so in an upcoming feature here on Electronic Design. Suffice it to say for now that he agrees with Foster, and he adds a few points:

- Using analytics can increase yield recovery by up to 2%

- Good analytics can prevent bad ICs from getting into your supply chain

- A fabless company can change the game by analyzing a supplier based on good die per hour to get a true feel for how that supplier can meet its needs

- Analytics can identify specific signatures that might indicate a fault condition, so you can then look for them, or set the parameters for accepting or rejecting die
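Park doesn’t define good die per hour here; a common reading is passing die divided by the tester time spent producing them, which folds yield and test throughput into a single number. The hypothetical sketch below (the class, field names, and figures are invented for illustration, not Optimal+ data) shows why that metric can rank suppliers differently than yield alone.

```python
from dataclasses import dataclass


@dataclass
class SupplierRun:
    """Hypothetical summary of one supplier's test-floor output."""
    name: str
    die_tested: int
    die_passed: int
    tester_hours: float  # total tester time consumed, in hours

    @property
    def yield_pct(self) -> float:
        return 100.0 * self.die_passed / self.die_tested

    @property
    def good_die_per_hour(self) -> float:
        # Assumed definition: passing die per hour of tester time.
        return self.die_passed / self.tester_hours


def rank_by_gdph(runs):
    """Order suppliers from highest to lowest good die per hour."""
    return sorted(runs, key=lambda r: r.good_die_per_hour, reverse=True)


if __name__ == "__main__":
    # Illustrative numbers only.
    runs = [
        SupplierRun("Supplier A", die_tested=100_000, die_passed=95_000, tester_hours=50.0),
        SupplierRun("Supplier B", die_tested=100_000, die_passed=97_000, tester_hours=60.0),
    ]
    for r in rank_by_gdph(runs):
        print(f"{r.name}: yield {r.yield_pct:.1f}%, {r.good_die_per_hour:.0f} good die/hour")
```

In this made-up case, Supplier B has the better yield (97% vs. 95%), but Supplier A delivers 1,900 good die per hour against roughly 1,617 for B because its test floor moves parts faster.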

Also, “don’t accept data ‘pushed’ from your supplier or foundry,” he says. “Instead, ‘pull’ data right from the subcontractor or foundry—right from the factory floor.” This is critical for real-time analysis that can be used to halt material before it leaves a facility.

Of course, how to do all this is part of Optimal+’s secret sauce. While its systems process the test data, only the customers themselves actually see that data, and those customers are a who’s who of the semiconductor industry, including Broadcom, Qualcomm, Nvidia, AMD, Freescale, STMicroelectronics, and, of course, Marvell.

That’s why Marvell’s Marc Jacobs will be at ITC next week: to expand upon the use of data in the IC manufacturing environment. It’s worth a look, and it could save you a few dollars if it encourages you to explore what Optimal+’s expert systems can offer.

Park said Optimal+ will use the $42 million to continue its growth plan, which includes taking its technology to the system level. That step will have an interesting effect on component choices and system design, some of which NI’s Foster alluded to above.