As the sophistication of embedded software systems escalates, the need for greater safety and security for these applications must keep pace. As autonomous systems, connected devices, and artificial-intelligence technologies arrive, engineering teams are finding it more difficult to rely on traditional development methods. These teams must verify that the software meets overall functional requirements, robustness, and safety needs. However, code reviews and other non-exhaustive techniques used to detect bugs and run-time errors can be labor-intensive and often don’t meet the needs of large software teams.

Several questions arise as a result: How can engineering teams best address these challenges? What tools and techniques are readily available to increase software safety and security? How can teams best optimize the development process and speed time to market?

I talked with Jay Abraham, Manager, Verification Products, MathWorks, about these topics.

Jay Abraham, Manager, Verification Products, MathWorks

To begin, what are the various code verification techniques that engineering teams often use? What are the pros and cons of each technique?

There are several verification techniques including code review, testing, and static analysis, that engineering teams will usually implement depending on the specific case at hand.

For code review, teams will manually inspect code with the intent to quickly identify an error that may prompt a safety concern. Safety standards often mandate the use of code reviews; however, it’s a manual task and can be time-consuming. Subtle run-time errors may be missed by this approach alone.

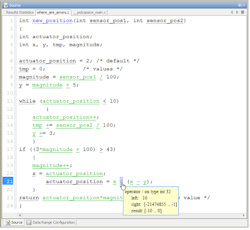

Functional testing is a mandatory task and is needed to confirm that the code behaves as intended. Additional structural testing can be performed to check that all aspects of the code are adequately exercised. However, functional and structural testing are not exhaustive. For example, consider the code shown in the figure. You only need two test cases for full modified condition/decision coverage (MCDC) base testing. These test cases would execute all branches of the code, but they don’t sweep the full complement of inputs to the function, resulting in an insufficient confirmation of robustness and safety. Teams are left with the possibility that the code may still contain a bug.

This figure shows a function written in C code, displaying results of static analysis. (© 1984–2019 The MathWorks, Inc.)

Static analysis is used to supplement the verification process. Static analysis, which automates manual verification tasks such as enforcing coding standards and style guides, can also find defects based on analysis of the code with various techniques. They range from simple pattern-based techniques to sophisticated use of formal-methods. Simple static analysis may produce many false warnings.

While each of these techniques bring certain benefits to the table, such as efficiency and increased predictability, there appear to be some negative aspects associated with each one. Which technique should engineering teams leverage to ensure they’re uncovering every error, every time?

Of the three major verification techniques specified above, static analysis tends to be the most logical choice because of its added intelligence and prediction capabilities. While the technique isn’t without its faults, static analysis, when based on formal methods, can help teams better verify run-time behavior and prove the absence of critical run-time errors in software.

Static-analysis tools use proof-based techniques to prove that the software is safe under all run-time conditions, identify variable range information for every point in the code, and elaborate control and data flow of the software program. Formal methods apply theoretical computer-science fundamentals to solve difficult problems in software, such as proving that software will not fail because of a run-time error. When effectively utilized in a development organization, formal methods-based static analysis can seek out bugs in the code and, more importantly, prove that the code is free from critical run-time errors like overflows, divide-by-zero, and buffer overflows.

What are the immediate benefits that engineering teams will experience when they apply formal methods-based static analysis to their code verification processes?

There are several benefits associated with formal methods-based static analysis, but perhaps the most immediate is an expedited feedback loop and more focused test strategies. Both encompass:

• Expedited feedback: In software development for embedded systems, run-time defects can be introduced during the coding stage. These errors are often missed during code review, and they can’t be detected by conventional static analysis. They propagate downstream and are often detected later, during testing on the real hardware and system, causing rework and delays.

Using formal methods-based static analysis, developers can identify critical run-time errors as soon as code is written or checked into a repository. These tools can be coupled with continuous integration to scale usage across the development organization. They can help with team collaboration by providing a defined process for quality engineers to triage and assign defects with a methodology for software developers to debug and fix issues.

• Focused test strategies: Unit testing requires teams to manually write test cases that can highlight run-time errors and lead to redundancies in the code. In contrast, formal methods use mathematics to prove certain facts or properties. Therefore, verification techniques based on formal methods can conclusively prove certain aspects of software, such as proving that software does or does not contain run-time errors, including overflows, divide-by-zero, and illegally dereferenced pointers. Once again, this can be coupled with continuous integration system where testing and static analysis is automatically performed on code checked into the source-code repository.

What are the long-term benefits of formal methods-based static analysis for engineering teams?

Traditional verification techniques of code review and testing may be inadequate when proving that the code is safe and secure. Problems in the code can create safety issues such as a program crash or may even present a security exploit. Given the increasing connectivity of embedded systems—like those for automated driving and autonomous systems—security is a growing concern, especially when it affects safety.

Formal methods-based static analysis results in deeper verification of the code that can uncover classic safety exploit techniques such as buffer overrun. Lightweight application of this technique scans code to detect bugs and checks for conformance to security standards such as CERT C/C++.

Jay Abraham manages product marketing at MathWorks for Verification and Validation products. He has over 25 years software and hardware design experience. Jay has a MS in Computer Engineering from Syracuse University and a BS in Electrical Engineering from Boston University.