With more than 30 years of reliably connecting all manner of hardware, Ethernet holds firmly to its role as a system-to-system interface in data centers and cloud computing. Within the rack, however, it is steadily being eclipsed by PCI Express (PCIe), which offers cost and energy advantages.

I talked with Krishna Mallampati, Senior Marketing Director for PCIe switch products at PLX Technology, about this issue.

Wong: Will Ethernet ever “sunset”?

Mallampati: That question is often asked by all manner of industry experts – from networking consultants to IT professionals to system architects. The fact is, when looking at the price and power comparisons of systems based on Ethernet vs. those using PCIe, the distinctions become clear. Still, PCIe and Ethernet will continue to coexist in the data center, enabling optimal interconnect both within the rack and rack-to-rack. The aforementioned experts won’t be easily convinced to replace Ethernet with PCIe for modest savings, because what they truly need is exponential reductions in both power and cost before making any changes. With this imperative, PCIe definitely holds its own!

Wong: What do current architectures look like?

Mallampati: Traditional systems now used throughout the industry employ more than one interconnect technology, with InfiniBand, Fibre Channel, and Ethernet being the most common. Such architectures present several limitations: multiple I/O interconnect technologies must coexist; I/O endpoints see low utilization rates; and latency is higher because the PCIe interface native to the processors in these systems must be converted to multiple protocols, rather than converging all endpoints on PCIe. Additionally, system power and cost are high because multiple I/O endpoints are needed to support the multiple interconnect technologies; I/O is fixed at the time of architecture and build, with no flexibility to change later; and management software must handle multiple I/O protocols, with the associated overhead.


Wong: What solution is there to those limitations?

Mallampati: Sharing I/O endpoints appeals to system designers because it lowers cost and power, improves performance and utilization, and simplifies design. Shared-I/O deployment in a PCIe switch is the key enabler of this type of system architecture.

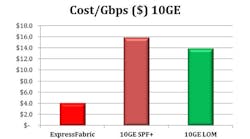

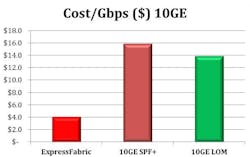

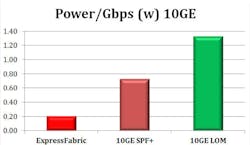

Wong: What are the cost and power comparisons between PCIe and Ethernet?

Mallampati: PCIe delivers more than 50 percent savings compared to 10G Ethernet, mainly through the elimination of adapters (Fig. 1). Moreover, PCIe is native on an increasing number of processors from major vendors, so designers benefit from the lower latency of having no components between the CPU and a PCIe switch. With a new generation of CPUs, designers can place a PCIe switch directly off the CPU, thereby reducing latency and component cost.

Wong: What is the industry doing today to meet the demand for more efficient interconnect technology?

Mallampati: To satisfy the requirements of the shared-I/O and clustering market segments, vendors such as PLX Technology are bringing to market high-performance, flexible, and power- and space-efficient devices. These switches have been architected to fit the full range of applications cited above.

Krishna Mallampati is senior marketing director for PCI Express switches at PLX Technology, Sunnyvale, Calif. Previously, he worked as an applications engineer and product marketing manager at Agere Systems, and was a senior product marketing engineer at Altera Corp. Krishna holds an MS from Florida International University and an MBA from Lehigh University.