Simple economics originally motivated the telecommunications industry's changeover from circuit-switched to packet-switched systems, once the cost of dynamic allocation fell below the cost of communication lines in 1969 [1]. In 1976, the ratification of the X.25 protocol provided a common international communication standard, accelerating the trend.

What went unforeseen at the time was that this change in network architecture would lead to the merging of communication and computing infrastructure and the emergence of the data-communication sector. Nearly four decades on, voice traffic now accounts for only a few percent of network utilization. So large was the telecom system, however, that vestiges of the old architecture remain to this day.

Prior to packet switching, circuit-switched networks processed only voice-band traffic using large numbers of analog amplifiers, equalizers, and rotary solenoid relays. The –48-V power supplies for these systems were compatible with the large-capacity lead-acid battery systems necessary to meet high up-time requirements.

The mains-powered energy source was typically unregulated, resulting in system distribution voltages ranging from –75 V at mains high line to –36 V at the end of the backup battery’s discharge cycle. Overcharging the lead-acid batteries of the day released acidic vapors. The choice of a negative distribution voltage helped minimize and localize corrosion products, an issue exacerbated by the crude charge-control systems, humidity, and poor ventilation in battery rooms.

Since the conversion to packet switching and the conversion of telecom providers into datacom companies, central offices (COs) have served voice, video, and Internet through common digital resources.

Think Positive

Changes in network architecture in combination with technology advances in power-subsystem components have motivated shifts in power-subsystem design and a switch from negative distribution voltages to positive. New battery designs and a trend toward better environmental controls in battery rooms have facilitated that trend. For example, most new facilities and CO upgrades at datacom companies such as AT&T (U.S.), Deutsche Telekom (Germany), Orange SA (France), and Verizon (U.S.) will operate from +48-V distribution feeds.

The polarity flip allows datacom companies to take advantage of economies of scale for power-distribution hardware already in heavy use by server-class data-processing systems in other sectors. That, however, is just the most visible shift in power-distribution strategies that datacom companies are exploiting. The output-voltage range of datacom ac to +48-V converters is substantially narrower than that of their ac to –48-V predecessors. The narrower range of distribution voltage brings with it several key benefits.

SELV Saves

With tighter output-voltage tolerances, the high-line limit for telecom +48-V distribution rails is 60 V, which allows them to qualify as safety extra-low-voltage (SELV) systems. SELV power distribution systems are less expensive to design and construct than equivalent systems that allow higher voltages because they don’t require additional personnel-safety features.

In addition to hardware savings, installation and maintenance technicians don’t require certification for higher-voltage non-SELV circuits, so labor costs for these functions are typically lower as well. SELV systems are also more compatible with high-density design than systems that operate at higher potentials because they require smaller creepage and clearance distances.

Within the CO, density is a keen concern. In the days of switched circuits, power consumption was on the order of a few hundred watts per square foot. Today, that figure is several kilowatts. Consumer demand is driving network traffic at a compound annual growth rate of 23%, projected through 2017. Given the incremental cost of CO real estate, maximizing throughput per unit floor area is a priority [2].
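To put the growth rate in perspective, here is a minimal sketch of the compounding arithmetic. The 23% rate comes from the text; the five-year window is an assumption, taken from the 2012-2017 span in the title of the cited Cisco forecast.

```python
def compound_growth(rate: float, years: int) -> float:
    """Return the growth multiple after `years` at a compound annual `rate`."""
    return (1.0 + rate) ** years


# 23% CAGR is quoted in the article; the 5-year horizon is an assumption.
CAGR = 0.23
YEARS = 5

multiple = compound_growth(CAGR, YEARS)
print(f"Traffic multiple after {YEARS} years at {CAGR:.0%}: {multiple:.2f}x")
```

Under that assumption, traffic nearly triples over the forecast window, which is why throughput per unit floor area matters so much.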

Keep To The Narrow

Narrow-range 48-V power distribution allows system designers to specify more efficient and more compact converters for downstream loads such as line cards and CPUs. Efficiency alone may not drive hardware-selection decisions. But because they enable higher density, energy-efficient converters are compelling for several reasons. Primarily, they allow designers to drive power and functional densities to the practical limits of current technology, minimize the cooling load, and maintain high reliability.

Early 48-V SELV power distribution systems maintained their output voltages to ±20%. Current ac-dc designs typically exhibit tighter output ranges with tolerances of ±10% or better. As a result, for some technologies, the same conversion topology that can source +48-V distribution systems can, alternatively, provide 54-V outputs and stay within the 60-V SELV high-line limit.
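The headroom argument can be checked with a few lines of arithmetic. The sketch below assumes the tolerance is a symmetric percentage of the nominal rail voltage and uses the 60-V high-line limit quoted earlier; the function names are illustrative only.

```python
SELV_LIMIT_V = 60.0  # high-line SELV limit quoted in the article


def high_line(nominal_v: float, tolerance: float) -> float:
    """Highest rail voltage for a nominal value and a fractional +/- tolerance."""
    return nominal_v * (1.0 + tolerance)


def within_selv(nominal_v: float, tolerance: float,
                limit_v: float = SELV_LIMIT_V) -> bool:
    """True when the high-line voltage does not exceed the SELV limit."""
    return high_line(nominal_v, tolerance) <= limit_v


def max_tolerance(nominal_v: float, limit_v: float = SELV_LIMIT_V) -> float:
    """Largest fractional tolerance that keeps the rail at or below the limit."""
    return limit_v / nominal_v - 1.0


# Nominal voltages and tolerances taken from the text; 54 V at 20% is
# included only to show a case that fails.
for nominal_v, tolerance in [(48.0, 0.20), (48.0, 0.10), (54.0, 0.10), (54.0, 0.20)]:
    print(f"{nominal_v:.0f} V +/-{tolerance:.0%}: "
          f"high line {high_line(nominal_v, tolerance):.1f} V, "
          f"within SELV: {within_selv(nominal_v, tolerance)}")

print(f"Max tolerance at 54 V: {max_tolerance(54.0):.1%}")
```

Under these assumptions, a 54-V rail needs a tolerance of roughly ±11% or tighter, which is consistent with the ±10% figure for current ac-dc designs.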

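Operating the power distribution apparatus at 54 V reduces I²R losses by about 21% compared to 48-V power feeds delivering the same power, because current falls in proportion to voltage and conduction loss scales with the square of current. Put the other way, losses at 48 V are roughly 27% higher than at 54 V. The higher distribution voltage also enables use of Power over Ethernet within the facility for on-site communication, remote sensors, and physical-plant security functions.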
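A quick way to check the conduction-loss comparison is to hold delivered power and distribution resistance constant, which is an assumption of this sketch. Current then scales as 1/V and I²R loss as 1/V². The percentage depends on which feed is taken as the baseline.

```python
def relative_loss(v_new: float, v_ref: float) -> float:
    """Ratio of I^2*R loss at v_new to the loss at v_ref.

    Assumes the same delivered power and the same distribution resistance,
    so current scales as 1/V and loss as 1/V^2.
    """
    return (v_ref / v_new) ** 2


ratio_54_vs_48 = relative_loss(54.0, 48.0)
reduction = 1.0 - ratio_54_vs_48           # saving relative to the 48-V loss
increase = relative_loss(48.0, 54.0) - 1.0  # penalty relative to the 54-V loss

print(f"Loss at 54 V relative to 48 V: {ratio_54_vs_48:.3f}")
print(f"Reduction going from 48 V to 54 V: {reduction:.1%}")
print(f"Extra loss at 48 V relative to 54 V: {increase:.1%}")
```

With 48 V as the baseline the saving is about 21%; with 54 V as the baseline, the 48-V feed dissipates about 27% more.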

High Power Density

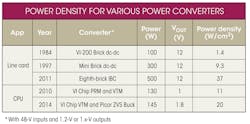

As power-conversion topologies, switching-device designs, and power-packaging technologies have advanced, per-package power capacity and overall power density have increased (see the figure).

The raw power density, however, doesn’t tell the whole story, particularly for 48-V input, 1.x-V output devices for CPU applications (see the table).

These power converters eliminate a conversion stage by replacing both an intermediate-bus dc-dc converter and the CPU’s point-of-load (PoL) regulator, often in a smaller footprint than the original PoL. This approach enables designers to route the 48-V supply on the CPU board, reducing printed-circuit board (PCB) I²R losses by a factor of 16 or more.
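The factor of 16 follows from the square of the voltage ratio if the comparison is against a 12-V intermediate bus. That bus voltage is an assumption of this sketch, since the text does not name it. The 120-W load and 2-mΩ trace resistance are hypothetical values chosen only to make the numbers concrete.

```python
def trace_loss_w(power_w: float, bus_v: float, resistance_ohm: float) -> float:
    """I^2*R loss in a supply trace carrying `power_w` at `bus_v`."""
    current_a = power_w / bus_v
    return current_a ** 2 * resistance_ohm


# Hypothetical illustration values (not from the article).
LOAD_POWER_W = 120.0
TRACE_RESISTANCE_OHM = 0.002

# 12 V is an assumed intermediate-bus voltage; 48 V is from the article.
loss_12v = trace_loss_w(LOAD_POWER_W, 12.0, TRACE_RESISTANCE_OHM)
loss_48v = trace_loss_w(LOAD_POWER_W, 48.0, TRACE_RESISTANCE_OHM)
loss_ratio = loss_12v / loss_48v

print(f"Loss routing 12 V: {loss_12v * 1000:.1f} mW")
print(f"Loss routing 48 V: {loss_48v * 1000:.1f} mW")
print(f"Ratio: {loss_ratio:.1f}x")
```

The ratio is independent of the chosen power and resistance, as long as both cases use the same trace and deliver the same power.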

Vicor’s 48-V VR12.5 reference design does away with multi-phase conversion topologies, reducing component count, while maintaining full compatibility with Haswell power requirements. The architecture also significantly reduces bulk capacitance, increasing power density further.

The reduction in component count and lower energy-storage requirements permit designers to locate the power train closer to the processor. This reduces losses and parasitic inductances in the processor’s supply traces—those that carry the board’s highest currents and exhibit the largest current dynamics.

Conclusion

Datacom applications demand high-density, low-loss power subsystems. Tight-tolerance +48-V (nominal) power distribution allows systems to qualify as SELV designs, reducing installation and operating costs. Narrow-range 48-V rails enable high-efficiency converters for line cards and processors. These power components help power-subsystem designers minimize power losses and attain maximum power density.

References

1. Roberts, Lawrence, The Evolution of Packet Switching, November 1978.

2. Cisco Visual Networking Index: Forecast and Methodology, 2012-2017, Cisco Systems, May 2013.

Stephen Oliver is vice president of the VI Chip product line for Vicor Corp. He has been in the electronics industry for 18 years, with experience as an applications engineer and in product development, manufacturing, and strategic product marketing in the ac-dc, telecom, defense, processor power, and automotive markets. Previously, he worked for International Rectifier, Philips Electronics, and Motorola. He holds a BSEE from Manchester University, U.K., and an MBA in global strategy and marketing from UCLA. He holds several power-electronics patents as well.