
Finding the right balance among test cost, test quality, and data collection for running diagnosis requires consideration of several competing factors. Luckily, there are some best practices for creating efficient, cost-effective pattern sets and applying them in the best order for detection and diagnosis of defective parts.

Test engineers can improve defect detection and silicon quality by applying many patterns and pattern types, such as gate-exhaustive patterns, but this gets expensive. A more cost-effective approach is to target the fault models that detect the most silicon defects without over-testing. Do this by ordering pattern creation so that each pattern set is fault-simulated against the other fault types before additional “top-up” patterns are created to target the remaining undetected faults. This cross-fault simulation is important for test cost reduction.

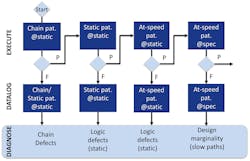

The challenge is determining the order in which patterns should be created. In general, give priority to creating patterns that have the strictest detection requirements, such as path delay patterns. Figure 1 illustrates a typical pattern generation process.

1. A typical pattern creation process involves fault-simulating each pattern set against other fault types before creating additional “top-up” patterns to target the remaining undetected faults.
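The create, cross-simulate, and top-up loop described above can be illustrated with a toy model. This is only a sketch under stated assumptions, not how an ATPG tool works internally: patterns are represented as plain dictionaries of detected fault IDs, and `toy_atpg`, `SIDE_EFFECTS`, the fault IDs, and the three-model creation order are all hypothetical stand-ins chosen for illustration.

```python
from typing import Callable, Dict, List, Set

# Toy representation (assumption): a pattern maps a fault-model name to the
# set of fault IDs of that model which the pattern happens to detect.
Pattern = Dict[str, Set[str]]


def fault_simulate(patterns: List[Pattern], model: str) -> Set[str]:
    """Return the faults of `model` detected by the existing patterns."""
    detected: Set[str] = set()
    for pattern in patterns:
        detected |= pattern.get(model, set())
    return detected


def build_pattern_sets(
    creation_order: List[str],
    fault_lists: Dict[str, Set[str]],
    atpg: Callable[[str, Set[str]], List[Pattern]],
) -> Dict[str, List[Pattern]]:
    """Create one separately saved pattern set per fault model, in order."""
    all_patterns: List[Pattern] = []
    pattern_sets: Dict[str, List[Pattern]] = {}
    for model in creation_order:
        # Cross-fault simulation: credit faults already caught by earlier sets.
        already_detected = fault_simulate(all_patterns, model)
        undetected = fault_lists[model] - already_detected
        # Top-up: generate patterns only for the remaining undetected faults.
        top_up = atpg(model, undetected) if undetected else []
        pattern_sets[model] = top_up
        all_patterns.extend(top_up)
    return pattern_sets


# Hypothetical data: which extra faults a pattern for a given fault detects.
SIDE_EFFECTS: Dict[str, Dict[str, Set[str]]] = {
    "pd1": {"transition": {"t1", "t2"}, "stuck-at": {"s1"}},
    "t3": {"stuck-at": {"s2", "s3"}},
}


def toy_atpg(model: str, undetected: Set[str]) -> List[Pattern]:
    """Hypothetical generator: one pattern per undetected fault."""
    patterns: List[Pattern] = []
    for fault in sorted(undetected):
        pattern: Pattern = {model: {fault}}
        for other_model, faults in SIDE_EFFECTS.get(fault, {}).items():
            pattern[other_model] = set(faults)
        patterns.append(pattern)
    return patterns


fault_lists = {
    "path delay": {"pd1"},
    "transition": {"t1", "t2", "t3"},
    "stuck-at": {"s1", "s2", "s3", "s4"},
}
creation_order = ["path delay", "transition", "stuck-at"]  # strictest first

pattern_sets = build_pattern_sets(creation_order, fault_lists, toy_atpg)
counts = {model: len(patterns) for model, patterns in pattern_sets.items()}
total_with_cross_sim = sum(counts.values())
total_without = sum(len(faults) for faults in fault_lists.values())
print(counts, total_with_cross_sim, total_without)
```

In this toy data, the path delay pattern also covers two transition faults and one stuck-at fault, so later models need only one top-up pattern each. Each model's patterns stay in their own entry of `pattern_sets`, which keeps them available for separate application on the tester.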

Test patterns can be grouped into three main categories:

- Chain test patterns.

- At-speed patterns, which include transition, path delay, timing-aware, delay cell-aware, delay bridge, and delay functional user-defined fault-modeling (UDFM) patterns.

- Static patterns, which include stuck-at, toggle, static cell-aware, static bridge, and static functional UDFM.

Functional UDFM patterns, whether static or delay, are the design’s functional patterns described using UDFM so that they can be applied easily through scan.

Use the ordered list of scan pattern types in Figure 2 as a guideline for creating and fault-simulating each pattern type to achieve the smallest pattern set. At-speed patterns are shown in a darker shade. Before creating new patterns, all previously created patterns are simulated for the target fault type so that only top-up patterns that target the remaining undetected faults need to be created.

2. This list of ordered scan patterns can be used as a guide to the pattern creation and fault simulation sequence for the smallest pattern set. Note that the most efficient pattern creation sequence may not be the best order for pattern application on the tester.

You can optimize the pattern sets by examining field return and manufacturing yield data. The goal is to create a set of patterns that’s efficient to apply and detects the most defects in silicon.

Note that the most efficient sequence for creating the smallest pattern set can differ from the ideal order for tester application. Therefore, save all pattern sets separately so that they can be applied on the tester according to the appropriate test phase and desired defect detection.

Determining the Order of Patterns During Tester Application

During tester application, the order in which different types of patterns are applied depends on whether the run is a “go/no go” production test, in which testing stops at the first failure, or a run in which some or all failures must be datalogged for diagnosis.

If the goal is to quickly determine which devices failed without any failure datalog, it’s best to apply chain patterns first, followed by the at-speed patterns at functional (at-spec) speed, and then the static patterns.

Within the at-speed pattern set, we recommend applying patterns in the following order:

delay bridge → delay cell-aware → timing-aware → transition → path delay → delay functional UDFM

We recommend applying the static patterns in the following order:

static bridge → static cell-aware → stuck-at → static functional UDFM → toggle
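As a rough illustration, the recommended go/no go order can be written down as data and used to sequence the separately saved pattern sets. The sketch below is an assumption-laden example rather than a tester program: `run_test`, `apply_pattern`, and the sample pattern names are hypothetical, and the `datalog` flag simply continues past failures in the same order, which is not necessarily the staged flow recommended for volume diagnosis.

```python
from typing import Callable, Dict, List, Tuple

# Orders taken from the recommendations above.
AT_SPEED_ORDER = [
    "delay bridge", "delay cell-aware", "timing-aware",
    "transition", "path delay", "delay functional UDFM",
]
STATIC_ORDER = [
    "static bridge", "static cell-aware", "stuck-at",
    "static functional UDFM", "toggle",
]
# Chain patterns first, then at-speed, then static.
GO_NO_GO_ORDER = ["chain"] + AT_SPEED_ORDER + STATIC_ORDER


def application_order(saved_sets: Dict[str, List[str]]) -> List[str]:
    """Sort the saved pattern-set names into the go/no go application order."""
    return [name for name in GO_NO_GO_ORDER if name in saved_sets]


def run_test(
    saved_sets: Dict[str, List[str]],
    apply_pattern: Callable[[str, str], bool],  # hypothetical tester hook
    datalog: bool = False,
) -> Tuple[bool, List[Tuple[str, str]]]:
    """Apply pattern sets in order; stop at the first failure unless datalogging."""
    failures: List[Tuple[str, str]] = []
    for set_name in application_order(saved_sets):
        for pattern in saved_sets[set_name]:
            if not apply_pattern(set_name, pattern):
                failures.append((set_name, pattern))
                if not datalog:
                    return False, failures  # go/no go: first failure ends the test
    return not failures, failures


# Hypothetical device: pattern sets were saved in creation order, not test order.
saved_sets = {
    "stuck-at": ["sa0", "sa1"],
    "chain": ["c0"],
    "transition": ["tr0", "tr1"],
}
failing_patterns = {"tr1", "sa0"}


def apply_pattern(set_name: str, pattern: str) -> bool:
    """Stand-in for the tester: a pattern passes unless it is in the failing set."""
    return pattern not in failing_patterns


order = application_order(saved_sets)
go_no_go_result = run_test(saved_sets, apply_pattern)
datalog_result = run_test(saved_sets, apply_pattern, datalog=True)
print(order, go_no_go_result, datalog_result)
```

Keeping the order as plain lists makes it easy to adjust if field return or yield data suggests a different sequence for a particular product.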

To log failing data for volume diagnosis, we recommend the flow shown in Figure 3. The goal here is to make sure that diagnosis can have staged failure data for isolating different types of defects.

3. Pattern application order at the yield ramp-up stage. The best order for applying patterns depends on whether the run is a “go/no go” production test or a failure-datalogging session.

In summary, establishing the most efficient sequence of test-pattern creation and application can significantly reduce tester cost. For creation, generate the patterns with the strictest detection requirements first, fault-simulate them against the other fault types, and then create top-up patterns only for the faults that remain undetected to reach the desired coverage. For application on the tester, apply the patterns most likely to fail first in a “go/no go” test. Follow up with testing that collects failure data for diagnosis.

Jay Jahangiri is Product Manager and Wu Yang is Technical Program Manager for the Silicon Test Division at Mentor, a Siemens Business.