FPGAs, like GPUs, have changed significantly since their inception, when they addressed a much narrower solution space. They've morphed from a collection of gates and routing into devices that take on jobs ranging from communications to artificial intelligence (AI).

Changing FPGA Landscape

FPGAs used to be single chips, like most devices. They have grown in transistor count, and their underlying architecture is changing as well. FPGAs, large and small, are still available and useful in their original context, but the overall FPGA solution landscape is much broader.

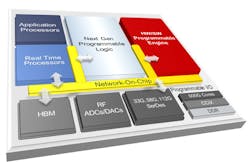

At the high end, interposer technology allows different types of die to be combined in a single package. Xilinx's adaptive compute acceleration platform (ACAP) pushes the envelope by bringing together multiple hard cores built with different silicon process nodes, along with analog support such as mixed-signal circuitry and SERDES, on a single chip (see figure).

Xilinx’s adaptive compute acceleration platform (ACAP) combines a range of cores and technologies with its FPGA fabric.

Interposer technology is available in a number of forms, such as Intel's embedded multi-die interconnect bridge (EMIB). Rather than placing all of the die on one large silicon interposer that relies on through-silicon vias (TSVs), EMIB embeds small, individual silicon bridges in the package substrate between adjacent die. This is more like using connectors than a backplane. TSV technology is also applied to die stacking, which is common in the high-bandwidth memory that's also being tied to FPGAs.

Mixing technologies brings significant advantages for analog circuitry. High-speed SERDES, for example, can be optimized separately, since they use different transistor technology than FPGA fabrics do.

Another change in the FPGA space is the rise of embedded FPGAs. Traditionally, FPGA fabric intellectual property (IP) was found only in chips from FPGA vendors. Now a number of embedded FPGA vendors provide IP so that FPGA fabrics can be part of a system-on-chip (SoC) solution. This is the flip side of FPGA SoC designs from FPGA vendors, which incorporate hard-core processors and peripherals with an FPGA fabric. In both instances, the approach is to use FPGA technology that is compatible with the other IP on the same die, rather than using interposer technology to link dissimilar silicon components.

Also worth mentioning is the impact of flash-based FPGAs. Their low power and instant-on capabilities allow them to be used in applications such as mobile and IoT devices, where RAM-based FPGAs may be impractical.

Changing Architectures

The use of hard-core processors and peripherals in FPGAs has been commonplace for a while, as have building blocks like DSP and floating-point units, but even these are changing as application demands shift.

AI is one application that's reinventing how FPGAs work. These days, machine learning (ML) for AI largely means implementing deep-neural-network (DNN) support. DNN inference can benefit from small floating-point or integer data types, such as 8-bit integers, whereas most DSP blocks lean toward the wider operands suited to the problems addressed by earlier FPGAs.
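To illustrate why narrow integer formats can be enough for DNN inference, here is a minimal Python sketch assuming symmetric per-tensor 8-bit quantization (one scale factor per vector, round-to-nearest). The function names and sample values are hypothetical, and this is not a model of any vendor's DSP block; it only shows that 8-bit multiplies, a wider accumulator, and one final rescale stay close to the floating-point result.

```python
# Minimal sketch: symmetric per-tensor int8 quantization of a dot product.
# Assumptions (hypothetical, for illustration): one scale per vector,
# round-to-nearest, integer values clipped to [-127, 127].

def quantize(values, num_bits=8):
    """Map floats to signed integers using a single symmetric scale factor."""
    qmax = 2 ** (num_bits - 1) - 1              # 127 for 8 bits
    max_abs = max(abs(v) for v in values) or 1.0
    scale = max_abs / qmax                      # float value of one integer step
    q = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    return q, scale

def int8_dot(a, b):
    """Dot product using small integer multiplies and a wide accumulator."""
    qa, sa = quantize(a)
    qb, sb = quantize(b)
    acc = sum(x * y for x, y in zip(qa, qb))    # each product fits in 16 bits
    return acc * sa * sb                        # rescale once at the end

weights = [0.42, -1.37, 0.05, 0.88]
activations = [1.10, 0.25, -0.63, 0.97]

exact = sum(w * x for w, x in zip(weights, activations))
approx = int8_dot(weights, activations)
print(f"float: {exact:.4f}  int8: {approx:.4f}")
```

The inner loop uses only 8-bit by 8-bit integer products, while floating-point work shrinks to a single rescale per result. That split is the kind of workload that favors many small multipliers over a few wide ones.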

FPGA vendors have also been re-examining the lookup table (LUT) and interconnect fabric to see how implementations can be improved as FPGA usage broadens.
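For readers less familiar with the LUT, the following Python sketch models a generic k-input LUT as a truth table held in configuration bits, with the inputs acting as an address that selects one stored bit. The names make_lut and eval_lut are hypothetical, and real FPGA logic blocks add registers, carry logic, and routing that are not modeled here.

```python
# Minimal sketch of a k-input lookup table (LUT), the basic FPGA logic element.
# Assumption: the configuration is modeled as a 2**k-bit integer truth table,
# where bit i holds the output for the input combination whose binary value is i.

def make_lut(k, func):
    """Compute the configuration bits for a k-input LUT implementing func."""
    config = 0
    for index in range(2 ** k):
        inputs = [(index >> bit) & 1 for bit in range(k)]  # input 0 is the LSB
        if func(*inputs):
            config |= 1 << index
    return config

def eval_lut(config, *inputs):
    """Evaluate the LUT: the inputs form an address that selects one stored bit."""
    index = sum(bit << pos for pos, bit in enumerate(inputs))
    return (config >> index) & 1

# Any 4-input Boolean function fits in 16 configuration bits, e.g., a 4-way XOR.
xor4 = make_lut(4, lambda a, b, c, d: a ^ b ^ c ^ d)
print(f"config bits: {xor4:016b}")
print(eval_lut(xor4, 1, 0, 1, 1))
```

Because the inputs only select a stored bit, the same 16 configuration bits can implement any of the 2^16 possible 4-input functions. That generality comes at the cost of storing every entry, whether or not the function needs it.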

Another way FPGAs are changing is the use of custom blocks. This is most common in embedded FPGAs, but some vendors, such as Achronix, will incorporate custom or customer IP into a conventional FPGA fabric. This differs from the aforementioned interposer approach in that the IP uses the same silicon technology as the FPGA logic. Moreover, it's designed to work within the FPGA interconnect fabric rather than being added as a separate hard-core block. The custom IP support is integrated into the FPGA software tools so that the custom IP blocks can be connected and used like the usual FPGA LUTs.

Custom IP of this type is normally included in columns like LUTs and other FPGA building blocks such as DSP units. However, custom IP can have other advantages; for example, an additional connection fabric that’s independent of the FPGA connectivity fabric. This can be handy for high-speed applications (e.g., networking), where it’s possible to improve the flow of data significantly compared to a conventional FPGA implementation.

Soft Cores and RISC-V

Soft-core processors have been common since FPGAs appeared. Some FPGA vendors had their own optimized soft-core processors, such as Intel's Nios II and Xilinx's MicroBlaze. There are also a number of third-party processor cores, like the venerable 8051 and Arm's Cortex-M1. These third-party cores tended to offer more portability between FPGAs than the proprietary soft cores from FPGA vendors, which were optimized for each vendor's own FPGA hardware.

Enter RISC-V. RISC-V is actually an open instruction-set architecture (ISA) standard rather than a specific processor design, but it has led to many hardware implementations, including ones for FPGAs. Microsemi has spearheaded this approach with its Mi-V FPGA ecosystem. RISC-V soft cores are available for most FPGA platforms, and RISC-V also shows up in RISC-V-based SoCs such as GreenWaves Technologies' multicore GAP8 SoC for machine-learning applications.

Even as FPGA hardware continues to morph, one of the greatest changes for FPGA technology is the software used to create the IP that runs on the FPGA. But that will have to wait for a future article.

About the Author

William G. Wong

Senior Content Director - Electronic Design and Microwaves & RF

I am Editor of Electronic Design focusing on embedded, software, and systems. As Senior Content Director, I also manage Microwaves & RF and I work with a great team of editors to provide engineers, programmers, developers and technical managers with interesting and useful articles and videos on a regular basis. Check out our free newsletters to see the latest content.


I earned a Bachelor of Electrical Engineering at the Georgia Institute of Technology and a Masters in Computer Science from Rutgers University. I still do a bit of programming using everything from C and C++ to Rust and Ada/SPARK. I do a bit of PHP programming for Drupal websites. I have posted a few Drupal modules.

I still get hands-on with software and electronic hardware. Some of this work can be found in our Kit Close-Up video series. You can also see me in many of our TechXchange Talk videos. I am interested in a range of projects from robotics to artificial intelligence.