Autonomous Vehicles are Driving Innovation

As the automotive and technology industries converge, the pace of innovation in the mobility and transportation sectors is accelerating, creating opportunities that are disrupting the established industry structure. Designing safe, efficient, and convenient autonomous vehicles (AVs) requires a mix of complex technologies in the cloud and at the edge, including data connectivity, powertrain electrification, autonomous driving systems, and an unprecedented number of semiconductor devices (Fig. 1). Sensing and perception systems and power-optimized high-performance processors will be critical areas of innovation for AV development.

1. Autonomous vehicles will support a range of connectivity options in addition to the V2X support for autonomous operation.

Sensing and Perception

AVs must perceive their surroundings to localize themselves precisely, accurately detect and classify fixed and moving objects, and measure the distance to those objects. Most AVs will employ a combination of sensing and perception systems, including camera-based embedded vision systems, radar, and LiDAR sensors. These sensing technologies have complementary strengths, and they can be integrated into an effective sensor suite for AVs.

Camera-based vision systems capture rich visual information, but are limited in their ability to determine the distance to an object. LiDAR (light detection and ranging) provides precise range measurement with high resolution, but it can be adversely affected by visual obscurants such as fog, smoke, or glare. Conventional radar can “see” through these conditions, but it doesn’t produce the high-resolution 3D mapping that can be done with LiDAR.

Camera

The use of camera-based sensing in advanced driver-assistance systems (ADAS) and AVs has been enabled by advances in image sensors, image-processing algorithms, and high-performance computing hardware. These technologies will remain key areas of innovation in future AV development. Camera-based sensing will combine with other sensing technologies to produce a detailed 3D representation of the vehicle’s surroundings. And, as the number of camera-equipped vehicles grows, they will become a valuable source of data about road conditions, traffic, hazards, availability of parking spaces, and other information.

Some embedded vision systems utilize field-programmable gate arrays (FPGAs) and graphics processing units (GPUs), which are well-suited to the high degree of parallelism required by vision-processing algorithms. However, the most successful automotive vision-processing solution to date is the Mobileye EyeQ series, a dedicated hardware-accelerator application-specific integrated circuit (ASIC). An important factor in Mobileye’s success in ADAS applications was an extensive period of testing in real-world conditions. This enabled continuous refinement of the algorithms and silicon over successive generations of the chip.

LiDAR

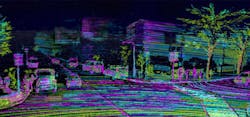

LiDAR is a critical sensing technology for AVs due to its ability to produce very-high-resolution mapping of objects in relation to the vehicle (Fig. 2). LiDAR is useful in detecting road features, such as curbs and lane markings, as well as in tracking objects in proximity to a vehicle. LiDAR sensors transmit a laser pulse, detect the backscattered or reflected light energy, and calculate the distance to the object based on the elapsed time. Early AV platforms used scanning LiDAR systems that employed a rotating mirror assembly to direct the laser pulse. These perform well and have good range, but are bulky and too costly for production.

2. High-res LiDAR mapping.
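The time-of-flight calculation described above can be sketched in a few lines. This is a minimal illustration, not any vendor's implementation: the function names are hypothetical, and it assumes the pulse travels at the vacuum speed of light along a straight path to the object and back, so the one-way distance is half the round-trip path.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0  # exact by SI definition


def tof_range_m(elapsed_s: float) -> float:
    """Distance to the object for a measured round-trip pulse time.

    The pulse covers the distance twice (out and back), hence the
    division by two.
    """
    if elapsed_s < 0:
        raise ValueError("elapsed time must be non-negative")
    return SPEED_OF_LIGHT_M_PER_S * elapsed_s / 2.0


def timing_resolution_s(range_resolution_m: float) -> float:
    """Round-trip timing precision needed to resolve a given range step."""
    if range_resolution_m < 0:
        raise ValueError("range resolution must be non-negative")
    return 2.0 * range_resolution_m / SPEED_OF_LIGHT_M_PER_S


if __name__ == "__main__":
    # A 1-microsecond round trip corresponds to roughly 150 m of range.
    print(f"{tof_range_m(1e-6):.3f} m")
    # Resolving 1 cm of range needs timing precision of tens of picoseconds.
    print(f"{timing_resolution_s(0.01) * 1e12:.1f} ps")
```

The second function hints at why the photodetector and signal-processing chain matter so much: centimeter-level range resolution implies timing precision well below a nanosecond.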

Innovations in LiDAR for AVs focus on reducing the system’s size and cost, while maintaining the required performance in detection range and angular resolution. This has led to the development of solid-state LiDAR systems, which reduce complexity, size, and cost compared to mechanical scanning LiDAR systems. The challenge with solid-state LiDAR systems is achieving the requisite range and resolution. This is now driving innovation in the design of the laser emitter, optics, photodetector, and signal processing over a wide range of technologies, including gallium-arsenide (GaAs) photodetectors, virtual beamsteering using MEMS technology, and advanced signal-processing algorithms.

Radar

Automotive radar is one of the most mature sensing technologies employed in AVs. It was introduced in the first generation of adaptive cruise control systems in the early 2000s. LiDAR offers a wider field of view and higher resolution, but radar is less susceptible to many forms of visual obscuration, such as smoke and fog, that can reduce the effectiveness of LiDAR and camera-based sensing systems. While there’s a significant overlap in the functions of radar and LiDAR, they’re likely to coexist in AV systems for some time because of the advantages of sensor fusion and the need for redundancy in safety-critical applications.

Despite its relative maturity, there’s still room for innovation in radar technology for AVs. High-frequency radar in the 77-GHz band improves long-range performance and has high reflectivity with non-metallic objects, which is needed to detect pedestrians and animals. Advances in signal-processing algorithms will continue to improve performance. The use of RF CMOS technology enables higher functional integration, which results in a more compact radar system design. Automotive radar system-on-chip technology is an example of this type of innovation.
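One simple way to picture the sensor-fusion benefit mentioned above is inverse-variance weighting of two independent range measurements of the same object. The sketch below is an assumption-laden illustration rather than a description of any production fusion stack: the `RangeEstimate` type, the `fuse_ranges` function, and the uncertainty figures are all hypothetical, and real systems typically use tracking filters over many measurements.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class RangeEstimate:
    range_m: float  # measured distance to the object
    std_m: float    # assumed 1-sigma measurement uncertainty


def fuse_ranges(estimates: Sequence[RangeEstimate]) -> RangeEstimate:
    """Combine independent range estimates by inverse-variance weighting.

    Each sensor is weighted by 1 / variance, so the more certain sensor
    dominates, and the fused uncertainty is never larger than the best
    individual one.
    """
    if not estimates:
        raise ValueError("need at least one estimate")
    if any(e.std_m <= 0 for e in estimates):
        raise ValueError("uncertainties must be positive")
    weights = [1.0 / (e.std_m ** 2) for e in estimates]
    total = sum(weights)
    fused_range = sum(w * e.range_m for w, e in zip(weights, estimates)) / total
    fused_std = (1.0 / total) ** 0.5
    return RangeEstimate(fused_range, fused_std)


if __name__ == "__main__":
    # Clear weather: LiDAR is assumed to be the more precise sensor.
    clear = fuse_ranges([RangeEstimate(50.0, 0.1), RangeEstimate(50.6, 0.3)])
    # Fog: the LiDAR uncertainty is assumed to grow, so radar dominates.
    fog = fuse_ranges([RangeEstimate(48.0, 2.0), RangeEstimate(50.6, 0.3)])
    print(clear)
    print(fog)
```

The weighting shifts automatically toward whichever sensor is more trustworthy in the current conditions, which is one reason radar and LiDAR are complementary rather than interchangeable.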

Processing

Much of the innovation in AV systems centers on optimizing the “virtual driver,” or the vehicle’s brain. The virtual driver consists of machine-learning algorithms and middleware that connects to the vehicle’s sensing, actuation, and communication subsystems. This technology is core to the functioning of the AV.

It’s possible that in the future, AV developers will license their virtual driver software stack to vehicle manufacturers who integrate it onto their platform using standard interfaces for sensors, actuators, and data-communication protocols. However, these standards haven’t been fully defined and some sensing technologies aren’t mature enough to be separated from the control system.
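To make the idea of a virtual driver sitting behind standard interfaces more concrete, here is a purely hypothetical sketch. No such standard is defined today, so every name here (`Sensor`, `Actuator`, `Detection`, `Command`, `VirtualDriver`) is invented for illustration, and the fixed stopping-distance rule is only a placeholder for the machine-learning algorithms a real stack would use.

```python
from dataclasses import dataclass
from typing import Protocol, Sequence


@dataclass(frozen=True)
class Detection:
    label: str      # e.g. "pedestrian" (hypothetical label set)
    range_m: float  # distance to the detected object


@dataclass(frozen=True)
class Command:
    throttle: float  # 0.0 to 1.0
    brake: float     # 0.0 to 1.0


class Sensor(Protocol):
    """Hypothetical sensor interface: any camera, radar, or LiDAR adapter."""
    def read(self) -> Sequence[Detection]: ...


class Actuator(Protocol):
    """Hypothetical actuation interface exposed by the vehicle platform."""
    def apply(self, command: Command) -> None: ...


class VirtualDriver:
    """Middleware loop: gather detections, decide, command the vehicle."""

    def __init__(self, sensors: Sequence[Sensor], actuator: Actuator,
                 stop_range_m: float = 10.0) -> None:
        self.sensors = sensors
        self.actuator = actuator
        self.stop_range_m = stop_range_m

    def step(self) -> Command:
        detections = [d for s in self.sensors for d in s.read()]
        nearest = min((d.range_m for d in detections), default=float("inf"))
        # Placeholder policy standing in for the learned driving model.
        if nearest < self.stop_range_m:
            command = Command(throttle=0.0, brake=1.0)
        else:
            command = Command(throttle=0.2, brake=0.0)
        self.actuator.apply(command)
        return command
```

Because the driver depends only on the two interfaces, a manufacturer could in principle swap sensor or actuator implementations without touching the decision logic, which is the separation the licensing model would rely on.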

Summary

The advancement of AV systems is driving innovation across many technology domains. This increased pace of innovation, however, poses challenges for automakers as well as materials, component, and manufacturing equipment suppliers. Automotive technical intelligence and intellectual-property management will help auto manufacturers and their suppliers protect their market position and identify additional revenue streams through competitive benchmarking, patent licensing negotiation, and indemnification.

Such a process assists in market-entry due diligence decisions. It also helps companies understand the intellectual property and technology strengths of their competitors to enable differentiation, inform important technical design decisions, and guide the acquisition of patents to enable lower-risk market entry. And, experienced, objective third-party analysis at component, circuit, and system levels supports assertion of patent claims and patent transaction valuation. Finally, an understanding of components’ underlying cost structure aids in price negotiations.

Jim Hines is Director of Automotive Research at TechInsights.

Jim Hines, TechInsights’ Director of Automotive Research, is a semiconductor expert specializing in automotive and smart mobility innovations. The former Gartner analyst has advised clients around the world on semiconductors and related technologies supporting autonomous driving systems, data connectivity in vehicles, human-machine interface (HMI), and powertrain electrification.
