When you imagine robots, you might think of massive machine arms along a factory floor, with welding sparks flying. Today, as robotic technologies advance, so do complementary sensor technologies. One relatively new technology in robotic sensing is complementary metal-oxide semiconductor (CMOS) millimeter-wave (mmWave) radar sensors.

One important advantage of mmWave sensors over vision- and LiDAR-based sensors is their immunity to environmental conditions such as rain, dust, smoke, fog, or frost. In addition, mmWave sensors work regardless of lighting conditions. They can be mounted directly behind enclosure plastics, without external lenses, apertures, or exposed sensor surfaces, which makes them extremely rugged. Together, these properties allow the sensors to perform in industrial robotics applications, addressing key concerns about safety when interacting with humans, as well as the robot’s ability to accurately map and navigate through its environment.

Safety Guards Around Robotic Arms

As robots become more ubiquitous and increase their interactions with humans—either in service capacities or in flexible, low-quantity batch-processing automation tasks—it’s critical that they don’t cause harm to the people with whom they interact.

Historically, a safety curtain or keep-out zone around the robot’s field of operation ensures physical separation (Fig. 1).

1. A robotic arm with a physical safety cage is the historical method to ensure safety, but limits human interaction.

Sensors make it possible for a virtual safety curtain or bubble to separate robotic operation from both unplanned human interaction and robot-to-robot collision as density and operation programmability ramp up. Vision-based safety systems require controlled lighting, which increases energy consumption, generates heat, and requires maintenance. In dusty manufacturing environments such as textile or carpet production, lenses need frequent cleaning and attention.

Because mmWave sensors are robust and can detect objects regardless of lighting, humidity, smoke, and dust on the factory floor, they’re well-suited to replace vision systems. Moreover, they can provide this detection with very low processing latency, typically under 2 ms. Because the sensors have a wide field of view and long detection range, they can be mounted above the area of operation, which simplifies installation. A single mmWave sensor can detect multiple objects or humans, which reduces the number of sensors required and lowers cost.
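As a rough illustration of the virtual safety bubble idea, the following Python sketch classifies a set of detected points into "stop", "slow", or "clear" zones based on the nearest detection. The function name, the two radii, and the assumption that points arrive as (x, y, z) coordinates in meters with the robot's own reflections already filtered out are all hypothetical choices made for this example; they are not taken from any sensor SDK.

```python
import math
from typing import Iterable, Tuple

# (x, y, z) in meters, in a frame centered on the sensor (assumed layout).
Point = Tuple[float, float, float]


def classify_zone(
    points: Iterable[Point],
    robot_xy: Tuple[float, float] = (0.0, 0.0),
    stop_radius_m: float = 1.0,   # hypothetical inner keep-out radius
    slow_radius_m: float = 2.0,   # hypothetical outer warning radius
) -> str:
    """Return 'stop', 'slow', or 'clear' based on the nearest detected point.

    Distance is measured in the floor plane (x, y) because the sensor is
    assumed to be mounted above the work area looking down.
    """
    nearest_m = min(
        (math.hypot(x - robot_xy[0], y - robot_xy[1]) for x, y, _ in points),
        default=math.inf,  # no detections means nothing is near the robot
    )
    if nearest_m <= stop_radius_m:
        return "stop"
    if nearest_m <= slow_radius_m:
        return "slow"
    return "clear"
```

A real safety function would also need to handle sensor faults and meet the applicable functional-safety requirements; this sketch only shows the geometric check.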

Using mmWave Sensors to Measure Ground Speed

Accurate odometry information is essential for the autonomous movement of a robot platform. It’s possible to derive this information simply by measuring the rotation of wheels or belts on the robot platform. This low-cost approach is easily defeated, however, if the wheels slip on surfaces such as loose gravel, dirt, or wet areas.
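To make the limitation concrete, here is a minimal Python sketch of wheel-based odometry. The function and parameter names are illustrative assumptions. Note that the estimate depends only on how far the wheel turned, so any rotation that happens while the wheel slips is still counted as distance traveled.

```python
import math


def wheel_distance_m(
    encoder_ticks: int,
    ticks_per_rev: int,
    wheel_diameter_m: float,
) -> float:
    """Estimate distance traveled from wheel encoder ticks.

    The result is revolutions times wheel circumference. It assumes the
    wheel never slips, which is exactly the assumption that fails on
    loose gravel, dirt, or wet surfaces.
    """
    if ticks_per_rev <= 0:
        raise ValueError("ticks_per_rev must be positive")
    revolutions = encoder_ticks / ticks_per_rev
    return revolutions * math.pi * wheel_diameter_m
```

For example, 2,000 ticks on a 1,000-tick encoder with a 0.1-m wheel reports roughly 0.63 m of travel, whether or not the platform actually moved that far.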

More advanced systems can assure very accurate odometry through the addition of an inertial measurement unit (IMU) that’s sometimes augmented with GPS. mmWave sensors can supply additional odometry information for robots that traverse uneven terrain or have a lot of chassis pitch and yaw by sending chirp signals toward the ground and measuring the Doppler shift of the return signal.

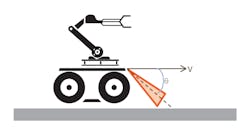

2. A radar configuration like that shown on this robotics platform can calculate ground speed independent of wheel rotation.

Figure 2 shows a potential configuration of a ground-speed mmWave radar sensor on a robotics platform. Whether to point the radar in front of the platform (as shown) or behind the platform (as is standard practice in agricultural vehicles) is one example of a design tradeoff. If pointed in front, then you can use the same mmWave sensor to also sense surface edges and avoid an unrecoverable platform loss, such as going off the shipping dock in a warehouse. If pointed behind the platform, you can mount the sensor at the platform’s center of gravity to minimize the pitch and yaw effect on the measurement, which is a large concern in agriculture applications.
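The Doppler measurement can be turned into ground speed with the standard radar relation f_d = 2·v·cos(θ)/λ, where θ is the angle between the radar beam and the direction of travel. The Python sketch below inverts that relation. The 77-GHz carrier and 45-degree depression angle are assumed defaults chosen for illustration, and the function name is hypothetical.

```python
import math

SPEED_OF_LIGHT_M_S = 299_792_458.0


def ground_speed_m_s(
    doppler_shift_hz: float,
    carrier_hz: float = 77e9,            # assumed carrier frequency
    depression_angle_deg: float = 45.0,  # assumed beam angle below horizontal
) -> float:
    """Convert a measured Doppler shift into platform ground speed.

    The radar only sees the velocity component along its beam, so the
    radial speed is divided by cos(angle) to recover horizontal speed.
    """
    wavelength_m = SPEED_OF_LIGHT_M_S / carrier_hz
    cos_angle = math.cos(math.radians(depression_angle_deg))
    if cos_angle <= 1e-6:
        # A beam pointing straight down sees no Doppler from forward motion.
        raise ValueError("beam angle too steep to resolve ground speed")
    return doppler_shift_hz * wavelength_m / (2.0 * cos_angle)
```

Because the result scales with 1/cos(θ), any chassis pitch that changes the effective beam angle feeds directly into the speed estimate, which is why the mounting position discussed above matters.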

Point-Cloud Information Generated by mmWave Sensors

A person walking in front of an mmWave sensor generates multiple reflection points. Each of these detected points can be mapped in a 3D field relative to the sensor (Fig. 3) within RVIZ, the popular Robot Operating System (ROS) visualization tool.

3. A point cloud of a person shown in RVIZ is captured with Texas Instruments' IWR1443BOOST EVM.

This mapping collects all points over a quarter-of-a-second time period. The collected points are dense enough that leg and arm movement is visible, which enables classifying the object as a moving person. The clarity of the open spaces in the 3D field is also very important data for mobile robots, as it enables autonomous operation.
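One simple way to collect points over a quarter-second period is a sliding time window over incoming radar frames. The Python sketch below is an illustrative assumption about how that could be done; the class name and the frame format (a timestamp in seconds plus a list of points) are hypothetical and are not the format used by the evaluation module's software.

```python
from collections import deque
from typing import Deque, Iterable, List, Tuple

Point = Tuple[float, float, float]  # (x, y, z) in meters, sensor frame


class PointCloudWindow:
    """Accumulate radar points from the most recent `window_s` seconds."""

    def __init__(self, window_s: float = 0.25) -> None:
        self.window_s = window_s
        self._frames: Deque[Tuple[float, List[Point]]] = deque()

    def add_frame(self, timestamp_s: float, points: Iterable[Point]) -> None:
        """Store a new frame and drop frames older than the window."""
        self._frames.append((timestamp_s, list(points)))
        while self._frames and timestamp_s - self._frames[0][0] > self.window_s:
            self._frames.popleft()

    def points(self) -> List[Point]:
        """Return every point currently inside the time window."""
        return [p for _, frame in self._frames for p in frame]
```

A longer window yields a denser cloud but smears fast-moving limbs, so the window length is a tradeoff between point density and motion blur.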

Mapping and Navigation Using mmWave Sensors

4. Mounted on the Turtlebot 2 is an mmWave Radar IWR1443BOOST Evaluation Module board, which helps accurately map objects.

Using the point information for objects detected by the mmWave sensor, it’s possible to accurately map obstacles in a room, and to use the free space identified for autonomous operation and navigation (Fig. 4).

5. Using the OctoMap library in ROS, an occupancy map is generated from the mmWave radar point cloud.

As you can see in Figure 5, a robot equipped with an mmWave sensor can accurately build a 3D occupancy grid map by driving through an area and detecting stationary obstacles. The robot can then use this map to avoid these stationary obstacles when autonomously navigating to specific destinations (Fig. 6), and to help it avoid dynamic obstacles that enter its path.
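The core idea of an occupancy grid can be sketched in a few lines of Python. This is a deliberately simplified stand-in, not the OctoMap algorithm: OctoMap stores voxels in an octree and updates occupancy probabilistically, whereas this example just counts how many radar points fall into each fixed-size voxel. The function names, the 0.1-m resolution, and the hit threshold are assumptions for illustration.

```python
import math
from collections import Counter
from typing import Iterable, Set, Tuple

Point = Tuple[float, float, float]  # (x, y, z) in meters, map frame
Voxel = Tuple[int, int, int]        # integer grid indices


def voxel_index(point: Point, resolution_m: float = 0.1) -> Voxel:
    """Map a 3D point to the index of the voxel that contains it."""
    return tuple(math.floor(c / resolution_m) for c in point)


def build_occupancy(
    points: Iterable[Point],
    resolution_m: float = 0.1,  # assumed voxel edge length
    min_hits: int = 2,          # assumed threshold to reject stray points
) -> Set[Voxel]:
    """Return the set of voxels hit by at least `min_hits` points."""
    hits = Counter(voxel_index(p, resolution_m) for p in points)
    return {voxel for voxel, count in hits.items() if count >= min_hits}
```

Requiring more than one hit per voxel is a crude way to suppress isolated noise detections; a planner would then treat the returned voxels as obstacles and everything else observed as free space.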

6. The IWR1443BOOST EVM occupancy map is used for autonomous navigation of a Turtlebot 2 with the ROS move_base package.

About the Author

Dennis Barrett

Product Marketing Manager

Dennis Barrett is a Marketing Manager for the Processor Business Unit at Texas Instruments, where he has worked on DSP, processor, and controller solutions for 30 years.