Testing the Unknown: The Real Problem with Autonomous Vehicles

Autonomous vehicles are a hot topic, so much so that the technology powering them and the surrounding regulations feel a lot like the Wild West. OEMs and Tier 1s are pitting competing strategies, architectures, and technologies against each other in a largely unregulated space to develop the safest vehicles on the road, all while racing to capture market share. For engineers, this makes it an exciting time to be involved in the automotive industry as we strive to apply cutting-edge technology to prevent the 10 million road accidents that happen every year.

With that said, this same innovation is creating a nightmare scenario for test engineers, because all of these evolving ideas mean testing against unknowns: unknown regulation, unknown technology, unknown architectures, even unknown algorithms created by neural networks instead of line-by-line code developed by software engineers.

On top of all of this is the burden of safety that falls on new technology. Every crash of a self-driving car brings more scrutiny and doubt of the technology, even though only a small number of self-driving accidents have been documented compared to the 1.3 million road deaths caused by human drivers every year. As with any new technology, we as consumers will need substantial reassurance about its safety before literally putting our lives in its hands.

This is the ultimate unknown for test engineers. How much testing will be needed to prove these systems are safe, both to government and legal standards and to our own confidence standards as consumers?

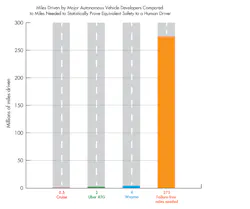

The chart illustrates the number of miles needed to prove that autonomous vehicle performance meets or exceeds human driver performance, according to statistical analysis by RAND Corporation, compared to actual driven miles as of January 2018. Obviously, new test strategies will need to be developed to meet these near-impossible numbers in any reasonable timeline.
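To see where mileage figures of this magnitude come from, here is a minimal sketch of a failure-free-miles estimate of the kind such statistical arguments rest on. It assumes failures occur independently at a constant rate R per mile, so the probability of driving n miles with no failure is about exp(-R * n); requiring that probability to be at most 1 - C gives n = -ln(1 - C) / R. The function names, the 95% confidence level, the benchmark of 1.09 fatalities per 100 million miles, and the 100-vehicle fleet are assumptions chosen for illustration, not values read from the chart.

```python
import math


def failure_free_miles(rate_per_mile: float, confidence: float) -> float:
    """Miles that must be driven with zero failures to claim, at the given
    confidence, that the true failure rate is below rate_per_mile.

    Assumes independent failures at a constant per-mile rate.
    """
    if rate_per_mile <= 0:
        raise ValueError("rate_per_mile must be positive")
    if not 0 < confidence < 1:
        raise ValueError("confidence must be strictly between 0 and 1")
    return -math.log(1.0 - confidence) / rate_per_mile


def years_to_drive(miles: float, vehicles: int, avg_mph: float) -> float:
    """Calendar years for a fleet driving around the clock to log `miles`."""
    miles_per_year = vehicles * avg_mph * 24 * 365
    return miles / miles_per_year


# Assumed benchmark: 1.09 fatalities per 100 million miles (illustrative).
HUMAN_FATALITY_RATE = 1.09 / 100_000_000

miles = failure_free_miles(HUMAN_FATALITY_RATE, confidence=0.95)
# Hypothetical fleet: 100 vehicles averaging 25 mph, 24 hours a day.
years = years_to_drive(miles, vehicles=100, avg_mph=25)

print(f"{miles / 1e6:.0f} million failure-free miles")
print(f"{years:.1f} years for the assumed fleet")
```

Under these assumptions the estimate comes out at roughly 275 million failure-free miles, or about 12.5 years of round-the-clock driving for the hypothetical fleet. Note that this only shows the vehicle is no worse than the benchmark; demonstrating a meaningful improvement over human drivers requires far more miles.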

Machine-Developed Algorithms: Testing the Black Box

There’s general agreement that the only way autonomous vehicles can become a reality is through the application of machine learning. The possible scenarios a vehicle could encounter are basically infinite, and it’s impossible to hard-code algorithms that successfully negotiate all of them. Instead, massive data sets of driving scenarios are being recorded, along with how humans react to them, and then fed into neural networks.

While this allows design engineers to reasonably tackle the problem of algorithm design, it makes the test engineer’s job much harder. Algorithms are now a black box. This requires more extensive testing because there is no human-readable code to study when deciding which test scenarios matter. Rather, you need to test against almost every conceivable scenario to ensure the algorithms function properly.
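As a rough illustration of what testing a black box against "almost every conceivable scenario" looks like in practice, the sketch below enumerates every combination of a few scenario parameters and checks a safety property on the output of an opaque model. Everything here is hypothetical: the scenario dimensions, the `toy_model` stand-in for a trained network, and the `brakes_for_actors` check are invented for the example and do not describe any particular toolchain.

```python
from itertools import product
from math import prod

# Hypothetical scenario dimensions; a real campaign would have many more.
SCENARIO_SPACE = {
    "weather": ["clear", "rain", "fog", "snow"],
    "lighting": ["day", "dusk", "night"],
    "road": ["highway", "urban", "rural"],
    "actor": ["none", "pedestrian", "cyclist", "vehicle"],
    "ego_speed_kph": [30, 60, 90, 120],
}


def count_scenarios(space):
    """Number of combinations in the full parameter grid."""
    return prod(len(values) for values in space.values())


def enumerate_scenarios(space):
    """Yield every combination of parameter values as a dict."""
    keys = list(space)
    for combo in product(*(space[key] for key in keys)):
        yield dict(zip(keys, combo))


def run_suite(model, space, is_safe):
    """Call the black box on each scenario; return the ones that fail."""
    failures = []
    for scenario in enumerate_scenarios(space):
        action = model(scenario)
        if not is_safe(scenario, action):
            failures.append(scenario)
    return failures


def toy_model(scenario):
    """Stand-in for a trained network; its internals are treated as opaque.

    It has one deliberately planted blind spot: cyclists in fog at night.
    """
    if scenario["actor"] == "none":
        return "cruise"
    if (scenario["actor"], scenario["weather"], scenario["lighting"]) == (
        "cyclist", "fog", "night"
    ):
        return "cruise"
    return "brake"


def brakes_for_actors(scenario, action):
    """Safety property: the vehicle must brake whenever an actor is present."""
    return scenario["actor"] == "none" or action == "brake"


failures = run_suite(toy_model, SCENARIO_SPACE, brakes_for_actors)
print(count_scenarios(SCENARIO_SPACE), "scenarios,", len(failures), "failures")
```

Even this toy grid of five parameters yields hundreds of cases, and each added parameter multiplies the total, which is why exhaustive coverage quickly becomes impractical and the blind spot is only found if the grid happens to include it.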

Evolving Technologies and Architectures

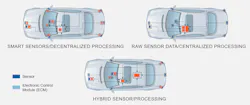

As technology evolves and becomes more cost-competitive, design engineers are constantly updating their ADAS designs to include new and different sensor types, and in some cases, they’re even fundamentally changing system architectures. Right now, there are competing schools of thought about centralized vs. distributed processing architectures. Adding to the complexity is the role cloud computing will play as 5G radio technology is rolled out to support the bandwidth requirements of the massive data streams coming off sensor systems.

Vehicle ADAS architectures are currently changing as developers test different strategies for power consumption, data fidelity, and processing power.

For test engineers, this creates a need for flexibility. No one can say for sure what types of sensors, or how many, will be on vehicle platforms even a year or two from now. When you add in the fact that most test organizations don’t have the budget or time to bring up new test systems every year to meet these requirements, it’s clear they need test systems that are adaptable to change, can add more cameras or radar sensors, and can take on new sensor types like LiDAR.
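One way to picture this kind of adaptability is a test rig described by configuration, with sensor types registered as plug-ins so that a new type can be added without rewriting the rig. The sketch below is only an illustration under that assumption: `SensorChannel`, `register_sensor`, `build_rig`, and the data rates are all hypothetical placeholders, not a real product API or measured figures.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class SensorChannel:
    kind: str              # sensor type, e.g. "camera"
    name: str              # unique channel name within the rig
    data_rate_mbps: float  # placeholder bandwidth figure


# Registry mapping a sensor type to a factory that creates its channels.
SENSOR_FACTORIES: Dict[str, Callable[[str], SensorChannel]] = {}


def register_sensor(kind: str, data_rate_mbps: float) -> None:
    """Make a sensor type available to rig configurations."""
    SENSOR_FACTORIES[kind] = lambda name: SensorChannel(kind, name, data_rate_mbps)


def build_rig(config: Dict[str, int]) -> List[SensorChannel]:
    """Create channels from a {sensor type: count} configuration."""
    rig: List[SensorChannel] = []
    for kind, count in config.items():
        if kind not in SENSOR_FACTORIES:
            raise KeyError(f"no driver registered for sensor type {kind!r}")
        factory = SENSOR_FACTORIES[kind]
        rig.extend(factory(f"{kind}_{index}") for index in range(count))
    return rig


def total_bandwidth_mbps(rig: List[SensorChannel]) -> float:
    """Aggregate data rate the test system must handle for this rig."""
    return sum(channel.data_rate_mbps for channel in rig)


# This year's platform (placeholder data rates).
register_sensor("camera", data_rate_mbps=1500.0)
register_sensor("radar", data_rate_mbps=100.0)
rig_v1 = build_rig({"camera": 4, "radar": 2})

# Next year's platform adds LiDAR: one registration plus a config change.
register_sensor("lidar", data_rate_mbps=300.0)
rig_v2 = build_rig({"camera": 6, "radar": 4, "lidar": 1})

print(len(rig_v1), total_bandwidth_mbps(rig_v1))
print(len(rig_v2), total_bandwidth_mbps(rig_v2))
```

The design choice being illustrated is that the sensor mix lives in data rather than in the test system's structure, so a platform change becomes a configuration change plus, at most, one new registration.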

Open and Adaptable Test Infrastructure

As autonomy packages in vehicles become productized, government regulation will need to be developed to ensure consumer safety—or at the very least, build consumer confidence.

We’re already seeing the beginnings of this with ISO 26262 functional safety standards and Euro NCAP insurance testing standards. In addition, Germany has recently released an autonomous vehicle ethical standard that governs the decisions self-driving cars should make.

Largely, though, we’re in a world where future regulation and the testing it will mandate are unknown and variable. Not only that, but as test expertise in this domain develops, organizations will add their own testing “secret sauce” to differentiate themselves. Test engineers need to be prepared to adapt test procedures and documentation as these internal and external standards continue to be written and evolve. They can start hedging against this unknown future today by investing in open and adaptable test infrastructure.

About the Author

Jeff Phillips

Head of Automotive Marketing

As Head of Automotive Marketing at NI, Jeff Phillips is responsible for leading the go-to-market strategy for Automotive initiatives. This involves understanding market needs and expectations, defining solution messaging, and ultimately evangelizing how NI’s software-centric platform can solve the growing challenges in testing the vehicles of today and tomorrow.

Phillips uses his Certified LabVIEW Developer honors to volunteer as a FIRST Robotics mentor with elementary and middle school children and holds a Bachelor’s in mechanical engineering from The University of Tennessee.
