Most of you are too young to remember when the only computer interface around was a keyboard and paper. It sounds almost as archaic as pencil and paper, but it worked. The interface itself was easy to learn because it offered few options, although the same could not be said of the commands being typed.

The keyboard is still with us, but screens have long since replaced paper. This is the set-up I am using to type this article, and the user interface is fine for what I am doing now. (I tend to use hot keys a lot, but I switch to the mouse quite often; such is the nature of the beast.)

It should be noted, though, that the methods used for midsize screens do not necessarily work as well with small and large screens. Buttons and touch interfaces are the common choice for small screens, while wands, rings, and hand waving are attempting to replace the television remote with its myriad buttons.

The small screen interfaces typically utilize absolute positioning for controls like buttons and sliders. It helps if the controls are large enough to hit with a finger. Personally, I tend to need larger buttons. Gestures like flicking to flip pages and pinching for zooming use a more relative approach. This makes the gestures easier to utilize because the position of the fingers is less critical.

Quantum Interface’s Precognition adds a new direction to the user interface. It uses movement and direction to select items, as opposed to the absolute positioning common to small devices like smartphones and tablets. Like gestures, positioning is a less critical component with this approach.

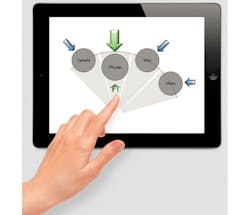

The system brings up a selection list when the finger moves into a control area, such as the center of the screen (see figure). Moving the finger side to side moves the highlight from item to item. Typically, the highlighted item is shown as a larger, brighter version of itself. Sliding the finger toward the highlighted item selects it. This might bring up another level of selection, such as the items within a folder.
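To make the flow concrete, here is a minimal Python sketch of a selector driven purely by motion deltas. It is not Quantum Interface's algorithm, which the company has not published here. The class name `RelativeSelector`, the `step` and `commit` thresholds, and the assumption that the list sits above the finger (so moving toward it means a negative `dy` in screen coordinates) are all invented for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class RelativeSelector:
    """Toy selector that consumes only relative motion (dx, dy)."""
    items: List[str]
    step: float = 40.0     # hypothetical sideways travel per highlight step
    commit: float = 60.0   # hypothetical travel toward the list that selects
    highlighted: int = 0
    _side: float = 0.0     # sideways travel not yet turned into a step
    _toward: float = 0.0   # accumulated travel toward the list

    def move(self, dx: float, dy: float) -> Optional[str]:
        """Feed one motion delta; return the selected item, or None."""
        if abs(dx) >= abs(dy):
            # Mostly sideways: move the highlight and drop commit progress.
            self._toward = 0.0
            self._side += dx
            steps = int(self._side / self.step)  # truncates toward zero
            self._side -= steps * self.step
            last = len(self.items) - 1
            self.highlighted = max(0, min(last, self.highlighted + steps))
            return None
        # Mostly vertical: screen y grows downward, so -dy is "toward".
        self._toward = max(0.0, self._toward - dy)
        if self._toward >= self.commit:
            self._toward = 0.0
            self._side = 0.0
            return self.items[self.highlighted]
        return None


if __name__ == "__main__":
    selector = RelativeSelector(["Mail", "Music", "Maps"])
    for delta in [(45, 3), (50, -5), (2, -30), (1, -35)]:
        choice = selector.move(*delta)
        if choice is not None:
            print("selected:", choice)
```

Note that nothing in `move` depends on where the finger is on the screen. Only the direction and distance of travel matter, which is the property the article attributes to Precognition.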

The key is that the system uses only relative movement. It works with interfaces like a touchscreen that generate absolute coordinates, since relative movement can be derived from successive positions, and it turns out that Precognition works equally well with sensors that detect only relative movement. This tends to be handy for devices like wands or television remotes. Relative motion is also easily detected using a range of other sensing systems, even on devices as small as a watch. This is useful because the sensor does not have to be on the watch face, where a finger blocks much of a small display.
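As a rough illustration of why absolute and relative sensors can feed the same interface, the Python sketch below turns a stream of absolute touch points into motion deltas. The function name `deltas_from_absolute` and the sample coordinates are hypothetical; a sensor that already reports relative motion would simply skip this step.

```python
from typing import Iterable, Iterator, Optional, Tuple

Point = Tuple[float, float]


def deltas_from_absolute(points: Iterable[Point]) -> Iterator[Point]:
    """Yield (dx, dy) between consecutive absolute positions."""
    prev: Optional[Point] = None
    for x, y in points:
        if prev is not None:
            yield (x - prev[0], y - prev[1])
        prev = (x, y)


if __name__ == "__main__":
    # The same stroke drawn in two different places on the screen.
    near_corner = [(100, 200), (110, 200), (130, 190)]
    near_edge = [(500, 50), (510, 50), (530, 40)]
    print(list(deltas_from_absolute(near_corner)))
    print(list(deltas_from_absolute(near_edge)))
```

Both strokes produce identical deltas, so anything downstream that consumes only deltas cannot tell where on the screen the gesture started.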

You really need to check out Quantum Interface’s videos to see how the interaction flows. Like 3D glasses, it is a visual thing that has to be seen to be appreciated. Where and how Precognition shows up will be interesting to watch. It just goes to show that there are more interface opportunities for that flood of IoT devices.

About the Author

William Wong Blog

Senior Content Director

Bill's latest articles are listed on this author page, William G. Wong.

The latest blogs have been moved to alt.embedded on Electronic Design.

Bill Wong covers Digital, Embedded, Systems and Software topics at Electronic Design. He writes a number of columns, including Lab Bench and alt.embedded, plus Bill's Workbench hands-on column. Bill is a Georgia Tech alumnus with a B.S. in Electrical Engineering and a master's degree in computer science from Rutgers, The State University of New Jersey.

He has written a dozen books and was the first Director of PC Labs at PC Magazine. He has worked in the computer and publication industry for almost 40 years and has been with Electronic Design since 2000. He helps run the Mercer Science and Engineering Fair in Mercer County, NJ.