If you’re a programmer looking for an artificial intelligence bargain, you should check out Intel’s $79 Movidius Neural Compute Stick (Fig. 1). I did, and I’m very impressed with its functionality, as well as the software that comes with it.

For those unfamiliar with the Neural Compute Stick, it is a USB 3 device with a Movidius Myriad 2 VPU (vision processing unit). The chip includes a deep neural network (DNN) accelerator in addition to camera inputs; it is designed to handle image processing chores as well as other DNN applications. Unfortunately, the camera inputs are not exposed on the stick, but it is possible to stream video via the USB 3 interface. Some of the examples that come with the system do just that.

The stick will work with just about any system that has a USB 3 connection, but the software development kit (SDK) targets Ubuntu 16.04. This is the latest long-term support (LTS) release of Ubuntu, and it supports a variety of hosts, including x86 and Arm.

I initially planned on testing the stick using a virtual machine running Ubuntu on Linux KVM, but unfortunately, the USB passthrough was not sufficient to handle the odd handshaking that the stick uses for configuration. Luckily, I had a Raspberry Pi 3 handy, which is another platform supported by both the stick and Ubuntu.

The SDK uses a command-line installation that also installs Caffe, which was the first machine learning (ML) framework Intel supported on the stick. Intel has since expanded that support to include TensorFlow. The example programs all take advantage of the Caffe runtime support, but there are sample applications for TensorFlow that can easily be tested with Intel’s platform.

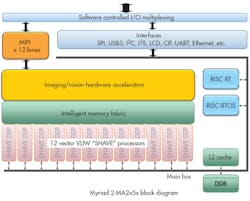

The Getting Started manual outlines the few steps needed to install and test the support of the USB stick. These are command line application scripts, although one requires a USB camera to do its recognition chores in real time (Fig. 2). This test application actually highlights the advantage of using the Movidius Myriad 2 VPU chip, as the Raspberry Pi would be hard-pressed to keep up with the dozen specialized vector VLIW SHAVE processors and two RISC processors for this type of application (Fig. 3). In actuality, the chip is hardly breaking a sweat.

Moving past the examples takes a bit more work. The addition of TensorFlow support may help in this area, because it is easier to set up using existing ML models and some Python code.
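To give a feel for the "some Python code" involved, here is a minimal sketch of a single inference pass. It assumes the first-generation NCSDK Python API (`EnumerateDevices`, `Device`, `OpenDevice`, `AllocateGraph`, `LoadTensor`, `GetResult`, `DeallocateGraph`, `CloseDevice`); later SDK releases renamed these calls, so treat the names as assumptions to check against the installed version. The helper `classify_once` and the `"frame-0"` tag are hypothetical, and the input tensor is assumed to be already resized, normalized, and converted to 16-bit floats for the model in question.

```python
def classify_once(mvnc, graph_blob, tensor):
    """Run one inference on the first attached stick and return the raw output.

    mvnc       -- the NCSDK module (assumed: `from mvnc import mvncapi as mvnc`)
    graph_blob -- bytes of a graph file already compiled for the stick
    tensor     -- preprocessed input (assumed: a float16 NumPy array)
    """
    names = mvnc.EnumerateDevices()
    if not names:
        raise RuntimeError("no Neural Compute Stick found")

    device = mvnc.Device(names[0])
    device.OpenDevice()
    try:
        # Upload the compiled network to the stick.
        graph = device.AllocateGraph(graph_blob)
        try:
            # The second argument is a user object that is handed back
            # alongside the result; here it is just a hypothetical tag.
            graph.LoadTensor(tensor, "frame-0")
            output, _tag = graph.GetResult()
            return output
        finally:
            graph.DeallocateGraph()
    finally:
        # Always release the device, even if inference failed.
        device.CloseDevice()
```

On a real system, the module would come from `from mvnc import mvncapi as mvnc`, and `graph_blob` would be read in binary mode from a graph file produced by the SDK's compiler tool. Passing the module in as a parameter is only a convenience that lets the flow be exercised without hardware attached.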

In general, the Intel Movidius Neural Compute Stick will be used for processing models that have been configured on other systems, although it can be used for training purposes. It is possible to use multiple sticks on a single system, although I only had one for testing. A USB 3 hub will have enough bandwidth to work with four or more, and these can run in parallel on the same application, although I did not delve into this type of setup.
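A rough back-of-the-envelope check supports the hub bandwidth claim. The sketch below assumes 224 × 224 RGB input frames sent as 16-bit floats at 30 frames/s per stick; those figures are illustrative assumptions, not measurements. It compares the combined traffic against the USB 3.0 payload ceiling (5 Gbit/s line rate less 8b/10b encoding overhead). Real-world throughput is lower once protocol overhead is counted, and the small result tensors coming back are ignored.

```python
USB3_LINE_RATE_BITS_PER_S = 5_000_000_000  # USB 3.0 SuperSpeed signaling rate

# 8b/10b line coding leaves 8 payload bits out of every 10 on the wire.
USB3_PAYLOAD_BYTES_PER_S = USB3_LINE_RATE_BITS_PER_S * 8 // 10 // 8


def bytes_per_frame(width, height, channels, bytes_per_value=2):
    """Size of one input tensor; 2 bytes per value assumes 16-bit floats."""
    return width * height * channels * bytes_per_value


def hub_utilization(sticks, fps, width=224, height=224, channels=3):
    """Fraction of the USB 3.0 payload ceiling used by input frames alone."""
    traffic = sticks * fps * bytes_per_frame(width, height, channels)
    return traffic / USB3_PAYLOAD_BYTES_PER_S


if __name__ == "__main__":
    for sticks in (1, 4, 8):
        print(sticks, "stick(s):", f"{hub_utilization(sticks, 30):.1%}")
```

Under these assumptions, four sticks use roughly 7% of the theoretical ceiling, so the hub is unlikely to be the bottleneck; inference time on each stick is the more probable limit.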

Using existing ML models and data is a relatively straightforward process, depending on the framework used, since the interfaces are standardized. Developing models from scratch will be a major undertaking regardless of which platform is used. Likewise, you will need to delve into the documentation or examples for your platform of choice, including Caffe and TensorFlow, to get a handle on using or creating models, as the documentation for the stick will simply make sure things work properly.

The Movidius Myriad 2 VPU is already used in applications like DJI’s Spark drone. An embedded design like this has the advantage of direct camera input to the chip, but logically, it will be the same as using the USB camera with the stick.

Developers can get started with ML on a PC, but the Movidius Neural Compute Stick offers support for embedded applications. It can handle the DNN heavy lifting, allowing platforms like the Raspberry Pi to handle ML applications that would otherwise be too slow to be useful.

About the Author

William G. Wong

Senior Content Director - Electronic Design and Microwaves & RF

