Lowering the Failure Rates in NAND Flash Systems Requires More than Strong ECC

How do we achieve the lowest failure rates in our NAND flash-based system? You may have had this discussion with your engineering team or with your storage system supplier. What steps are being taken to ensure that you’re getting a quality solution that not only corrects the inevitable errors efficiently, but also establishes a system structure robust enough to prevent errors from occurring in the first place?

As the process geometry of NAND flash memory shrinks, bit error rates increase and, consequently, so do system failure rates. Anyone who understands the basics of SD cards, USB flash drives, and other NAND flash-based solutions knows that the key component in minimizing these failure rates is the NAND flash-memory controller.

You may already be familiar with this component and have discussed its error-correction code (ECC) strength. But have you ever wondered what’s actually happening inside that tiny package, and what the flash-memory controller does to prevent failures? ECC is only one of several building blocks. In a well-designed system, reliability and error prevention are woven throughout an array of processes, with ECC handling the unavoidable bit errors. If you want to impress your boss and bring more to the table, read on as we explain the capabilities of these powerful flash-memory controllers.

Qualification

Even before a system is assembled, either in-house or through a system integrator, a significant amount of planning goes into flash qualification. In other words, a flash-memory controller should be strategically paired with the right flash memory. So, what exactly do we mean by qualification? Qualification doesn’t only mean that the controller works with the selected flash. Above all, it means testing, and plenty of it.

For instance, Hyperstone ensures that each controller/flash combination has been tested thoroughly. This begins with characterizing the flash memory itself: the NAND flash is tested extensively at all lifecycle stages and in different use cases. This knowledge helps to design the error-correction unit properly, to extract the log-likelihood-ratio (LLR) tables used in soft-decision decoding, and to implement the most effective overall error-recovery flow.
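To make the LLR-extraction step more concrete, here is a minimal Python sketch. It assumes that characterization yields pairs of (written bit, soft-read region), that 0s and 1s are equally likely, and that a positive LLR favours 0. The function name `extract_llr_table` and the sample counts are hypothetical; real characterization flows and table formats are controller-specific and are not described in this article.

```python
import math
from collections import Counter


def extract_llr_table(samples, num_regions, smoothing=0.5):
    """Estimate one LLR per soft-read region from characterization data.

    samples: iterable of (written_bit, read_region) pairs, where read_region is an
    index 0..num_regions-1 naming the soft-read voltage window the cell fell in.
    With equal priors, LLR = ln(P(region | 0) / P(region | 1)); positive favours 0.
    """
    counts = {0: Counter(), 1: Counter()}
    for bit, region in samples:
        counts[bit][region] += 1
    totals = {b: sum(counts[b].values()) for b in (0, 1)}
    table = []
    for region in range(num_regions):
        # Additive smoothing avoids log(0) for regions never observed for one bit value.
        p0 = (counts[0][region] + smoothing) / (totals[0] + smoothing * num_regions)
        p1 = (counts[1][region] + smoothing) / (totals[1] + smoothing * num_regions)
        table.append(math.log(p0 / p1))
    return table


if __name__ == "__main__":
    # Synthetic data: region 0 is the lowest threshold-voltage window, region 3 the highest.
    # Written 0s (programmed cells) mostly land high; written 1s (erased cells) mostly land low.
    samples = ([(0, 3)] * 90 + [(0, 2)] * 8 + [(0, 1)] * 2
               + [(1, 0)] * 90 + [(1, 1)] * 8 + [(1, 2)] * 2)
    for region, llr in enumerate(extract_llr_table(samples, num_regions=4)):
        print(f"region {region}: LLR = {llr:+.2f}")
```

In practice, a controller would keep separate tables for different lifecycle stages (for example, per range of program/erase cycles), which is exactly what characterization across the device lifetime makes possible.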

When planning a design, most companies discuss flashes in terms of overall cost, but many forget that flashes behave differently depending on their architecture, the environment, and the use case they’re exposed to. Each scenario needs its own processing, correction, and recovery options to achieve optimal results. This characterization activity is extremely important because the collected data makes it possible to validate tools as accurately and effectively as possible.

Thorough, well-thought-out qualification is the groundwork for a robust and stable system. For demanding systems, it’s worthwhile to question and discuss the qualification process with your system integrator. Alternatively, if you’re designing your solution in-house for more flexibility, ask your controller company directly. While solid qualification sets a system up for success, calibration and controller features such as Read Disturb Management, Wear Leveling, and Dynamic Data Refresh act more directly to prevent errors.

Threshold-Voltage Disturbs

An efficient calibration process can keep bit error rates low throughout the lifetime of the device while adapting dynamically to variations in the threshold voltages of the memory cells. Many disturbs affect a cell’s threshold voltage: program/erase cycling, read disturb, data retention, temperature variation, and so on. Flashes do not track threshold variations automatically; instead, the flash controller is expected to decide when calibration is needed and to execute the appropriate sequence of operations.

Since different blocks or pages can experience different disturbs, optimal calibration for one page can’t necessarily be applied to another page (Fig. 1).

1. Calibration shifts the read reference voltage used to sense the cell.
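The per-page nature of calibration can be illustrated with a minimal Python sketch for a single-level cell (SLC) page. It assumes the stored data is known (as during characterization, or after a successful ECC decode), sweeps a hypothetical list of candidate read reference voltages, and keeps the one with the fewest misreads. Real controllers rely on vendor-specific read-retry or calibration sequences; the function names and voltage values below are purely illustrative.

```python
def count_misreads(cells, vref):
    """Count bits read incorrectly at read reference voltage vref.

    cells: list of (stored_bit, threshold_voltage) pairs for one SLC page.
    SLC convention: a cell whose threshold voltage is below vref reads as 1
    (erased); otherwise it reads as 0 (programmed).
    """
    errors = 0
    for stored_bit, vth in cells:
        read_bit = 1 if vth < vref else 0
        if read_bit != stored_bit:
            errors += 1
    return errors


def calibrate_read_reference(cells, candidates):
    """Return the candidate read reference with the fewest misreads for this page."""
    return min(candidates, key=lambda vref: count_misreads(cells, vref))


# Hypothetical threshold voltages in volts. Page B has suffered retention loss,
# so its programmed distribution has drifted down toward the erased one.
erased_a = [-1.0, -0.5, 0.3, 0.6]
programmed_a = [1.2, 1.8, 2.2, 2.6]
erased_b = [-1.2, -0.8, -0.3, 0.1]
programmed_b = [0.7, 1.1, 1.5, 1.9]

page_a = [(1, v) for v in erased_a] + [(0, v) for v in programmed_a]
page_b = [(1, v) for v in erased_b] + [(0, v) for v in programmed_b]
candidates = [0.0, 0.5, 1.0, 1.5]

best_a = calibrate_read_reference(page_a, candidates)
best_b = calibrate_read_reference(page_b, candidates)
print(best_a, best_b)                  # 1.0 0.5
print(count_misreads(page_b, best_a))  # 1: page A's setting misreads one bit on page B
```

The last line shows why one page’s optimum cannot simply be reused for another page that has seen different disturbs.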

Error-Prevention Mechanisms

Furthermore, error-prevention mechanisms such as Wear Leveling (WL), Read Disturb Management (RDM), Near-Miss ECC, and Dynamic Data Refresh (DDR) work together to ensure that data is transferred to the flash efficiently and reliably. Wear Leveling ensures that all blocks in a flash or storage system approach their defined erase-cycle budget at the same time, rather than some blocks reaching it early. Read Disturb Management counts the read operations to the flash; when a certain threshold is reached, the surrounding regions are refreshed.
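A minimal Python sketch of these two mechanisms, under deliberately simplified assumptions: wear leveling is modeled only as always allocating the least-erased free block (dynamic wear leveling), and read disturb management as a per-block read counter that queues the block for refresh at a threshold. The `Block` class, the function names, and the tiny read limit are hypothetical; production firmware also performs static wear leveling (relocating cold data) and uses flash-specific limits.

```python
from dataclasses import dataclass


@dataclass
class Block:
    block_id: int
    erase_count: int = 0  # program/erase cycles consumed so far
    read_count: int = 0   # reads since the block was last written


def allocate_block(free_blocks):
    """Dynamic wear leveling: pick the free block with the fewest erases, so that
    erase counts grow evenly instead of a few blocks wearing out first."""
    return min(free_blocks, key=lambda b: b.erase_count)


def record_read(block, read_limit, refresh_queue):
    """Read disturb management: count reads per block and queue the block for a
    refresh (copying its data to a fresh block) once the read limit is reached."""
    block.read_count += 1
    if block.read_count >= read_limit and block.block_id not in refresh_queue:
        refresh_queue.append(block.block_id)


def refresh(block):
    """After the data has been rewritten elsewhere, the old block is erased:
    its read counter resets and one more erase cycle is consumed."""
    block.read_count = 0
    block.erase_count += 1


free = [Block(0, erase_count=12), Block(1, erase_count=3), Block(2, erase_count=7)]
print(allocate_block(free).block_id)  # 1

queue = []
hot = Block(5)
for _ in range(4):
    record_read(hot, read_limit=3, refresh_queue=queue)
print(queue)  # [5]
```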

Near-Miss ECC refreshes any data read by the application whose error count exceeds a configured threshold. Dynamic Data Refresh scan-reads all data as a background operation and identifies the error status of every block. If a certain threshold of errors per block or per ECC unit is exceeded during this scan, a refresh operation is triggered.
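Both checks come down to comparing an error count against a threshold, as in the Python sketch below. It assumes the ECC decoder reports how many bits it corrected in each ECC unit, and it uses a hypothetical correction limit `ECC_LIMIT` and refresh threshold `REFRESH_THRESHOLD`. Placing the refresh threshold below the correction limit is what lets the controller move data before it becomes uncorrectable.

```python
ECC_LIMIT = 40          # hypothetical: max bit errors the ECC can correct per unit
REFRESH_THRESHOLD = 30  # hypothetical: refresh once corrected errors exceed this


def near_miss_check(corrected_bits):
    """Near-Miss ECC: classify one host read of a single ECC unit."""
    if corrected_bits > ECC_LIMIT:
        return "uncorrectable"
    if corrected_bits > REFRESH_THRESHOLD:
        return "corrected, refresh"  # data was returned, but it is close to the limit
    return "ok"


def dynamic_data_refresh_scan(scan_results):
    """Background scan: scan_results maps block id -> list of corrected-bit counts,
    one per ECC unit read during the scan. Returns the blocks that need a refresh."""
    return sorted(
        block_id
        for block_id, unit_errors in scan_results.items()
        if max(unit_errors) > REFRESH_THRESHOLD
    )


print(near_miss_check(12))  # ok
print(near_miss_check(35))  # corrected, refresh
print(dynamic_data_refresh_scan({7: [3, 5, 2], 8: [10, 31, 4], 9: [0, 0, 1]}))  # [8]
```

The difference between the two is only the trigger: Near-Miss ECC reacts to reads the application issues anyway, while the background scan also reaches data that is rarely read.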

Different controller companies often give these features different names and, although they target a common goal, the logic and algorithms behind them reach it in different ways. It pays to establish a close relationship with your controller company to understand how its versions of these features work together with the qualified flashes.

Error Correction Still the Priority

Finally, error correction has become one of the best-known and most important tasks of flash-memory controllers. Although error prevention arguably deserves more weight, the complexity and strength of error correction still make it the controller’s most valued mechanism.

Error-correction coding has become increasingly difficult within area and power limitations. As the demands on error correction grow, older codes can no longer deliver the required correction performance within the limited spare area available in the latest flashes.
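To see why spare area constrains older codes, consider binary BCH codes, a widely used example of hard-decision ECC in flash controllers (the article does not name which older codes it means). A t-error-correcting binary BCH code over GF(2^m) needs at most m·t parity bits, so redundancy grows linearly with the number of errors to be corrected. The Python sketch below uses that m·t bound; the function names and the 1 KiB / 140-byte figures are illustrative assumptions, not values for any particular flash.

```python
def bch_parity_bits(data_bits, t):
    """Parity bits for a binary t-error-correcting BCH code, using the m*t bound.

    m is the smallest integer such that the whole (shortened) codeword,
    data plus parity, fits in the code length 2**m - 1.
    """
    m = 1
    while data_bits + m * t > 2**m - 1:
        m += 1
    return m * t


def max_correctable_errors(data_bits, spare_bits):
    """Largest t whose BCH parity (m*t bound) still fits in the available spare area."""
    t = 0
    while bch_parity_bits(data_bits, t + 1) <= spare_bits:
        t += 1
    return t


data_bits = 1024 * 8  # one 1 KiB ECC unit
print(bch_parity_bits(data_bits, 40))              # 560 parity bits (m = 14)
print(max_correctable_errors(data_bits, 140 * 8))  # 80
```

Doubling the correction strength doubles the parity, so once the spare area is fixed, a hard-decision code of this kind hits a ceiling regardless of how much the raw bit error rate rises.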

On that front, Hyperstone developed its own error-correction engine: a hard- and soft-decision error-correction module based on generalized concatenated codes. The key advantage of this code construction is that the number of correctable errors in each codeword can be determined analytically. As a result, the error correction can guarantee a level of correction performance for every codeword, and for any flash memory that specifies a guaranteed bit error rate, reliable operation within the specified parameters can be guaranteed.
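When the correction capability t of a codeword is known, a specified raw bit error rate can be turned into a codeword failure probability. The Python sketch below assumes independent bit errors (a binomial model, which real flash only approximates) and computes the probability that an n-bit codeword contains more than t errors. The article does not say how Hyperstone performs this analysis, and the codeword length and error rate in the example are hypothetical.

```python
import math


def codeword_failure_probability(n_bits, t, ber):
    """P(more than t bit errors in an n_bits codeword), assuming each bit fails
    independently with probability ber:

        P_fail = sum_{k=t+1}^{n} C(n, k) * ber**k * (1 - ber)**(n - k)

    Each term is evaluated in log space so that huge binomial coefficients and
    tiny probabilities do not overflow or underflow prematurely.
    """
    if not 0.0 <= ber <= 1.0:
        raise ValueError("ber must be in [0, 1]")
    if t >= n_bits or ber == 0.0:
        return 0.0
    if ber == 1.0:
        return 1.0
    mean = n_bits * ber
    log_p, log_q = math.log(ber), math.log1p(-ber)
    total = 0.0
    for k in range(t + 1, n_bits + 1):
        log_term = (math.lgamma(n_bits + 1) - math.lgamma(k + 1)
                    - math.lgamma(n_bits - k + 1) + k * log_p + (n_bits - k) * log_q)
        term = math.exp(log_term)
        total += term
        # Beyond the mean the terms only shrink; stop once they no longer matter.
        if k > mean and term <= total * 1e-16:
            break
    return total


n = 9216  # hypothetical codeword: 1 KiB of data plus 128 bytes of parity
for t in (40, 60):
    print(t, codeword_failure_probability(n, t, ber=1e-3))
```

Under this model, each extra unit of guaranteed correction capability buys orders of magnitude in codeword failure probability, which is why an analytically known t matters.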

With hard-decision decoding, when the data is read back from the flash memory and passed to the error-correction module, the decision about which bits are erroneous is based solely on the redundant information added to the codeword. Using only this information means that every bit is treated as equally reliable: the decoder has no indication of which bits are more likely to be wrong.

Soft-decision decoding additionally uses so-called soft information: probabilities that indicate how likely it is that a received bit really has the value that was read, rather than the other value (a bit can be either “zero” or “one”). These probabilities come from the LLR tables generated during characterization and stored as lookup tables in the controller. With this information, the error correction has much more input. For every single bit, it knows how likely the bit is to be what was received; for example, a zero was received with 74% confidence that the original value was a zero. The error correction therefore has a clear indication of which bits are likely to be wrong and which are unlikely to be wrong, and this additional information significantly increases its correction capability.
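The following Python sketch shows, on a deliberately tiny example, why soft information helps. It assumes the common convention LLR = ln(P(bit = 0) / P(bit = 1)), so the 74%-confidence zero above corresponds to an LLR of about +1.05. Instead of Hyperstone’s generalized concatenated codes, it uses a single even-parity check decoded with Wagner’s rule (flip the least reliable bit). The lookup-table values and function names are hypothetical.

```python
import math


def llr_from_confidence(p_zero):
    """LLR for a bit that is 0 with probability p_zero (positive favours 0)."""
    return math.log(p_zero / (1.0 - p_zero))


# Hypothetical lookup table: soft-read region -> LLR, as a controller might store it.
LLR_TABLE = {0: -4.0, 1: -1.0, 2: 1.0, 3: 4.0}


def hard_decision(llrs):
    """Keep only the sign of each LLR, discarding the reliability information."""
    return [0 if llr >= 0 else 1 for llr in llrs]


def soft_decode_single_parity(llrs):
    """Wagner decoding for one even-parity check: if parity fails, flip the bit
    the soft information marks as least reliable (smallest |LLR|)."""
    bits = hard_decision(llrs)
    if sum(bits) % 2 != 0:
        weakest = min(range(len(llrs)), key=lambda i: abs(llrs[i]))
        bits[weakest] ^= 1
    return bits


print(round(llr_from_confidence(0.74), 2))  # 1.05

# Written codeword [0, 1, 1, 0] (even parity). The third bit drifted and was read
# in region 2 ("weakly 0"), so the hard decision gets it wrong.
regions = [3, 0, 2, 3]
llrs = [LLR_TABLE[r] for r in regions]
print(hard_decision(llrs))              # [0, 1, 0, 0]: parity fails, error location unknown
print(soft_decode_single_parity(llrs))  # [0, 1, 1, 0]: least reliable bit flipped
```

With hard decisions alone, a single parity check can only detect that something is wrong; the LLRs are what allow the decoder to locate and fix the error.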

Conclusion

Flash-memory controllers are the key component that ensures reliable and secure handling of flash memories. They handle an array of features designed to efficiently manage data transfers onto the flash memory, and they do more than error correction—they also offer error prevention.

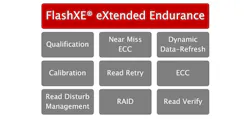

2. Shown is a block diagram of the FlashXE eXtended Endurance ecosystem.

However, these features are all engineered differently, and depending on a company’s business model and focus, your controller could be doing the bare minimum. Hyperstone calls its collection of features, mechanisms, and processes aimed at increasing the endurance, and therefore the reliability, of flash memories the FlashXE eXtended Endurance ecosystem (Fig. 2).

Lena Harman is Marketing Coordinator at Hyperstone.


Lena Harman is responsible for digital marketing, online strategy, and the optimization of online platforms at Hyperstone. She holds a double degree in Communications and International Studies from the University of Technology, Sydney.
