Robots used to be hidden in assembly lines or research labs. Now you can find them in supermarkets, warehouses, and rolling down the sidewalk to deliver treats. They’re also taking on dangerous chores like fighting fires. Some are controlled remotely while others are fully autonomous. New sensors, improved motors, and machine-learning (ML) support are all helping to improve what robots can do and how closely they can interact with people.

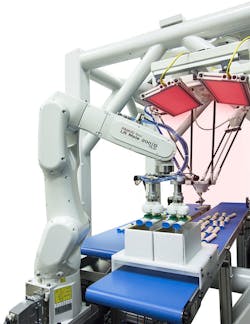

Robots are appearing more frequently in assembly lines, with robotic arms and systems from companies like FANUC (Fig. 1) providing a more flexible and functional setup. Advanced programming and improved safety around people allow assembly lines to be reconfigured quickly.

1. All sorts of robots, like these from FANUC, are providing more flexible assembly lines and working in closer proximity with people.

These days, robots aren’t always sitting in fixed locations. Autonomous mobile robots like those from KUKA (Fig. 2) move throughout factories, often equipped with robotic arms or other actuators to do more than just roll around. Like many mobile robots, including self-driving cars, these moving platforms include a range of sensors to determine their position as well as objects in their surroundings. Technologies ranging from radar and LiDAR to thermal cameras, 3D cameras, and ultrasonics are being used.

2. KUKA can combine mobile robotic platforms with robotic arms to support mobile pick-and-place operations.

Secure Measures

Keeping autonomous robots in a self-contained area like a warehouse can simplify the use of robots, since they would only bump into other robots. Allowing a person to move into that space could be hazardous unless the robots were equipped with more sensors and software. Amazon is using a slightly different approach by having employees wear a special vest when moving into these more restricted areas. The vest provides location information about a person so that robots know to steer clear.
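The idea of a robot steering clear of a tagged person can be illustrated with a small sketch. This is not Amazon’s system; the function name, radii, and speed values below are hypothetical, and the example simply assumes the vest reports a 2D position in the same coordinate frame the robot uses. The robot stops inside an inner radius, runs at full speed beyond an outer radius, and scales its speed linearly in between.

```python
import math

def speed_limit(robot_xy, person_xy,
                stop_radius=2.0, slow_radius=6.0, max_speed=1.5):
    """Return an allowed speed (m/s) based on distance to a tagged person.

    All parameters are illustrative assumptions, not values from any
    real warehouse system.
    """
    distance = math.dist(robot_xy, person_xy)  # Euclidean distance in meters
    if distance <= stop_radius:
        return 0.0                 # person is too close: stop
    if distance >= slow_radius:
        return max_speed           # person is far away: no restriction
    # Linear ramp between the stop radius and the slow-down radius
    fraction = (distance - stop_radius) / (slow_radius - stop_radius)
    return max_speed * fraction

# Example: a person 4 m away limits the robot to half of its top speed
print(speed_limit((0.0, 0.0), (4.0, 0.0)))
```

A real deployment would also have to account for position error, communication latency, and several people at once, which is part of why the extra sensors and software mentioned above matter.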

Operating in a secured area allows robots to be simpler and move faster. Still, robots are being used in close quarters with people, doing jobs that tend to be monotonous. For example, Giant Food Stores are now home to a host of Marty robots (Fig. 3) that roam around the stores looking for spills. The robot has a number of obstacle-detection sensors, but its slow movement and the lack of any arms or protrusions make it a relatively safe device.

3. Marty is a slow-moving robot on the lookout for spills in Giant Food stores.

Similar-sized robots are being developed to handle inventory chores. Robots that will stock shelves are generally limited to warehouses at this point, but they could be used while a store is closed and only a limited number of humans are around. People could also wear something like Amazon’s vest and have additional controls to make it safer to work around robots.

Brain Corp.’s line of robotic solutions includes some giant Roombas designed to handle industrial-sized cleaning chores in places like supermarkets (Fig. 4). Unlike tiny vacuum-cleaning robots, these floor scrubbers don’t bump into walls and furniture. Instead, they are designed to detect local obstacles in order to perform their function with limited or no human intervention. They typically operate when the store or area is closed so that there are few people to get in the way.

4. Brain Corp. offers a number of robots that are industrial-sized Roombas for handling large cleaning chores.

Robots that Deliver

Delivery robots approximately the size of shopping carts are taking to the sidewalks. Robots like Postmates’ Serve (Fig. 5) are designed to handle local deliveries. Postmates already uses people driving cars to handle deliveries, but many destinations are within walking distance, which could make Serve a fit for such cases.

5. Postmates’ Serve delivery robot is controlled by NVIDIA’s Jetson AGX Xavier.

Under the hood of Serve is NVIDIA’s Jetson AGX Xavier. The robot has a laser range finder on top along with other sensors spaced around its body. Its large wheels are designed to go over curbs. Postmates has already applied for a commercial permit in San Francisco, and it won’t be long before this type of robot becomes more common.

Taking on Dangerous Situations

The terrible fire at Notre Dame Cathedral saw a number of robots in use, including Colossus (Fig. 6) from Shark Robotics. It has a 360-degree HD camera, thermal cameras, and NRBC sensors for detecting hazards. Colossus is designed for remote control as well as autonomous operation for hours. It can be equipped with a fire hose, allowing it to get closer to a fire than would be recommended for human firefighters.

6. Colossus, developed by Shark Robotics, was used to help fight the fire that gutted much of Notre Dame cathedral in Paris.

Other robots used to help control the fire included DJI Mavic Pro and Matrice M210 drones. These didn’t deliver water like the Colossus, but they did fly over Notre Dame cathedral to provide information about the status of the fire. These stock drones, which utilized conventional cameras, were remotely piloted. Drones equipped with thermal cameras are being used in similar situations. Firefighters actually had to borrow the drones, since they didn’t have any of their own at that point.

One challenge with drones is that geofencing is being used more often. DJI’s drones take geofences into account. The area around the cathedral was fenced, so DJI cooperated to disable that feature, allowing the drones to be used in this instance.
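At its core, a geofence check asks whether a position falls inside a restricted region. The sketch below uses the standard ray-casting point-in-polygon test on flat 2D coordinates. It is an illustration only, not DJI’s implementation: the function name and the square fence are hypothetical, and a real system would work with geographic coordinates, altitude limits, and a maintained database of restricted zones.

```python
def inside_geofence(point, polygon):
    """Return True if point (x, y) lies inside polygon (list of (x, y) vertices).

    Ray casting: count how many polygon edges a horizontal ray from the
    point crosses. An odd count means the point is inside.
    """
    x, y = point
    inside = False
    n = len(polygon)
    for i in range(n):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % n]   # wrap around to close the polygon
        # Does this edge straddle the horizontal line through the point?
        if (y1 > y) != (y2 > y):
            # x coordinate where the edge crosses that horizontal line
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside

# Hypothetical square no-fly zone, 4 units on a side
fence = [(0, 0), (4, 0), (4, 4), (0, 4)]
print(inside_geofence((2, 2), fence))   # position inside the fence
print(inside_geofence((5, 2), fence))   # position outside the fence
```

Disabling a fence for an emergency, as happened here, amounts to bypassing a check like this for a particular zone.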

Sensor, ML, and robot platforms are making it possible to put robots in close proximity with people. Behind the scenes, tools like NVIDIA’s Isaac Sim (Fig. 7) help develop and test those robots. This type of simulation environment is also being used for self-driving car development, providing a much safer and cheaper way to test systems.

7. NVIDIA’s Isaac Sim can support hardware-in-the-loop (HIL) robotic testing.

One of the challenges with these simulation environments is incorporating the sensors so that their characteristics match those of the real-world systems. Another challenge is handling a large number of robots.
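To give a sense of what matching sensor characteristics involves, here is a minimal sketch of a simulated range sensor. It does not use Isaac Sim or any of its APIs. The function name and every parameter (noise level, maximum range, dropout probability) are assumptions chosen for illustration. The point is that a simulator has to add the imperfections of a real sensor to the perfect distance it knows from the virtual world.

```python
import random

def simulate_range_reading(true_distance, rng, noise_std=0.02,
                           max_range=10.0, dropout_prob=0.0):
    """Turn an ideal distance (m) into a more realistic sensor reading.

    Returns None for a dropped reading. All parameter values are
    hypothetical and would need to be fitted to a real sensor.
    """
    if rng.random() < dropout_prob:
        return None                       # sensor returned nothing this cycle
    if true_distance > max_range:
        return max_range                  # target beyond range: saturate
    noisy = true_distance + rng.gauss(0.0, noise_std)  # additive Gaussian noise
    return min(max(noisy, 0.0), max_range)             # clamp to valid range

# Seeded generator so a simulation run can be repeated exactly
rng = random.Random(42)
readings = [simulate_range_reading(3.0, rng) for _ in range(5)]
print(readings)
```

Real sensors have more complicated behavior, such as noise that varies with distance or surface reflectivity, which is where much of the modeling effort goes.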

Such systems are becoming more sophisticated, too, so that they can be part of hardware-in-the-loop testing, where a real robot is being utilized with these same tools. Likewise, the simulators also support running the robotic software on a real platform like the Jetson Nano, while sensors operate in the simulated environment.

About the Author

William G. Wong

Senior Content Director - Electronic Design and Microwaves & RF

I am Editor of Electronic Design focusing on embedded, software, and systems. As Senior Content Director, I also manage Microwaves & RF and I work with a great team of editors to provide engineers, programmers, developers and technical managers with interesting and useful articles and videos on a regular basis. Check out our free newsletters to see the latest content.

I earned a Bachelor of Electrical Engineering at the Georgia Institute of Technology and a Master’s in Computer Science from Rutgers University. I still do a bit of programming using everything from C and C++ to Rust and Ada/SPARK. I do a bit of PHP programming for Drupal websites. I have posted a few Drupal modules.

I still get hands-on with software and electronic hardware. Some of this can be found on our Kit Close-Up video series. You can also see me on many of our TechXchange Talk videos. I am interested in a range of projects from robotics to artificial intelligence.