Touch technology has come a long way from the first pushbutton, but Qeexo looks to push the technology past the multi-touch support available on many smartphones and other mobile devices. It looks to unleash the selective power of fingertips, knuckles, or nails.

I spoke with Sang Won Lee, co-founder of Qeexo, about its FingerSense technology and where Qeexo and touch technology are headed.

Wong: How has touchscreen technology evolved over the last 10 years?

Lee: The biggest advance in touch-interaction techniques over the last decade has been the evolution to multi-touch in 2007 with the introduction of the iPhone. In an incredibly brief timeframe, almost all mobile devices supported multi-touch. However, there hasn’t really been much evolution since then.

Despite device manufacturers continuing to invest heavily in improving UX, the touch experience itself has improved little. We believe we’re entering a new phase of touch called rich-touch, which will provide a much richer experience to users as they touch and interact with the mobile screen.

Wong: Is there still a lot of room for improvement in touch UX?

Lee: Absolutely. The current state of touch is not the pinnacle of the touch experience. Mobile devices can do far more for users, and the touch experience can be so much richer than it is currently.

Wong: Why is today's touch experience not enough for consumers?

Lee: Many features, such as copy and paste, screen capture, image cropping, and note taking, continue to suffer from poor UX and rely on cumbersome input methods. There is an opportunity to overcome these and other UX limitations by adding powerful yet simple new features to mobile devices, such as what the right-click and scroll wheel brought to the mouse. Adding an extra dimension to the single-button mouse made tasks and actions much easier and greatly improved the overall user experience of PCs. Current touchscreen devices act like the single-button mouse—equipped to handle only one type of input: click. Mobile handset and component manufacturers understand this and are looking for ways to enhance the touch experience.

Wong: Tell me more about Qeexo and what you’re doing with FingerSense?

Lee: Qeexo was spun out of Carnegie Mellon University in late 2012. Our goal was—and still is—to elevate the user experience of touch-enabled devices. We understand how much room there is to advance touch interaction, and FingerSense is the first solution from us aimed at accomplishing this goal.

Wong: What is FingerSense accomplishing that other mobile technology isn’t today?

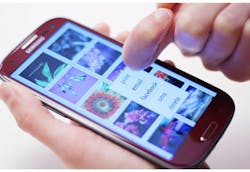

Lee: FingerSense adds intelligence to touchscreen devices by letting these devices know not only where a user is touching a screen, but also what the user is using to touch the screen. FingerSense distinguishes between touch input from the fingertip, the knuckle, a nail, or passive stylus (Fig. 1). With multiple input options, FingerSense improves device functionality while enhancing simplicity, thus advancing the user experience on mobile devices.

FingerSense unleashes new features and functions of the mobile phone and tablet by assigning different device actions to the fingertip, knuckle, nail, or stylus. For example, rather than a press-and-hold function to copy and paste text, you can simply tap with your knuckle to achieve the same result. Or rather than press and hold, then select all, then cut, then paste (a three-step process to grab a piece of text or a full image), FingerSense allows you to use your knuckle to circle an area of the screen, including text or a photo, to cut and paste in just two steps. As a result, mobile-device users can far more easily cut, copy, paste, erase, capture, whiteboard, annotate, and accomplish other tasks on the screen.
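To make the idea of assigning different actions to different touch types concrete, here is a minimal sketch in Python. It is only an illustration: the `TouchType` names, the action strings, and the `action_for` helper are hypothetical and are not taken from FingerSense or any Qeexo API.

```python
from enum import Enum


class TouchType(Enum):
    """The four input types named in the interview."""
    FINGERTIP = "fingertip"
    KNUCKLE = "knuckle"
    NAIL = "nail"
    STYLUS = "stylus"


# Hypothetical mapping from touch type to a device action.
# The action names are invented for illustration only.
ACTIONS = {
    TouchType.FINGERTIP: "select",
    TouchType.KNUCKLE: "copy_region",
    TouchType.NAIL: "erase",
    TouchType.STYLUS: "annotate",
}


def action_for(touch_type: TouchType) -> str:
    """Look up the action for a touch type, defaulting to a plain select."""
    return ACTIONS.get(touch_type, "select")
```

A lookup table like this keeps the classification of the touch separate from what the device does with it, so an application could remap a knuckle tap without touching the sensing code.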

Wong: How does your technology work?

Lee: The technology uses acoustic sensing and real-time classification to allow touchscreens to know not only where a user is touching, but also how, whether it’s with the fingertip, knuckle, or nail (Fig. 2). The technology is currently being developed for inclusion in next year’s smartphone models to bring traditional “right-click” style functions to smartphones, among many other features.
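The interview does not describe the classifier itself. As a rough illustration of what real-time classification of a touch from acoustic features could look like, the sketch below uses a nearest-centroid rule. Everything in it is an assumption made for the example: the two features, the centroid values, and the `classify_touch` function are hypothetical and do not represent Qeexo's method.

```python
import math

# Hypothetical per-class centroids over two made-up acoustic features:
# (normalized low-frequency energy, normalized high-frequency energy).
# The values are invented for illustration, not measured data.
CENTROIDS = {
    "fingertip": (0.8, 0.1),
    "knuckle": (0.5, 0.5),
    "nail": (0.1, 0.9),
}


def classify_touch(features):
    """Return the label whose centroid is nearest (Euclidean) to the features."""
    return min(CENTROIDS, key=lambda label: math.dist(features, CENTROIDS[label]))
```

In this toy setup, a feature vector such as `(0.75, 0.15)` falls closest to the fingertip centroid, while `(0.15, 0.85)` falls closest to the nail centroid. A production system would presumably use richer features and a trained model.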

Wong: Does FingerSense run on any mobile phone?

Lee: FingerSense is software-only and runs on most of today’s mobile devices without the cost or space needed for additional hardware. Because customers do not need to add any hardware to the device to support it, FingerSense can be implemented quickly. It runs today on multiple operating systems, including Android, iOS, and Windows.

Wong: How does your technology benefit consumers?

Lee: Many features, such as copy and paste, screen capture, image cropping, and note taking, continue to suffer from poor UX and rely on cumbersome input methods. As an example, capturing the iPhone screen requires two hands to press the power and Home buttons simultaneously, and even then, the full screen is captured rather than just the desired section. These functions can be simplified with intelligent software and rich-touch functionality.

FingerSense moves beyond multi-touch and just counting the number of fingers by letting users perform different actions with their fingertip, nail, knuckle (Fig. 3), or passive stylus. Thus, mobile devices become more powerful and easier to use. Previously cumbersome tasks become easy and entirely new device functions are made possible with FingerSense.

Wong: Why should device manufacturers care?

Lee: Manufacturers are looking for software innovation that will radically improve the way devices are used, while adding power and simplicity simultaneously. FingerSense lets device manufacturers dramatically elevate the user experience of their devices. It gives them a way to add an extra input dimension like a knuckle, without the need for an expensive accessory or stylus. In addition, it is software-only and as a result, there is no cost associated with adding additional components.

Wong: What has been the response of customers so far?

Lee: Customers and partners are incredibly excited about our technology. They believe, like us, that the market is in need of major innovation in the touch space, and they feel our solution provides considerable value to their products.

Wong: What is your long-term vision for FingerSense?

Lee: We believe FingerSense can be the interaction platform of the future. The most natural place to launch FingerSense today is on a mobile device, but we think FingerSense will soon go from on-device to on-body, implemented in things such as wearables, and finally to on-world, where you’ll see FingerSense on tables, walls, and any platform where a touch surface makes sense.

Sang Won Lee, CEO, co-founded Qeexo in 2012 after spending more than eight years in the mobile industry. He previously held roles at Samsung, SK Telecom, and HTC. Sang received a degree in electrical engineering from POSTECH, and an MBA from UC Berkeley’s Haas School of Business.

About the Author

William G. Wong

Senior Content Director - Electronic Design and Microwaves & RF

I am Editor of Electronic Design focusing on embedded, software, and systems. As Senior Content Director, I also manage Microwaves & RF and I work with a great team of editors to provide engineers, programmers, developers and technical managers with interesting and useful articles and videos on a regular basis. Check out our free newsletters to see the latest content.

I earned a Bachelor of Electrical Engineering at the Georgia Institute of Technology and a Masters in Computer Science from Rutgers University. I still do a bit of programming using everything from C and C++ to Rust and Ada/SPARK. I do a bit of PHP programming for Drupal websites. I have posted a few Drupal modules.

I still get hands-on with software and electronic hardware. Some of this can be found in our Kit Close-Up video series. You can also see me in many of our TechXchange Talk videos. I am interested in a range of projects, from robotics to artificial intelligence.