How Much Overengineering Do You Do?

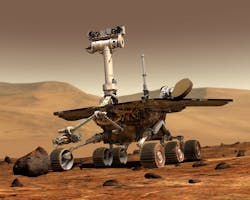

NASA’s Opportunity rover (see figure) landed on Mars in January 2004 along with its sibling, Spirit. It was going to be a short romp, but Opportunity has greatly exceeded its project lifetime—although the latest dust storm on Mars may finally cut this short. Part of the reason why Opportunity’s life lasted longer was due to the overengineering done by NASA scientists, which was necessary because they were dealing with the unknown. Mars is generally well-known, but the need for autonomous operation was dictated by the distance between Earth and Mars.

Designing and building devices and systems that exceed base specifications is often desirable and sometimes by design. For example, the “headroom” in terms of computational power and memory is often increased for devices that may have a long lifetime with the possibility of updates in the future. The additional resources allow changes to be made that typically require these resources. This approach was less common in the past with electronic devices, as most were isolated. Not so these days with the Internet of Things (IoT), where connectivity and over-the-air (OTA) updates are possible.

NASA’s Opportunity rover has lasted well past its planned voyage that started in 2004.

Cost Considerations

The problem is that overengineering comes with costs on both the design and implementation sides. An overengineered design can take longer to create because it tends to be more extensive, and additional resources typically cost more, both initially and over time. These costs may also overshadow the actual purpose of the design: a low-cost wireless sensor would be impractical if it weighed a ton.

Cost isn’t limited to the physical aspects of a design. Consider an application that exclusively uses 8-bit data. Would a 32-bit microcontroller be a desirable platform for implementation? Storing each 8-bit value in its own 32-bit word wastes three-quarters of that storage, while packing several values into one word adds the overhead of packing and unpacking them. Of course, the details will be application-specific; one application of this nature may be more efficient on an 8-bit micro while another would work better on a 32-bit micro.

For example, convolutional neural networks (CNNs) are popular now that machine learning is being applied to a wide variety of problems. CNNs often use 8-bit or even narrower data, but they also involve thousands of computations per iteration. Efficient hardware designs typically move around lots of data even though the individual items are small, making wider buses and registers preferable. An overengineered design might go wider still. However, if that yields minimal improvement in the calculations while significantly increasing costs in terms of power, performance, and so on, then it’s not necessarily a good idea.

Reliability and Security

The idea of overengineering covers all aspects of design. Reliability is critical in systems like aircraft, where failures can be catastrophic. High standards are obviously part of a design specification, but engineers will typically overengineer “just to be safe.” Self-driving cars and semi-autonomous vehicles are making such design requirements more common.

Security is finally becoming a more common requirement, especially for IoT devices, including self-driving cars. Overengineering can occur here too, although security is more often neglected than overbuilt. Part of the challenge is deciding what constitutes overengineering, since the effects and costs of security measures are harder to determine.

For example, what does increasing the number of bits in an encryption key cost in terms of performance and power consumption? What would the payoff be in terms of a higher level of security? Likewise, is the addition of another security layer, such as using a hypervisor, going to be a benefit or a useless overindulgence?
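As a rough worked example using AES (a cipher chosen here only for illustration; the text above doesn’t name one): AES-128 uses 10 rounds and AES-256 uses 14. Treating per-block work as roughly proportional to the round count, and ignoring key expansion and implementation details such as hardware acceleration, the longer key costs about 40% more computation per block while enlarging the brute-force key space enormously:

```latex
\frac{\text{work}_{\text{AES-256}}}{\text{work}_{\text{AES-128}}}
  \approx \frac{N_r(256)}{N_r(128)} = \frac{14}{10} = 1.4,
\qquad
\frac{|\mathcal{K}_{256}|}{|\mathcal{K}_{128}|} = \frac{2^{256}}{2^{128}} = 2^{128}
```

Whether that payoff matters depends on the threat model; if 128-bit keys are already out of brute-force reach for the device’s lifetime, the extra rounds may be the kind of overindulgence the question asks about.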

Things get even more complicated when considering the entire system. For example, a board designer who is more familiar with the digital side of the spectrum may specify a voltage regulator that greatly exceeds the system’s requirements, simply because they don’t know what those requirements are.

The unknown is often why designers choose SLC NAND flash memory over QLC NAND flash: they assume the former’s reliability, speed, and longevity are necessary for their application without knowing what the real requirements are. Choosing the cheaper option is not good if devices get into the field and fail early. The flip side is that devices built with the more robust option could be long-lived like Opportunity, but the added cost could cut into profits.
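Part of the reliability gap comes from how many charge levels each cell must hold apart. SLC stores 1 bit per cell and QLC stores 4, so a cell storing b bits must distinguish 2^b levels. Under an idealized assumption (the same threshold-voltage window, evenly spaced levels; real devices differ), the spacing between adjacent levels shrinks accordingly:

```latex
L(b) = 2^{b}, \qquad
L_{\text{SLC}} = 2^{1} = 2, \qquad
L_{\text{QLC}} = 2^{4} = 16, \qquad
\frac{\Delta V_{\text{QLC}}}{\Delta V_{\text{SLC}}} \approx \frac{2^{1}-1}{2^{4}-1} = \frac{1}{15}
```

Tighter margins leave less tolerance for charge drift and wear, which is why the denser option tends to trade endurance for cost.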

The problem is asymmetric because each component has its own costs, lifetime, and so on. A quote attributed to the famous automotive engineer Ferdinand Porsche is “The perfect race car crosses the finish line in first place and immediately falls into pieces.”

Some devices, like cars, have major components that are easily replaceable, such as tires or fuel. Unfortunately, most systems have many components that aren’t so easily replaced, and these devices often become useless if a critical component fails. The typical IoT example is a battery-operated device that works only as long as its battery lasts. That may be 10 minutes or 10 years. Devices at either extreme can be useful, and their lifetimes are by design. For example, a device that needs to report a temperature for a few minutes after it’s thrown into a vat of molten metal contrasts with a temperature sensor that’s designed to transmit the ambient room temperature for decades.

Getting Edgy

So how close to the edge do you design?

I learned programming back when assembler was common. Assemblers still exist, but the average programmer rarely works at this level. I developed a digital camera system for small robots that used an 8-bit SX microcontroller. It was fun to pack as much functionality as possible into the limited memory available. The code that read data from the camera chip was timed so that the instructions stayed in sync with the camera data; the only time the system waited for synchronization was at the start of each line of camera data.

Eventually, the memory filled up to the point where I could debug only portions of the system, because the debugger required two bytes of main memory. That was enough for a jump table entry, so I could debug one set of code at a time by using conditional assembly to build the test systems. The final version used those two bytes. Luckily, the functionality was such that independent testing was possible.

Engineers and programmers are sometimes limited by these types of boundary conditions, but that’s the exception rather than the rule, especially these days when so many options are available. Careful system design is often replaced by overexuberant overengineering. These are obviously extremes, but they’re a reminder that developers need to be aware of the impact of the choices they make while designing a system. Those choices range from the programming language and processing platform to the type of concrete to use, or whether plastic or paper better serves the long-term viability of a solution.

Engineers are often prodded to think “outside the box.” Such a philosophy usually leads to new and useful approaches to problems. However, it shouldn’t lead to unnecessary and undesirable overengineered solutions.

About the Author

William G. Wong

Senior Content Director - Electronic Design and Microwaves & RF

I am Editor of Electronic Design focusing on embedded, software, and systems. As Senior Content Director, I also manage Microwaves & RF and I work with a great team of editors to provide engineers, programmers, developers and technical managers with interesting and useful articles and videos on a regular basis. Check out our free newsletters to see the latest content.

You can send press releases for new products for possible coverage on the website. I am also interested in receiving contributed articles for publishing on our website. Use our template and send it to me along with a signed release form.

Check out my blog, AltEmbedded on Electronic Design, as well as my latest articles on this site.

You can visit my social media via these links:

- AltEmbedded on Electronic Design

- Bill Wong on Facebook

- @AltEmbedded on Twitter

- Bill Wong on LinkedIn

I earned a Bachelor of Electrical Engineering at the Georgia Institute of Technology and a Masters in Computer Science from Rutgers University. I still do a bit of programming using everything from C and C++ to Rust and Ada/SPARK. I do a bit of PHP programming for Drupal websites. I have posted a few Drupal modules.

I still get hands-on with software and electronic hardware. Some of this can be found in our Kit Close-Up video series. You can also see me in many of our TechXchange Talk videos. I am interested in a range of projects from robotics to artificial intelligence.
