Will Moore’s and Metcalfe’s Laws Doom the IoT to the Dotcom Fate?

In 2017, Wired magazine heralded the end of the Internet of Things (IoT). The prediction was one of several tech expectations written by the Wired staff for that year. It turned out that the prediction was not really about the demise of the IoT industry, but rather about the demise of the term itself.

Still, the misleading prediction made me wonder whether the IoT would meet the same fate as the great tech bubble of the early 2000s, namely, the Dotcom burst. There are striking similarities, especially in the two guiding tech principles that many pundits used to explain the rise and (in part) the fall of the Dotcom companies: the laws of Moore and Metcalfe.

In the late 1990s, tech-savvy Dotcom proponents promoted two main reasons for the rapid growth of the industry: continued innovation in semiconductor technology, and rapidly growing network valuations that would lead to increased revenue potential. At the time, it certainly appeared that the key market drivers were the promise of Moore’s Law (cheaper, faster, and more powerful chips) combined with Metcalfe’s Law about the inherent value of an ever-increasing network.

The bursting of the Dotcom Bubble brought the interpretation of both laws into question. Fortunately, today’s IoT depends less on Moore’s Law and is more cautious in the growth and value estimates it draws from Metcalfe’s Law. To see what I mean, let’s start with Moore’s Law.

Less of Moore?

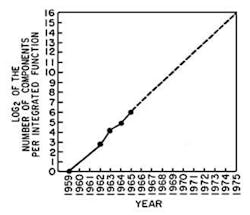

In 1965, Intel co-founder Gordon Moore used four data points to empirically predict that the number of transistors (or functions) per chip would double every year (Fig. 1). These data points yielded a straight line when plotted on a logarithmic-linear chart. But as Wally Rhines, the CEO of Mentor Graphics (now Mentor, a Siemens Business), has pointed out numerous times, Moore’s empirical law is really part of a more comprehensive law known as the “Learning Curve.”

1. A replication of the original data behind Moore’s Law.
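To make the straight-line claim concrete, here is a minimal Python sketch of a count that doubles every year. The starting value of 64 and the helper name `projected_count` are assumptions chosen purely for illustration; they are not Moore's original data points. The point is only that a quantity that doubles at a fixed interval gains a constant amount in its logarithm each period, which is why it plots as a straight line on a logarithmic-linear chart.

```python
import math


def projected_count(start_count, years, doubling_period_years=1.0):
    """Return the count after `years`, assuming it doubles every period."""
    return start_count * 2 ** (years / doubling_period_years)


# Hypothetical starting count, chosen only for illustration.
start = 64
counts = [projected_count(start, year) for year in range(5)]

# Take log2 of each count; a doubling per year adds exactly 1 each step.
logs = [math.log2(count) for count in counts]
steps = [later - earlier for earlier, later in zip(logs, logs[1:])]

print(counts)  # the raw counts grow geometrically
print(steps)   # the log2 increments are constant, i.e., a straight line
```

Changing `doubling_period_years` only changes the slope of that line, not its shape.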

“The Learning Curve has been around for centuries,” explained Rhines at a recent Semi Pacific NW event. “It is a log-log plot of the cost per unit produced of almost anything made in history over time.” Put another way, it represents an increase in learning (measured in cost/volume) over the increase of experience (time).

Most engineers will remember—from their undergraduate engineering economics courses—that cost is the great normalizer for comparing engineering projects. In this way, the Learning Curve and its more specialized case of Moore’s Law can be seen roughly as a measure of innovation.

But Moore’s Law has faced serious challenges beyond 28-nm chip designs, mainly because it’s proving economically difficult to keep shrinking die sizes. Fortunately, this slowdown in Moore’s Law hasn’t yet affected IoT technologies, which rely on much older, established process nodes (e.g., 90 nm) for their chips. In this way, you might say that IoT chips are leaving Moore’s Law and returning to the Learning Curve.

Metcalfe’s Too-High Expectations?

Now let’s consider the other part of this Dotcom (and IoT) growth equation: Metcalfe’s Law. Several years after Moore’s original prediction, another technology pioneer—3Com co-founder Bob Metcalfe—stated that the value of a network grows with the square of the number of network nodes (or devices, or applications, or users, etc.), while the costs follow a more or less linear function. In other words, the value of the network increases as you add more people and applications to the network. Metcalfe’s Law attempts to quantify this increase in value as “n squared.”

Like Moore’s Law, Metcalfe’s Law is an empirical observation rather than a true law. The problem with Metcalfe’s Law first appeared during the Dotcom burst, when the law predicted growth that far outpaced the reality seen by network owners and investors.

A much more modest estimation of value was suggested years ago by Bob Briscoe, Andrew Odlyzko and Benjamin Tilly: “In our view, much of the difference between the artificial values of the dot-com era and the genuine value created by the Internet can be explained by the difference between the Metcalfe-fueled optimism of n² and the more sober reality of n log(n).”

As the number of network nodes grows, n² climbs at an extraordinary rate, one that would be almost impossible to maintain in the real world (Fig. 2).

2. Growth rates for n² vs. n log(n). For the purposes of this blog, n equals the number of network nodes.
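The gap between the two estimates can be checked with a few lines of Python. This is a rough sketch, not a valuation model: the function names are hypothetical, the natural logarithm is an assumption (the quoted formula does not specify a base, and a different base only rescales the result by a constant), and the node counts are arbitrary examples.

```python
import math


def metcalfe_value(n):
    """Network value estimate proportional to n squared (Metcalfe)."""
    return n ** 2


def nlogn_value(n):
    """More modest estimate proportional to n * log(n).

    Natural log is assumed here; the base is not given in the source.
    """
    return n * math.log(n)


# Arbitrary example node counts, chosen only for illustration.
node_counts = (10, 1_000, 1_000_000)

# The ratio simplifies to n / log(n), so it keeps growing with n.
ratios = [metcalfe_value(n) / nlogn_value(n) for n in node_counts]

for n, ratio in zip(node_counts, ratios):
    print(f"n = {n:>9,}: n^2 is about {ratio:,.0f} times n log(n)")
```

The widening ratio is the point: the larger the network, the more the n² estimate overstates value relative to the n log(n) estimate.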

The key to applying Metcalfe’s Law is to properly estimate the value of providing connectivity. Increased connectivity will increase the value of the IoT sensor-connected networks up to a certain point. Beyond that point, the cost of growing the network will overwhelm its value. When that happens, another famous law will take over, namely, the Law of Diminishing Returns.

Dotcom Redux?

Let’s return to today’s IoT industry. Intel, Arm, and others have forecast that 50 billion IoT devices will be connected by 2020. That growth will lead to decreasing costs per unit for many IoT devices. Sound familiar? This is the innovation phenomenon described by the Learning Curve (and Moore’s Law). It ensures continued innovation (increased performance and decreasing power consumption) at lower cost for future IoT devices.

Since, by definition, all of these devices will be connected, IoT growth also adheres to Metcalfe’s Law, but only up to a point. The use of gateway devices and edge computing may well bring the proper balance to Metcalfe’s overzealous prediction for end-user data points and, in the process, lessen the likelihood of a repeat of the Dotcom bubble for the IoT.

The relationship between Moore’s Law for the semiconductor space and Metcalfe’s Law in the networking communication world has been used in the past to explain the phenomenal rise of the Internet and the Dotcom industry.

The last few years have seen renewed interest in the intersection of these two empirical principles, thanks to the rise of the IoT. Remembering the limitations of both laws, but especially Metcalfe’s, will lessen the likelihood of a repeat of history’s greatest tech bubble to date.

About the Author

John Blyler

John Blyler has more than 18 years of technical experience in systems engineering and program management. His systems engineering (hardware and software) background encompasses industrial (GenRad Corp, Wacker Siltronics, Westinghouse, Grumman and Rockwell Intern.), government R&D (DoD-China Lake) and university (Idaho State Univ, Portland State Univ, and Oregon State Univ) environments. John is currently the senior technology editor for Penton Media’s Wireless Systems Design (WSD) magazine. He is also the executive editor for the WSD Update e-Newsletter.

Mr. Blyler has co-authored an IEEE Press (1998) book on computer systems engineering entitled: "What's Size Got To Do With It: Understanding Computer Systems." Until just recently, he wrote a regular column for the IEEE I&M magazine. John continues to develop and teach web-based, graduate-level systems engineering courses on a part-time basis for Portland State University.

John holds a BS in Engineering Physics from Oregon State University (1982) and an MS in Electronic Engineering from California State University, Northridge (1991).
