What’s the Difference Between CompactPCI Serial and OpenVPX?

As legacy computing projects upgrade from VME and CompactPCI systems, or as new projects arise, an important choice is presented: “which direction do we go?” For open standard embedded computing, the technologies have advanced from bus-based platforms to high-speed serial architectures.

For VME, created by VITA, the follow-on generation architecture is OpenVPX using a completely different connector and routing system. For CompactPCI, created by PICMG, the follow-on generation architecture is CompactPCI Serial (cPCI Serial). It also has a new connector and routing approach. So, whether a user’s legacy platform was VME or CompactPCI, it’s completely open going forward in terms of selecting the next-generation architecture. For example, a previous VME user can select cPCI Serial for their upgrade path.

Similarities

So, what’s the difference between cPCI Serial and OpenVPX? Let’s start with their similarities. They both utilize the 3U and 6U × 160-mm-deep Eurocard form factor for the modules. They’re both excellent high-performance architectures available in air- or conduction-cooled formats. They utilize a 4HP (0.8 in.) slot pitch, but it’s far more common for OpenVPX to use 5HP (1.0 in.) pitch even in air-cooled systems. For conduction-cooled modules, both specifications usually incorporate the 1.0-in. pitch spacing.
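The pitch figures above follow from the Eurocard convention that 1 HP equals 0.2 in. (5.08 mm). A minimal sketch of that arithmetic follows; the function names are illustrative, and the slot-count helper is only an upper bound because it ignores space taken by power supplies, side walls, or other hardware.

```python
# 1 HP (horizontal pitch) is 0.2 in., i.e. 5.08 mm.
INCH_PER_HP = 0.2
MM_PER_INCH = 25.4


def pitch_inches(hp: int) -> float:
    """Convert a width in HP to inches."""
    return hp * INCH_PER_HP


def pitch_mm(hp: int) -> float:
    """Convert a width in HP to millimeters."""
    return pitch_inches(hp) * MM_PER_INCH


def max_slots(card_cage_hp: int, slot_hp: int) -> int:
    """Upper bound on slots across a card cage (assumes the full width is usable)."""
    return card_cage_hp // slot_hp


for hp in (4, 5):
    print(f"{hp}HP = {pitch_inches(hp):.1f} in. = {pitch_mm(hp):.2f} mm")

for width in (42, 84):
    print(f"{width}HP card cage: up to {max_slots(width, 4)} slots at 4HP, "
          f"{max_slots(width, 5)} at 5HP")
```

The wider 5HP pitch trades slot count for room on each module, which matters most for heatsinks and conduction-cooling frames.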

The two standards have routing provision for high-speed serial traffic such as PCIe Gen3 or 10GbE/40GbE (40GbE isn’t yet specifically defined in these specifications). There are also control planes, expansion planes, utility planes, and provisions for SAS/SATA, clocking, and more. Therefore, both architectures can be utilized for a wide range of high-performance data processing, signal conversion, digitizing, and multicore communication across a backplane-based system. Furthermore, both architectures can be employed in commercial/industrial-grade as well as rugged/Mil-grade applications.

The differences are primarily the high-performance serial connector used, interoperability, slot availability and I/O, power options, ecosystem availability, and price. OpenVPX utilizes the MultiGig RT series connector, while cPCI Serial opts for the AirMax VS series.

In addition, VPX was initially less defined (under VITA 46), leading to some interoperability issues. The VITA group later created OpenVPX (under VITA 65) with defined pinout libraries called “profiles” to help overcome the concerns. cPCI Serial defines a simple topology structure for different slot configurations, ensuring interoperability. The advantage of the OpenVPX approach is there’s more flexibility in routing configurations. The potential downside is the complexity in maintaining interoperability between slot, module, and backplane pinout profiles.

Rugged Versions

Where VME was applied in a wide range of markets, OpenVPX is primarily used in defense applications. This is mainly a price issue, as the industrial, medical, and several other markets are more price-sensitive. Because cPCI Serial is designed to be relatively low cost for its level of performance, the architecture is used in markets that include, but aren’t limited to, industrial automation, railway, communications, medical, and defense. cPCI Serial is beginning to see increased usage in defense applications. There is a wealth of ruggedized and conduction-cooled options and even a RAD-hard variant called cPCI Serial Space.

A board vendor and a backplane/chassis vendor both recently entered the North America-based market, and a large U.S. defense program recently selected cPCI Serial. OpenVPX is highly successful in Mil/Aero applications and its ecosystem in those programs is diverse.

Multicore Processing

Both OpenVPX and cPCI Serial can provide multicore, multiprocessor communication across the slots. OpenVPX has the advantage of having very-high-power options (up to 768 W theoretically), allowing for the use of fast (and hot) processors. 3U cPCI Serial has up to 80 W of power for each slot and 171 W for the 6U version. However, a double-wide module (8HP) or greater can be used to leverage more pins for higher power requirements.

The power-rail options for 3U OpenVPX are +3.3 V, +5 V, +12 V, −12 V, 3.3 V AUX, and 48 V. They provide the advantage of flexibility, but the disadvantage of having to provide PSUs that support multiple rails. This significantly increases the costs, especially for pluggable units. Conversely, 3U cPCI Serial only uses 12 V (with a +5-V standby option). The approach is less versatile, but it’s cost-effective and simple. The 6U version also allows the use of −48 V.
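The per-slot limits quoted above can be turned into a quick budget check. The sketch below assumes all slot power is drawn from the 12-V rail, and the module wattages in the example are hypothetical values chosen only for illustration.

```python
# Per-slot power limits quoted in the text for cPCI Serial (single-width modules).
SLOT_LIMIT_W = {"cpci_serial_3u": 80.0, "cpci_serial_6u": 171.0}
RAIL_V = 12.0  # assumption: all slot power comes from the 12-V rail


def slot_current_a(power_w: float, rail_v: float = RAIL_V) -> float:
    """Current a slot draws from the rail at the given power."""
    return power_w / rail_v


def over_budget(loads_w: list[float], limit_w: float) -> list[int]:
    """Return 1-based positions of modules whose load exceeds the slot limit."""
    return [i for i, w in enumerate(loads_w, start=1) if w > limit_w]


for name, limit in SLOT_LIMIT_W.items():
    print(f"{name}: {limit:.0f} W -> {slot_current_a(limit):.2f} A at {RAIL_V:.0f} V")

# Hypothetical module loads in watts, not taken from any datasheet.
example_loads = [35.0, 95.0, 80.0]
print("Modules over the 3U limit:", over_budget(example_loads, SLOT_LIMIT_W["cpci_serial_3u"]))
```

A module flagged here would need a wider (8HP or greater) format, as noted earlier, or a different architecture.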

Another cost advantage of cPCI Serial is that its high-performance serial connector costs much less, despite offering near apples-to-apples performance. Overall, most cPCI Serial systems are expected to cost as little as half as much as OpenVPX. Ethernet is the primary interface for multiprocessing in cPCI Serial, and the P6 connector is dedicated to a (typical) mesh interface.

I/O Availability, Slots, and Hot Swap

The cPCI Serial topology is primarily a star configuration (one hub going to each payload slot) for the fat pipes; a full x4 PCIe interface would be limited to nine slots (one hub and eight payload). A full mesh (all slots directly interconnected) is also possible in smaller paths. Moreover, it’s possible to have, for example, a 17-slot system/backplane, where slots 2-9 have no SATA and slots 10-17 have SATA only. Such types of configurations could be used for storage racks (8x Hard Disk Drives), but they’re not defined in the specification.
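To make the star and mesh descriptions concrete, the sketch below enumerates the point-to-point links in each topology for a nine-slot backplane. Placing the hub in slot 1 is an assumption made for illustration; the functions model only connectivity, not lane widths or pin assignments.

```python
from itertools import combinations


def star_links(hub: int, payload: list[int]) -> list[tuple[int, int]]:
    """One link from the hub slot to each payload slot."""
    return [(hub, p) for p in payload]


def mesh_links(slots: list[int]) -> list[tuple[int, int]]:
    """One link between every pair of slots (full mesh)."""
    return list(combinations(slots, 2))


slots = list(range(1, 10))          # nine slots, numbered 1 through 9
star = star_links(1, slots[1:])     # assumption: hub sits in slot 1
mesh = mesh_links(slots)

print(f"Star: {len(star)} links")
print(f"Full mesh: {len(mesh)} links")
```

The mesh needs n(n-1)/2 links against n-1 for the star, which is why a full mesh is practical only with narrower paths per link.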

Figure 1 shows an example of a 5-slot 3U cPCI Serial chassis. As the slot counts are typically lower, the chassis used is 42HP wide (9.5 in.). This is half the width of a standard 84HP (19 in.) rackmount chassis.

1. This example of a cPCI Serial chassis from Pixus Technologies demonstrates the commonly compact width of the high-performance open-standard computing architecture.

OpenVPX provides lots of flexibility in connections that are double fat pipe (x8) or fat pipe (x4), thin pipe (x2), ultra-thin pipe (x1), etc. How these connections are utilized in each configuration is determined by the VITA 65 profile. One can certainly utilize these types of connections in cPCI Serial, but they’re not specifically defined in the specification. This would also allow, for example, a x2 configuration in cPCI Serial to go beyond nine slots. Alternatively, customized versions can utilize undefined I/O for enough pins to expand x4 PCIe in more slots. It should be noted that these versions would not necessarily comply with the specification, and interoperability with standard-compliant modules could be jeopardized.
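As a rough guide to what the pipe widths mean in practice, the sketch below maps each pipe name to its lane count and estimates per-direction throughput if the lanes carry PCIe Gen3. The Gen3 figures (8 GT/s per lane, 128b/130b encoding) are general PCIe parameters rather than numbers from either specification, and the estimate ignores packet and protocol overhead.

```python
# Lane counts for the pipe names used in the text.
PIPE_LANES = {
    "ultra-thin pipe": 1,
    "thin pipe": 2,
    "fat pipe": 4,
    "double fat pipe": 8,
}

GEN3_GT_PER_S = 8.0        # PCIe Gen3 raw signaling rate per lane
GEN3_ENCODING = 128 / 130  # 128b/130b line-encoding efficiency


def gen3_gbytes_per_s(lanes: int) -> float:
    """Approximate one-direction PCIe Gen3 throughput in GB/s, before protocol overhead."""
    return lanes * GEN3_GT_PER_S * GEN3_ENCODING / 8


for name, lanes in PIPE_LANES.items():
    print(f"{name} (x{lanes}): about {gen3_gbytes_per_s(lanes):.2f} GB/s per direction")
```

Halving the width from x4 to x2 halves the per-link throughput, which is the trade made when a narrower link is used to reach more slots.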

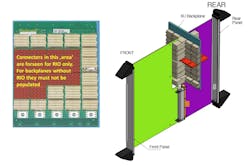

OpenVPX and cPCI Serial provide a wealth of I/O pins, depending on the configuration and several factors. The use of rear I/O is interesting in cPCI Serial. The connectors on the front of the backplane aren’t populated in several locations to reserve them for rear I/O. If rear I/O is required, then the connectors are populated on both the front and the rear. A standard 3U cPCI Serial backplane has 224 rear I/O pins available on each of slots 2 and 3, and 240 pins available on each of slots 5 through 9. The potential benefit is that the connectors aren’t populated unless needed; thus, money is saved.
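The rear I/O figures above can be tallied per backplane. This small sketch uses only the per-slot counts quoted in the text; slots 1 and 4 are left out because no figure is given for them here.

```python
# Rear I/O pins per slot on a standard 3U cPCI Serial backplane, as quoted in the text.
# Slots 1 and 4 are omitted because the text gives no figure for them.
REAR_IO_PINS = {2: 224, 3: 224, 5: 240, 6: 240, 7: 240, 8: 240, 9: 240}

total_pins = sum(REAR_IO_PINS.values())

for slot, pins in sorted(REAR_IO_PINS.items()):
    print(f"Slot {slot}: {pins} rear I/O pins")
print(f"Total across listed slots: {total_pins} pins")
```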

Conversely, in OpenVPX, the front connectors are often all used for various signals. So, the front connectors are typically all present, whether rear I/O is needed or not. If rear I/O is required, then the connectors are populated in the rear of the backplane as needed.

The 6U option for cPCI Serial is also interesting. It provides a separate rear connector interface to a rear transition module (RTM). This approach allows the 3U portion of the backplane to be fully utilized while providing dedicated pins to a separate rear board. It’s similar to the approach of other PICMG specifications such as AdvancedTCA and MicroTCA.4. Figure 2 (left) shows the dedicated pin area for the rear I/O and Figure 2 (right) shows the 6U board interface. Alternatively, a 6U RTM in OpenVPX would plug directly into the RTM connectors in the same slot (and plane) on the backplane as the front boards.

2. cPCI Serial only populates certain front connectors if rear I/O is required, otherwise they’re left blank (left). For additional I/O, the 6U cPCI Serial form factor has a rear module interface (right). (Image on left courtesy of Schroff)

As its predecessor CompactPCI allowed, cPCI Serial facilitates hot-swappability, where all FRUs (including the board modules) can be removed or inserted while the system is still running. OpenVPX doesn’t facilitate hot-swap of the board modules.

Ecosystem

The ecosystem of the architecture constitutes the number of board, chassis, and specialty product vendors that support the technology. cPCI Serial has at least eight board vendors (and growing) and at least five chassis vendors. As cPCI Serial is adopted in more defense applications, it’s expected that more U.S.-based vendors will enter the market.

Since OpenVPX has been around longer and is extremely successful in defense applications, it has a vast ecosystem to support that market. More and more cPCI Serial vendors are developing boards with high-end FPGAs and multicore processors. When the technology develops a larger footprint in defense, one could imagine that more boards will be developed for analog-to-digital and digital-to-analog conversion, or versions that accept front mezzanine cards (FMCs) with various sample rates and channel options.

Two Successful Technologies

Both OpenVPX and CompactPCI Serial are excellent high-performance architectures. OpenVPX has tremendous brand recognition, particularly in the Mil/Aero arena. The cPCI Serial architecture is growing in adoption in the U.S. and its brand is gathering more steam globally. OpenVPX has a wide ecosystem/deployment and technically seems to have advantages in high-power, high-slot-count systems.

For mid-sized to small systems or ATR applications, the architectures seem to have comparable performance. cPCI Serial would likely have a significant advantage in price. OpenVPX would hold a big advantage in being well-known in the industry. Overall, users should know that multiple architecture options are available for commercial, industrial, and military high-performance embedded computing systems.

About the Author

Justin Moll

Vice President of Sales & Marketing, Pixus Technologies

Justin Moll is the Vice President of Sales & Marketing for Pixus Technologies and has been with the company since 2012. He’s active in trade associations such as VITA and PICMG and served as the Vice President of Marketing for PICMG. Justin has been a keynote speaker and featured commentator at multiple embedded computing industry events.
