NVIDIA EGX Spreads AI from Cloud to the Edge

NVIDIA’s EGX software stack (Fig. 1) is designed to run on the company’s hardware, which ranges from the compact Jetson Nano platform to cloud servers with potentially hundreds or thousands of NVIDIA T4 GPGPUs. The same application software built on NVIDIA’s CUDA-X can run on anything from the Jetson Nano up through the cloud servers.

1. The EGX Edge Stack is built on CUDA-X, which allows applications to take advantage of the company’s hardware from the compact Jetson Nano that targets the edge to cloud servers based on racks of NVIDIA T4 boards.

Of course, there’s a performance difference depending on the hardware of choice (Fig. 2). Edge devices include platforms like the Jetson Nano, which delivers 0.5 TOPS of performance, and the Jetson AGX Xavier, which delivers 32 TOPS. Servers can run the NVIDIA T4 that offers 520 TOPS of performance. Multiple T4 boards can be included in a rack delivering over 10,000 TOPS.

2. NVIDIA’s EGX scales from the Jetson Nano, which delivers 0.5 TOPS of performance, up to a rack of T4s that delivers over 10,000 TOPS.
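To put the scaling range in perspective, here is a minimal arithmetic sketch that takes the peak figures quoted above at face value (0.5 TOPS for the Jetson Nano, 520 TOPS for the T4 figure, 10,000 TOPS for a rack). The function names are illustrative only, and the calculation assumes peak throughput adds linearly, ignoring numeric precision, interconnect, and power limits.

```python
import math

# Peak figures quoted in the article (TOPS = trillions of operations per second).
NANO_TOPS = 0.5
T4_TOPS = 520.0
RACK_TOPS = 10_000.0


def units_needed(target_tops: float, unit_tops: float) -> int:
    """Smallest whole number of units whose combined peak TOPS meets the target."""
    return math.ceil(target_tops / unit_tops)


def scale_factor(large_tops: float, small_tops: float) -> float:
    """How many times more peak throughput the larger platform offers."""
    return large_tops / small_tops


if __name__ == "__main__":
    # Units at the quoted 520 TOPS needed to reach the 10,000 TOPS rack figure.
    print(units_needed(RACK_TOPS, T4_TOPS))
    # Ratio between the rack figure and a single Jetson Nano.
    print(scale_factor(RACK_TOPS, NANO_TOPS))
```

Under these assumptions, about 20 units at the quoted T4 figure reach the rack-level number, and the rack offers roughly 20,000 times the peak throughput of a single Jetson Nano.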

Artificial-intelligence (AI) support for applications targeting the edge typically employs machine-learning (ML) models to handle tasks such as classification. This can also be done on servers or in the cloud, but these heavy-duty systems are also often employed for training ML models, which requires more computational power and more data storage. Hyperscale data centers offer a way to share resources to support very large AI applications.

NVIDIA recently picked up Mellanox for its switch technology. “Mellanox Smart NICs and switches provide the ideal I/O connectivity for data access that scale from the edge to hyperscale data centers,” said Michael Kagan, Chief Technology Officer at Mellanox Technologies. “The combination of high-performance, low-latency, and accelerated networking provides a new infrastructure tier of computing that is critical to efficiently access and supply the data needed to fuel the next generation of advanced AI solutions on edge platforms such as NVIDIA EGX.”

“Cisco is excited to collaborate with NVIDIA to provide edge-to-core full stack solutions for our customers, leveraging Cisco’s EGX-enabled platforms with Cisco compute, fabric, storage, and management software and our leading Ethernet and IP-based networking technologies,” said Kaustubh Das, Vice President of Cisco Computing Systems.

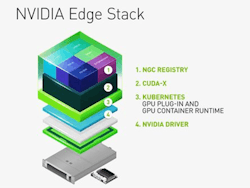

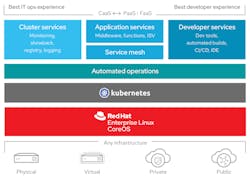

The NVIDIA Edge Stack that runs on all of the EGX-capable hardware incorporates the company’s hardware drivers, a CUDA Kubernetes plug-in, a CUDA container runtime, the CUDA-X libraries, and containerized AI frameworks. The frameworks include TensorRT, TensorRT Inference Server, and DeepStream. The containerized system, which provides modularity and simplifies deployment, has less overhead than a virtual-machine environment while offering isolation and security. Compatibility with Kubernetes allows the Edge Stack to run on platforms like Red Hat’s OpenShift container-management system (Fig. 3). OpenShift runs on Red Hat Enterprise Linux (RHEL).

3. Red Hat’s Kubernetes-based OpenShift container platform supports EGX Edge Stack containerized applications.
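As a rough illustration of how a containerized application asks Kubernetes for a GPU, the sketch below builds a pod manifest in Python and prints it as JSON. The `nvidia.com/gpu` resource name is the one advertised by NVIDIA's Kubernetes device plug-in; the pod name and container image are hypothetical placeholders, and nothing here is taken from the EGX documentation itself.

```python
import json


def gpu_pod_manifest(name: str, image: str, gpus: int = 1) -> dict:
    """Build a minimal Kubernetes Pod manifest that requests NVIDIA GPUs."""
    if gpus < 1:
        raise ValueError("gpus must be at least 1")
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": name},
        "spec": {
            "restartPolicy": "Never",
            "containers": [
                {
                    "name": name,
                    "image": image,
                    # Extended resource exposed by the NVIDIA device plug-in.
                    "resources": {"limits": {"nvidia.com/gpu": gpus}},
                }
            ],
        },
    }


if __name__ == "__main__":
    # Hypothetical pod name and image, used only for illustration.
    manifest = gpu_pod_manifest("edge-inference", "example.com/edge/inference:latest")
    print(json.dumps(manifest, indent=2))
```

Because `kubectl apply -f` accepts JSON as well as YAML, the printed output could be saved to a file and submitted to a cluster that has the GPU device plug-in installed. The scheduler then places the pod only on a node with a free GPU.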

“Red Hat is committed to providing a consistent experience for any workload, footprint, and location, from the hybrid cloud to the edge,” said Chris Wright, Chief Technology Officer at Red Hat. “By combining Red Hat OpenShift and NVIDIA EGX-enabled platforms, customers can better optimize their distributed operations with a consistent, high-performance, container-centric environment.”

RHEL isn’t the only platform that will run the Edge Stack, but it’s the one most will choose given Red Hat’s support. Red Hat is now also part of IBM.

About the Author

William G. Wong

Senior Content Director - Electronic Design and Microwaves & RF

