IGBTs Or MOSFETs: Which Is Better For Your Design?


Until the MOSFET came along in the 1970s, the bipolar transistor was the only "real" power transistor. It provided the benefits of a solid-state solution for many applications, but its performance was limited by several drawbacks: It requires a high base current to turn on, it has relatively slow turn-off characteristics (known as current tail), and it's susceptible to thermal runaway due to its negative temperature coefficient. Also, its lowest attainable on-state voltage, and hence its conduction loss, is set by the collector-emitter saturation voltage, VCE(SAT).

In contrast, the MOSFET is a device controlled by voltage rather than current. Its on-resistance has a positive temperature coefficient, which prevents thermal runaway. And there is no theoretical lower limit to its on-state resistance, so its on-state losses can be far lower than those of a bipolar part. The MOSFET also has a body-drain diode, which is particularly useful in dealing with limited free-wheeling currents. All these advantages, plus the near-elimination of the current tail, quickly made the MOSFET the device of choice for power switch designs.

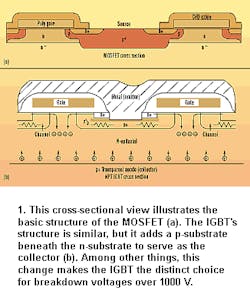

Then in the 1980s, the insulated-gate bipolar transistor (IGBT) came along. This device is a cross between the bipolar and MOSFET transistors. It has the output switching and conduction characteristics of a bipolar transistor, but it's voltage-controlled like a MOSFET. Generally, this means it combines the high-current-handling capability of a bipolar part with the ease of control of a MOSFET.

Unfortunately, the IGBT still has the disadvantages of a comparatively large current tail and the lack of a body-drain diode. Early IGBT versions were also prone to latch-up, but this has been largely eliminated. Another potential hazard with some IGBT types is a negative temperature coefficient, which can lead to thermal runaway and makes it difficult to parallel devices effectively. This problem is now being addressed in the latest generations of IGBTs, which are based on non-punch-through (NPT) technology. NPT keeps the same basic IGBT structure, but it's built on bulk-diffused silicon rather than the epitaxial material that both IGBTs and MOSFETs have historically used.
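Why the sign of the temperature coefficient matters for paralleling can be seen in a small electro-thermal loop. The sketch below is an illustration under stated assumptions, not a device model: each switch is treated as a resistor whose value scales as `1 + alpha * (T - 25)`, the two devices share a fixed total current, and each device's self-heating sets its own junction temperature through a thermal resistance. The function name `share_current` and every parameter value are hypothetical.

```python
"""Sketch: current sharing between two paralleled switches whose on-state
resistance has a positive or a negative temperature coefficient.

All parameter values are hypothetical, and treating an IGBT as a simple
temperature-dependent resistor is a deliberate simplification.
"""


def share_current(r0_a, r0_b, alpha, i_total=40.0, r_th=2.0, t_amb=25.0, iters=200):
    """Iterate the electro-thermal loop and return the final (i_a, i_b).

    r0_a, r0_b : on-state resistance of each device at 25 degC (ohms)
    alpha      : temperature coefficient of resistance (1/degC), > 0 or < 0
    i_total    : total current shared by the pair (A)
    r_th       : junction-to-ambient thermal resistance of each device (degC/W)
    """
    t_a = t_b = t_amb
    for _ in range(iters):
        # Resistance of each device at its present junction temperature.
        r_a = r0_a * (1 + alpha * (t_a - 25.0))
        r_b = r0_b * (1 + alpha * (t_b - 25.0))
        # Parallel current divider: the lower-resistance device carries more.
        i_a = i_total * r_b / (r_a + r_b)
        i_b = i_total - i_a
        # Self-heating sets the junction temperatures for the next pass.
        t_a = t_amb + r_th * i_a**2 * r_a
        t_b = t_amb + r_th * i_b**2 * r_b
    return i_a, i_b


if __name__ == "__main__":
    # Device a starts with 10% lower resistance, so the cold current ratio is 1.1.
    for alpha in (+0.005, -0.005):
        i_a, i_b = share_current(0.010, 0.011, alpha)
        print(f"alpha={alpha:+.3f}/degC: I_a={i_a:.2f} A, I_b={i_b:.2f} A, ratio={i_a / i_b:.4f}")
```

With a positive coefficient, the device carrying more current heats up, its resistance rises, and the current ratio settles below the cold value of 1.1. With a negative coefficient, the hotter device's resistance falls, so it grabs even more current and the imbalance grows, which is the mechanism behind the paralleling difficulty described above.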

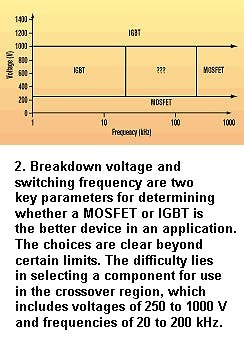

The differences between the two device types are enough to draw some clear lines between the applications each serves best. The IGBT is clearly the choice for breakdown voltages above 1000 V, while the MOSFET is the choice for breakdown voltages below 250 V.

Device selection isn't so clear, though, when the breakdown voltage is between 250 and 1000 V. In this range, some component vendors advocate the use of MOSFETs. Others make a case for IGBTs. Choosing between them is a very application-specific task in which cost, size, speed, and thermal requirements should all be considered.

IGBTs have been the preferred device under the conditions of low duty cycle, low frequency (< 20 kHz), and small line or load variations. They also have been the device of choice in applications that employ high voltages (> 1000 V), high allowable junction temperatures (> 100°C), and high output powers (> 5 kW).

Some typical IGBT applications include motor control where the operating frequency is < 20 kHz and short circuit/in-rush limit protection is required; uninterruptible power supplies with constant load and typically low frequency; welding, which requires a high average current and low frequency (< 50 kHz); zero-voltage-switched (ZVS) circuitry; and low-power lighting with operation at low frequencies (< 100 kHz).

Typical MOSFET applications include switch-mode power supplies that use hard switching above 200 kHz, as well as those that use ZVS at power levels below 1000 W. Battery charging is another common use for MOSFETs.

Of course, nothing is as easy as it seems. Tradeoffs and overlaps occur in many applications. The purpose of this article is to examine the "crossover region" that includes applications operating above 250 V, switching between 10 and 200 kHz, and power levels above 500 W. In these cases, final device selection is based on other factors such as thermal impedance, circuit topology, conduction performance, and packaging. A ZVS power-factor-correction (PFC) circuit is one example of an application that falls into the crossover area between IGBTs and MOSFETs.
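The rules of thumb gathered so far can be written down as a first-pass screen. The sketch below is only that: a hypothetical helper, `suggest_device`, that encodes the voltage, frequency, and power thresholds quoted in this article and flags the crossover region where a real loss comparison is needed. It ignores topology (hard switching vs. ZVS), temperature, cost, and packaging, all of which the article says matter.

```python
def suggest_device(breakdown_v: float, f_sw_hz: float, p_out_w: float) -> str:
    """Rough first-pass screen based on the article's rules of thumb.

    Returns "IGBT", "MOSFET", "crossover" (compare real parts' losses),
    or "application-specific". Not a substitute for a loss analysis.
    """
    if breakdown_v > 1000:
        return "IGBT"        # IGBT territory above 1000 V
    if breakdown_v < 250:
        return "MOSFET"      # MOSFET territory below 250 V
    # 250 V to 1000 V: the contested range.
    if 10e3 <= f_sw_hz <= 200e3 and p_out_w > 500:
        return "crossover"   # 10-200 kHz and above 500 W: evaluate both devices
    if f_sw_hz < 20e3:
        return "IGBT"        # low-frequency designs have historically favored IGBTs
    if f_sw_hz > 200e3:
        return "MOSFET"      # e.g., hard-switched supplies above 200 kHz
    return "application-specific"


if __name__ == "__main__":
    print(suggest_device(600, 100e3, 1000))  # a ZVS PFC stage in the crossover region
```

A ZVS PFC stage at 600 V, 100 kHz, and 1 kW lands in "crossover", which is exactly the case the rest of the article examines with measured losses.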

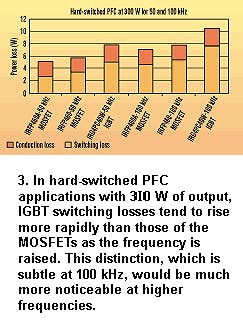

Yet the losses of an IGBT like International Rectifier's IRG4PC40W are approximately equal to the losses of the company's IRFP460 if the switching frequency is reduced to 50 kHz. This could let a smaller IGBT replace the larger MOSFET in some applications. Such was the state of technology in 1997, when IGBTs had a slight edge over MOSFETs at 50 kHz and were making inroads into designs up to 100 kHz.

Recent advances, though, have given the advantage back to MOSFETs. The lower-charge MOSFETs now available have reduced the losses at high frequency. They have, therefore, reasserted MOSFETs' dominance in hard-switching applications above 50 kHz.
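A first-order way to picture this crossover is to write each device's loss as a fixed conduction term plus a switching energy per cycle multiplied by frequency, P = P_cond + E_sw · f. The device with the lower E_sw wins above the frequency where the two lines intersect. The sketch below assumes that linear model and uses made-up parameter tuples (not datasheet values for the IRG4PC40W, the IRFP460, or any other part) to show how a lower-charge MOSFET with smaller switching energy pulls the crossover frequency down.

```python
def total_loss(p_cond_w: float, e_sw_j: float, f_sw_hz: float) -> float:
    """First-order model: conduction loss plus switching energy per cycle times frequency."""
    return p_cond_w + e_sw_j * f_sw_hz


def crossover_hz(igbt, mosfet):
    """Frequency at which the two modelled losses are equal.

    Each argument is a (p_cond_w, e_sw_j) tuple. Assumes the IGBT has the lower
    conduction loss and the MOSFET the lower switching energy per cycle.
    """
    (pc_i, esw_i), (pc_m, esw_m) = igbt, mosfet
    return (pc_m - pc_i) / (esw_i - esw_m)


# Hypothetical parts, NOT datasheet values for any real device.
IGBT = (3.0, 150e-6)          # 3 W conduction, 150 uJ per cycle
MOSFET = (8.0, 50e-6)         # 8 W conduction, 50 uJ per cycle
LOW_Q_MOSFET = (8.0, 25e-6)   # same conduction loss, half the switching energy

if __name__ == "__main__":
    print(f"{crossover_hz(IGBT, MOSFET) / 1e3:.1f} kHz")        # 50.0 kHz
    print(f"{crossover_hz(IGBT, LOW_Q_MOSFET) / 1e3:.1f} kHz")  # 40.0 kHz
```

In this toy model, halving the MOSFET's switching energy moves the crossover from 50 to 40 kHz, so the MOSFET's advantage extends to lower frequencies. Real switching energy also depends on current, voltage, temperature, and gate drive, so the linear model is only a starting point.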

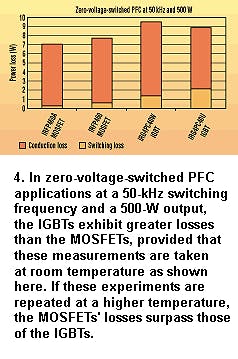

When the application uses zero-voltage switching, results vary with operating temperature. With 50-kHz switching and a 500-W output, the IGBT's 9.5-W losses are higher than the MOSFET's 7-W losses at room temperature (Fig. 4). At high temperature, however, the IGBT has the advantage at this frequency.

If output power remains at 500 W and the switching frequency is raised to 134 kHz at the higher temperature, the IGBT will exhibit slightly higher losses (25.2 W) than the MOSFET (23.9 W). If the same measurements are taken at room temperature, the losses are 17.8 and 15.1 W, respectively. The increase in switching losses at the higher frequency eliminates the advantage that the IGBT had at high temperature when the switching frequency was lower.
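To put the measured switch losses in perspective, they can be converted into the efficiency the stage would reach if the switch were the only loss, using η = P_out / (P_out + P_loss). The sketch below uses only the 500-W loss figures quoted in the text; treating them as the sole loss is an assumption, so the results are an upper bound on efficiency rather than a prediction for a complete supply.

```python
# Switch losses quoted in the text for a 500-W ZVS stage, in watts: (IGBT, MOSFET).
P_OUT_W = 500.0
CASES = {
    "50 kHz, room temp": (9.5, 7.0),
    "134 kHz, high temp": (25.2, 23.9),
    "134 kHz, room temp": (17.8, 15.1),
}


def efficiency(p_out_w: float, p_loss_w: float) -> float:
    """Efficiency if the switch loss were the only loss: Pout / (Pout + Ploss)."""
    return p_out_w / (p_out_w + p_loss_w)


if __name__ == "__main__":
    for name, (igbt_w, mosfet_w) in CASES.items():
        print(
            f"{name}: IGBT {efficiency(P_OUT_W, igbt_w):.2%}, "
            f"MOSFET {efficiency(P_OUT_W, mosfet_w):.2%}, "
            f"IGBT - MOSFET = {igbt_w - mosfet_w:.1f} W"
        )
```

In every case the gap works out to about half a percentage point of efficiency or less, which is why other factors such as thermal impedance, packaging, and cost often decide the matter in this region.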

These examples illustrate that there is no iron-clad rule that can be used to determine which device will offer the best performance in a specific type of circuit. The choice of IGBT or MOSFET will vary from application to application, depending on the exact power level, the devices being considered, and the latest technology available for each type of transistor.

In the battle between MOSFETs and IGBTs, either device can be shown to provide an advantage in the same circuit, depending on operating conditions. Then how does a designer select the right device for his application? The best approach is to understand the relative performance of each device and realize that if the component looks too good to be true, it probably is.

There are a few simple things to keep in mind about specifications. Test data, supplier claims, or advertisements that select conditions at maximum current and temperature will favor the IGBT in a given application. Take, for example, a motor-control application where a forklift is lifting its maximum-rated load while moving up an inclined ramp in the desert at noon.

In this particular scenario, the IGBT appears to be the device of choice. But when the average power consumption during an entire workday is considered, the maximum torque of the forklift motor is needed only 15% of the time, and the average torque load of the motor is only 25% of the rated torque. Under average or typical conditions, a MOSFET provides the longest battery life while meeting all peak-performance levels, and usually at a lower cost.
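The forklift argument comes down to how conduction loss scales with current. An IGBT's on-state drop behaves roughly like a fixed knee voltage plus a small slope resistance, so its loss grows about linearly with current, while a MOSFET's loss grows with the square of current. The sketch below weights each device's conduction loss by a hypothetical duty profile: 15% of the time at rated current and the rest at a light load chosen so the time-averaged current is about 25% of rated. It assumes motor current is proportional to torque, and all device parameters are made up for illustration.

```python
"""Time-weighted conduction loss over a hypothetical forklift workday.

Device models, parameter values, and the duty profile are illustrative
assumptions only; switching loss is ignored.
"""


def igbt_conduction_w(i_a: float, v0: float = 1.0, r: float = 0.01) -> float:
    # IGBT on-state modelled as a fixed knee voltage plus a small slope resistance.
    return v0 * i_a + r * i_a**2


def mosfet_conduction_w(i_a: float, r_ds_on: float = 0.025) -> float:
    # MOSFET on-state is purely resistive: loss grows with the square of current.
    return r_ds_on * i_a**2


# (fraction of the workday, motor current in A); 100 A stands for rated torque.
# 0.15 * 100 + 0.85 * 12 = 25.2 A average, roughly 25% of rated.
PROFILE = [(0.15, 100.0), (0.85, 12.0)]


def average_loss_w(loss_fn, profile) -> float:
    """Time-weighted average loss over the duty profile."""
    return sum(frac * loss_fn(i_a) for frac, i_a in profile)


if __name__ == "__main__":
    print("peak    IGBT/MOSFET:", igbt_conduction_w(100.0), mosfet_conduction_w(100.0))
    print("average IGBT/MOSFET:",
          average_loss_w(igbt_conduction_w, PROFILE),
          average_loss_w(mosfet_conduction_w, PROFILE))
```

With these numbers the MOSFET dissipates more at the peak-load corner, yet less when averaged over the day. The margin is slim and depends entirely on the assumed parameters; the point is only that the ranking can flip between peak and average conditions.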

Data that are based on applications at the highest switching frequency, the shortest pulse width, or the lowest current will tend to favor the MOSFET over the IGBT. For instance, a power supply operating at room temperature with nominal load and line voltage will make the MOSFET appear to be better than the IGBT. Conversely, if the power supply is operated at the maximum case temperature, maximum load, and minimum line voltage, the IGBT will look better. Real equipment, however, almost never operates under "nominal conditions." Variations in ambient temperature, line voltage, and load are more realistic, and they should be considered.
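One way to avoid judging a device at a single, flattering operating point is to sweep every corner of temperature, line voltage, and load and see where the ranking changes. The sketch below does this for two hypothetical switches using conduction loss only. The MOSFET's RDS(on) is given an assumed positive temperature coefficient of 0.7%/°C, the IGBT is held temperature-independent for simplicity, and line current is crudely taken as P_out / V_line. None of these values come from the article or from a real datasheet.

```python
"""Compare two hypothetical switches at every combination of operating corners.

All device parameters and corner values are assumptions for illustration;
only conduction loss is modelled, and line current is taken as P_out / V_line.
"""
from itertools import product

TEMPS_C = (25.0, 100.0)    # room and maximum case temperature
LINE_V = (90.0, 120.0)     # minimum and nominal line voltage
LOAD_W = (500.0, 1000.0)   # nominal and maximum load


def igbt_loss_w(i_a: float, t_c: float) -> float:
    # Knee voltage plus slope resistance; t_c is ignored in this simple model.
    return 1.0 * i_a + 0.02 * i_a**2


def mosfet_loss_w(i_a: float, t_c: float) -> float:
    # RDS(on) rises with temperature (positive coefficient), assumed 0.7 %/degC.
    r_ds_on = 0.10 * (1 + 0.007 * (t_c - 25.0))
    return r_ds_on * i_a**2


def winner_at(t_c: float, v_line: float, p_load: float) -> str:
    """Return the device with the lower modelled loss at one corner."""
    i_a = p_load / v_line
    return "MOSFET" if mosfet_loss_w(i_a, t_c) < igbt_loss_w(i_a, t_c) else "IGBT"


if __name__ == "__main__":
    for t_c, v_line, p_load in product(TEMPS_C, LINE_V, LOAD_W):
        print(f"{t_c:5.0f} degC, {v_line:5.0f} V, {p_load:6.0f} W -> "
              f"{winner_at(t_c, v_line, p_load)}")
```

In this toy model the MOSFET wins at room temperature and nominal line and load, while the IGBT wins at maximum temperature, minimum line, and maximum load, mirroring the way test conditions can tilt a comparison. Weighting each corner by how often the equipment actually sees it is what turns this sweep into a design decision.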

Presently, some of the newest IGBTs can offer competitive performance and cost advantages in ZVS PFCs at 1000 W and up, operating at switching frequencies of 100 kHz and above. Nevertheless, in all other power-supply applications, the MOSFET continues to reign supreme.

There seems to be an industry-wide perception that MOSFETs are a mature product category that will not offer significant performance improvements in applications, while IGBTs are a new technology that will replace MOSFETs in all applications above 300 V. Such generalizations aren't true.
