Many devices are now battery operated. They may be powered by anything from a single coin cell to lithium-ion (Li-ion) batteries, but all of these sources hold a finite amount of energy. In some cases, replacing the batteries is undesirable or even impossible. System power requirements therefore need to be reduced to extend operating time and reduce the need for battery replacements.

Before designing an ultra-low-power system, it is important to understand the tradeoffs of using different microcontrollers (MCUs) that feature low-power technology and peripherals, as well as the tools that can help maximize the application lifetime for a given energy budget.


Start with the MCU

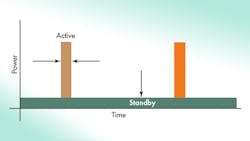

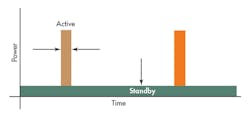

Selecting an ultra-low-power MCU is the first step in reducing overall system power. In some cases, the MCU dominates the overall power consumption. If an application is typically asleep for an extended period of time and wakes up periodically to process information, the standby power consumption is going to be the dominating factor (Fig. 1). In other cases, the application might spend 50% of its time active and 50% in standby.

In any case, selecting an MCU that combines low active power consumption with low standby power consumption helps to lower the average power consumption of the application. This is where an MCU built on an advanced low-leakage process technology can reduce both the active current, which is typically specified in µA/MHz, and the standby power consumption. Ideally, for the lowest possible power consumption, the application should both minimize its active time and reduce its standby power consumption.
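The interplay between active current, standby current, and duty cycle can be sketched with a short calculation. The following is a minimal, idealized model; the function names and all current and capacity figures are hypothetical and are not taken from any datasheet.

```python
def average_current_ua(active_ua, standby_ua, duty_cycle):
    """Time-weighted average current for an active duty cycle between 0 and 1."""
    if not 0.0 <= duty_cycle <= 1.0:
        raise ValueError("duty_cycle must be between 0 and 1")
    return duty_cycle * active_ua + (1.0 - duty_cycle) * standby_ua


def battery_life_hours(capacity_mah, avg_current_ua):
    """Idealized battery life; ignores self-discharge, derating, and temperature."""
    return capacity_mah * 1000.0 / avg_current_ua


# Hypothetical figures chosen only for illustration.
ACTIVE_UA = 1000.0     # 1 mA while the CPU runs
STANDBY_UA = 1.0       # 1 uA in a deep sleep mode
CAPACITY_MAH = 220.0   # assumed coin-cell-class capacity

if __name__ == "__main__":
    for duty in (0.0001, 0.5):
        avg = average_current_ua(ACTIVE_UA, STANDBY_UA, duty)
        standby_share = (1.0 - duty) * STANDBY_UA / avg
        days = battery_life_hours(CAPACITY_MAH, avg) / 24.0
        print(f"duty={duty:<7} avg={avg:9.4f} uA  "
              f"standby share={standby_share:6.1%}  life={days:8.1f} days")
```

With these assumed numbers, standby current accounts for most of the average at the very low duty cycle, while active current dominates at a 50% duty cycle, which is why both figures matter when comparing MCUs.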

The choice of memory technology, and how it is leveraged within an application, can greatly reduce C-startup and other application initialization time, which in turn reduces system energy requirements. For example, in an MCU with ferroelectric RAM (FRAM) non-volatile memory, the program code, constants, variables, and stacks can all be treated like SRAM even though they are actually stored in a non-volatile fashion.

A write to FRAM is immediate and requires no special configuration. Flash, by contrast, needs such configuration as well as a typically power-hungry charge pump. FRAM is a unified memory and can easily be partitioned and tailored to specific application needs. So how exactly does FRAM help in reducing system energy?

By storing certain variables or large arrays in FRAM, including application state, calibration information, intermediate calculation results, and other data, the application can restart from a cold start or a deep-sleep mode much more quickly, without re-initializing these variables or repeating costly hardware calibration steps. This shortens both the C-startup routine and the application's own initialization code. Because the variables reside in non-volatile memory, they are immediately available to the developer and a previous execution context can be restored quickly. Doing the same with flash is much more challenging, because a power failure in the middle of an erase/write cycle can corrupt the stored data. This is not the case with FRAM.
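The effect on active time can be illustrated with a small host-side model. In this sketch a Python dictionary stands in for FRAM, meaning its contents survive between wake-ups; the `wake` function and the timing constants are hypothetical and only illustrate the idea of skipping work whose results were retained.

```python
CAL_MS = 20.0    # hypothetical cost of a hardware calibration pass
INIT_MS = 5.0    # hypothetical cost of re-initializing variables and arrays
WORK_MS = 2.0    # hypothetical cost of the useful work per wake-up


def wake(nonvolatile):
    """Run one wake-up and return the active time in milliseconds.

    `nonvolatile` is a dict standing in for FRAM: whatever it holds is
    still there on the next call, as it would be after a power cycle.
    """
    active_ms = 0.0
    if "calibration" not in nonvolatile:
        active_ms += CAL_MS                    # calibrate only when no result is stored
        nonvolatile["calibration"] = 1.02      # placeholder calibration factor
    if "state" not in nonvolatile:
        active_ms += INIT_MS                   # initialize only when no state is stored
        nonvolatile["state"] = {"wakeups": 0}
    nonvolatile["state"]["wakeups"] += 1
    return active_ms + WORK_MS


if __name__ == "__main__":
    fram = {}
    print("first wake-up with retained memory:", wake(fram), "ms")
    print("later wake-up with retained memory:", wake(fram), "ms")
    print("wake-up with nothing retained:     ", wake({}), "ms")
```

In this model only the first wake-up pays for calibration and initialization. A system that retains nothing between wake-ups would pay that cost every time.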

Every ultra-low-power MCU offers low-power modes that put the device into various sleep states. These modes typically shut down the CPU core or disable certain clocks in the system. Some peripherals operate independently of the CPU, so a clock source may need to remain available to keep such a peripheral active. The details depend on the low-power modes and the architecture of the specific MCU. Generally, using any low-power mode is better than using none. Tuning the system to use the lowest possible power mode would be the next step.

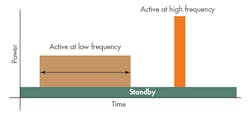

Clock speed is an important contributing factor because power consumption increases roughly linearly with frequency, so at first glance selecting the lowest clock speed looks ideal. A good practice is to start from a low clock frequency, such as 1 MHz, and then scale upwards if needed. At the same time, a low-power application typically wants to finish its active work as quickly as possible and then re-enter standby.

For example, if the application requires math-intensive tasks, it would be useful to increase the CPU speed to complete the task as soon as possible. In some cases, decreasing the CPU frequency is not a good strategy because it lengthens the active time, which increases energy consumption. There is a crossover point below which operating the MCU at a slower frequency costs more overall energy than running at a higher CPU frequency and entering a low-power mode as soon as possible.

Figure 2 shows the higher energy consumption that results when the application remains active for an extended period of time because of a slow CPU frequency. On the other hand, a high CPU frequency has its own limitations and might not be the solution for every application. A higher CPU frequency means a higher peak current, and some batteries may not be able to supply the peak currents needed to support such a frequency. In a low-power context, the high-frequency clock source should ideally be disabled during standby, while a low-frequency clock source can be kept active to wake the CPU periodically if needed.
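The tradeoff between running slowly and running fast before sleeping can be sketched numerically. The model below assumes, purely for illustration, that active current is a fixed overhead plus a part that scales linearly with frequency. The constants, function names, and the battery peak-current limit are all hypothetical.

```python
VCC = 3.0            # supply voltage in volts (assumed)
FIXED_UA = 200.0     # frequency-independent active overhead in uA (assumed)
UA_PER_MHZ = 100.0   # frequency-dependent active current in uA/MHz (assumed)
SLEEP_UA = 1.0       # standby current in uA (assumed)


def active_current_ua(freq_mhz):
    """Active current under the assumed linear model."""
    return FIXED_UA + UA_PER_MHZ * freq_mhz


def energy_per_period_uj(freq_mhz, cycles, period_s):
    """Energy in microjoules to run `cycles` CPU cycles once per period, then sleep."""
    active_s = cycles / (freq_mhz * 1e6)
    if active_s > period_s:
        raise ValueError("task does not fit in the period at this frequency")
    charge_uc = active_current_ua(freq_mhz) * active_s + SLEEP_UA * (period_s - active_s)
    return VCC * charge_uc


def pick_frequency(candidates_mhz, cycles, period_s, peak_limit_ua):
    """Lowest-energy candidate whose active current stays within the peak limit."""
    allowed = [f for f in candidates_mhz if active_current_ua(f) <= peak_limit_ua]
    if not allowed:
        raise ValueError("no candidate frequency satisfies the peak-current limit")
    return min(allowed, key=lambda f: energy_per_period_uj(f, cycles, period_s))


if __name__ == "__main__":
    for f in (1, 8, 16):
        e = energy_per_period_uj(f, 1_000_000, 1.0)
        print(f"{f:2d} MHz: peak {active_current_ua(f):6.0f} uA, {e:8.3f} uJ per period")
    print("best under a 1500 uA peak limit:", pick_frequency((1, 8, 16), 1_000_000, 1.0, 1500.0), "MHz")
```

Under these assumptions the fixed overhead is what makes the slow clock expensive: it is paid for the whole time the CPU is awake. The peak-current filter reflects the battery limitation mentioned above, which can rule out the fastest setting even when it would use the least energy.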

Another important consideration for minimizing active power consumption applies to MCUs whose CPU core is supplied by a separate (usually internal) voltage regulator with an output voltage that is adjusted, either manually or automatically, to suit the desired operating frequency. Such voltage regulators typically contribute a quiescent current to the device’s active power consumption, and that current often depends on the chosen voltage setting.

Studying the datasheet of such MCUs typically reveals that there are “sweet spots” in terms of combined CPU + low-dropout regulator (LDO) active power consumption per MHz that are located at the maximum frequencies for a given voltage range. Since the energy consumption to complete one unit of work in active mode depends on the total current consumed by the CPU plus the voltage regulator over time, a faster clock frequency often will result in lower energy consumption despite leading to higher power consumption during the time a task is completed.
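The "sweet spot" effect can be reproduced with a small table-driven calculation. The core-voltage levels below are hypothetical and are not taken from any datasheet. Each level has an assumed maximum frequency, regulator quiescent current, and CPU current per MHz. Note that 1 µA/MHz is the same as 1 pC of charge per clock cycle, so a lower figure means less charge for the same amount of work.

```python
# (max_mhz, regulator_quiescent_ua, cpu_ua_per_mhz) for each core-voltage level.
# Hypothetical values, ordered from the lowest to the highest voltage setting.
CORE_LEVELS = [
    (8.0, 40.0, 90.0),
    (16.0, 60.0, 110.0),
    (24.0, 90.0, 130.0),
]


def level_for(freq_mhz):
    """Return the lowest core-voltage level that supports the requested frequency."""
    if freq_mhz <= 0:
        raise ValueError("frequency must be positive")
    for level in CORE_LEVELS:
        if freq_mhz <= level[0]:
            return level
    raise ValueError("frequency exceeds the highest supported level")


def effective_ua_per_mhz(freq_mhz):
    """Combined CPU plus regulator current per MHz (equivalently, pC per cycle)."""
    _, quiescent_ua, cpu_ua_per_mhz = level_for(freq_mhz)
    return cpu_ua_per_mhz + quiescent_ua / freq_mhz


if __name__ == "__main__":
    for f in (4.0, 8.0, 9.0, 16.0, 17.0, 24.0):
        print(f"{f:5.1f} MHz -> {effective_ua_per_mhz(f):7.2f} uA/MHz")
```

Within each level the regulator's quiescent current is spread over more cycles as the frequency rises, so the combined figure is lowest at the top of that level's frequency range. It then jumps when the next voltage level is required.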

Peripherals

Another technique is to understand the strengths of each peripheral and to use the MCU's internal interconnects so that peripherals can work together independently of the CPU. For example, after an analog-to-digital converter (ADC) conversion, the direct memory access (DMA) controller can automatically move the data to memory without any CPU intervention. The CPU can remain asleep throughout the process until it is time to process the data. Another example is configuring a timer to trigger the ADC periodically, so that an active CPU does not have to trigger each conversion manually. Typically, peripherals consume much less power than the CPU. As such, it pays to keep the CPU off as long as possible and let these peripherals do the work.
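The benefit of chaining a timer, an ADC, and the DMA can be illustrated with a simple host-side simulation that counts how often the CPU must wake up. This is a sketch of the idea, not of any real peripheral API. The function name, the block size, and the synthetic readings are assumptions made for the example.

```python
def count_cpu_wakeups(samples, block_size, use_dma):
    """Simulate timer-triggered ADC sampling and count CPU wake-ups.

    Returns (cpu_wakeups, block_means). Each loop iteration represents one
    timer tick that starts one ADC conversion without CPU involvement.
    """
    buffer = []
    cpu_wakeups = 0
    block_means = []
    for tick in range(samples):
        reading = (tick * 37) % 1024      # stand-in for a 10-bit ADC result
        if not use_dma:
            cpu_wakeups += 1              # ADC interrupt wakes the CPU to copy each result
        buffer.append(reading)            # with DMA, this copy happens while the CPU sleeps
        if len(buffer) == block_size:
            if use_dma:
                cpu_wakeups += 1          # DMA transfer-complete interrupt: once per block
            block_means.append(sum(buffer) / block_size)   # the actual processing step
            buffer.clear()
    return cpu_wakeups, block_means


if __name__ == "__main__":
    for use_dma in (False, True):
        wakeups, means = count_cpu_wakeups(1000, 50, use_dma)
        print(f"use_dma={use_dma!s:5}  wake-ups={wakeups:4d}  blocks processed={len(means)}")
```

Both configurations process the same blocks and produce the same results. The difference is that the DMA-based variant wakes the CPU once per block instead of once per sample.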

Another consideration would be to disable any unused peripherals, which may not always be easy to spot. A quick and efficient way to identify these peripherals is to use a robust real-time power debugger that provides intelligent power consumption information about a system. Taking a look at the available tools and software can also help a developer optimize the system power consumption.

Specialized static code analysis tools can analyze code at compile time and provide instant feedback on what can be done to optimize power in an application. By using such a tool in combination with a real-time power analysis hardware tool or debugger, developers can further observe how their code behaves in real time in every power state, including whether each peripheral is enabled or disabled. Developers can then optimize power based on the feedback given by the static code analysis tool and closely monitor the impact of each change on the application’s power consumption.

The combination of such modern tools gives the developer instant visual feedback, making it possible to identify problem areas quickly and address them. Optimizing the system power in software becomes an iterative process that is accelerated by having the right tools, such as ULP Advisor and EnergyTrace from Texas Instruments, which in turn shortens time to market.

Leveraging application-specific software frameworks, drivers, and algorithm libraries that are optimized for the target architecture can result in fewer CPU cycles and therefore shorter execution time. This also reduces overall energy consumption.

Some silicon vendors offer math or DSP libraries that are tailored for the specific MCU architecture and can be used for signal-processing and filtering algorithms. Most compilers include a generic math library that may not be optimized for a specific architecture, because it is designed to serve multiple MCU architectures. Device driver libraries are likewise optimized for the specific device’s peripherals.

Rather than figuring out how to initialize a peripheral or wondering whether the initialization routine is optimized, developers can rely on silicon vendor drivers, which are designed to initialize peripherals efficiently. When selecting any third-party software, keep power efficiency in mind as one parameter and check whether the software is optimized for the particular device.

Conclusion

Employing these suggestions should help developers minimize system power requirements. Developers should select the right microcontroller for the job, adopt the mentality of re-entering standby mode as soon as possible, and leverage intelligent peripherals and the available real-time power analysis tools.

William Goh is an applications engineer for Texas Instruments’ ultra-low-power MSP430 microcontrollers (MCUs). He started his career at Texas Instruments as an intern in 2007. Since then, he has served in various roles ranging from assisting high-priority customers to MSP430 MCU silicon product and software development. He graduated from the University of Florida with a master’s degree in electrical engineering. He can be reached at [email protected].
