Entropy for Embedded Devices

This article is part of the TechXchange: Cybersecurity

What you'll learn:

- What is the concept of entropy?

- Embedded-system applications that exploit entropy.

- How to implement entropy.

- What sources of entropy are available?

Computers are designed to be predictable. Under the same circumstances, the same program should produce the same output when supplied the same input. This obviously makes computers very useful. But there are times when we want to intentionally introduce randomness for the purpose of security. We do this by collecting entropy, or randomness.

What is Entropy?

Entropy is the concept of "unknowingness" or disorder. At its most fundamental, it’s a physical manifestation of the Heisenberg Uncertainty Principle, which states that both the position and momentum of subatomic particles can’t be simultaneously measured with accuracy.

More practically, it’s a measure of how unpredictable something is, and in information systems we estimate this quantitatively in bits. Each flip of a coin produces a single bit of entropy; a binary value for which we can’t predict the state. So, if we want to generate a large random value (say, a cryptographic key), we need to flip many coins and concatenate their output bits (after some additional processing to ensure there’s no bias).
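As a worked example of measuring unpredictability in bits, the sketch below computes the Shannon entropy of a single coin flip. The function name `coin_entropy_bits` is ours, chosen for illustration.

```python
import math

def coin_entropy_bits(p_heads: float) -> float:
    """Shannon entropy, in bits, of one flip of a coin that lands heads with probability p_heads."""
    if p_heads in (0.0, 1.0):
        return 0.0  # a certain outcome carries no entropy
    p_tails = 1.0 - p_heads
    return -(p_heads * math.log2(p_heads) + p_tails * math.log2(p_tails))

fair = coin_entropy_bits(0.5)    # exactly 1 bit
biased = coin_entropy_bits(0.9)  # roughly 0.47 bits
```

A coin that lands heads 90% of the time yields less than half a bit per flip, which is why biased sources need more raw samples and additional processing.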

In one simple form, information entropy can be captured from the drift of a clock signal. A clock signal oscillates at a periodic frequency, close to but not exactly at the desired frequency. Minuscule manufacturing tolerances, thermal noise, and quantum effects introduce a certain amount of uncertainty.

The drift in the clock period can be measured by using a second clock, but only if the second is completely decoupled from the first. If our clocks were dependent (such as when one is derived from the other), then the measurements would be highly predictable. In the coin-flip analogy, we have two clocks, the spin on the coin (many clock cycles), and the rise-fall of the coin (one clock cycle).

Care should be taken that no unexpected parasitic coupling exists between our independent clock sources. Such coupling is wonderfully demonstrated in mechanical systems, where things like unsynchronized pendulums eventually fall into sync through the coupling of the crossbar from which they're suspended. Similarly, parasitic electromagnetic effects like capacitance and EM radiation can couple our electrical counterparts.

Note also that it’s impossible to tell if a sequence was generated randomly: Being random, all sequences are possible, even those that would fail “obvious” statistical tests such as all zeros or ones, or short repeating patterns. Nevertheless, many practical systems will attempt to detect such things because they might be a symptom of certain hardware faults.
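One such check can be sketched as a repetition count test, which flags a source that emits the same value too many times in a row. The function name and the cutoff of 8 are assumptions for illustration; a real cutoff depends on how much entropy the source has been characterized to deliver.

```python
def repetition_count_ok(samples, cutoff=8):
    """Return False if any value repeats `cutoff` or more times consecutively."""
    run_length = 0
    previous = object()  # sentinel that equals no sample
    for sample in samples:
        if sample == previous:
            run_length += 1
        else:
            previous = sample
            run_length = 1
        if run_length >= cutoff:
            return False  # looks like a stuck source
    return True
```

A failure here doesn't prove the data is non-random. It is only a signal that the hardware should be treated as suspect.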

Whitening and Bias Reduction

Not all entropic systems produce uniformly distributed output. This means that a clever attacker who knows how the output is biased may have an edge (however slight) in predicting the next value.

Predictability can be devastating in some scenarios. To fix this, we use algorithms that reduce the bias in the output, moving it closer in appearance to the white noise of a uniform distribution. It’s vital to perform this step, as raw entropy from the hardware can contain significant bias.
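To make bias reduction concrete, here is a minimal sketch of the classic von Neumann extractor. It is shown only as a simple illustration of the idea, not as the whitening method this article recommends, and it assumes the input bits are independent of one another.

```python
def von_neumann_debias(bits):
    """Consume bits in pairs: 01 -> 0, 10 -> 1; pairs 00 and 11 are discarded."""
    output = []
    for i in range(0, len(bits) - 1, 2):
        first, second = bits[i], bits[i + 1]
        if first != second:
            output.append(first)
    return output
```

The price of removing the bias is throughput: the more biased the source, the more pairs are thrown away.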

A cryptographically secure pseudorandom number generator (CSPRNG) is an excellent algorithmic choice for this whitening process. Here, the raw entropy is provided as a seed to the CSPRNG, which mixes new entropy in with the old and provides a uniformly distributed output stream. The raw entropy data will be fed into these algorithms to produce unbiased random data, which we use in our software systems.
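The seed-and-mix behavior described above can be sketched with a toy hash-based pool. The class name `ToyEntropyPool` and its construction are assumptions made for illustration only; it is not a vetted CSPRNG design, and a production system should use a standardized one.

```python
import hashlib

class ToyEntropyPool:
    """Illustrative only: NOT a vetted CSPRNG design."""

    def __init__(self):
        self._state = bytes(32)
        self._counter = 0

    def add_entropy(self, raw: bytes) -> None:
        # Mix new raw entropy in with the old state.
        self._state = hashlib.sha256(b"mix" + self._state + raw).digest()

    def read(self, n: int) -> bytes:
        out = b""
        while len(out) < n:
            self._counter += 1
            counter_bytes = self._counter.to_bytes(8, "big")
            out += hashlib.sha256(b"out" + self._state + counter_bytes).digest()
        # Ratchet the state so earlier outputs can't be recomputed from a later state.
        self._state = hashlib.sha256(b"next" + self._state).digest()
        return out[:n]
```

Note that the output is only as unpredictable as the entropy fed in: two pools given identical input produce identical output.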

Why Entropy Matters

Entropy is used in many places, most of them security-related, where we literally want to “keep the attackers guessing.” A non-exhaustive list of example applications in an embedded system includes the following:

- Key generation is a common use of entropy. A brute-force attack on a cryptographic secret involves testing every possible value in the key space until the correct one is found. This is supposed to take a very long time, because keys are large numbers, and the probability of any one key being valid should be equal. Problems arise when a weak entropy source is used to generate the key, introducing bias toward setting or clearing some key bits and making some keys more likely than others. The attacker can then prioritize guesses and improve the probability of guessing correctly in less time. In other words, the strength of the key against brute-force attacks is 2^entropy, not 2^keysize. Similar issues arise with nonce generation, which can be disastrous for some cryptosystems.

- Address Space Layout Randomization (ASLR) involves moving code and data pages around in virtual memory (usually performed once on each system boot or process creation). This is a mitigation that makes exploitation of memory corruption vulnerabilities more difficult, because the attacker doesn’t know the memory location of the exploitable code and data structures. Weak entropy can let the attacker simply guess at the location until they guess correctly.

- Stack canaries are another exploit mitigation. These are values placed on the stack frame by the compiler to detect when a stack-based buffer overflow has occurred. If the value of this canary is known to the attacker, then it may be bypassed easily. While the canary instrumentation is applied to the code automatically by the compiler, the initial value must still be initialized on boot or process creation by the developer, and this value must be random.

- TCP sequence numbers in the TCP packet headers need to be random. If an attacker can guess these, then it may aid them with some network-level attacks like session hijacking and host impersonation.
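The key-generation point above (strength is 2^entropy, not 2^keysize) can be made concrete with a little arithmetic. The sketch below estimates the min-entropy of a key whose bits are independently biased; the function name and the example bias of 0.75 are assumptions chosen for illustration.

```python
import math

def key_min_entropy_bits(key_size_bits: int, p_one: float) -> float:
    """Min-entropy of a key whose bits are each independently 1 with probability p_one."""
    p_most_likely = max(p_one, 1.0 - p_one)
    return key_size_bits * -math.log2(p_most_likely)

unbiased = key_min_entropy_bits(128, 0.5)  # 128 bits
biased = key_min_entropy_bits(128, 0.75)   # about 53 bits
```

Under this assumed bias, a nominally 128-bit key offers roughly the protection of a 53-bit key against an attacker who tries the most likely values first.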

Random-number-generator implementations can be divided into two general categories: the easy cases, and the hard cases.

Easier Implementations

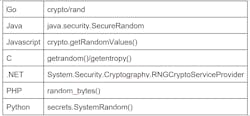

At the application layer of your embedded system, the entropy solutions are relatively easy to wield: Simply use the correct random number generator (RNG) API provided by your language library.

For systems that have an operating system (like Linux, which has recently received much-needed improvements), the language runtime and libraries will be supported by the system through suitable APIs like getrandom(). For bare-metal systems, your platform vendor may provide a library that abstracts the underlying hardware mechanisms.

As a general rule, the following language-specific recommendations are best-practice:

What we don’t want to see are any extremely poor implementations, such as:

- Non-cryptographic pseudorandom generators like C’s rand() from <stdlib.h>

- CSPRNGs used with constant or predictable seed values

- Comments and stubs that don’t do anything at all (“/* todo, implement RNG */”)
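In Python, for instance, the contrast between a suitable API and a poor one looks like the following sketch. The variable names are ours; `secrets` and `random` are standard-library modules.

```python
import random
import secrets

# Good: draws from the operating system's CSPRNG.
session_key = secrets.token_bytes(32)  # 256-bit key
nonce_hex = secrets.token_hex(12)      # 96-bit nonce as 24 hex characters

# Bad: a non-cryptographic generator with a constant seed.
# Every run of the program produces the same "key".
random.seed(1234)
predictable_key = random.getrandbits(256)
```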

Harder Implementations

But what about the hard cases?

- Where do the software runtime and library APIs get entropy when there’s no underlying OS?

- Where does the underlying OS get entropy from, and is it good?

- What about early during system boot, when the OS services simply aren’t yet available, such as in the bootloaders?

- What about during highly controlled conditions of manufacturing, when things like secrets are provisioned?

- Consider virtual environments (like virtual machines, or zygotes) where the state of the RNG may be inadvertently cloned.

The simple software advice above breaks down when we look deeper into the system. We know full well those libraries don't just work by magic. Eventually, we reach the layer where the embedded developer is responsible for implementing the language runtime and libraries.

It's your job to ensure those software libraries have the support they need to function as advertised for your application-level software developers. This often boils down to ensuring that the microcontroller you choose for your embedded device has a strong entropy source.

Save/Restore Entropy Across Reboots

Some entropy generation techniques are slower than others. Furthermore, entropy may increase over time as the system “warms up” (figuratively and literally). The randomness you get from a hardware device may be perfectly acceptable once the system has been running for a while. However, it might be completely predictable in the moments shortly after boot, before it has had time to diverge from its reset state.

To account for effects such as these, many RNG systems will save their state (e.g., at regular intervals and before sleep/power off) and reload it at boot/wake. When restoring such a saved seed or entropy pool, assume that the stored state itself may have been tampered with and don’t trust it completely. Rather, you should mix it in with whatever fresh entropy you can get before using the RNG in earnest.
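A minimal sketch of that restore step follows. The function name `restore_seed` and the use of SHA-256 are assumptions for illustration; reading and writing the stored seed is left to the caller. The function returns both a working seed and a different value to store for the next boot, so the same saved state is never used twice.

```python
import hashlib

def restore_seed(saved_seed: bytes, fresh_entropy: bytes):
    """Return (working_seed, next_saved_seed) derived from both inputs."""
    mixed = hashlib.sha256(
        len(saved_seed).to_bytes(4, "big") + saved_seed + fresh_entropy
    ).digest()
    # Distinct labels keep the value in use separate from the value written to storage.
    working_seed = hashlib.sha256(b"use" + mixed).digest()
    next_saved_seed = hashlib.sha256(b"save" + mixed).digest()
    return working_seed, next_saved_seed
```

Even if an attacker has replaced the stored seed, the working seed still depends on whatever fresh entropy was available at boot.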

Don't Put All Your Eggs in One Basket: Combining Entropy Sources

Not all entropy sources are as good as others.

- They may have a strong bias.

- They may be slow and produce entropy at rates slower than what’s demanded by the consuming system.

- Some may be subject to environmental influence.

- Some just have confidence problems, lacking clarity as to how they were characterized, or use unproven techniques.

The best way to overcome such problems is to use multiple sources, combined in a way that adds their entropy together. By combining several sources in this way, a failure of any one input may be compensated by the others, preventing a catastrophic failure of the entire RNG subsystem. It can be accomplished in various ways, but a cryptographic hash function is best and is widely available. This is what a CSPRNG does internally, and using one here is the best approach.
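Combining sources with a hash can be sketched as follows. The function name `combine_sources` and the choice of SHA-256 are assumptions for illustration, not a prescribed design.

```python
import hashlib

def combine_sources(*sources: bytes) -> bytes:
    """Hash several entropy inputs into one 32-byte value."""
    hasher = hashlib.sha256()
    for data in sources:
        # The length prefix keeps (b"ab", b"c") distinct from (b"a", b"bc").
        hasher.update(len(data).to_bytes(4, "big"))
        hasher.update(data)
    return hasher.digest()
```

If one input is stuck at a constant, the result is still as unpredictable as the remaining inputs. The output can never carry more than 256 bits of entropy, however many inputs are supplied.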

Practical Entropy Sources

Ideally, you can choose components that satisfy the security needs of the system. But what if the components don’t? There are times when cost, availability, functionality, performance, or other design factors outweigh security. At other times, product requirements evolve, and in-field devices need to be retrofitted with new features for which they weren’t originally designed. In these cases, we may need to compromise and do our best with what we have. So, what entropy sources do we have at our disposal?

True Hardware Random Number Generator

A TRNG is your best bet if you have one. Many SoCs will expose a register interface to an on-chip hardware module that produces entropy.

Under the hood, a TRNG may use one of several different techniques to produce entropy. The most common ones involve ring oscillators or similar techniques that measure unpredictable quantum effects or thermal noise. We don't need to dig into the details here; they're all generally of high quality, and many academic papers and patents have been written to prove it.

What matters most is that your chip vendor has already performed the rigorous effort of characterizing its behavior and tuning it to the expected operating conditions. The documentation they provide will describe how to use it, which is usually through a simple register interface (often abstracted with a convenient library).

RDRAND

Modern x86 processors from Intel and AMD support the RDRAND/RDSEED instructions, which are used to access their hardware TRNG. This is worth special mention for two reasons:

- These new instructions were introduced not long after the NSA was caught backdooring the Dual_EC_DRBG entropy mechanism used in other systems. And with Intel being a U.S. company, a great deal of unproven speculation continues to surround the intention behind RDRAND.

- AMD’s implementation of RDRAND on some devices was flawed (now fixed), producing only values of 0xFFFFFFFF under certain sleep/resume conditions.

These issues highlight a universal truth in security: Nothing is perfect, so defense-in-depth is important. Entropy sources can fail in weird ways, be subject to environmental influence, have accidental (or intentional) design flaws, or just not be sufficiently characterized for all operating conditions. As previously mentioned, combining multiple entropy sources can be a strong hedge against the unknown and eliminate single points of failure.

External TRNG

On systems without integrated entropy sources, other components can be added to provide this functionality. Often, a dedicated security device like a secure element or a trusted platform module (TPM) is used and connected to a serial bus of some sort. Caution is warranted for designs where the threat model includes physical attacks, due to the ease with which the traffic between this chip and the host system can be intercepted and tampered with.

Analog Sources

Without an intentionally designed TRNG, it’s still possible to collect entropy from the environment. Analog-to-digital converters (ADCs), which digitize a wide variety of analog signals, are included in a wide range of peripheral devices. Cameras, microphones, fingerprint sensors, battery gauges, and radio interfaces are all usable in this regard.

Whenever the precision of the ADC is high enough (commonly 16 bits or more, even on cheap microcontrollers), it will exhibit a fair amount of noise in the low-order bits. Adding this data as entropy to your RNG subsystem may be possible. Again, caution is needed if you’re relying on this as a sole entropy source: A physically present attacker may be able to measure or influence the very signal your ADC is measuring.
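A sketch of harvesting that low-order noise is shown below. The function name, the default of two noisy bits, and the use of SHA-256 are assumptions for illustration; `samples` stands in for readings from a real ADC driver. How many samples are needed depends on characterizing the actual hardware.

```python
import hashlib

def condition_adc_samples(samples, noisy_bits=2):
    """Keep the low-order bits of each ADC sample and hash them into a 32-byte value."""
    if not 1 <= noisy_bits <= 8:
        raise ValueError("noisy_bits must be between 1 and 8")
    mask = (1 << noisy_bits) - 1
    # The high-order bits mostly track the measured signal, so they are dropped.
    low_bits = bytes(sample & mask for sample in samples)
    return hashlib.sha256(low_bits).digest()
```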

SRAM Boot State

When an SRAM device is powered up from a cold boot, its contents are random. Two devices should be expected to differ by about 50% when comparing these random boot states. Between boot cycles on the same device, though, about 10% of the bits can be expected to change. Thus, the contents of the SRAM at boot provide a good source of entropy on any microcontroller that contains SRAM.

Note that if collecting this state on a warm reset, it may instead contain stale system data that’s entirely predictable. This technique may require careful characterization of the processor and SRAM in question as not all SRAM implementations are appropriate for such an approach. For virtualized environments, this technique will typically be useless.
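The 50% and 10% figures above are fractional Hamming distances between memory dumps. When characterizing a part, they could be measured with a helper like the one sketched below; the function name is ours, and the dumps are assumed to be equal-length byte strings captured at boot.

```python
def fractional_hamming_distance(a: bytes, b: bytes) -> float:
    """Fraction of bit positions that differ between two equal-length dumps."""
    if len(a) != len(b):
        raise ValueError("dumps must be the same length")
    if not a:
        raise ValueError("dumps must not be empty")
    differing = sum(bin(x ^ y).count("1") for x, y in zip(a, b))
    return differing / (8 * len(a))
```

A result near 0.0 between consecutive boots of the same device would suggest a warm reset or an SRAM that is unsuitable for this technique.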

Counters

Measuring the time since system boot is a relatively simple operation. If there’s any variability in the boot time, this may contain some small amount of usable entropy. This is particularly true if there are any points during boot where the system pauses for something unpredictable, like the lock of a PLL.
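As a rough sketch of the idea, the code below hashes a series of high-resolution timer readings. On a microcontroller this would be a hardware cycle counter sampled at unpredictable points during boot; Python's `time.perf_counter_ns()` is used here only as a stand-in, and the function name and round count are assumptions.

```python
import hashlib
import time

def sample_timer_jitter(rounds: int = 64) -> bytes:
    """Hash repeated timer readings; only the jitter between them is unpredictable."""
    hasher = hashlib.sha256()
    for _ in range(rounds):
        reading = time.perf_counter_ns() & 0xFFFFFFFFFFFFFFFF  # clamp to 64 bits
        hasher.update(reading.to_bytes(8, "big"))
    return hasher.digest()
```

The amount of real entropy here is small and hard to quantify, so the result is best treated as a supplement to other sources rather than a replacement for them.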

Constants

Constants by definition contain zero entropy. That said, if your device has a device-unique constant, such as a serial number or a random and securely stored seed injected during manufacturing, then there’s some benefit (and little cost) in mixing this in with your other entropy sources. If nothing else, it ensures that in the event of complete failure of all other sources, two devices will still not produce the same sequence of RNG outputs.

Final Thoughts

The importance of quality entropy to help embedded-system security can’t be overstated. Nearly all microcontrollers have some ability to collect random data in a usable fashion, even those that don’t advertise this as a feature. Sometimes, we simply must be creative. Above all, make sure you have a backup plan in the event that any one entropy source fails completely.


About the Author

Rob Wood

VP, Hardware and Embedded Security Services, NCC Group

Rob Wood’s career in embedded devices spans two decades, having worked at both BlackBerry and Motorola Mobility in roles focused on embedded software development, product firmware, hardware security, and supply-chain security. Rob is an experienced firmware developer with extensive security architecture experience. His specialty is in designing, building, and reviewing products to push the security boundaries deeper into the firmware, hardware, and supply chain.
