Augmented Reality Will Be Here Sooner Than You Think

This file type includes high resolution graphics and schematics.

The field currently known as augmented reality (AR) is in its infancy but is developing swiftly, with commercial products expected in the next two years. With its potential usefulness in nearly every endeavor, its applications and devices soon will permeate our everyday lives. Rather than being a technology per se, AR uses technology in a nearly invisible way to enhance your leisure and/or your work.

In time, the term will cease to have any meaning outside its ubiquitous applications. But at this stage a definition is in order. For now, think of AR as a means to superimpose digital content on your physical environment. In time, AR conceivably may expand to include content that addresses one or more of the senses, both detecting and enhancing your vision, hearing, smell, taste, and tactile feeling. But early conceptions seem to focus on visual enhancements, which for humans may be the most instantly evocative.

This space is close to a greenfield right now. The opportunities for designers of the user experience, and for the electronic engineers who will support that experience, are vast, if somewhat amorphous. AR probably will have a firm foothold in the consumer market, where entertainment, timely information related to any daily task, and location-based services come to mind. Undoubtedly AR will also find its way into nearly every vertical industry you can imagine, for productivity and precision, among other benefits.

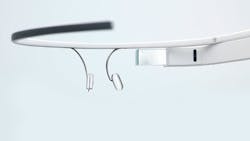

Through The Google Glass

The company with the most powerful Web search engine in the world appears to be moving to market with a device that would superimpose pertinent information on your visual field as you go about your day. Google Glass would resemble eyeglass frames that carry, instead of lenses, a small screen above the right eye, well within the field of vision. Another prototype combines this approach with a special contact lens, with mind-blowing results. Various tools are in the pipeline.

The difference between an eyeglass-frame approach and a contact lens form factor raises design and engineering issues directly linked to behavioral issues for end users. For the consumer market, hardware designers may face a choice: make devices that are visible fashion statements, just as cell phones evolved from a tool to a fashion accessory, or pursue invisibility.

How users interact with their AR proxy is another facet of appearances. Talking to yourself is a giveaway, just as using motion to select an option or otherwise interact with your environment might call attention to you. Thus, one engineering and design challenge is to develop a means of interaction with AR that’s at once intuitive and inconspicuous.

How designers meld form and function for AR will be a fascinating challenge. Let’s take a bit of a leap here. Consider the notion of “body hacking,” or implanting devices in the body. Cyborg-like body enhancements could include sensors, even robotics. Sensors might provide biometrics on your blood pressure, your pulse, electroencephalography (EEG) readings, and other indicators for certain applications. Just as AR might read your biometrics, it might also alter or program them through biofeedback. Applications might include sensory manipulations. The eyeglass-frame concept with visual, informational overlays suddenly sounds rather quaint, and it hasn’t hit the market yet.

In the near term, the physical component of AR might be worn as glasses, an ear bud, or even sensors on the body. Some computing, memory, and graphics ability may reside in the device(s), in a computer on your belt, or in the cloud. Wireless connectivity that ultimately reaches the cloud is likely, given the potentially massive amounts of data and processing that various applications may need.

The Internet Of Things

That’s the end-user part of the equation. But the creation, storage, processing, analysis, and communication of the “pertinent information” that is expected to feed AR applications present an entirely different challenge. We’ll need new infrastructure, with massive storage, incredibly fast data flows, new and evolving algorithms for analysis and prediction, and lightning-fast networks. And all this has to happen within a rational business model.

To place AR and its infrastructure into perspective and draw back the curtain on an even broader context, I’d suggest that it fits into the evolving notion of the Internet of Things (IoT), in which every electrically driven device—even all significant objects in our lives, equipped with communicating sensors—has its own IP address. The IoT is the canvas and AR is the paint, with both serving as inextricable and mutually reinforcing technologies. AR will allow us to interact with a sensorially enhanced physical environment, and the IoT imbues the objects in that environment with meaning.

This view has huge implications for the future. If you combine nanotech, AR, robotics, 3D printing, and the IoT and give it a whirl in the blender, the result may be a rough draft of the next generation’s landscape. As these elements evolve together and mature, the terms “Internet of Things” and “augmented reality” will seem quaint and unnecessary. We’ll simply accept this new way of interacting with our environment, with content, and with other people.

The Early Stages

Hardware designers and application developers who are interested in pursuing AR may be curious about the status of the field. Have standards emerged? Is the effort coalescing around any hardware or software platforms or languages?

The development of a “killer app,” for instance, would seem to require a platform first: display parameters, say, and something extensible to other applications. There’s been some open discussion of a reference model for AR software development, but at this stage the field is wide open.

Having the AR field so open is a blessing and, possibly, a curse. The blessing of an immature field like AR is that innovations could come from anywhere. The proverbial guys in a garage stand as much chance as major, established brands whose names we all know. Crowd-sourced contributions will be critical in avoiding a complete takeover of ideas driving this space by a handful of large corporations. Why not unleash everyone’s imagination on a challenge of this nature? Certainly, AR applications can be dreamed up by anyone. It wouldn’t serve the potential of AR for, say, an “IMAX in your face” application to dominate the market while a zillion possibilities are overlooked.

The curse of a greenfield, if there is one, is that a player that develops advanced applications and brings them to market early may have no incentive to join a standards effort and may instead steer the market toward de facto standards. That would be a loss, because standards tend to spur innovation and, certainly, grow markets through economies of scale and wide acceptance and uptake.

Everyday Lives

Earlier, I blithely suggested that we’ll simply accept this new way of interacting with our environment. But will we? Do familiar doubts or concerns nag at you as you consider AR? We’ve been wrestling with too much information for a decade or two. We’re beginning to understand that predictive algorithms can tease out our likely future decisions and behaviors from our past actions. Data privacy and security concerns make the headlines with dismaying regularity.

Factors in the user experience have already arisen, pre-AR. Will a steady flow of pertinent information overlaying my environment be helpful or even tolerable? Many will want to avoid, though some may seek, a sort of “IMAX in your face” effect. An off switch, now and in the future, is a godsend.

And most of us probably wouldn’t enjoy being deprived of life’s unpredictable elements. You don’t want AR to create a feedback bubble that surrounds you with only your own thoughts or predicted behaviors. Designers of the user experience may have to provide a mashup of what’s known of your outlook, preferences, and behaviors and tangentially related but less linear, less predictable elements.

In an AR-enhanced world, our personally identifiable information goes way beyond financial, medical, and legal records to real-time metrics on who we are, where we are, how and what we’re feeling, what we’re doing, and what we’re about to do. This could be useful to us and our closest family and friends. But advertisers would also love to have that data. So might a hacker, an estranged spouse, or the police. If we’re worried about privacy now, how will we deal with that concern in a world in which server farms archive our EEG readings and other biometrics?

Designers and engineers working at this new depth of user experience and external infrastructure will undoubtedly face these issues as AR is realized and brought to market. To gain wide acceptance, those who work on AR will have to resolve most of them, at least to customers’ satisfaction. Today, people consciously or unconsciously decide to let others have their personally identifiable information if the value returned is high enough. When that data extends down to your thoughts and feelings, however, the reaction may be different.

About the Author

Jay Iorio

Technology Strategist

Jay Iorio serves as technology strategist for the IEEE Standards Association. He also serves as the architect and manager of the IEEE Island in Second Life, a test bed for the virtual world. He recently spoke on a panel at the South by Southwest (SXSW) Festival on augmented reality. He can be reached at [email protected].
