Why Optical Circuit Switching is Becoming Essential for AI Data Centers

What You’ll Learn:

- Why traditional electrical switching architectures are struggling to keep pace with AI workloads.

- How optical circuit switching reduces latency, power consumption, and network complexity.

- Why solid-state metasurface beamsteering is a key enabler for scalable optical switching.

- How optical circuit switching integrates with existing data centers.

Not that long ago, scaling an AI cluster meant adding a few hundred accelerators and adjusting the network fabric around them. That no longer matches reality. Today, clusters contain tens of thousands of GPUs, and the largest systems are pushing toward hundreds of thousands.

At that scale, the network becomes a primary determinant of system performance.

The real challenge is architectural. Most data centers still rely on multi-tier electrical switching fabrics like fat-tree or Clos topologies, which worked extremely well for unpredictable, traditional workloads.

AI training workloads behave differently.

They generate steady, high-volume east-west traffic between accelerator groups that must be in sync at every step of the training process. Every hop through an electrical switch introduces latency. Every optical-electrical-optical (OEO) conversion consumes power.

As clusters scale, the energy required to move data across the network has grown from an operational detail into a primary design constraint. In large AI deployments, networking power becomes a meaningful share of the entire system budget.

That reality is prompting a deeper architectural question: Do all of these dataflows really need to pass through multiple packet-processing stages, or is there a more direct way to connect compute resources at scale?

Why Optical Circuit Switching Matters Now

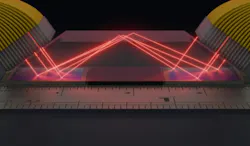

Optical circuit switching (OCS) takes a fundamentally different approach to building large networks (Fig. 1). Instead of routing each packet independently through multiple switching stages, OCS establishes direct optical paths between endpoints. Once that path is formed, traffic flows continuously without repeated packet inspection or buffering along the way.

That matters for AI training, where jobs move massive amounts of data in patterns that repeat predictably thousands of times. Instead of processing every packet along the way, the network can establish a dedicated path for the duration of the job and reconfigure it when workloads change. The result is better bandwidth utilization, lower network oversubscription, and meaningful reductions in energy per bit.
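The idea of a dedicated path that is set up for a job and reconfigured later can be sketched as a one-to-one port map. The following is a minimal illustration only: the `OpticalCrossConnect` class, its methods, and the `ring_circuits` helper are hypothetical names invented for this sketch, not the interface of any real switch, and the ring pattern is just one example of a repeating communication pattern.

```python
class OpticalCrossConnect:
    """Toy model of an N-port optical circuit switch (hypothetical API)."""

    def __init__(self, num_ports):
        self.num_ports = num_ports
        self.circuits = {}  # input port -> output port

    def connect(self, src, dst):
        """Set up one circuit; each input and output carries at most one."""
        for port in (src, dst):
            if not 0 <= port < self.num_ports:
                raise ValueError(f"port {port} out of range")
        if src in self.circuits:
            raise ValueError(f"input port {src} already in use")
        if dst in self.circuits.values():
            raise ValueError(f"output port {dst} already in use")
        self.circuits[src] = dst

    def clear(self):
        """Tear down all circuits, e.g. when the workload changes."""
        self.circuits.clear()


def ring_circuits(ports):
    """Pair each port with its successor so the group forms a ring."""
    return [(ports[i], ports[(i + 1) % len(ports)]) for i in range(len(ports))]


# Assumed example: four accelerator groups attached to ports 0, 2, 4, 6.
ocs = OpticalCrossConnect(num_ports=8)
for src, dst in ring_circuits([0, 2, 4, 6]):
    ocs.connect(src, dst)
print(ocs.circuits)  # {0: 2, 2: 4, 4: 6, 6: 0}
```

Once the map is in place, nothing in the model inspects individual packets. Changing the workload means calling `clear()` and installing a different set of circuits.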

This isn’t new. Optical circuit switching was studied extensively in the early 2000s, often using MEMS mirror arrays to steer light between fiber ports. Those systems had practical challenges that limited widespread deployment: mechanical complexity constrained port counts, manufacturing costs were high, and long-term reliability was a concern. Meanwhile, electrical switching continued to improve rapidly, and OCS remained a niche solution.

A few things have changed over the last several years. AI infrastructure has crossed a threshold where the communication patterns of training workloads routinely stress the network. Power has moved from an operational concern to an architectural constraint. And, critically, solid-state approaches to optical beamsteering have matured to the point where the practical barriers that stalled earlier OCS deployments are now addressable.

>>Check out more of our Sensors Converge 2026 coverage

That convergence is bringing optical circuit switching back into the spotlight, but with a much larger architectural role than originally envisioned.

Rethinking Radix and Network Scale

For decades, the structure of data center networks has largely been dictated by the limits of switching silicon. Individual ASICs could support a certain number of ports (32, 64, perhaps 128), and larger networks were built by stacking these devices into hierarchical layers. As systems scaled, so did the number of tiers. Emerging technologies like metasurface-based solid-state programmable optics challenge that design assumption and open up new architectural possibilities.

When switching fabrics move from hundreds of ports toward thousands, the calculus begins to change. The need for deeply layered hierarchies becomes less obvious, and the architecture of large clusters can start to flatten.

Switching domains with more ports cut down on the number of hops and help lower latency. In some cases, whole layers of packet processing may no longer be needed. Oversubscription, which today is often managed through careful traffic engineering, can instead be addressed through the switching fabric itself.
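To make the relationship between radix and tier count concrete, here is a rough sketch that uses the textbook capacity of an idealized, non-oversubscribed folded Clos: with k-port switches and n tiers it reaches 2·(k/2)^n endpoints, and a worst-case path crosses 2n − 1 switches. The 100,000-endpoint target and the function names are assumptions chosen for illustration. Real fabrics deviate from this model, and an optical switching domain need not be arranged as a Clos at all.

```python
def clos_capacity(radix, tiers):
    """Endpoints reachable by an idealized folded Clos of `radix`-port switches."""
    return 2 * (radix // 2) ** tiers


def tiers_needed(endpoints, radix):
    """Smallest tier count whose idealized capacity covers `endpoints`."""
    if radix < 4:
        raise ValueError("radix must be at least 4 for this model")
    tiers = 1
    while clos_capacity(radix, tiers) < endpoints:
        tiers += 1
    return tiers


def worst_case_hops(tiers):
    """Switches crossed on a path that climbs to the top tier and back down."""
    return 2 * tiers - 1


# Assumed target size, for illustration only.
for radix in (64, 128, 1024):
    t = tiers_needed(100_000, radix)
    print(f"radix {radix}: {t} tiers, up to {worst_case_hops(t)} switch hops")
# radix 64: 4 tiers, up to 7 switch hops
# radix 128: 3 tiers, up to 5 switch hops
# radix 1024: 2 tiers, up to 3 switch hops
```

Under these assumptions, moving from 64 ports to about a thousand removes two tiers and more than halves the worst-case hop count, which is the flattening effect described above.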

At the smaller end of the scale, compact 256 × 256 optical switches could even appear at the rack level. That opens the door to dynamic connectivity inside a rack, allowing accelerator groups to be reorganized in software depending on the workload. At the opposite extreme, very large optical switching domains, potentially reaching 10,000 × 10,000 ports, could serve as reconfigurable backbones for massive AI clusters.

These possibilities go beyond incremental bandwidth improvement, and they introduce new ways of designing large-scale networks.

The Bottleneck is Shifting

Discussions about optical infrastructure often focus on insertion loss and link budgets. Those factors certainly matter, but the constraints shaping AI infrastructure are starting to shift.

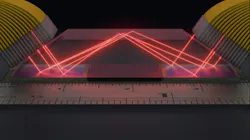

Transceiver technologies are evolving rapidly. Co-packaged optics and next-generation pluggable modules are improving link efficiency and reducing electrical reach limitations (Fig. 2). As link-level efficiency improves, attention naturally moves higher in the system's architecture.

The key questions then become:

- How large can a switching domain realistically become?

- How quickly can connectivity adapt to workload placement?

- How many network tiers are truly necessary?

- How much power can be removed from packet-processing layers that no longer need to exist?

Solid-state programmable optics, particularly approaches based on metasurface beamsteering, begin to address these questions directly. With no moving parts and electronic control of optical paths, they offer better reliability and scalability than earlier mechanical systems.

Just as important are the connectivity patterns that can be defined and redefined in software. Instead of deploying a fixed topology and hoping it fits future workloads, the network fabric can adapt to how compute resources are used.

How Optical Circuit Switching Fits into Real Networks

Rather than replacing packet networks, optical circuit switching complements them in current designs.

Electrical switches still handle short-lived flows, control traffic, and anything that needs fine-grained routing. Optical circuits handle the heavy, continuous data flows that dominate AI training.

This leads to a hybrid fabric where large dataflows bypass congested packet-processing tiers while the existing control plane remains intact. Optical circuits can be coordinated with cluster schedulers or software-defined networking controllers, allowing connectivity to evolve alongside workload placement.
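A controller in such a hybrid fabric has to decide which flows justify an optical circuit. The sketch below shows one simple way that decision could be expressed. The `Flow` fields, the `choose_path` function, and the 1-GB threshold are all hypothetical, chosen only to illustrate the split between short-lived or control traffic and large, sustained transfers. A real scheduler or SDN controller would use its own signals and policies.

```python
from dataclasses import dataclass

# Hypothetical cutoff: flows expected to move at least this much data
# are treated as candidates for a dedicated optical circuit.
CIRCUIT_THRESHOLD_BYTES = 1_000_000_000


@dataclass
class Flow:
    src: str
    dst: str
    expected_bytes: int
    is_control: bool = False


def choose_path(flow):
    """Return 'packet' for control or small flows, 'optical' for bulk transfers."""
    if flow.is_control or flow.expected_bytes < CIRCUIT_THRESHOLD_BYTES:
        return "packet"
    return "optical"


flows = [
    Flow("group-a", "group-b", expected_bytes=50_000_000_000),  # gradient exchange
    Flow("scheduler", "group-a", expected_bytes=4_096, is_control=True),
    Flow("group-b", "storage", expected_bytes=200_000_000),
]
for flow in flows:
    print(flow.src, "->", flow.dst, ":", choose_path(flow))
```

Keeping control traffic on the packet network regardless of size reflects the point that the existing control plane stays intact while only the bulk data takes the optical path.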

This convergence of scheduling, networking, and optics reflects a broader shift in infrastructure design. Networking is starting to respond to workloads, adapting to application behavior rather than relying on static assumptions.

Optical Circuits: Looking Ahead

Optical circuit switching has been understood for decades. What’s changed is the scale of the systems that need it.

As AI clusters grow, long-standing assumptions about network architecture are being revisited. Radix counts that once seemed unrealistic are entering practical system design discussions. Network layers that were historically unavoidable may not need to exist in the same form.

The AI data center of the future may look less like a rigid electrical hierarchy and more like a flexible optical domain, one where connectivity evolves alongside compute rather than constraining it.

In that environment, the network becomes an adaptable infrastructure layer that responds to workload behavior. Optical circuit switching is no longer just an interesting alternative architecture. It’s emerging as a foundation for connecting the largest AI systems.


About the Author

Gleb Akselrod

CTO and Founder, Lumotive

Gleb Akselrod, CTO and Founder of Lumotive, has over 10 years of experience in photonics and optoelectronics. Before Lumotive, he was Director for Optical Technologies at Intellectual Ventures in Bellevue, Wash., leading commercialization of optical metamaterial and nanophotonic technologies.

Previously, he was a postdoctoral fellow at Duke University’s Center for Metamaterials and Integrated Plasmonics, focusing on plasmonic nanoantennas and metasurfaces. He earned his Ph.D. in Physics from MIT in 2013 as a Hertz Foundation and NSF Graduate Fellow, studying excitons in materials for solar cells and OLEDs. Before MIT, he worked at Landauer Inc., developing and patenting a fluorescent radiation sensor now used by the U.S. Army. He holds a B.S. in Engineering Physics from the University of Illinois at Urbana-Champaign, over 30 U.S. patents, and has published 25+ scientific articles.
