Modular Voltage Regulator Brings the Power to Big AI Chips


Modern-day graphics processing units (GPUs) and other AI chips are massive. The unfortunate tradeoff is that they also consume an enormous amount of power, and their power needs are rising exponentially.

The AI boom pushes the limits of traditional power electronics at every level of the data center. But it’s uniquely challenging to deliver such vast amounts of power through the printed circuit board (PCB) to the point-of-load (POL) of the silicon—the “last inch” of the power delivery network (PDN). As peak currents climb to more than 1,000 A, they can lead to unsustainable losses and generate heat that can sap a system’s performance.

In that context, companies on the front lines of power electronics are racing to roll out voltage regulator modules (VRMs) that can supply large amounts of current at the specific voltages required by such loads.

Infineon Technologies is upping its game with a family of high-density dual-phase AI power modules. The cube-shaped DC-DC converters can step down high voltages useful in power distribution in data centers to the very small voltages required by the processor cores at the heart of next-gen AI chips. By itself, the voltage regulator can supply 120 A of peak current per phase with close to 90% efficiency at full load.

But several of the voltage regulators can also be placed together on the circuit board, flanking the CPU, GPU, FPGA, or other high-performance processors from both sides, to sling more than 2,000 A into it.

Infineon said the TDM2254xD unites everything from the power stage—based on its OptiMOS family of MOSFETs—in charge of voltage conversion to the magnetics managing current and heat in the module to the capacitors that condition power before it enters the processor. Integrating all of these components in the same module means it can be placed physically closer to the point of load, reducing parasitic losses.

Infineon introduced the new AI power modules at APEC 2024 in March.

Power Delivery: The Limiting Factor for AI Silicon

In most cases, power delivery is becoming a bottleneck for high-performance AI chips.

Today’s central processing units (CPUs) are far from stingy when it comes to power, requiring a power supply of around 300 W. But the latest AI accelerators are in a different league. NVIDIA's H100 has long been the most power-hungry data-center GPU on the market, with a rated power envelope (TDP) of up to 700 W. To train and execute even more advanced forms of AI, NVIDIA is upping the ante with its upcoming “Blackwell” GPU, which is rated to consume 1,000 W or more.

These AI accelerators are driving up the power demands of data centers by more than 3X. The power required for even a single column of servers is rising to more than 90 kW, up from 15 to 30 kW today.

The challenge is that AI chip designers are also scaling to process nodes as advanced as 5 and 3 nm. As a result, these chips run on relatively small supply voltages even as they consume large amounts of power. The core operating voltages at these process nodes presently stand between 0.75 and 0.85 V. And since power is equal to current times voltage (P = I × V), each of these AI chips can require more than 1,000 A of current that must be thrust into the POL.
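The arithmetic behind that claim can be sketched in a few lines. This is a rough illustration only: it assumes the whole rated power is drawn at the core voltage, which real chips with several supply rails do not do, and the function name `load_current_amps` is made up for the example.

```python
def load_current_amps(power_w: float, core_voltage_v: float) -> float:
    """Current drawn by a load from P = I x V, rearranged to I = P / V."""
    if core_voltage_v <= 0:
        raise ValueError("core voltage must be positive")
    return power_w / core_voltage_v


# Power figures (700 W, 1,000 W) and core voltages (0.75 V, 0.85 V)
# are the ones quoted in the article.
for power_w in (700.0, 1000.0):
    for v_core in (0.75, 0.85):
        amps = load_current_amps(power_w, v_core)
        print(f"{power_w:.0f} W at {v_core:.2f} V -> {amps:.0f} A")
```

Even under this simplification, a 1,000-W chip at 0.75 V lands above 1,300 A.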

For years, the standard DC bus voltage to deliver power to the servers inside a rack was 12 V. But technology companies are upgrading to 48 V to minimize losses in the PDN. For the same power, the current on the bus feeding the final DC-DC converter stage falls by a factor of four. And because resistive losses scale with the square of the current (P = I²R), the cutback in current slashes those losses by 16X.
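A short sketch makes the 4X and 16X figures concrete. The 1,000-W load and the 1-mΩ path resistance are assumed values chosen only for illustration; the ratios do not depend on them.

```python
def bus_current_amps(power_w: float, bus_voltage_v: float) -> float:
    """Current on the distribution bus for a given delivered power."""
    return power_w / bus_voltage_v


def resistive_loss_w(current_a: float, resistance_ohm: float) -> float:
    """Conduction loss in the distribution path: P = I^2 * R."""
    return current_a ** 2 * resistance_ohm


POWER_W = 1000.0           # assumed load power
PATH_RESISTANCE = 0.001    # assumed 1 mOhm of bus resistance (hypothetical)

i_12 = bus_current_amps(POWER_W, 12.0)
i_48 = bus_current_amps(POWER_W, 48.0)
loss_12 = resistive_loss_w(i_12, PATH_RESISTANCE)
loss_48 = resistive_loss_w(i_48, PATH_RESISTANCE)

print(f"12-V bus: {i_12:.1f} A, {loss_12:.2f} W lost")
print(f"48-V bus: {i_48:.1f} A, {loss_48:.2f} W lost")
print(f"current ratio {i_12 / i_48:.0f}X, loss ratio {loss_12 / loss_48:.0f}X")
```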

The final step before power enters the processor is a voltage regulator that steps down 12- or 48-V DC bus voltages to the specific voltages required by the processor’s core, while increasing the current to the required levels. Though the voltage regulator only needs to deliver power a short distance to the AI chip, the PDN is still subject to losses (I²R) stemming from the resistance on the power rail, which can lead to thermal issues. These losses are on top of those owing to parasitic inductance and capacitance.

As the difference widens between the high voltages used to deliver power inside the server and the very small voltages used to run the processor itself, other problems emerge. Since it’s stepping down 48 V (instead of 12 V) to less than 1 V at the processor’s core, the voltage regulator inevitably loses more power in the process. The alternative is to place more than one DC-DC converter stage before the POL.

Since it can only step down input voltages of 4.25 to 16 V to outputs of 0.225 to 3 V, Infineon’s power module can act as a second DC-DC converter stage after a first stage drops the voltage from 48 to 12 V.
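One common textbook reason a large step-down ratio is hard on a single buck stage is the narrow duty cycle it implies. The sketch below uses the ideal (lossless, continuous-conduction) buck relation D = Vout / Vin. The 0.8-V output and 1-MHz switching frequency are assumed values for illustration and are not taken from Infineon's documentation.

```python
def ideal_buck_duty_cycle(v_in: float, v_out: float) -> float:
    """Duty cycle of an ideal buck converter: D = Vout / Vin."""
    if not 0 < v_out <= v_in:
        raise ValueError("a buck stage needs 0 < v_out <= v_in")
    return v_out / v_in


def on_time_ns(v_in: float, v_out: float, f_sw_hz: float) -> float:
    """High-side switch on-time per cycle, in nanoseconds."""
    return ideal_buck_duty_cycle(v_in, v_out) / f_sw_hz * 1e9


V_CORE = 0.8     # assumed core voltage, within the article's 0.75-0.85 V range
F_SW_HZ = 1e6    # assumed switching frequency (hypothetical)

for v_in in (48.0, 12.0):
    duty = ideal_buck_duty_cycle(v_in, V_CORE)
    t_on = on_time_ns(v_in, V_CORE, F_SW_HZ)
    print(f"{v_in:.0f} V -> {V_CORE} V: D = {duty:.2%}, on-time = {t_on:.1f} ns")
```

Under these assumptions, converting directly from 48 V leaves the switch on for a quarter of the time available when converting from 12 V, which is part of why a 48-to-12-V first stage is attractive.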

Voltage Regulators: As Close to the POL as Possible

The most important factor when it comes to AI power delivery is the placement of voltage regulators on the PCB, which influences the amount of resistance on the power rails feeding into the processor’s pins.

But since even a single GPU requires more than 1,000 A of current, figuring out the optimal placement on the PCB is no easy feat. If the VRM itself isn’t placed physically close to the processor’s pins, the current must travel a longer distance on the PCB, leading to larger parasitic losses. The substrate that the processor is placed on can also increase the impedance to a point where it causes further PDN losses.

But placing the voltage regulator too close to the AI chip can cause other problems. The high-bandwidth IO used to connect the AI chips together in large clusters is vulnerable to switching noise that stems from hard-switching buck regulators. The voltage regulators supply large amounts of current that, in turn, can interfere with signals routed to the processor, reducing signal integrity (SI). The problem also rears its head with accelerator cards that unite the CPU and GPU, such as NVIDIA’s Grace Hopper superchip.

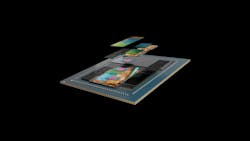

The most widely used arrangement is lateral power delivery (LPD), in which a series of voltage regulators flank the north and south sides or the east and west sides of the processor, feeding power from both sides to distribute it more evenly. But as GPUs and other types of AI silicon consume more and more current, it’s becoming much harder to fit all of the power electronics within the finite real estate on a PCB.

In general, higher currents require more phases. Today, these multiphase DC-DC converters consist of several power stages that regulate the voltage and split the output current among themselves. Even though it’s possible to use a single controller to coordinate them all, the power stages generally must be arranged on the PCB one at a time with the accompanying magnetics and passives. Thus, the more phases required to accommodate the processor's peak current, the larger the DC-DC converter. That limits how closely it can be placed to the point of load.

In most cases, each phase can supply 40 to 60 A of current. Consequently, it can take more than 10 separate phases to support the peak current of even a single AI processor. This is not sustainable as power levels continue to rise.
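The phase count follows from dividing the peak current by the per-phase rating and rounding up. The sketch below uses the article's figures (40 to 60 A for a typical phase, 120 A of peak current per phase for Infineon's module, 1,000-A and 2,000-A loads). It ignores derating and headroom, which a real design would add, and the function names are invented for the example.

```python
import math


def phases_needed(peak_current_a: float, per_phase_a: float) -> int:
    """Minimum number of phases to carry the peak current (no derating)."""
    if per_phase_a <= 0:
        raise ValueError("per-phase current must be positive")
    return math.ceil(peak_current_a / per_phase_a)


def dual_phase_modules_needed(peak_current_a: float, per_phase_a: float) -> int:
    """Number of two-phase modules needed to provide those phases."""
    return math.ceil(phases_needed(peak_current_a, per_phase_a) / 2)


for per_phase in (40.0, 60.0):
    print(f"1,000 A at {per_phase:.0f} A/phase: "
          f"{phases_needed(1000.0, per_phase)} phases")

print(f"2,000 A at 120 A/phase: {phases_needed(2000.0, 120.0)} phases, "
      f"{dual_phase_modules_needed(2000.0, 120.0)} dual-phase modules")
```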

Inside Infineon’s Next-Gen Power Modules for AI

As it turns out, voltage regulators are becoming one of the largest battlegrounds in the world of power electronics. Infineon is trying to stand out from competitors with its new dual-phase power modules.

One of the most notable innovations in the TDM2254xD series is that the power inductor is placed on top of the power stage to transfer heat from the power FET to the heatsink. Placing the magnetics on top of the module maximizes its heat dissipation. The unique architecture of the VRM also pushes the power efficiency to 89% at full load. Furthermore, integrating the power switch in the buck regulator reduces its parasitic impedances, enabling it to run at higher frequencies of up to 2 MHz, according to Infineon.
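To put the efficiency figure in terms of heat, the loss in a converter can be estimated from its output power and efficiency. The sketch below applies the article's 89% figure to an assumed 1,000 W of delivered power. Both the load and the comparison efficiency of 85% are illustrative assumptions and do not describe any particular product.

```python
def conversion_loss_w(output_power_w: float, efficiency: float) -> float:
    """Power dissipated in a converter: P_loss = P_out * (1/eta - 1)."""
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    return output_power_w * (1.0 / efficiency - 1.0)


OUTPUT_POWER_W = 1000.0   # assumed power delivered to the processor

for eta in (0.85, 0.89):  # 0.85 is a hypothetical baseline for comparison
    loss = conversion_loss_w(OUTPUT_POWER_W, eta)
    print(f"eta = {eta:.0%}: about {loss:.0f} W dissipated as heat")
```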

Power inductors are a vital component in any switching voltage regulator. They work with capacitors to smooth the rectangular-wave output from the power FET into a specific DC voltage.
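How much ripple the inductor leaves behind can be estimated with the standard ideal-buck formula for peak-to-peak inductor current in continuous conduction. The 12-V input, 0.8-V output, and 100-nH inductance below are assumed values for illustration only; the 2-MHz switching frequency is the upper figure quoted for the module.

```python
def inductor_ripple_a(v_in: float, v_out: float,
                      inductance_h: float, f_sw_hz: float) -> float:
    """Peak-to-peak inductor current ripple of an ideal buck converter.

    delta_I = Vout * (1 - D) / (L * f_sw), with D = Vout / Vin.
    """
    duty = v_out / v_in
    return v_out * (1.0 - duty) / (inductance_h * f_sw_hz)


V_IN, V_OUT = 12.0, 0.8   # assumed operating point
L_H = 100e-9              # assumed 100-nH inductor (hypothetical)

for f_sw in (1e6, 2e6):
    ripple = inductor_ripple_a(V_IN, V_OUT, L_H, f_sw)
    print(f"{f_sw / 1e6:.0f} MHz: about {ripple:.2f} A peak-to-peak per phase")
```

Doubling the switching frequency halves the ripple for the same inductor, which is one reason higher-frequency operation allows smaller magnetics.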

Dissipating heat is one of the difficulties with power-hungry GPUs and other AI chips. But integrating the inductor in the voltage regulator reduces its operating temperature by 5°C at full load, said Infineon.

In general, AI workloads are highly dynamic, which can lead to high-voltage transients. Overloading the processor with very high voltages, or having the overload occur very suddenly, can disrupt its operation or cause permanent damage. Routing power to limit this is complicated by all of the other parts competing for real estate on the PCB.

Infineon said the TDM2254xD sits on top of a separate substrate that isolates the power FET from the PCB. This reduces the risk of switching voltages that can negatively impact SI and result in noise-coupling.

The dynamics of AI workloads also widen the gap between the peak and idle current consumed by the GPU or other AI processor, imposing high di/dt transients. These sudden swings in current can stress the PDN in a system. The processor’s socket and the parts of the PCB that supply it with power are loaded with capacitors to keep the load current within the ripple envelope. The company said the DC-DC converter also brings a number of capacitors into the fold to help manage the highs and lows of current more effectively.
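The reason di/dt matters is that any inductance in the power path produces a voltage of V = L × di/dt while the current is changing. The sketch below estimates that voltage for a load step. Every number in it (loop inductance, current step, rise time) is a hypothetical value picked only to show the scale relative to a sub-1-V core rail.

```python
def inductive_droop_v(loop_inductance_h: float,
                      current_step_a: float,
                      rise_time_s: float) -> float:
    """Voltage across a parasitic inductance during a current step: V = L * di/dt."""
    if rise_time_s <= 0:
        raise ValueError("rise time must be positive")
    return loop_inductance_h * current_step_a / rise_time_s


CURRENT_STEP_A = 500.0   # assumed idle-to-peak current step
RISE_TIME_S = 1e-6       # assumed 1-microsecond rise time

for l_ph in (10.0, 100.0):   # assumed loop inductance in picohenries
    droop = inductive_droop_v(l_ph * 1e-12, CURRENT_STEP_A, RISE_TIME_S)
    print(f"{l_ph:.0f} pH: about {droop * 1e3:.0f} mV of droop")
```

On a rail near 0.8 V, tens of millivolts is a meaningful share of the supply, which is why decoupling capacitors sit as close to the load as possible.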

In large-scale systems that lash together tens of thousands of AI chips, Infineon claimed the power savings made possible by its new voltage regulator can add up to "megawatts" of electricity over the long term.

The TDM2254xD is housed in 10- × 9- × 8-mm or 10- × 9- × 5-mm packages.

About the Author

James Morra

Senior Editor

James Morra is the senior editor for Electronic Design, covering the semiconductor industry and new technology trends, with a focus on power electronics and power management. He also reports on the business behind electrical engineering, including the electronics supply chain. He joined Electronic Design in 2015 and is based in Chicago, Illinois.
