
Advances in embedded processors have opened the door to bringing more AI capability to the edge. However, one major challenge has emerged: Traditional edge AI solutions, namely graphics processing units (GPUs), field-programmable gate arrays (FPGAs), and application-specific integrated circuits (ASICs), have bounded applicability. Most of them are either limited in flexibility, as with ASICs, or consume too much power, as with many GPUs and FPGAs.

Looking for answers to the shortcomings of existing products, engineers and designers examined the use of embedded processors for both lower-end, resource-constrained consumer products and complex industrial operations. The solution? The TinyEngine neural processing unit (NPU).


NPU 101

NPUs are specialized microchips engineered to efficiently execute AI tasks. They have emerged as crucial technologies, transforming the functionality of embedded systems. In the pursuit of intelligent solutions powered by artificial intelligence and machine learning (AI/ML), NPUs deliver the requisite local, specialized processing capability.

In contrast to general-purpose processors, which handle a broad range of computing tasks, an NPU is designed specifically for the mathematical computations that enable AI functions such as voice recognition, image editing, real-time translation, text generation, and more.

NPUs are becoming ubiquitous in contemporary smartphones, laptops, and tablets. They’re also finding homes in industrial applications like machine vision, predictive maintenance, autonomous robotics, automated guided vehicles (AGVs), smart warehousing, industrial automation and factory control, and medical devices, among others. Many of these devices do most of their processing at the edge.

Key characteristics of neural processing include:

- Extensive parallel processing: This is a core capability of NPUs. They typically contain hundreds, thousands, or even more processing elements (PEs) that operate concurrently. This design suits the highly parallel computations of deep learning.

- Minimal power consumption: For AI workloads, NPUs use much less energy than standard processors.

- Real-time processing: The ability to process data as it arrives is essential for applications such as autonomous driving (collision detection) and industrial automation (factory robotics), where immediate feedback is crucial for decision-making.

- On-device data handling: NPUs can offer better security because the data stays on the device unless directed otherwise. Local processing also uses less bandwidth, is more energy-efficient, and has lower latency, all of which are critical requirements for edge networks.

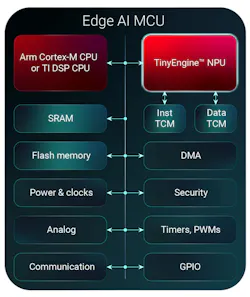

Because so many different NPU designs exist, it’s critical that designers do their homework when evaluating NPUs against their requirements. One such cutting-edge device is Texas Instruments’ TinyEngine NPU. This architecture is integrated into TI’s C2000 and Arm Cortex-based microcontrollers (MCUs) (Fig. 1).

AI at the Edge

Integrating the TinyEngine NPU into these MCUs results in high-performance, power-efficient MCUs with built-in AI acceleration. The NPU enables the MCUs to handle multiple, concurrent AI workloads in applications such as advanced driver-assistance systems (ADAS), infotainment, portable devices, and personal electronics.

Being able to operate at the edge has several advantages. First, since processing is local, reliance on the cloud isn’t a requirement, although cloud connectivity can be configured if needed. Another significant attribute is reduced latency. This is particularly important for applications such as instantaneous decision-making in autonomous vehicles and high-speed camera image analysis.

Equally significant is power consumption. Edge networks must prioritize power efficiency, especially since AI processes can be demanding on resources. Running AI at the edge mandates devices that use minimal energy. This is where NPU-enabled MCUs excel — they reduce footprint, lower power usage, and limit heat generation. Conserving every milliwatt is essential, making NPU-enabled MCUs indispensable across edge networks and devices that run AI locally.
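The link between inference speed and power can be illustrated with simple duty-cycle arithmetic. The sketch below assumes a device that wakes, runs an inference, and returns to a low-power sleep state. Every number in it is a hypothetical placeholder chosen for illustration, not a measurement of any TI device, and the function names are our own.

```python
def energy_per_inference_uj(active_mw, latency_ms):
    """Energy for one inference in microjoules (mW x ms = uJ)."""
    return active_mw * latency_ms


def average_power_mw(active_mw, sleep_mw, latency_ms, inferences_per_s):
    """Average power of a device that sleeps between inferences."""
    # Fraction of each second spent actively computing.
    active_fraction = latency_ms * inferences_per_s / 1000.0
    if active_fraction > 1.0:
        raise ValueError("workload cannot keep up in real time")
    return active_mw * active_fraction + sleep_mw * (1.0 - active_fraction)


# Hypothetical placeholder values, for illustration only.
ACTIVE_MW = 50.0   # power while running inference
SLEEP_MW = 1.0     # power while idle
RATE_HZ = 10.0     # inferences per second

fast = average_power_mw(ACTIVE_MW, SLEEP_MW, latency_ms=2.0, inferences_per_s=RATE_HZ)
slow = average_power_mw(ACTIVE_MW, SLEEP_MW, latency_ms=20.0, inferences_per_s=RATE_HZ)
print(f"2 ms per inference:  {fast:.2f} mW average")
print(f"20 ms per inference: {slow:.2f} mW average")
```

Under these assumed figures, a shorter inference lets the device spend more of each second asleep, so average power falls even though active power is unchanged.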

NPUs are purpose-built devices that run the building blocks of modern neural networks at the edge. They’re specifically designed to run defined operations such as convolutions, matrix multiplications, and activation functions. Run on the main CPU, these operations would consume a large share of its computing power. Because an NPU architecture greatly speeds up inference and uses less power, it’s ideal for edge deployment.
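For readers less familiar with these building blocks, the following minimal sketch shows a convolution, an activation function, and a matrix-vector multiplication in plain Python. It isn't TinyEngine code or any vendor API; the function names, the tiny input, and the weights are all illustrative assumptions. The point is that each operation reduces to many independent multiply-accumulate (MAC) steps, which is the kind of work an NPU can spread across its processing elements.

```python
def conv1d(signal, kernel):
    """Valid-mode 1D convolution (cross-correlation form used in neural networks)."""
    n_out = len(signal) - len(kernel) + 1
    return [
        sum(signal[i + j] * kernel[j] for j in range(len(kernel)))
        for i in range(n_out)
    ]


def relu(values):
    """Activation function: negative values are clamped to zero."""
    return [max(0.0, v) for v in values]


def dense(weights, inputs):
    """Matrix-vector multiply: one output per row of weights."""
    return [sum(w * x for w, x in zip(row, inputs)) for row in weights]


def mac_count(signal_len, kernel_len, dense_rows):
    """Multiply-accumulate operations for one conv layer plus one dense layer."""
    conv_out = signal_len - kernel_len + 1
    return conv_out * kernel_len + dense_rows * conv_out


# Illustrative input and weights (not from any trained model).
signal = [3.0, 1.0, 2.0, 5.0, 4.0]
kernel = [-1.0, 0.0, 1.0]
weights = [[0.5, 0.5, 0.5], [1.0, -1.0, 0.0]]

features = relu(conv1d(signal, kernel))
outputs = dense(weights, features)
print(features, outputs, mac_count(len(signal), len(kernel), len(weights)))
```

Even this toy network needs 15 MACs per inference. Practical models need orders of magnitude more, which is why dedicated parallel hardware pays off.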

Edge AI Use Cases

- Object detection in vision systems: Object detection using cameras is ubiquitous across many sectors of technology: factory automation, home/building security, automotive safety, robotics, and more. Complex object detection requires deep learning for accuracy. At the edge, NPU-based MCUs apply hardware acceleration to make AI-based object detection fast and accurate (Fig. 2).

- Defect detection in factory automation: AI-enhanced edge neural networks are capable of low-latency, accelerated processing. This is ideal for camera-based visual inspection used on assembly lines. The fast processing and low latency, coupled with advanced machine-vision cameras, allow for high-speed scanning and defect detection.

- Low-latency, high-accuracy DC arc detection for solar applications: An edge-based NPU is used to monitor solar-system arcing. Because inference runs locally with low latency, multiple channels can be monitored simultaneously at 10X the speed of traditional CPUs. This allows for millisecond detection of arcs, reducing or preventing solar-system damage.

- Industrial drive and motor control: Local AI-enabled edge networks can monitor AC motor bearings. Real-time monitoring with NPU-enabled MCUs opens the door to fault classification at the motor. Using edge AI, complex vibration patterns can be learned, and with low latency and accelerated processing, potential motor bearing faults can be rapidly identified.
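To make the motor-bearing use case more concrete, here is a rough sketch of windowed vibration analysis. It computes two classic condition indicators, RMS and crest factor, over a block of samples. A hand-set threshold (`crest_limit`, a hypothetical value) stands in for the trained neural network that an NPU-enabled MCU would actually run, and the synthetic signals are made up for illustration.

```python
import math


def rms(window):
    """Root-mean-square amplitude of a window of samples."""
    return math.sqrt(sum(x * x for x in window) / len(window))


def crest_factor(window):
    """Peak-to-RMS ratio; impulsive defects tend to raise it."""
    r = rms(window)
    return max(abs(x) for x in window) / r if r > 0 else 0.0


def classify(window, crest_limit=3.0):
    """Placeholder decision rule; crest_limit is a hypothetical threshold
    standing in for a learned model."""
    return "suspect" if crest_factor(window) > crest_limit else "normal"


# Synthetic data: a clean sine wave, and the same wave with one sharp impulse.
smooth = [math.sin(2 * math.pi * k / 32) for k in range(64)]
impulsive = list(smooth)
impulsive[10] = 8.0

print(classify(smooth), classify(impulsive))
```

In a deployed system, features like these, or the raw sample windows themselves, would typically feed a trained classifier, so that patterns too subtle for a fixed threshold can still be recognized.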

Conclusion

As edge technologies advance and the four domains mentioned above become more homogeneous, edge networks will extend across nearly all segments of technology and industry. The push to do more with less is paramount in today’s compute networking environments.

NPU-enabled MCUs are rapidly becoming the go-to technology in the drive to evolve the edge. Low-power neural networks with accelerated processing enable complex, high-performance, AI-enabled edge networks that hit the sweet spots of power savings, local accelerated processing, real-time functionality, and cost efficiency.
