Electric-vehicle (EV) sales are on the rise. In the U.S., sales of EVs increased 80% in 2018 compared with 2017, reaching more than 2% of total vehicle sales for the first time. Each of those EVs comes with a host of apps and features that let owners manage the car’s charge schedule, precondition its interior for driving, monitor its available range, and access other useful functionality, all of which enhances the appeal of ownership.

For EVs, just as for their internal-combustion-engine (ICE) counterparts, security has been a hot topic ever since Charlie Miller and Chris Valasek rocked the world of automotive embedded software with their paper “Remote Exploitation of an Unaltered Passenger Vehicle.” Buy a new EV today and it will clearly be far safer than a 40-year-old classic ICE car could ever be, not least because it won’t carry highly explosive petrol. However, for the EV to retain its safety advantage over the veteran, its safety-critical systems must be secure.

As the world of smartphones has shown us, Li-ion batteries can also sometimes catch fire and explode—and the hotter they are, the more likely that is to happen. In addition to the threats posed to connected ICE vehicles, EVs suffer an unsavory combination of potential attack vectors exemplified by battery monitoring and charge scheduling apps, and by safety-critical battery-cooling systems and charge-management systems on the vehicles themselves. This makes security for EVs a heightened cause for concern.

Defense in Depth

One of the issues highlighted in Miller and Valasek’s report on the Jeep was that there was no separation between safety-critical domains and those that are more benign. It’s no surprise that separation technologies are now a hot topic in the world of automotive security.

Tesla’s approach to separation in hardware, unlike the technology in the compromised Jeep, was considered state-of-the-art when the Keen Laboratories hacker team created a malicious Wi-Fi hotspot to emulate the Wi-Fi at Tesla’s service centers. When a Tesla connected to the hotspot, its browser was served an infected website created by the hacker team. That initially provided a portal to relatively trivial functions, but safety-critical systems such as braking fell under the hackers’ control once they had replaced the gateway software with their own.

Tesla’s quick response was admirable, and the principle of separation is undoubtedly sound. But as the company’s experience shows, it’s no silver bullet. No connected automotive system is ever going to be both useful and absolutely impenetrable, and no single defense can guarantee that the system will never be breached. It therefore makes sense to protect each part of the system in proportion to the level of risk involved. That means applying multiple levels of security so that if one level fails, others are standing guard.

Examples of such defenses might include:

- Secure boot to make sure that the correct image is loaded

- Domain separation to defend critical parts of the system

- MILS (Multiple Independent Levels of Security) and least-privilege design principles to minimize vulnerability

- Minimization of attack surfaces

- Secure coding techniques

- Security-focused testing

It’s easy to suggest that security should be maximized for every one of these defenses, but much more difficult to finance. However, development effort can be optimized by ensuring that these different lines of defense are complementary, so that the strength of one helps to cover the weakness of another.

Developing Secure Application Code

To illustrate this principle, it’s useful to focus on two such examples: domain separation and secure coding practices.

Domain Separation and High-Risk Areas

It’s not practical to maximize security in every part of every system, especially when (for example) a head unit’s Linux-based OS is involved, complete with massive footprint and unknown software provenance. Focusing attention on the components of the system that are most at risk is more pragmatic, as reflected by the “Threat Analysis and Risk Assessment” process described in SAE J3061. Examples of likely high-risk areas include:

- Files from outside of the network

- Backwards-compatible interfaces with other systems, including old protocols and old code and libraries, which are hard to maintain and test in multiple versions

- Custom APIs that may involve errors in design and implementation

- Security code, including anything to do with cryptography, authentication, authorization (access control), and session management

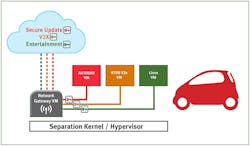

Consider that principle in relation to a system deploying domain separation technology—in this case, a separation kernel or hypervisor (Fig. 1).

Fig. 1. A secure automotive system deploys separation technology.

It’s easy to find examples of high-risk areas specific to this scenario. For instance, consider the gateway virtual machine. How secure are its encryption algorithms? How well does it validate incoming data from the cloud? How well does it validate outgoing data to the different domains?
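As a rough illustration of what validating incoming data at the gateway could look like, the sketch below parses a charge-schedule request and rejects anything outside its expected ranges instead of clamping it. The four-byte wire format, the limits, and every name in it (`charge_request_t`, `parse_charge_request`, and so on) are assumptions invented for this example, not part of any real vehicle interface.

```c
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Hypothetical wire format, invented for this sketch:
 *   byte 0    : start hour (0-23)
 *   byte 1    : target state of charge in percent
 *   bytes 2-3 : maximum charge duration in minutes, big-endian */
#define CHARGE_REQ_LEN      4u
#define SOC_MIN_PCT         20u    /* illustrative limits only */
#define SOC_MAX_PCT         100u
#define MAX_CHARGE_MINUTES  1440u  /* 24 hours */

typedef struct {
    uint8_t  start_hour;
    uint8_t  target_soc_pct;
    uint16_t max_minutes;
} charge_request_t;

/* Returns true and fills *out only when the buffer has exactly the expected
 * length and every field is in range. Nothing is written on failure. */
bool parse_charge_request(const uint8_t *buf, size_t len, charge_request_t *out)
{
    if ((buf == NULL) || (out == NULL) || (len != CHARGE_REQ_LEN)) {
        return false;
    }

    const uint8_t  start_hour = buf[0];
    const uint8_t  target_soc = buf[1];
    const uint16_t minutes =
        (uint16_t)(((unsigned int)buf[2] << 8) | (unsigned int)buf[3]);

    if (start_hour > 23u) {
        return false;
    }
    if ((target_soc < SOC_MIN_PCT) || (target_soc > SOC_MAX_PCT)) {
        return false;
    }
    if ((minutes == 0u) || (minutes > MAX_CHARGE_MINUTES)) {
        return false;
    }

    out->start_hour     = start_hour;
    out->target_soc_pct = target_soc;
    out->max_minutes    = minutes;
    return true;
}
```

Rejecting a malformed request outright, and leaving the output untouched, keeps the gateway from forwarding partially trusted data to a more critical domain.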

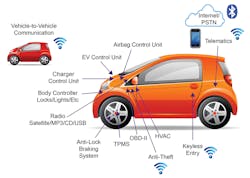

Then there are data endpoints (Fig. 2). Is it feasible to inject rogue data? How is the application code configured to ensure that doesn’t happen?

Fig. 2. A number of automotive attack surfaces and untrusted data sources present security vulnerabilities.
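One way application code can make rogue data harder to inject at an endpoint is a plausibility check. The sketch below, relevant to an EV’s battery-cooling system, accepts a battery temperature sample only if it lies within a physical range and has not jumped implausibly since the last accepted sample. The limits and the names (`temp_filter_t`, `temp_accept`) are illustrative assumptions, not values from any real battery specification, and a check like this complements authentication of the data source; it does not replace it.

```c
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Illustrative limits in tenths of a degree Celsius (assumptions). */
#define TEMP_MIN_DECI_C   (-400)  /* -40.0 C */
#define TEMP_MAX_DECI_C   (1250)  /* 125.0 C */
#define TEMP_MAX_STEP     (50)    /* at most 5.0 C between samples */

typedef struct {
    int16_t last_deci_c;  /* last accepted sample */
    bool    has_last;     /* false until the first sample is accepted */
} temp_filter_t;

/* Returns true if the sample is plausible and records it as the new
 * reference. Implausible samples leave the filter state unchanged. */
bool temp_accept(temp_filter_t *f, int16_t sample_deci_c)
{
    if (f == NULL) {
        return false;
    }
    if ((sample_deci_c < TEMP_MIN_DECI_C) || (sample_deci_c > TEMP_MAX_DECI_C)) {
        return false;
    }
    if (f->has_last) {
        /* Widen to 32 bits so the subtraction cannot overflow. */
        int32_t delta = (int32_t)sample_deci_c - (int32_t)f->last_deci_c;
        if (delta < 0) {
            delta = -delta;
        }
        if (delta > TEMP_MAX_STEP) {
            return false;
        }
    }
    f->last_deci_c = sample_deci_c;
    f->has_last    = true;
    return true;
}
```

In a real system a rejected sample would presumably be reported as a fault, not silently dropped, so that a genuine thermal event is never mistaken for an attack.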

Another potential vulnerability arises because many systems need to communicate across domains. For example, central locking generally belongs to a fairly benign domain. However, in an emergency situation after an accident, it becomes imperative that doors are unlocked, implying communication with a more critical domain. Where such communication between virtual machines is implemented, it bridges the very separation the architecture relies on, so it must be implemented securely.
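A least-privilege way to handle such cross-domain traffic is to deny everything by default and permit only the specific routes the design requires. The following is a minimal sketch under that assumption. The domains, message identifiers, and policy table are hypothetical, and in a system built on a separation kernel the permitted channels would typically also be fixed in the kernel’s own configuration.

```c
#include <stdbool.h>
#include <stddef.h>

/* Hypothetical domains and messages, invented for this sketch. */
typedef enum { DOMAIN_INFOTAINMENT, DOMAIN_BODY, DOMAIN_SAFETY } domain_t;
typedef enum { MSG_LOCK_DOORS, MSG_UNLOCK_DOORS, MSG_APPLY_BRAKES } msg_id_t;

typedef struct {
    domain_t src;
    domain_t dst;
    msg_id_t msg;
} route_t;

/* Allow-list: any route not listed here is denied. */
static const route_t allowed_routes[] = {
    { DOMAIN_SAFETY,       DOMAIN_BODY, MSG_UNLOCK_DOORS }, /* post-crash unlock */
    { DOMAIN_INFOTAINMENT, DOMAIN_BODY, MSG_LOCK_DOORS   }, /* touchscreen lock  */
};

/* Returns true only if the exact (source, destination, message) triple
 * appears in the allow-list. */
bool route_allowed(domain_t src, domain_t dst, msg_id_t msg)
{
    const size_t count = sizeof allowed_routes / sizeof allowed_routes[0];

    for (size_t i = 0u; i < count; i++) {
        if ((allowed_routes[i].src == src) &&
            (allowed_routes[i].dst == dst) &&
            (allowed_routes[i].msg == msg)) {
            return true;
        }
    }
    return false;
}
```

With this policy the safety domain can ask the body domain to unlock the doors after a crash, while a compromised infotainment domain can neither unlock them nor send anything at all to the safety domain.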

With these high-risk software components identified, attention can be focused on the code associated with them. That leaves a system where secure code doesn’t just provide an additional line of defense but actively contributes to the effectiveness of the underlying architecture by reinforcing its weak points.

Optimizing the security of this application code involves the combined contributions of a number of factors, mirroring the multi-faceted approach to the security of the system as a whole.

Secure Coding Practices

The CERT (Computer Emergency Response Team) Division of the Software Engineering Institute (SEI) has nominated a total of 12 secure coding practices, all of which have a part to play in the code for the automotive system outlined in Figure 1. For example:

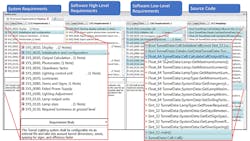

- Practitioners should “Create a software architecture and design [their] software to implement and enforce security policies.” ISO 26262 requires that requirements are specified, and that bidirectional traceability is established between those requirements, software design artefacts, source code, and tests. SAE J3061 suggests extending those principles to include requirements for security alongside requirements for safety, and tools can help ease the resulting administrative headache associated with traceability (Fig. 3).

- Many developers have a tendency to attend only to compiler errors during development, and to ignore the warnings. The warnings should be set at the highest level available and all of them should be attended to. Static-analysis tools are designed to identify additional and more subtle concerns.

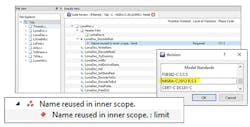

- Code design should be as simple and small as possible. There are many complexity metrics (Fig. 4) to help developers evaluate their code, and automated static-analysis tools help by automatically evaluating those metrics.

- A secure coding standard should be applied. CERT C and MISRA C:2012 are two examples (Fig. 5), despite a common misconception that the latter is designed only for safety-related developments. Its suitability as a secure coding standard was further enhanced by the introduction of MISRA C:2012 Amendment 1 and its 14 additional guidelines, and their recent collation into MISRA C:2012 (3rd Edition, 1st Revision).

Fig. 3. The TBmanager component of the LDRA tool suite automates requirements traceability.

Fig. 4. The LDRA tool suite helps report on complexity and automatically evaluates those metrics.

Fig. 5. MISRA standards checking with a tool suite such as that from LDRA helps ensure both safe and secure coding.
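To give a flavor of what a secure coding standard asks for in practice, the sketch below follows the spirit of CERT C rule INT30-C, which requires that unsigned integer operations do not wrap. It checks a multiplication before performing it, so a hostile or corrupted input cannot silently produce a small, wrong result. The helper names (`checked_mul_u32`, `charge_energy_ws`) and the energy calculation are assumptions made up for illustration.

```c
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

/* Multiplies a by b, refusing to do so if the result would wrap.
 * On failure *result is left unchanged. */
bool checked_mul_u32(uint32_t a, uint32_t b, uint32_t *result)
{
    if (result == NULL) {
        return false;
    }
    /* a * b wraps exactly when b exceeds UINT32_MAX / a (for a != 0). */
    if ((a != 0u) && (b > (UINT32_MAX / a))) {
        return false;
    }
    *result = a * b;
    return true;
}

/* Hypothetical helper: energy delivered in watt-seconds for a charge
 * session at constant power. */
bool charge_energy_ws(uint32_t power_w, uint32_t duration_s, uint32_t *energy_ws)
{
    return checked_mul_u32(power_w, duration_s, energy_ws);
}
```

A 7-kW charge lasting one hour fits comfortably in 32 bits, whereas a request for 100,000 W over 100,000 s is rejected instead of wrapping. Static-analysis tools of the kind described above are designed to flag arithmetic where such a check is missing.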

Conclusions

No connected automotive system is ever going to be both useful and absolutely impenetrable. It makes sense to protect it proportionately to the level of risk involved if it were to be compromised, and that means applying multiple levels of defense so that if one level fails, others are standing guard. EVs have unique systems that can be especially vulnerable, and particular attention should be paid to them.

Domain separation and secure application code provide two examples of these defenses. The effort required to create a system that’s sufficiently secure can be optimized by identifying high-risk elements of the architecture, and applying best-practice secure coding techniques to the application code associated with those elements.

Electric vehicles are just as vulnerable to bad actors as their ICE counterparts, as Tesla’s experience has shown. Effective protection against bad actors is paramount if the trend toward an uptake in EVs is not to be compromised by potential security threats.

Mark Pitchford is Technical Specialist at LDRA.

About the Author

Mark Pitchford has over 25 years’ experience in software development for engineering applications. He has worked on many significant industrial and commercial projects in development and management, both in the UK and internationally. Since 2001, he has worked with development teams looking to achieve compliant software development in safety- and security-critical environments, working with standards such as DO-178, IEC 61508, ISO 26262, IIRA, and RAMI 4.0.

Mark earned his Bachelor of Science degree at Trent University, Nottingham, and he has been a Chartered Engineer for over 20 years. He now works as Technical Specialist with LDRA Software Technology.
