Plugging in Intel’s Neural Compute Stick 2

Intel’s latest Neural Compute Stick (NCS) 2 (Fig. 1) arrived the other day and I was looking forward to plugging it in and seeing how it compared to its earlier incarnation. The original Neural Compute Stick I tried out had a Myriad 2 VPU (vision processing unit). This chip incorporated a pair of RISC CPUs and a dozen specialized 128-bit VLIW SHAVE (Streaming Hybrid Architecture Vector Engine) vector processors designed for machine-learning (ML) inference chores with a bent toward image processing. The chip has a dozen MIPI lanes for camera inputs, but these weren’t exposed on the original stick.

1. Intel’s Neural Compute Stick 2 plugs into a USB 3 socket and sports a Movidius Myriad X chip.

The NCS 2 also plugs into a USB 3 socket, but it has the newer Myriad X chip. This chip is found in a number of commercial drones that are moving toward obstacle avoidance and object recognition.

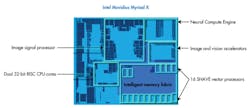

The Myriad X VPU (Fig. 2) includes the same type of VLIW SHAVE processors, but it bumps the number up to 16. It also has the same 32-bit RISC processors. The number of MIPI lanes is increased to 16, too, allowing for support of eight HD cameras. However, like the original stick, version 2 doesn’t expose these to the outside world. All data passes through the USB connection.

2. The Myriad X ups the number of MIPI camera lanes and the number of SHAVE VLIW processors to 16.

The Myriad X can deliver 4 TOPS of performance, with the DNN engine contributing a quarter of that. The hardware accelerators include things like hardware encode and decode of H.264 and Motion JPEG, as well as a warp engine for handling a fish-eye lens, dense optical flow, and stereo depth perception. The latter can manage 720p streams at 180 frames/s.
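To put those figures in perspective, here is a quick back-of-the-envelope calculation. It only uses the numbers quoted above, plus the assumption that "720p" means a 1280 × 720 frame; the variable names are illustrative.

```python
# Figures quoted in the article.
TOTAL_TOPS = 4.0          # overall Myriad X throughput
DNN_SHARE = 0.25          # "a quarter of that" comes from the DNN engine
STEREO_FPS = 180          # stereo depth frame rate

# Assumption: 720p is taken to mean 1280 x 720 pixels per frame.
WIDTH, HEIGHT = 1280, 720

dnn_tops = TOTAL_TOPS * DNN_SHARE
pixels_per_frame = WIDTH * HEIGHT
pixels_per_second = pixels_per_frame * STEREO_FPS

print(f"DNN engine share: {dnn_tops} TOPS")
print(f"Stereo depth pixel rate: {pixels_per_second / 1e6:.1f} Mpixels/s")
```

In other words, the DNN engine accounts for roughly 1 TOPS, and the stereo depth block would be chewing through about 166 million pixels every second under that frame-size assumption.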

A 450-GB/s intelligent memory fabric and 2.5 MB of on-chip memory form a multiport memory system linked to all major functions on the chip. This minimizes the data movement that’s often required on other systems.

OpenVINO

The Myriad X and NCS 2 are supported by Intel’s OpenVINO toolkit. OpenVINO, which stands for Open Visual Inference and Neural network Optimization, is an open-source project. It includes the Deep Learning Deployment Toolkit and Open Model Zoo. The latter is a repository for pre-trained models and demos that are also provided as part of Intel’s own distribution of OpenVINO.

Intel’s OpenVINO support targets not only the NCS 2, but also FPGA, CPU and GPU machine-learning solutions. It can be used with models from a number of ML frameworks that have also been used to create demos.

Intel provides a Windows and Linux implementation for the NCS 2. I tried both and there’s really little difference between the two other than the installation. The demos are typically command-line-oriented and include pre-trained models or sample input data. Some utilize input streams from a USB camera with the video being piped over the USB link to the NCS 2.

The support is more extensive than when I looked at the original NCS, and the install is a bit more polished. Of course, the performance is significantly better with the Myriad X. The Python APIs also have better documentation. The latest OpenVINO release works with the NCS and NCS 2.
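For a flavor of what the Python side looks like, here is a minimal sketch of loading a model onto a stick. It assumes an OpenVINO release whose Python package exposes `openvino.inference_engine.IECore` with `read_network` and `load_network` (releases from around 2020 and 2021 do; other versions differ). The helper names and the file paths passed in are hypothetical.

```python
def find_myriad_devices(available_devices):
    """Return the device names that belong to the MYRIAD plugin.

    Assumption: sticks show up either as plain "MYRIAD" or as
    "MYRIAD.<something>" when more than one is attached.
    """
    return [d for d in available_devices
            if d == "MYRIAD" or d.startswith("MYRIAD.")]


def load_on_stick(model_xml, model_bin):
    """Load an IR model (XML + BIN pair) onto the first stick found."""
    # Imported here so the helper above can be used without OpenVINO installed.
    from openvino.inference_engine import IECore

    ie = IECore()
    sticks = find_myriad_devices(ie.available_devices)
    if not sticks:
        raise RuntimeError("No Neural Compute Stick detected")

    net = ie.read_network(model=model_xml, weights=model_bin)
    return ie.load_network(network=net, device_name=sticks[0])


if __name__ == "__main__":
    # Pure-Python check of the filtering logic; no hardware needed.
    print(find_myriad_devices(["CPU", "GPU", "MYRIAD"]))
```

Because the same MYRIAD plugin drives both generations of the stick, the sketch does not need to know whether an NCS or an NCS 2 is plugged in.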

As with the NCS, getting the system up and running with the demos is relatively simple. Though the steps are easy to follow, there’s not always a description of the how or why, so new users will have a bit of a learning curve to tackle once they get past the initial demos. Extending them tends to be easy, but coming up with new models and using them is a bit more involved even when working with models from supported frameworks like Caffe and TensorFlow.

For those who were using the original NCS SDK, a very good article to check out is “Transitioning from Intel Movidius Neural Compute SDK to Intel Distribution of OpenVINO toolkit.” This is also a good overview of the NCS 2, including how to employ multiple NCS 2 devices on a single system. In addition, the article has links to using the system with virtual machines and Docker images.
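One way to use several sticks at once is OpenVINO's MULTI device, which takes a comma-separated list of device names. The sketch below only builds that device string; the function name and the example device names are assumptions for illustration, and the resulting string would be passed as `device_name` when loading a network.

```python
def multi_device_name(available_devices):
    """Build a device string that spreads work across all attached sticks.

    Assumption: each stick is reported with a name starting with "MYRIAD".
    """
    sticks = [d for d in available_devices if d.startswith("MYRIAD")]
    if not sticks:
        raise ValueError("No MYRIAD devices in the list")
    if len(sticks) == 1:
        # A single stick does not need the MULTI wrapper.
        return sticks[0]
    return "MULTI:" + ",".join(sticks)


if __name__ == "__main__":
    # Hypothetical device list, as might be reported with two sticks attached.
    devices = ["CPU", "MYRIAD.1.2-ma2480", "MYRIAD.1.4-ma2480"]
    print(multi_device_name(devices))
```

Keeping the string construction separate from the OpenVINO calls makes it easy to check without any hardware attached.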

One nice thing about the move to OpenVINO is that it adds MXNet, Kaldi, and ONNX support to the SDK’s Caffe and TensorFlow support. ONNX stands for Open Neural Network Exchange format. It allows models to be moved among frameworks.
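Models from any of these frameworks go through OpenVINO's Model Optimizer before they can run on the stick. The sketch below assembles a command line for it, assuming the classic `mo.py` script and its `--framework`, `--input_model`, `--data_type`, and `--output_dir` options. The suffix-to-framework table, the function name, and the file names are illustrative assumptions, and FP16 is chosen because the Myriad plugin runs half-precision models.

```python
from pathlib import Path

# Assumption: a simple guess at the source framework from the file suffix.
FRAMEWORK_BY_SUFFIX = {
    ".caffemodel": "caffe",
    ".pb": "tf",
    ".params": "mxnet",
    ".nnet": "kaldi",
    ".onnx": "onnx",
}


def model_optimizer_args(model_path, output_dir="ir"):
    """Return an argument list for converting a model to FP16 IR files."""
    suffix = Path(model_path).suffix.lower()
    framework = FRAMEWORK_BY_SUFFIX.get(suffix)
    if framework is None:
        raise ValueError(f"Unrecognized model file type: {suffix!r}")
    return [
        "python3", "mo.py",
        "--framework", framework,
        "--input_model", str(model_path),
        "--data_type", "FP16",
        "--output_dir", output_dir,
    ]


if __name__ == "__main__":
    # Hypothetical model file; print the command instead of running it.
    print(" ".join(model_optimizer_args("resnet50.onnx")))
```

The list form can be handed to `subprocess.run` once the path to `mo.py` matches the local installation.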

It definitely helps to know Python, as that seems to be the language of choice for neural-network frameworks. Intel’s Developer Zone has lots of useful articles that are cross-linked, but there’s not a centralized spot to work from. Still, the site has many articles, such as “Optimize Networks for the Intel Neural Compute Stick 2 (Intel NCS 2),” that provide in-depth details about how to program the NCS 2.

I didn’t try the NCS 2 on the Raspberry Pi 3, but it will work with other platforms that run Ubuntu and have USB 3 support.

About the Author

William G. Wong

Senior Content Director - Electronic Design and Microwaves & RF

I am Editor of Electronic Design focusing on embedded, software, and systems. As Senior Content Director, I also manage Microwaves & RF and I work with a great team of editors to provide engineers, programmers, developers and technical managers with interesting and useful articles and videos on a regular basis. Check out our free newsletters to see the latest content.

I earned a Bachelor of Electrical Engineering at the Georgia Institute of Technology and a Masters in Computer Science from Rutgers University. I still do a bit of programming using everything from C and C++ to Rust and Ada/SPARK. I do a bit of PHP programming for Drupal websites. I have posted a few Drupal modules.

I still get hands-on with software and electronic hardware. Some of this can be found on our Kit Close-Up video series. You can also see me on many of our TechXchange Talk videos. I am interested in a range of projects from robotics to artificial intelligence.
