EEMBC is the premier benchmarking group for embedded applications. They provide a range of benchmarks from floating-point tests to ultra-low-power (ULP) tests.

I talked with Markus Levy, EEMBC President, about their latest benchmark, IoTMark-BLE.

Wong: Can we start off this interview with a short description of your new IoTMark-BLE benchmark?

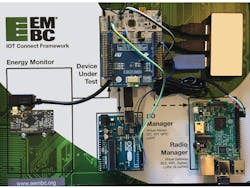

Levy: Sure, Bill. In short, the EEMBC IoTMark-BLE is both a benchmark and an analysis tool that measures the energy used by the system while it performs relevant real-world tasks. The system includes the MCU, the Bluetooth Low Energy radio, and the protocol stack. The benchmark and tool are built on an interconnected, flexible HW+SW framework that includes an EnergyMonitor to measure energy; a Radio Manager to coordinate the communication with the device under test (DUT); and an IO Manager to synchronize activities and to simulate a sensor input on the DUT’s I2C.

Wong: What do you mean that it’s both a benchmark and analysis tool?

Levy: Benchmarking is our primary goal in serving EEMBC’s members; therefore, as a benchmark, the IoTMark-BLE is used for competitive evaluation of MCUs, RF modules, and other system components. Hopefully we’ll soon start seeing these industry-standard results appear in datasheets, similar to what we’ve seen with our other benchmarks such as CoreMark and ULPBench.

As a tool, this benchmark’s framework allows IoT device designers to change parameters and experiment with the options that provide the best energy efficiency or battery life. Just as a simple example, for a specific implementation, the designer may discover that sending 20 bytes of data once per second is more efficient than sending 10 bytes of data twice per second. There are a host of other parameters that can be changed; I’ve included a list of these (where the numbers in brackets are [min value, max value]):

- # of bytes written during BLE notify [1, 20]

- # of bytes written during BLE command [1, 20]

- # of bytes read by I2C [1, 4096]

- Advertise interval [20ms, 10s]

- Connection interval [7.5ms, 4s]

- Server (DUT) wakeup interval [3.75ms, 6s]

- Client (Radio Manager) wakeup interval [500ms, 6s]
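The ranges in the list above can be captured as a small configuration check. This is only a sketch: the `BenchmarkConfig` class, its field names, and its default values are hypothetical and are not part of the EEMBC tool; only the [min, max] limits come from the list.

```python
from dataclasses import dataclass

# Allowed [min, max] ranges copied from the list above; intervals are in seconds.
LIMITS = {
    "notify_bytes": (1, 20),
    "command_bytes": (1, 20),
    "i2c_read_bytes": (1, 4096),
    "advertise_interval_s": (0.020, 10.0),
    "connection_interval_s": (0.0075, 4.0),
    "server_wakeup_s": (0.00375, 6.0),
    "client_wakeup_s": (0.5, 6.0),
}


@dataclass
class BenchmarkConfig:
    """Hypothetical parameter set; the defaults are illustrative only."""
    notify_bytes: int = 20
    command_bytes: int = 20
    i2c_read_bytes: int = 1024
    advertise_interval_s: float = 0.1
    connection_interval_s: float = 1.0
    server_wakeup_s: float = 1.0
    client_wakeup_s: float = 1.0

    def out_of_range(self):
        """Return the names of all fields that fall outside their allowed range."""
        return [
            name
            for name, (lo, hi) in LIMITS.items()
            if not lo <= getattr(self, name) <= hi
        ]
```

A designer experimenting with payload size or wakeup intervals could call `out_of_range()` before a run to catch a setting the framework would not accept.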

Wong: EEMBC has typically produced benchmarks based purely on software; for example, CoreMark. Why is a complex framework required for this new benchmark?

Levy: The short answer is that this is a system benchmark. A system benchmark requires hardware interaction, especially one in which radio transmit and receive are used. In our case, we used a Raspberry Pi board with a BLE shield as the Radio Manager to keep costs down. The tricky part is coordinating the separate pieces of hardware used for the radio function and the IO functions, and syncing the timing window for the energy measurement. It’s been incredibly complex getting this all to work properly and, most important for benchmarking, repeatably.

The setup for the IoTMark-BLE benchmark requires an IO Manager, Radio Manager, and energy measuring device. All components are integrated into a seamless user interface.

Wong: With the availability of multitudes of IoT communication protocols, why did EEMBC target BLE?

Levy: First of all, I don’t think there’s any doubt that BLE is one of the most popular protocols for low-power IoT devices, so it was no surprise that the working group members voted to use it. However, the key lies in what I said in answer to the previous question about the flexible framework: it will easily allow us to develop a similar testing method for 802.11ah (Wi-Fi HaLow), Thread, LoRa, or another protocol. As a matter of fact, that’s our plan, but the members have to vote on which protocol to implement next.

Wong: How did EEMBC choose the specific parameters for this BLE benchmark?

Levy: The idea was to model the BLE usage for a variety of applications, such as health monitors, home automation devices, and wearable devices. Each of these applications can vary a range of parameters including payload size, frequency of payload transmission, and transmit power. Bluetooth also has a lengthy specification for the Generic Attribute Profile (GATT) that defines how two BLE devices transfer data back and forth using concepts called Services and Characteristics. For our benchmark, we wanted to stay as generic and simple as possible while keeping the test real world.

Wong: So then, what are the specific parameters in this EEMBC methodology?

Levy: The easiest way to answer this is to go through the basic workflow of the benchmark. The test starts off with the device under test (DUT) waking up every 1000 ms and reading 1 kB of data over I2C from the IO Manager. This simulates a device reading from a sensor. The DUT then performs a low-pass filter (LPF) on this data and uses a BLE notification model to send the processed LPF data to the Radio Manager.
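The interview does not say which low-pass filter the benchmark firmware applies. As a minimal sketch of the filtering step, assuming a first-order IIR filter (an exponential moving average) with a hypothetical smoothing factor `alpha`, the processing of the sensor samples might look like this:

```python
def low_pass(samples, alpha=0.25):
    """First-order IIR low-pass filter (exponential moving average).

    This is an assumed filter type, not the one specified by EEMBC.
    Smaller alpha values smooth more heavily.
    """
    if not samples:
        return []
    out = [float(samples[0])]  # seed the filter with the first sample
    for x in samples[1:]:
        # Move the previous output a fraction alpha toward the new sample.
        out.append(out[-1] + alpha * (x - out[-1]))
    return out
```

For example, `low_pass([0, 8], alpha=0.25)` moves a quarter of the way from 0 toward 8 on the second sample.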

Because the BLE characteristic only has 20 bytes available for data, we only send one packet; otherwise we'd have to wait another 1000 ms to send another one and the test would have moved on. Some hardware can burst a few packets per interval, but we do not code for that provision, in order to keep the test consistent.

From here, the DUT goes back to sleep. Meanwhile, both the notify and the write are queued by their respective BLE stacks, and the DUT wakes up to read the data sent to it by the Radio Manager, performs a CRC, and returns to sleep. Functionally, the CRC is an unnecessary step, but we include it because this is a benchmark and it provides a way to do some basic authentication that the benchmark was run correctly.
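The CRC variant used by the benchmark is not named in the interview. Purely as an illustration of the check, the sketch below assumes CRC-16/CCITT-FALSE (polynomial 0x1021, initial value 0xFFFF), a variant common on small MCUs; the function name is hypothetical.

```python
def crc16_ccitt(data: bytes, crc: int = 0xFFFF) -> int:
    """Bitwise CRC-16/CCITT-FALSE over data (assumed variant, see above)."""
    for byte in data:
        crc ^= byte << 8  # fold the next byte into the high half of the register
        for _ in range(8):
            if crc & 0x8000:
                # Top bit set: shift left and apply the polynomial.
                crc = ((crc << 1) ^ 0x1021) & 0xFFFF
            else:
                crc = (crc << 1) & 0xFFFF
    return crc
```

A host-side tool could run the same function over the bytes it sent and compare the result with the value the DUT reports, which is one way such a CRC can confirm that the run processed the expected data.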

All of the above steps are repeated 10 times. Then we take the average energy consumption of each cycle, plug it into a formula, and calculate the score. The tests will also give a breakdown of energy consumed for each step, as this will allow a designer to analyze just the cost of doing the BLE transmission, for example.
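The averaging step can be sketched as follows. The scoring formula itself is not given in the interview, so it is left out here. The function names, and the assumption that the energy monitor delivers evenly spaced current samples in microamps at a fixed supply voltage, are hypothetical.

```python
def cycle_energy_uj(current_ua, supply_v, dt_s):
    """Energy of one cycle in microjoules.

    Assumes evenly spaced current samples (in microamps), a constant
    supply voltage (in volts), and a sample period dt_s (in seconds):
    E = V * sum(I) * dt.
    """
    return supply_v * sum(current_ua) * dt_s


def average_energy_uj(cycle_energies):
    """Mean energy per cycle across all measured cycles."""
    return sum(cycle_energies) / len(cycle_energies)
```

The same two functions could be applied to the samples of a single step, such as the BLE transmission alone, to obtain the kind of per-step breakdown described above.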

Wong: Drawing from the adage “if you build it, they will come,” do you really believe that the microcontroller vendors will adopt the IoTMark-BLE methodology?

Levy: Good question, because this new benchmark is our most complex one to date. The interest has been extremely high, so it’s just a matter of time. At this point, we have been doing development of the firmware and extensive testing using the STM32L476 Nucleo and BlueNRG shield, as well as Silicon Labs and NXP devices. The real question is which vendor will be first to publish results, thereby becoming a target for all others.

Whenever a new benchmark comes out, there’s always a period of time in which members are figuring out how to optimize or at least ensure they are getting the best results possible. This is especially important for an ultra-low-energy test, where you’re not only checking that the software is optimized, but also checking for any unnecessary power draws and for proper sleep-mode transitions. Furthermore, because the benchmark uses BLE, one must also ensure that all extraneous RF interference is eliminated or minimized.

About the Author

Markus Levy

Director of Machine Learning Technologies, NXP Semiconductors

Markus Levy joined NXP in 2017 as the Director of AI and Machine Learning Technologies. In this position, he is focused primarily on the technical strategy, roadmap, and marketing of AI and machine learning capabilities for NXP's microcontroller and i.MX applications processor product lines. Previously, Markus was chairman of the board of EEMBC, which he founded and ran as the President since April 1997. Mr. Levy was also president of the Multicore Association, which he co-founded in 2005.

Before that, he was senior analyst at Microprocessor Report and an editor at EDN magazine. Markus began his career at Intel Corp., as both a senior applications engineer and customer training specialist for Intel's microprocessor and flash-memory products. Markus volunteered for 13 years as a first responder—fighting fires and saving lives.