Speed Machine-Learning Accelerators with Flexible Chip Interconnect

Arteris is known for its network-on-chip (NoC) interconnects (Fig. 1). Arteris IP can handle on-chip as well as off-chip traffic, including emerging interface protocols like Cache Coherent Interconnect for Accelerators (CCIX). Its platforms are used in applications that target everything from autonomous vehicles to sensor fusion.

1. Arteris’ NoC interconnects are available in different configurations that support caching as well as non-cached applications.

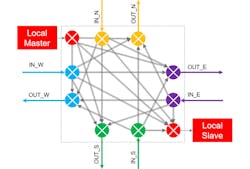

Its new FlexNoC 4 with AI Package is a flexible chip-interconnect system designed to speed up machine-learning accelerators. It can address topologies such as meshes, rings, and tori, providing predictable data flow across the chip. The new development GUI allows topologies to be generated automatically (Fig. 2).

2. FlexNoC-generated routers are optimized, but developers can customize switches, FIFOs and other router elements.

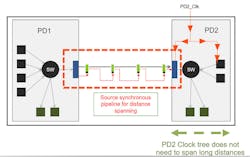
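To make the topology choices concrete, here is a minimal Python sketch that builds mesh, torus, and ring connectivity and measures the worst-case hop count between any two nodes. It is an illustration only and has nothing to do with how the FlexNoC tools generate topologies; the function names `build_topology` and `max_hops` are hypothetical.

```python
from collections import deque

def build_topology(kind, cols, rows=1):
    """Return {node: set_of_neighbors} for a 'ring', 'mesh', or 'torus'.

    Hypothetical helper for illustration; not part of any Arteris tool.
    """
    if kind not in ("ring", "mesh", "torus"):
        raise ValueError(f"unknown topology: {kind}")
    adj = {}
    if kind == "ring":
        n = cols * rows
        for i in range(n):
            adj[i] = {(i - 1) % n, (i + 1) % n}
        return adj
    wrap = kind == "torus"  # a torus is a mesh with wraparound links
    for y in range(rows):
        for x in range(cols):
            nbrs = set()
            for dx, dy in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                nx, ny = x + dx, y + dy
                if wrap:
                    nx, ny = nx % cols, ny % rows
                elif not (0 <= nx < cols and 0 <= ny < rows):
                    continue  # mesh edges have no link past the boundary
                if (nx, ny) != (x, y):
                    nbrs.add((nx, ny))
            adj[(x, y)] = nbrs
    return adj

def max_hops(adj):
    """Worst-case hop count between any two nodes (breadth-first search)."""
    worst = 0
    for src in adj:
        dist = {src: 0}
        queue = deque([src])
        while queue:
            u = queue.popleft()
            for v in adj[u]:
                if v not in dist:
                    dist[v] = dist[u] + 1
                    queue.append(v)
        worst = max(worst, max(dist.values()))
    return worst

for kind in ("mesh", "torus", "ring"):
    print(kind, max_hops(build_topology(kind, 4, 4)))
```

For 16 nodes, the wraparound links of the torus shorten the longest path compared with the plain mesh, while the ring has the longest worst-case path of the three.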

FlexNoC 4 can handle long-distance communication on large chips thanks to source-synchronous communications (Fig. 3) and virtual channel links (VC-links). Source-synchronous communication sends the clock along with the data, so the clock trees of the source and destination can be kept independent. Keeping the two in sync across a large chip is difficult at best.

3. Source-synchronous communications brings its own clock along with data so that the clock tree of the source and destination can be independent of each other.

VC-links work like VLAN channels on a network. A single pipe carries multiple data streams that enter at one end and emerge at the other end as their individual streams. The system supports up to 31 pipes per virtual link. Arbitration can optionally include bubble-prevention mechanisms that favor sending whole packets. VC-links can be applied selectively, only where they are needed.
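The idea of several streams sharing one pipe can be sketched in a few lines of Python. This is a simplified model, not Arteris' arbitration logic: it assumes round-robin selection among virtual channels, non-empty packets, and that a packet holds the link until its last flit is sent (one simple way to favor whole packets). The function name `vc_link_transfer` is hypothetical.

```python
from collections import deque

def vc_link_transfer(streams):
    """Send packets from several virtual channels over one shared link.

    streams: {vc_id: [packet, ...]}, where each packet is a non-empty list
    of flits. Returns (wire, received): wire is the ordered list of
    (vc_id, flit, is_tail) tuples on the shared link, and received is the
    per-channel packet list rebuilt at the far end.
    """
    queues = {vc: deque(packets) for vc, packets in streams.items()}
    order = deque(queues)  # round-robin order of channel ids
    wire = []
    while any(queues.values()):
        vc = order[0]
        order.rotate(-1)
        if not queues[vc]:
            continue  # this channel has nothing to send right now
        packet = queues[vc].popleft()
        # Whole-packet arbitration: the link is held until the tail flit.
        for i, flit in enumerate(packet):
            wire.append((vc, flit, i == len(packet) - 1))

    # Far end: demultiplex by channel id and cut packets at tail flits.
    received = {vc: [] for vc in streams}
    partial = {vc: [] for vc in streams}
    for vc, flit, is_tail in wire:
        partial[vc].append(flit)
        if is_tail:
            received[vc].append(partial[vc])
            partial[vc] = []
    return wire, received

streams = {0: [["a0", "a1"], ["a2"]], 1: [["b0", "b1", "b2"]]}
wire, received = vc_link_transfer(streams)
print([vc for vc, _, _ in wire])
print(received == streams)
```

Each stream comes out the other end intact and in order, even though both shared the same physical link.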

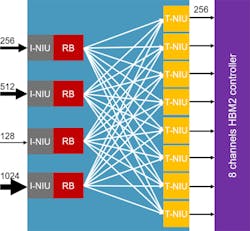

The system is also designed to support high-bandwidth applications with bus widths up to 1,024 bits. This includes support for interleaved memory access and High Bandwidth Memory 2 (HBM2) (Fig. 4). There can be 8- or 16-channel interleaving between initiators (I-NIU) and targets (T-NIU) using reorder buffers (RBs). The system can handle data-width conversions as well as manage traffic aggregation. The system will eventually support a memory scheduler.

4. FlexNoC can support HBM2 and multichannel memory with bus widths up to 1,024 bits.
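Channel interleaving and reorder buffers can be illustrated with a short Python sketch. The mapping below is a common textbook scheme, not the actual FlexNoC address map: the 256-byte granule and the function names `interleave` and `reorder` are assumptions made for this example.

```python
def interleave(addr, n_channels=8, granule=256):
    """Map a byte address to (channel, address_within_channel).

    Consecutive granule-sized blocks rotate across the channels, so a
    long sequential access spreads evenly over all of them. The granule
    size is an assumption for illustration.
    """
    if n_channels not in (8, 16):
        raise ValueError("this sketch models 8- or 16-channel interleaving")
    block = addr // granule
    channel = block % n_channels
    local = (block // n_channels) * granule + addr % granule
    return channel, local

def reorder(responses):
    """Release out-of-order (sequence_number, data) responses in order.

    Channels answer at different times, so a reorder buffer holds early
    arrivals until every lower sequence number has been released.
    """
    pending = {}
    next_seq = 0
    released = []
    for seq, data in responses:
        pending[seq] = data
        while next_seq in pending:
            released.append(pending.pop(next_seq))
            next_seq += 1
    return released

for addr in (0, 256, 2048, 2053):
    print(addr, interleave(addr))
print(reorder([(1, "b"), (0, "a"), (2, "c")]))
```

With eight channels, addresses 0 and 2048 land on the same channel one granule apart, while address 256 goes to the next channel.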

FlexNoC will support data paths up to 2,048 bits. Buffering allows the system to cope with data-rate mismatches. It also supports an intelligent multicast system that manages the hierarchical fanout so that a broadcast can be replicated as close as possible to the destinations. A posted write to a broadcast station will distribute the data to multiple destinations.
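The benefit of replicating a broadcast close to its destinations can be estimated with a small Python sketch. It assumes a simple tree of routers described by a parent map, and compares how many link crossings are needed when the source sends one copy per destination versus when each link carries the data once and routers replicate it. The tree, node names, and function names are hypothetical.

```python
def path_to_root(node, parent):
    """List the (upstream, downstream) links between a node and the root."""
    links = []
    while parent[node] is not None:
        links.append((parent[node], node))
        node = parent[node]
    return links

def link_traversals(parent, destinations):
    """Return (unicast_count, multicast_count) of link crossings.

    Unicast: the source sends a separate copy to every destination.
    Multicast: each link carries the data once, and routers replicate it
    at the last possible branch point.
    """
    unicast = 0
    used_links = set()
    for dest in destinations:
        path = path_to_root(dest, parent)
        unicast += len(path)
        used_links.update(path)
    return unicast, len(used_links)

# Hypothetical two-level router tree feeding four processing elements.
parent = {
    "src": None,
    "r0": "src",
    "r1": "r0", "r2": "r0",
    "pe0": "r1", "pe1": "r1",
    "pe2": "r2", "pe3": "r2",
}
print(link_traversals(parent, ["pe0", "pe1", "pe2", "pe3"]))
```

In this small tree, the shared links near the source carry the data once instead of four times, which is the saving a hierarchical fanout is after.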

All this sounds good in general, but why is the new platform especially good for AI application hardware? High bandwidth is one aspect; efficient routing is another. That’s because machine-learning accelerators usually aren’t one large island of computing. Rather, they’re many islands that need to exchange data efficiently, including the weights for neural networks. Arteris’ network makes this more efficient and easier to design.

About the Author

William G. Wong

Senior Content Director - Electronic Design and Microwaves & RF

I am Editor of Electronic Design focusing on embedded, software, and systems. As Senior Content Director, I also manage Microwaves & RF and I work with a great team of editors to provide engineers, programmers, developers and technical managers with interesting and useful articles and videos on a regular basis. Check out our free newsletters to see the latest content.

You can send press releases for new products for possible coverage on the website. I am also interested in receiving contributed articles for publishing on our website. Use our template and send to me along with a signed release form.

Check out my blog, AltEmbedded on Electronic Design, as well as my latest articles on this site.

I earned a Bachelor of Electrical Engineering at the Georgia Institute of Technology and a Master's in Computer Science from Rutgers University. I still do a bit of programming using everything from C and C++ to Rust and Ada/SPARK. I do a bit of PHP programming for Drupal websites. I have posted a few Drupal modules.

I still get hands-on with software and electronic hardware. Some of this can be found in our Kit Close-Up video series. You can also see me in many of our TechXchange Talk videos. I am interested in a range of projects from robotics to artificial intelligence.