PCI Express Is Handling More than Just Peripherals


PCI Express (PCIe) Gen 3 is the mainstay interconnect for microprocessors. It scales by adding lanes, typically in an x1, x2, x4, x8, and x16 progression. Processor chips may use anywhere from one to more than two dozen lanes, depending on the bandwidth a particular application needs.
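As a rough illustration of how lane count scales bandwidth, the sketch below assumes the published Gen 3 figures of 8 GT/s per lane and 128b/130b line encoding, neither of which is stated in this article. The function name is made up for this example, and the result ignores packet and protocol overhead, so real throughput will be lower.

```python
# Approximate one-direction PCIe Gen 3 bandwidth, before packet overhead.
GEN3_TRANSFER_RATE = 8e9    # assumed: 8 GT/s per lane, one bit per transfer
GEN3_ENCODING = 128 / 130   # assumed: 128b/130b line encoding


def gen3_bandwidth_gbytes(lanes):
    """Return approximate one-direction bandwidth in Gbytes/s for a Gen 3 link."""
    payload_bits_per_second = GEN3_TRANSFER_RATE * GEN3_ENCODING * lanes
    return payload_bits_per_second / 8 / 1e9


if __name__ == "__main__":
    # Walk the usual lane progression and print the estimate for each width.
    for lanes in (1, 2, 4, 8, 16):
        print(f"x{lanes:<2} {gen3_bandwidth_gbytes(lanes):6.2f} Gbytes/s")
```

Under these assumptions a single lane works out to a little under 1 Gbyte/s in each direction, and the estimate grows linearly with the lane count.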

The high-speed serial PCIe interface superseded the parallel PCI bus as the foremost peripheral interface, although even PCI's predecessor, ISA, is still in use. Access to peripherals such as Ethernet adapters remains a focus for PCIe, but it can also serve as a multiple-node interconnect fabric and as the access mechanism for solid-state storage through the Non-Volatile Memory Express (NVMe) protocol.

NVMe is a storage protocol that runs on top of PCI Express and defines its own command set, whereas SAS (Serial Attached SCSI) carries the SCSI command set over a physical interface that is compatible with SATA (Serial Advanced Technology Attachment). In general, the two are similar in that commands and operations are queued to provide more efficient throughput between the storage device and the host. NVMe can handle other storage technologies, but for now it is primarily used with NAND flash memory, including 3D NAND flash.
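The queuing idea can be sketched with a toy model. NVMe pairs submission queues with completion queues; the class, field, and method names below are invented for illustration and do not mirror the actual NVMe data structures or any driver API.

```python
from collections import deque, namedtuple

# Hypothetical command record: an ID chosen by the host, an operation, and a block address.
Command = namedtuple("Command", ["cid", "opcode", "lba"])


class ToyQueuePair:
    """Toy stand-in for one submission/completion queue pair."""

    def __init__(self, depth):
        self.depth = depth          # maximum number of outstanding submissions
        self.submission = deque()   # host -> device
        self.completion = deque()   # device -> host

    def submit(self, cmd):
        """Host side: queue a command, or return False if the queue is full."""
        if len(self.submission) >= self.depth:
            return False
        self.submission.append(cmd)
        return True

    def device_process(self):
        """Device side: drain queued commands and post one completion per command.

        This toy keeps FIFO order; a real device may complete out of order.
        """
        while self.submission:
            cmd = self.submission.popleft()
            self.completion.append((cmd.cid, "success"))

    def reap(self):
        """Host side: collect and clear all posted completions."""
        done = list(self.completion)
        self.completion.clear()
        return done


if __name__ == "__main__":
    qp = ToyQueuePair(depth=4)
    for i in range(3):
        qp.submit(Command(cid=i, opcode="read", lba=i * 8))
    qp.device_process()
    print(qp.reap())
```

The point of the sketch is that the host can post several commands before the device handles any of them, which is what lets the device work through a batch rather than one request at a time.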

NVMe storage devices can be placed on the motherboard or attached in a variety of ways. An NVMe PCI Express card is one option. Another is the M.2 NVMe module form factor, such as Micron’s 512-Gbyte unit (Fig. 1), which uses 3D NAND and an x4 PCI Express interface. M.2 sockets are becoming more common on motherboards. They suit embedded applications because the modules are more rugged, and they let developers select the amount of storage an application needs. The M.2 form factor also supports USB and SATA interfaces, with keyed sockets so that only matching modules can be plugged into a board.
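One way to check what link a module actually negotiated is to read the PCI attributes that Linux exposes through sysfs. The sketch below assumes a Linux host and the `current_link_speed` and `current_link_width` attribute files; the function name and the device address in the comment are placeholders, not values from this article.

```python
from pathlib import Path


def read_pcie_link(device_dir):
    """Return the negotiated link speed and width strings for one PCI device.

    device_dir is a sysfs directory such as /sys/bus/pci/devices/0000:01:00.0
    (placeholder address). Attributes that are missing come back as None.
    """
    result = {}
    for attr in ("current_link_speed", "current_link_width"):
        path = Path(device_dir) / attr
        # Read the raw string as reported by the kernel; no parsing is attempted.
        result[attr] = path.read_text().strip() if path.is_file() else None
    return result
```

An x4 module that trained at full width would be expected to report a width of 4. The format of the speed string varies between kernel versions, so the sketch returns it unparsed.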

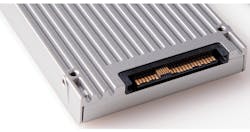

On the enterprise side, the U.2 drive module (Fig. 2) is becoming more popular. The connector supports a range of interfaces, including an x4 PCI Express interface to handle NVMe as well as multichannel SAS and SATA. These modules are designed for hot-swap operation and are found in systems that may have anywhere from a half dozen to hundreds of slots. Such systems take advantage of PCI Express switches so that one or more hosts can access the drives.

PCI Express fabrics have been used to link multiple hosts together, as in Dolphin’s PCI Express solutions. These consist of a PCI Express switch and PCI Express host adapters that can be cabled to the switch. One system is designed to run a version of Linbit’s DRBD, which replicates disk storage. Of course, the hosts could also use a PCI Express interface to reach NVMe storage.

PCI Express has also been used to link other devices together. For example, some GPGPUs can use their PCI Express interface to communicate with other systems over Ethernet that supports remote DMA (RDMA), using a protocol called GPUDirect. This configuration is useful in supercomputing clusters where GPGPUs are located on different nodes within the system. The approach can also be used with other interconnects such as InfiniBand.

PCI Express can be used with a single-root complex host to interface with peripherals, but these days it can do much more.


About the Author

William G. Wong

Senior Content Director - Electronic Design and Microwaves & RF

I am Editor of Electronic Design focusing on embedded, software, and systems. As Senior Content Director, I also manage Microwaves & RF and I work with a great team of editors to provide engineers, programmers, developers and technical managers with interesting and useful articles and videos on a regular basis. Check out our free newsletters to see the latest content.


I earned a Bachelor of Electrical Engineering at the Georgia Institute of Technology and a master's in computer science from Rutgers University. I still do a bit of programming using everything from C and C++ to Rust and Ada/SPARK. I also do some PHP programming for Drupal websites and have posted a few Drupal modules.

I still get hands-on time with software and electronic hardware. Some of this can be found in our Kit Close-Up video series. You can also see me in many of our TechXchange Talk videos. I am interested in a range of projects from robotics to artificial intelligence.