This file type includes high resolution graphics and schematics when applicable.

The data center industry has become one of the world’s largest consumers of electricity. Accordingly, Yole Développement recently published a report entitled “New Technologies and Architectures for Efficient Data Center,” which examines several possible scenarios for the evolution of these centers’ energy consumption.

And make no mistake: Evolution is a necessity. “In the actual scenario, with an average Power Usage Efficiency (PUE) of 1.8, worldwide data center energy consumption will reach 507.9 TWh by 2020,” explains Mattin Grao Txapartegy, a technology & market analyst at Yole.

With that in mind, here are five major tools that will help create more efficient data centers:

1. Energy Efficiency Metrics

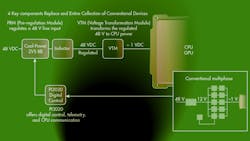

To understand a data center's energy use, the industry needs to measure and communicate it with common energy-efficiency metrics, such as Power Usage Effectiveness (PUE) and Data Center Infrastructure Efficiency (DCiE). Although not yet formal standards, both help analyze how energy is used:

- Power Usage Effectiveness is an index defined by The Green Grid that measures how efficiently a data center uses energy; specifically, how much of its energy reaches the computing equipment. PUE is the ratio of the total energy used by a data center facility to the energy delivered to its computing equipment. PUE values typically fall on a scale from 1 to 4, with 1 being very efficient and 4 very inefficient. Anything in a data center that isn't considered a computing device (lighting, cooling, etc.) falls into the category of facility energy consumption.

PUE = Total Facility Power [kW]/IT Equipment Power[kW]

Total Facility Power is the power measured at the utility meter, while IT Equipment Power represents the load of the IT equipment.

- Data Center Infrastructure Efficiency, another index defined by The Green Grid, is the reciprocal of PUE. It is expressed as a percentage: divide the total power of the IT equipment by the total power entering the data center, then multiply by 100.

DCiE = IT Equipment Power[kW]/Total Facility Power [kW] * 100%
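As a minimal sketch, the two formulas above can be written as small Python functions. The function names and the 1,800-kW and 1,000-kW readings are illustrative assumptions, not values from any real facility.

```python
def pue(total_facility_kw: float, it_equipment_kw: float) -> float:
    """Power Usage Effectiveness: total facility power over IT equipment power."""
    if it_equipment_kw <= 0:
        raise ValueError("IT equipment power must be positive")
    if total_facility_kw < it_equipment_kw:
        raise ValueError("total facility power cannot be less than IT power")
    return total_facility_kw / it_equipment_kw


def dcie(total_facility_kw: float, it_equipment_kw: float) -> float:
    """Data Center Infrastructure Efficiency in percent (reciprocal of PUE)."""
    return 100.0 / pue(total_facility_kw, it_equipment_kw)


# Hypothetical readings: 1,800 kW at the utility meter, 1,000 kW of IT load.
example_pue = pue(1800.0, 1000.0)
example_dcie = dcie(1800.0, 1000.0)
print(f"PUE = {example_pue:.2f}, DCiE = {example_dcie:.1f}%")
```

With these assumed readings, the facility draws 1.8 W at the meter for every watt delivered to IT equipment, so a little over half of the incoming power reaches the IT load.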

In reality, not all of the power entering the data center is used to operate the IT loads. There are losses in the power system that must not be ignored. In addition, power is consumed by the data center's support infrastructure. The support infrastructure elements include power transformers, uninterruptible power supplies (UPSs), generators, computer room air conditioners (CRACs), remote transmission units (RTUs), chillers, heating, ventilation, and air conditioning (HVAC) systems, and video surveillance systems.

An ideal PUE value of 1.0 is difficult to reach (Fig. 1). Uptime Institute’s 2014 Data Center Industry Survey concluded that the average PUE value for its respondents’ largest facilities is 1.7. The Green Grid recommends using annualized energy consumption figures in the calculation, so that a facility's PUE is not skewed by measurements taken only during periods when its IT equipment is running at full capacity. According to The Green Grid, the most likely measurement point for IT equipment power would be at the output of the computer room power distribution units (PDUs).
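The effect of annualizing can be sketched as follows. The twelve monthly energy readings are invented for illustration, and the helper name `annualized_pue` is an assumption, not a Green Grid definition.

```python
# Hypothetical monthly energy readings in MWh (illustrative values only).
total_facility_mwh = [620, 580, 600, 590, 640, 700, 760, 770, 690, 620, 590, 610]
it_equipment_mwh = [360, 340, 355, 350, 360, 370, 380, 385, 370, 360, 345, 355]


def annualized_pue(total_mwh, it_mwh):
    """PUE computed from energy summed over the whole reporting period."""
    if len(total_mwh) != len(it_mwh):
        raise ValueError("both series must cover the same months")
    return sum(total_mwh) / sum(it_mwh)


# Per-month PUE values, for comparison with the annualized figure.
monthly_pue = [t / i for t, i in zip(total_facility_mwh, it_equipment_mwh)]
annual_pue = annualized_pue(total_facility_mwh, it_equipment_mwh)

print(f"best month:  {min(monthly_pue):.2f}")
print(f"worst month: {max(monthly_pue):.2f}")
print(f"annualized:  {annual_pue:.2f}")
```

In this made-up data set the monthly values swing with the seasons, and the annualized figure sits between the best and worst months, which is why a single favorable snapshot can misrepresent a facility.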

2. Power Conversion Architectures

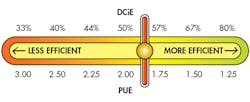

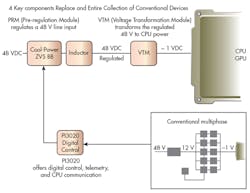

Two-stage power conversion (48 V to 12 V to load) has been a very common architecture for powering CPUs and GPUs in data centers. Single-stage power conversion (48 V to point-of-load [POL]) offers another option. Compared with two-stage conversion, it reportedly eliminates one conversion loss and reduces distribution power loss by a factor of 16 in a rack implementation.
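The factor of 16 is consistent with simple resistive (I²R) loss: for the same delivered power, a 48-V bus carries one quarter of the current of a 12-V bus, and loss scales with the square of the current. The source does not give this derivation; the sketch below assumes the same load power and the same distribution-path resistance at both voltages, and the 1-kW load and 1-mΩ resistance are hypothetical.

```python
def distribution_loss_w(load_power_w: float, bus_voltage_v: float,
                        path_resistance_ohm: float) -> float:
    """Resistive loss in the distribution path: P_loss = I^2 * R, with I = P / V."""
    current_a = load_power_w / bus_voltage_v
    return current_a ** 2 * path_resistance_ohm


LOAD_POWER_W = 1000.0        # hypothetical load
PATH_RESISTANCE_OHM = 0.001  # hypothetical bus resistance (1 milliohm)

loss_12v = distribution_loss_w(LOAD_POWER_W, 12.0, PATH_RESISTANCE_OHM)
loss_48v = distribution_loss_w(LOAD_POWER_W, 48.0, PATH_RESISTANCE_OHM)

print(f"12 V bus loss: {loss_12v:.3f} W")
print(f"48 V bus loss: {loss_48v:.3f} W")
print(f"ratio: {loss_12v / loss_48v:.1f}")
```

The ratio depends only on the voltage ratio squared, (48/12)² = 16, so it holds for any load power and path resistance under these assumptions.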

Google announced at the Open Compute Project (OCP) Summit that it has been using a 48-V to point-of-load design for the last several years. The design does not require special safety precautions, and Google found that it was at least 30% more energy efficient and more cost effective than a two-stage power conversion design. Since joining OCP, Google has been promoting single-stage power conversion (48 V to point-of-load) and working toward a rack standard that suppliers could use in the future. Here are two of the latest solutions on the market:

Vicor Corporation has been supporting the data center infrastructure recently highlighted by Google for some years already. Vicor’s latest generation of 48-V Direct-to-PoL modules integrates non-isolated Buck-Boost Pre-Regulator modules (PRMs; e.g., I3751-00) and Voltage Transformation Modules (VTMs; e.g., VTM48KP020x095BA0; Fig. 2). Vicor’s technology takes the regulation, isolation, and voltage transformation functions of a typical dc-dc converter and separates them into individual elements. The PRM acts as a high-efficiency step-up/step-down voltage regulator. The VTM, in contrast, acts as a high-efficiency voltage transformation unit at the point of load while providing isolation from input to output.

In addition, STMicroelectronics has developed a fully isolated digital power architecture for direct conversion from 48 V to any point of load. This three-chip solution (comprising the STRG06, STRG02, and STRG04) is fully compliant with Intel’s VR12.5 (Haswell and Broadwell), VR13 (Skylake), and DDR3/4 voltage-regulation specifications, and it also targets field-programmable gate arrays (FPGAs) and application-specific integrated circuits (ASICs) in data center applications.

3. Power Supply

An uninterruptible power supply (UPS) is a system that provides backup electricity to IT systems. It contains an energy storage system, which supplies power to the load when utility power is unavailable. Traditionally, data centers draw power from the grid. Microsoft previously claimed that in a traditional data center (approx. 25 MW), the UPS and battery equipment room footprint accounts for 150,000 ft², or approximately 25% of the total facility footprint.

Last year, Microsoft (which has been designing, building, and operating data centers for over two decades) donated a distributed uninterruptible power supply (UPS) technology called Local Energy Storage (LES) to the Open Compute Project. LES offers an integrated power supply and battery that completely eliminates the facility UPS and moves that capability directly into the IT load. Moving the energy storage close to the server eliminates up to 9% of the losses associated with conventional UPS systems, resulting in an up-to-15% improvement in data center PUE while shrinking the data center footprint.

An example of such a power supply is the one just released by Artesyn Embedded Technologies. This 1600-W power supply (HS-OCS Series) is designed for hyperscale data centers, like those using the Open Compute Project specifications (Fig. 3).

4. Lighting Systems

Light-emitting diodes (LEDs) are another tool that can help improve the efficiency of a data center. They consume less energy, generate less heat, and are more durable than traditional incandescent and halogen technologies.

The Telecommunications Industry Association (TIA) Standard for Data Centers, ANSI/TIA-942-A, recommends that data center operators implement LED lighting within their facilities. However, the focus is not solely on changing the light source. LED lighting systems are now available that provide light only when and where it is needed, further improving the efficiency of a data center.

For example, the OCP electrical specification (Data Center v1.0) used in the design of Facebook’s innovative and energy-efficient data center uses LED lighting throughout the data center interior. In the innovative power-over-Ethernet LED lighting system, each fixture has an occupancy sensor with local manual override and programmable alerts via flashing LEDs.

Ethernet LED lighting is based on Power over Ethernet (PoE). It can provide data centers with intelligent lighting capabilities by integrating sensors for motion detection, light-level sensing, and energy metering. Because LED lighting is low power and low voltage, the fixtures can be powered by a basic Ethernet cable rather than an electrical power line.

Data centers are starting to adopt this technology. For example, Long Island, N.Y.-based mindSHIFT Technologies has installed Power over Ethernet LED lighting across 40,000 square feet of data center and office space, where it hopes the technology will lead to 70% less energy consumption than ordinary LED lighting systems. mindSHIFT Technologies, Inc. selected SmartCast Power over Ethernet (PoE) LED lighting by Cree, Inc., integrated with a Cisco network.

Several lighting companies offer Ethernet LED solutions, including Philips, Ellipz lighting, Cree, Innovative Lighting, Eaton, Molex, Orion Energy Systems, Platformics, and NuLEDs.

5. Power Software

Data center virtualization can reduce cost as well as power, cooling, and hardware requirements, resulting in greener data centers. In addition, a new approach called Software Defined Power promises a more power-efficient data center infrastructure. One company behind it, Virtual Power Systems (VPS), was recently selected as a “2016 TiE50 Top Start-up” at the TiE50 Technology Awards Program. VPS promises to help optimize capacity utilization, control performance, and reduce total cost of ownership (TCO) with its software-defined power product, Intelligent Control of Energy (ICE).

VPS ICE intelligently and dynamically allocates power to racks, branch circuits, and IT nodes, with constant awareness of power consumption needs across the data center topology. CUI has partnered with VPS to build a larger Software Defined Power ecosystem, from board level to system level, with the aim of setting a new standard for efficient power infrastructure and ultimately creating a more intelligent, more efficient data center.
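To make the idea of software-driven power allocation concrete, here is a minimal sketch of one generic policy: scaling rack power caps proportionally when demand exceeds a shared budget. This is not the ICE algorithm, whose internals the source does not describe; the function name, rack names, and wattages are all hypothetical.

```python
def allocate_power(demands_w: dict[str, float], budget_w: float) -> dict[str, float]:
    """Return a per-rack power cap that never exceeds the shared budget.

    If total demand fits within the budget, every rack gets what it asks for.
    Otherwise each request is scaled down by the same factor (proportional capping).
    """
    if budget_w < 0 or any(d < 0 for d in demands_w.values()):
        raise ValueError("budget and demands must be non-negative")
    total_demand_w = sum(demands_w.values())
    if total_demand_w <= budget_w:
        return dict(demands_w)
    scale = budget_w / total_demand_w
    return {rack: demand * scale for rack, demand in demands_w.items()}


# Hypothetical branch circuit with a 9-kW budget and 12 kW of requested power.
demands = {"rack-a": 6000.0, "rack-b": 4000.0, "rack-c": 2000.0}
caps = allocate_power(demands, budget_w=9000.0)
for rack, cap in caps.items():
    print(f"{rack}: {cap:.0f} W")
```

A production system would presumably also weigh workload priority, stored energy, and circuit topology; proportional scaling is used here only because it is the simplest policy that respects a budget.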

Conclusions

The data center industry will keep growing as demand for data processing increases, and its energy consumption will grow along with it. It is important to understand the environmental impact of data centers and to recognize that there are excellent options on the market for improving energy efficiency, not only for the economic benefits but also for the high potential to reduce carbon footprint.

References

- Open Compute Project Data Center v1.0

- "1600 W OCS Data Sheet" by Artesyn Embedded Technologies

- "Factorized Power Architecture and VI Chips" by Vicor

- "Power Equipment and Data Center Design" by The Green Grid



About the Author

Maria Guerra

Power/Analog Editor

Maria Guerra is the Power/Analog Editor for Electronic Design. She is an Electrical Engineer with an MSEE from NYU Tandon School of Engineering. She has a very solid engineering background and extensive experience with technical documentation and writing. Before joining Electronic Design, she was an Electrical Engineer for Kellogg, Brown & Root Ltd (London, U.K.). During her years in the oil and gas industry, she was involved in a range of projects for both offshore and onshore designs. Her technical and soft skills bring a practical, hands-on approach to the Electronic Design team.