The explosion of mobile video, mobile broadband, and the Internet of Things (IoT) has led to millions of new flows traversing the mobile network, challenging mobile operators to support this influx of data on their already taxed networks. At the same time, mobile operators are looking to the success of the cloud in enterprise data centers as a model for what virtualized technologies can achieve in the telecom space—namely, economies of scale, scalability, and reduced CapEx and OpEx.


However, the telecom cloud is not the same as the IT cloud. The telecom industry’s demanding requirements for five-nines availability, scalability, reliability, and complex networking must still be met, which calls for a supplementary approach. In addition, operators want to leverage the significant investment they’ve already made in their telecom infrastructure.

Two key technologies are being pursued in tandem to deliver scalability and performance associated with the telecom cloud: software-defined networking (SDN) and network functions virtualization (NFV). SDN involves the decoupling of the control plane from the data plane, allowing the centralization of the management (orchestration) plane. NFV separates the application from the underlying hardware to enable better utilization of server resources.

Seen as one of the most disruptive technologies to arrive in the market in recent years, SDN transforms the way networks are managed, controlled and utilized. With its ability to dynamically respond to the migration, spin up, and spin down of application instances, SDN provides a powerful complement to NFV’s ability to maximize the utilization of available server resources.

What is SDN?

Let’s start with a simplified answer to the above question: SDN offers empowerment, presenting mobile operators with the opportunity to take control of the networking resources needed to get subscriber packets to the application instances designated to process them.

SDN opens up an opportunity that hasn’t been available before. Network elements have traditionally been configured by typing esoteric commands into a command-line interface, a manual process that is prone to error and cannot keep pace with real-time changes. SDN instead presents an API that is consistent across the industry, so applications can be written to configure networks on the fly and respond to shifts in service demand in real time.

The SDN Model

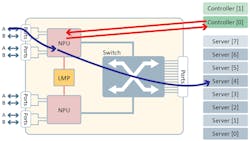

The SDN model features three tiers (Fig. 1). In the first tier, dubbed “orchestration,” resides the business logic to manage services across the network domain. The second tier, the controller, is responsible for configuring the various network devices to ensure that application traffic gets delivered to the desired services. The third tier depicts the nodes as the forwarding engines—the network elements responsible for the forwarding of traffic.
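As a rough illustration of how the three tiers relate, the sketch below models each tier as a small Python class. All class names, method names, the service label, and the "drop" default for unmatched traffic are hypothetical choices made for this example; they are not taken from any SDN product or standard.

```python
from typing import Dict, List


class Node:
    """Tier 3: a forwarding engine that only applies rules it has been given."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.rules: Dict[str, str] = {}

    def install(self, service: str, action: str) -> None:
        self.rules[service] = action

    def forward(self, service: str) -> str:
        # Assumption for this sketch: traffic with no matching rule is dropped.
        return self.rules.get(service, "drop")


class Controller:
    """Tier 2: turns a service request into per-device configuration."""

    def __init__(self, nodes: List[Node]) -> None:
        self.nodes = nodes

    def apply(self, service: str, target: str) -> None:
        for node in self.nodes:
            node.install(service, f"forward:{target}")


class Orchestrator:
    """Tier 1: holds the business logic and hands decisions to the controller."""

    def __init__(self, controller: Controller) -> None:
        self.controller = controller

    def deploy(self, service: str, target: str) -> None:
        self.controller.apply(service, target)


edge = Node("edge-1")
orchestrator = Orchestrator(Controller([edge]))
orchestrator.deploy("video", "cache-pool")
video_action = edge.forward("video")
unknown_action = edge.forward("unknown")
```

The point of the layering is that the node never decides anything by itself: every rule it holds originated with orchestration and reached it through the controller.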

The key benefit of the SDN model is the separation of network control from the packet-handling elements. This promises to enable a diverse and competitive ecosystem of SDN-based products to revolutionize how networks are built. Of course, making SDN work effectively at very high data rates and in an environment where a huge number of new flows are being encountered every second spawns a number of challenges.

Typical SDN Handling for a New Flow

The standard philosophy of SDN is that when a new flow arrives at the forwarding element, the packet will be held while a decision is reached about how to handle the new flow. A notification, such as an OpenFlow packet-in message, is constructed by the agent managing the network processing unit’s (NPU) tables and is sent to the registered controller function. The controller now has to apply some logic and determine how best to handle the packet. It may apply some Layer 7 deep-packet-inspection (DPI) inference rules to determine the nature of this new flow, or it may make a decision based upon fairly simplistic logic.

In any event, the controller needs to construct a command message to create a rule for the new flow and instruct the SDN agent to update the NPU’s tables accordingly. Once provided with the rule to apply to this flow, the NPU will update its flow table and forward the packet as instructed.
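The sequence described above can be sketched in a few lines of Python. This is a simplified model under stated assumptions, not an OpenFlow implementation: the `Controller` and `ForwardingElement` classes, the 5-tuple flow key, and the port-selection logic are all hypothetical stand-ins for the controller function, the SDN agent with its NPU tables, and the "fairly simplistic logic" mentioned earlier.

```python
from dataclasses import dataclass
from typing import Dict, Tuple

# Flow key as a 5-tuple: (src_ip, dst_ip, src_port, dst_port, protocol).
FlowKey = Tuple[str, str, int, int, str]


@dataclass(frozen=True)
class Packet:
    key: FlowKey


class Controller:
    """Hypothetical stand-in for the remote controller function."""

    def __init__(self) -> None:
        self.packet_ins = 0  # how many new-flow notifications were received

    def on_packet_in(self, key: FlowKey) -> int:
        self.packet_ins += 1
        # Illustrative rule only: web traffic goes to port 1, the rest to port 2.
        dst_port = key[3]
        return 1 if dst_port in (80, 443) else 2


class ForwardingElement:
    """Hypothetical stand-in for the SDN agent plus the NPU flow table."""

    def __init__(self, controller: Controller) -> None:
        self.controller = controller
        self.flow_table: Dict[FlowKey, int] = {}

    def receive(self, pkt: Packet) -> int:
        out_port = self.flow_table.get(pkt.key)
        if out_port is None:
            # Table miss: the packet waits while the controller decides,
            # then the returned rule is installed in the flow table.
            out_port = self.controller.on_packet_in(pkt.key)
            self.flow_table[pkt.key] = out_port
        return out_port


controller = Controller()
element = ForwardingElement(controller)
web = Packet(("10.0.0.1", "192.0.2.10", 40000, 443, "tcp"))
first = element.receive(web)   # new flow: controller is consulted
second = element.receive(web)  # known flow: served from the flow table
```

Only the first packet of the flow pays for the trip to the controller; in a real deployment that trip crosses a network, which is where the delay comes from.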

However, this rather convoluted approach isn’t ideal: the round trip to the controller adds considerable latency before the packet can be forwarded, which degrades the subscriber’s quality of experience. It is therefore critical to determine the action for a packet within a timeframe commensurate with the packet’s expected end-to-end latency.

Furthermore, when considering a network element capable of supporting 400 Gb/s of throughput, it’s likely that the rate at which new flows are encountered would swamp any controller function if it had to determine the handling for each new flow. In some deployments, the rate could approach 100,000 new flows per second. At that rate, a controller handling flows one at a time would have, on average, just 10 µs (1 s ÷ 100,000) to fully determine and configure the correct handling for each one.
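The per-flow time budget follows directly from the new-flow rate. The short calculation below reproduces the 10 µs figure and, for contrast, shows the rate a controller could sustain if each decision took 1 ms. The 1 ms value is a purely hypothetical round-trip time chosen for illustration, and both helper functions assume flows are handled strictly one at a time.

```python
MICROSECONDS_PER_SECOND = 1_000_000


def per_flow_budget_us(new_flows_per_second: float) -> float:
    """Average time available per new flow when flows are handled serially."""
    return MICROSECONDS_PER_SECOND / new_flows_per_second


def max_serial_flow_rate(decision_time_us: float) -> float:
    """New flows per second that a single serial decision loop can sustain."""
    return MICROSECONDS_PER_SECOND / decision_time_us


budget_us = per_flow_budget_us(100_000)         # rate quoted in the text
sustainable = max_serial_flow_rate(1_000)       # hypothetical 1 ms decision time
shortfall = 100_000 / sustainable               # how far the controller falls short
```

Under that hypothetical 1 ms decision time, a serial controller would handle 1,000 new flows per second, a factor of 100 below the rate in the example.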

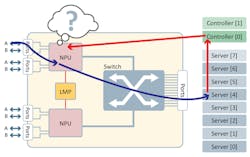

Therefore, a degree of pragmatism must prevail. Moreover, the purity of SDN architecture will need to be adapted to allow for real-world practicalities (Fig. 2).

SDN Controller Can Predefine Flow Rules

It’s quite possible that the controlling function could anticipate all of the flows that will be encountered and provision the flow table in advance. The controller may be able to create the rules required as part of the session creation process. This way, when a packet arrives, there’s no such thing as a new (or unexpected) flow: the handling for the packet is already determined, and the packet can be forwarded immediately with no additional delay.

This arrangement is not as unlikely as it may seem at the outset. In applications with a clear separation between the control and data plane (such as GTP), the control plane may be able to provision the NPU for the flows generated by a newly logged-on user. However, in most deployment cases, a new flow will not be pre-advertised. In those cases, the packet must still wait for the controller to respond to the new flow, once again causing undue latency (Fig. 3).
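A minimal sketch of pre-provisioning follows, assuming a GTP-style deployment in which the control plane learns a tunnel identifier when the session is created. The `FlowTable` class, the `create_session` hook, and the use of the tunnel endpoint identifier (TEID) as the sole match key are simplifications invented for this example.

```python
from typing import Dict, Optional


class FlowTable:
    """Hypothetical NPU flow table keyed by GTP tunnel endpoint ID (TEID)."""

    def __init__(self) -> None:
        self.rules: Dict[int, int] = {}
        self.misses = 0

    def install(self, teid: int, out_port: int) -> None:
        self.rules[teid] = out_port

    def lookup(self, teid: int) -> Optional[int]:
        out_port = self.rules.get(teid)
        if out_port is None:
            # Not pre-advertised: this flow would still need the controller.
            self.misses += 1
        return out_port


def create_session(table: FlowTable, teid: int, out_port: int) -> None:
    """Hypothetical control-plane hook: install the rule before traffic arrives."""
    table.install(teid, out_port)


table = FlowTable()
create_session(table, teid=0x1001, out_port=3)

provisioned = table.lookup(0x1001)    # rule already present, no delay
unannounced = table.lookup(0x2002)    # never provisioned, counts as a miss
```

The second lookup shows the limitation noted above: any flow the control plane did not announce in advance still falls through to the slow path.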

Autonomous Handling for a New Flow

There is another approach—one that’s pragmatic, practical to deploy, and can fit in with the SDN model without being held hostage to the latency caused by having to rely totally on a remote controller. It’s possible to have the NPU determine what action to apply to a new flow by reference to a policy defined by the controller in response to the strategy set by orchestration.

A new flow arrives at the NPU, one for which no previous actions have been set. The NPU applies the rule logic set by the controller to autonomously determine which virtual instance (if NFV) or processing resource should handle this flow. With no delay in waiting for the response from the controller, the packet is immediately forwarded to the nominated processing resource. In general, the processing resource assigned to handle this flow by such a method will be a suitable choice.

However, a server (or virtual machine) can inform the SDN controller if it determines that it’s not best suited to handle this new flow. Should the controller conclude that a different action should be applied to this flow, it can communicate this exception to the NPU. From that point onward, the flow will be handled as specifically requested by the controller. This approach has the benefit of being able to respond in a timely fashion to new flows, while avoiding significant latency and maintaining the top-down control of SDN management (Fig. 4).
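The autonomous approach can be sketched as follows. The article does not specify what rule logic the NPU applies, so this example assumes a simple hash of the flow key over a pool of instances supplied by the controller; the `Npu` class, its method names, and the instance names are all hypothetical.

```python
import zlib
from typing import Dict, List, Tuple

FlowKey = Tuple[str, str, int, int, str]


class Npu:
    """Hypothetical NPU that assigns new flows locally under controller policy."""

    def __init__(self, pool: List[str]) -> None:
        self.pool = list(pool)                   # policy set by the controller
        self.flow_table: Dict[FlowKey, str] = {}

    def forward(self, key: FlowKey) -> str:
        target = self.flow_table.get(key)
        if target is None:
            # New flow: choose an instance locally, with no controller round trip.
            # Hashing the key is an assumption made for this sketch.
            index = zlib.crc32(repr(key).encode()) % len(self.pool)
            target = self.pool[index]
            self.flow_table[key] = target
        return target

    def set_exception(self, key: FlowKey, target: str) -> None:
        # Controller override, sent after a server reports it is a poor fit.
        self.flow_table[key] = target


pool = ["vnf-a", "vnf-b", "vnf-c"]
npu = Npu(pool)
flow = ("10.0.0.1", "192.0.2.10", 40000, 443, "tcp")

initial = npu.forward(flow)           # decided by the NPU on first sight
repeat = npu.forward(flow)            # same flow sticks to the same instance
npu.set_exception(flow, "vnf-dpi")    # controller redirects this one flow
after_override = npu.forward(flow)
```

The controller stays in charge of the policy (the pool) and of any exceptions, but it is no longer in the path of the first packet of every flow.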

Radisys has developed software technology called FlowEngine that blends SDN orchestration principles and architecture with the realities of high-speed packet forwarding. It allows each flow to be autonomously assigned to a virtualized-network-function (VNF) pool at wireline speed.

Summary

Mobile operators will continue to move forward with their rollouts of SDN and NFV technologies to achieve the benefits of a virtualized network, including reduced CapEx and OpEx along with the ability to deploy new services rapidly. Understanding how typical SDN handling and autonomous handling of a new flow work will help address concerns about latency and improve the overall subscriber experience.


About the Author

James Radley

Senior Architect

James Radley, senior architect at Radisys, has more than 25 years’ experience in the computer industry, the last 20 of which focused on the design of platforms for the telecommunications industry. At Radisys, he specializes in network technologies, including the use of switches and packet processors.