Lowdown on the Latest PCI Updates

The Peripheral Component Interconnect (PCI) architecture has been the cornerstone for I/O connectivity in computing, communication, and storage platforms for more than two decades. What started off as the ubiquitous local bus interface for all types of I/O devices in the PC industry has since evolved to satisfy the requirements for I/O technology across the server, client, embedded, and mobile market segments. PCI Express (PCIe) remains unsurpassed as the attach point for storage, networking, and a wide range of external I/O controllers. It enables a power-efficient, high-bandwidth, and low-latency interconnect between CPUs, host controllers, memory, and devices.

Through a robust compliance program that ensures seamless interoperability for plug-and-play, the PCIe specification offers backwards compatibility that protects industry investments; ongoing evolution in power efficiency and performance; and agility to adapt to today’s revolutionary trends in computing, storage, and communications. PCI-SIG, the consortium of nearly 800 member companies that owns and manages PCI specifications as open industry standards, expects PCIe technology to remain relevant and continue to increase in adoption.

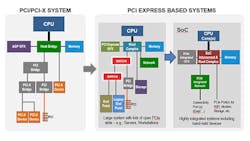

This article delves into the latest PCIe technology updates for hardware, software, and electromechanical form factors that support next-generation product designs. On the hardware and software side, PCI/PCIe specifications define mechanisms for device discovery, configuration, driver association, power management, error reporting, and virtualization. These mechanisms scale from large platforms with fabrics interconnecting hundreds to thousands of discrete components across multiple subsystems, to highly integrated system-on-chip (SoC) implementations for mobile and the Internet of Things (IoT).

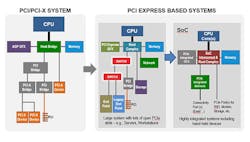

Within PCI-SIG, industry-leading member companies are actively working toward the fourth generation of serial signaling technology at 16 GT/s with the development of the PCIe 4.0 specification. This new PCIe specification will maintain full backwards compatibility, while providing further enhancements in power-efficient performance scaling, as well as architectural improvements to reduce system latencies and support low-cost, flexible platform integration. In addition to performance improvements, PCI-SIG is constantly evolving its specifications to support lower power (both active and idle), software and protocol enhancements for power-efficient performance, I/O virtualization, and SoC efficiency.

PCI/PCIe Evolves into Scalable Architecture

Conventional PCI technology, a bus-based architecture, matured into an open I/O standard under the direction of the PCI-SIG via width and speed increases, sustaining the I/O needs of the computing industry for more than a decade. After hitting a performance wall with the bus-based PCI architecture, PCI-SIG moved to a serial, point-to-point, full-duplex, differential interconnect link called PCI Express (PCIe) with the release of the PCIe 1.0 specification in 2002 (Fig. 1). PCI-SIG insisted on software compatibility between the PCI and PCIe architectures to ensure a seamless technology transition from the bus-based to serial-based interface.

The PCIe architecture supports a range of link widths (x1, x2, x4, x8, and x16 lanes), offering a scalable solution for different bandwidth requirements. Every few years, PCI-SIG releases a new version of the PCIe specification, doubling the data rate each time, in addition to providing protocol and power enhancements (Fig. 2). The steady evolution of PCIe technology has resulted in its integration into the CPU package, making it a ubiquitous interconnect that can deliver high bandwidth at low latency and low power.

A Layered, IP-Friendly Architecture

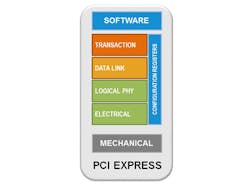

The PCIe specification features a layered architecture (Fig. 3), with each layer offering a distinct and well-defined functionality. As a result, each layer can evolve independently, from both the specification and IP implementation points of view. Interfaces such as the PHY Interface for PCI Express (PIPE) let companies benefit from economies of scale by buying off-the-shelf IP blocks, both analog and logic-layer stacks, which frees up resources so that they can innovate using their own value-add IP.

PCIe technology, like its predecessor PCI, is a load/store architecture, unlike any other open industry I/O standard. Devices based on the PCIe specification can be mapped directly into the system memory space, giving them direct access to system memory and promoting energy-efficient performance. Architected mechanisms define a consistent interface between software and hardware for essential system functions, including discovery, device driver association, and power management, to ensure seamless interoperability across multiple platforms and operating systems. Advanced error detection, recovery, and reporting mechanisms, in addition to hot-plug support, all meet the high reliability, availability, and serviceability (RAS) requirements of servers and storage segments. Further, scalable I/O subsystems can be built by exploiting the hierarchical architecture using switches.
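To make the discovery and driver-association mechanism more concrete, here is a minimal Python sketch that decodes the first 16 bytes of a PCI-compatible configuration header. The field offsets follow the standard header layout; the function name `parse_config_header`, the returned dictionary keys, and the sample byte values (including the device ID) are assumptions made purely for illustration.

```python
import struct


def parse_config_header(raw: bytes) -> dict:
    """Decode the first 16 bytes of a PCI-compatible configuration header."""
    if len(raw) < 16:
        raise ValueError("need at least 16 bytes of configuration space")
    # Little-endian layout: Vendor ID, Device ID, Command, Status (16 bits each),
    # then Revision ID, class code (prog IF, subclass, base class),
    # Cache Line Size, Latency Timer, Header Type, BIST (8 bits each).
    (vendor, device, _command, _status,
     revision, prog_if, subclass, base_class,
     _cache_line, _latency, header_type, _bist) = struct.unpack_from("<4H8B", raw, 0)
    return {
        "vendor_id": vendor,
        "device_id": device,
        # A Vendor ID of 0xFFFF conventionally means no function responded.
        "present": vendor != 0xFFFF,
        "revision": revision,
        "class_code": (base_class, subclass, prog_if),
        # Bit 7 of Header Type flags a multi-function device.
        "multi_function": bool(header_type & 0x80),
        "header_layout": header_type & 0x7F,
    }


# Hypothetical sample: vendor 0x8086, made-up device ID, network-controller class.
sample = struct.pack("<4H8B", 0x8086, 0x1234, 0x0006, 0x0010,
                     0x01, 0x00, 0x00, 0x02, 0, 0, 0x00, 0)
info = parse_config_header(sample)
print(hex(info["vendor_id"]), hex(info["device_id"]), info["class_code"])
```

Enumeration software typically reads these fields for each possible function, then uses the vendor/device IDs and class code to pick a driver. How the raw bytes are obtained is platform-specific and is left out of this sketch.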

The first layer of the PCIe architecture is the Transaction Layer, a fully packetized, split-transaction protocol designed to deliver maximum link efficiency. A unique benefit to the PCIe architecture is that it offers a well-defined producer-consumer ordering model to ensure data consistency. This provides programmers with a consistent model independent of the type of I/O device they’re working with. PCIe technology uses in-band, credit-based, flow-control mechanisms to avoid the added cost of sideband mechanisms, while delivering performance at line rate. The Virtual Channel capability offers quality of service (QoS) support across the hierarchy, making it possible for different types of devices to communicate over shared links for power and cost efficiency.
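The credit-based flow control described above can be illustrated with a deliberately simplified Python sketch. It assumes a single credit pool in which one credit equals one packet slot; the actual specification tracks separate header and data credits per traffic type, which is not modeled here. The class and method names (`Receiver`, `Transmitter`, `credit_update`) are hypothetical.

```python
from collections import deque


class Receiver:
    """Owns a fixed-size buffer and advertises its size as credits."""

    def __init__(self, buffer_slots: int):
        self.capacity = buffer_slots
        self.buffer = deque()

    def advertise(self) -> int:
        return self.capacity

    def accept(self, packet) -> None:
        if len(self.buffer) >= self.capacity:
            raise OverflowError("transmitter sent without a credit")
        self.buffer.append(packet)

    def drain(self, count: int) -> int:
        """Consume up to `count` packets; return how many slots were freed."""
        freed = 0
        while self.buffer and freed < count:
            self.buffer.popleft()
            freed += 1
        return freed


class Transmitter:
    """Sends only while it holds credits, so the receiver never overflows."""

    def __init__(self, receiver: Receiver):
        self.receiver = receiver
        self.credits = receiver.advertise()
        self.pending = deque()

    def send(self, packet) -> bool:
        if self.credits == 0:
            self.pending.append(packet)  # hold the packet until credits return
            return False
        self.credits -= 1
        self.receiver.accept(packet)
        return True

    def credit_update(self, freed: int) -> None:
        """Models the in-band credit return from the receiver."""
        self.credits += freed
        while self.pending and self.credits > 0:
            self.credits -= 1
            self.receiver.accept(self.pending.popleft())


rx = Receiver(buffer_slots=2)
tx = Transmitter(rx)
results = [tx.send(p) for p in ("a", "b", "c")]  # third packet must wait
tx.credit_update(rx.drain(1))                     # one slot freed, "c" goes out
```

Because the transmitter never sends without a credit, packets are never dropped for lack of buffer space, and no sideband signal is needed to throttle the sender.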

Next, there’s the PCIe Data Link Layer, which provides reliable data transport services through a combination of cyclic redundancy check (CRC) codes, retry, and hierarchical time-out mechanisms. Following that is the PCIe Physical Logical Layer, which is responsible for packet framing, data encoding/decoding, and link initialization. While PCIe 2.5 GT/s and 5 GT/s modes use 8b/10b encoding, the 8 GT/s and upcoming 16 GT/s modes use 128b/130b encoding for maximum bandwidth efficiency.
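The effect of the two encoding schemes on usable bandwidth can be worked out with a short Python sketch. It takes the data rates and encodings named above and computes the per-direction bandwidth left after encoding overhead only; packet headers, CRC, and flow-control traffic are ignored. The function names are illustrative assumptions.

```python
# Encoding per data rate (GT/s): (payload bits, total bits on the wire).
ENCODING = {
    2.5: (8, 10),
    5.0: (8, 10),
    8.0: (128, 130),
    16.0: (128, 130),
}


def lane_payload_gbps(rate_gt_s: float) -> float:
    """Payload Gb/s per lane, per direction, after encoding overhead."""
    payload_bits, total_bits = ENCODING[rate_gt_s]
    return rate_gt_s * payload_bits / total_bits


def link_bandwidth_gbytes(rate_gt_s: float, lanes: int) -> float:
    """Payload GB/s per direction for a link of the given width."""
    return lane_payload_gbps(rate_gt_s) * lanes / 8


for rate in sorted(ENCODING):
    print(f"{rate:>4} GT/s: x1 = {lane_payload_gbps(rate):.2f} Gb/s, "
          f"x16 = {link_bandwidth_gbytes(rate, 16):.2f} GB/s")
```

With 8b/10b, 20% of the raw bit rate goes to encoding, so a 5 GT/s lane carries 4 Gb/s of payload. With 128b/130b the overhead drops to about 1.5%, which is why moving from 5 GT/s to 8 GT/s still roughly doubles usable bandwidth even though the raw rate rises by only 60%.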

Finally, there’s the PHY Electrical Layer, which uses low-voltage signaling and supports transmitter operation at both full and half swing for power efficiency. The next generation of PCIe technology will include quarter-swing technology to boost power efficiency even further. Embedded clocking ensures pin efficiency, which translates to cost and power benefits.

Low-Power High Performance

Ongoing refinement of PCIe technology continues to make it a leading low-power solution in both active and idle modes. IP providers have reported delivering 8 GT/s data rates at 5 pJ/bit and consuming only 10 microwatts when the link is idle. The PCIe specification provides an architected mechanism for system software and hardware to autonomously transition I/O devices into low-power link and device states. Mechanisms are provided for system software to statically and/or dynamically allocate a platform power budget between different I/O subsystems depending on usage requirements and available power.
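To put the energy-per-bit figure in perspective, the following Python sketch converts it into active power. It assumes the quoted 5 pJ/bit applies to every bit transferred on a lane at the raw 8 GT/s rate; the article does not state whether the figure is per lane or per direction, so the results are an order-of-magnitude illustration only. The function name is hypothetical.

```python
def active_power_mw(rate_gt_s: float, pj_per_bit: float, lanes: int = 1) -> float:
    """Estimate active power in milliwatts.

    (rate * 1e9 bit/s) * (energy * 1e-12 J/bit) = rate * energy * 1e-3 W,
    so the plain product of GT/s and pJ/bit is already in milliwatts.
    """
    return rate_gt_s * pj_per_bit * lanes


per_lane_mw = active_power_mw(8.0, 5.0)           # one lane at 8 GT/s
x16_mw = active_power_mw(8.0, 5.0, lanes=16)      # sixteen lanes
print(f"x1: {per_lane_mw} mW, x16: {x16_mw} mW")
```

Under these assumptions, an active lane draws a few tens of milliwatts, several thousand times the quoted 10-microwatt idle figure, which is why autonomous transitions into low-power link states matter so much for overall platform power.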

In addition to the evolution in speed, PCI-SIG is continuously innovating the PCIe architecture to ensure its relevancy and desirability for emerging and evolving usages. I/O virtualization, widely adopted by servers and clients, enables device sharing among multiple operating systems running simultaneously in a virtualized system environment, while delivering the same level of throughput as a dedicated I/O subsystem.

In addition, caching hints have enabled, for instance, networking to DMA-transfer most I/O traffic directly to and from the CPU caches, eliminating memory and coherency-management traffic. This has led to efficient line-rate delivery in the face of exploding demand for networking bandwidth in the data center. Atomic operations enable accelerators to work collaboratively with each other and with the main CPU at high performance levels.

The storage industry is increasing its adoption of PCIe technology due to the benefit of power-efficient performance with high bandwidth scalability. The PCIe specification introduced separate reference clocks with independent spread-spectrum clocking (SRIS) to enable cost-effective cables for both storage and backplane interconnect usages.

PCIe technology also offers a rich set of form factors, including the Card Electro-Mechanical (CEM) specification used in desktop, workstation, and server platforms; the small-form-factor SFF-8639 used primarily in compact storage devices across client and server platforms; the M.2 compact form factor primarily for the mobile segment; external cabling; and the upcoming OCuLink cable form factor.

PCIe Compatibility

PCI-SIG administers periodic compliance workshops around the globe to educate its members throughout the technology-development lifecycle and to test interoperability at various levels: electrical, protocol, and software. This helps to ensure seamless interoperability between PCIe components as well as backwards compatibility, so that investments are protected and different system components can make technology transitions as their I/O subsystem needs dictate.

The consistent driver, hot-plug, power-management and programming models ensure that no surprises pop up when adding a new component in a system, or when an I/O device gets integrated into the main die (e.g., CPU or SoC) as a root-complex integrated end-point. This provides an additional level of investment protection to software developers, designers, system integrators, and customers.

Conclusion

By successfully navigating several technology transitions and specification updates, PCI-SIG is positioned to become a leader in the computing, communications, and storage industries with its technology offerings. The power and promise of this open standards organization, backed by almost 800 industry members, is that its technology will be open, scalable, cost-effective, power efficient, leading edge, and multi-generational, with relevance across all market segments and usage models.

About the Author

David Harriman

Senior Principal Engineer

David Harriman is a Senior Principal Engineer in the I/O Technology and Standards Group at Intel. Harriman develops and promotes next-generation platform technologies, focusing on I/O interconnects such as USB and PCI Express (PCIe). Over the years, Harriman has been a lead contributor for the PCIe specification and continues to chair the PCI-SIG PCIe Protocol Working Group.

Harriman joined Intel in 1993, where he helped develop the first desktop chipset for the Pentium Pro processors and the first AGP chipset. Harriman was one of the primary developers of Intel’s hub architecture and currently holds around 100 chipset and interconnect patents.

Debendra Das Sharma

PCI-SIG Board Member, UCIe Consortium Chair, and Intel Fellow, Intel Corp.

Dr. Debendra Das Sharma is an Intel Fellow and Director of the I/O Technology and Standards Group. He is an expert in I/O subsystem and interface architecture, delivering Intel-wide critical interconnect technologies in Peripheral Component Interconnect Express (PCIe), coherency, multichip package interconnect, SoC, and rack scale architecture. He has been a lead contributor to multiple generations of PCI Express since its inception, is a board member of PCI-SIG, and leads the PHY Logical group in PCI-SIG. He also is chair of the UCIe Consortium.

Debendra joined Intel in 2001 from HP. He has a Ph.D. in Computer Engineering from the University of Massachusetts, Amherst and a Bachelor of Technology (Hons) degree in Computer Science and Engineering from the Indian Institute of Technology, Kharagpur. He holds 99 U.S. patents. Debendra currently lives in Saratoga, Calif. with his wife and two sons. He enjoys reading and participating in various outdoor and volunteering activities with his family.
