GTC 2026 Wrap-Up

What you’ll learn:

- What NVIDIA CEO Jensen Huang highlighted in his keynote.

- What’s next after Vera Rubin?

- Why is Jensen standing next to Disney’s Olaf?

NVIDIA’s main highlight at this year’s GTC was its Vera Rubin “AI factory in a box.” The full-stack, vertically integrated hardware/software solution is designed to support the latest artificial-intelligence (AI) software, such as agentic AI in the cloud.

As usual, NVIDIA CEO Jensen Huang gave the two-hour-long keynote with plenty of videos and robots, including Disney’s Olaf from the movie Frozen. This was more than Disney’s usual animatronics. It was an AI-driven robot that walks and talks with a “mind of its own,” courtesy of training and development on NVIDIA hardware and software, and it even has a “Jetson-based computer in its tummy.”

GTC 2026 Keynote

High-Performance AI Hardware at GTC

The Vera Rubin platform is built on NVIDIA’s Vera CPU and Rubin GPU. It also includes the BlueField-4 STX storage processor. Spectrum-X provides high-performance Ethernet connectivity, and NVIDIA’s InfiniBand links clusters together.

NVIDIA is also targeting space applications. The Space-1 Vera Rubin Module could support orbital data centers. Certain IGX Thor and Jetson Orin implementations are now suitable for embedded applications in satellites and space stations.

Moreover, NVIDIA released a few details about the future Feynman platform. The Rosa CPU is named for Rosalind Franklin, a chemist known for her work in X-ray diffraction and crystallography. The platform encompasses the BlueField-5 data-processing system and CX10 networking, and it will utilize fiber optics for connectivity.

Among other announcements was the availability of the desktop DGX Station based on the GB300 Grace Blackwell Ultra, which can deliver 20 petaFLOPS and support up to a 1-trillion-parameter large language model (LLM). The DGX Spark is a more powerful four-node cluster. The RTX PRO 4500 Blackwell Server Edition and RTX PRO Blackwell workstation were also presented.

NemoClaw Secures OpenClaw

OpenClaw is an open-source agentic AI platform that's become very popular. It provides persistent memory to the agents it manages and works with well-known applications and chat apps. It can be sandboxed and is able to manipulate files and applications.

NVIDIA’s NemoClaw, based on its Agent Toolkit, builds on top of OpenClaw, enhancing security and privacy. It allows users to configure how agents operate and how data can be manipulated. These agents can run on AI workstations.

Turning Disney into Physical AI

Near the end of Jensen’s presentation, a little robot walked on stage (Fig. 1). It was Disney’s snowman, Olaf, from the movie Frozen. This robot is controlled by an NVIDIA Jetson platform.

The robot was trained on NVIDIA hardware and software. It used the Isaac Sim and Omniverse robot simulation systems. Olaf learned to walk, talk and interact with people. The interaction with Jensen was likely partially scripted, but the robot was working on its own AI models.

More from GTC

There was a lot going on at GTC, and not just from NVIDIA. I won’t try to enumerate everything, but here are a couple of items you might find interesting.

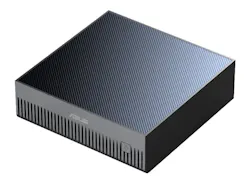

First, there was the ASUS Ascent GX10 (Fig. 2). It’s the company’s version of the NVIDIA DGX Spark, which is based on NVIDIA’s GB10 Grace Blackwell. The GX10 can deliver up to 1 petaFLOPS, and with 128 GB of memory, it can handle a 200-billion-parameter model. You can link systems together using NVIDIA’s NVLink. Of course, it runs NVIDIA’s software stack, including CUDA. Not bad for under $3,500.
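
To see why 128 GB and a 200-billion-parameter model go together, here is a rough back-of-the-envelope sketch. It assumes the model weights dominate memory use and ignores the KV cache, activations, and runtime overhead. The precision isn't stated above, so the formats listed and the helper names (`weight_footprint_gb`, `fits`) are my own illustration, not an ASUS or NVIDIA specification.

```python
# Rough weight-memory estimate for a large language model.
# Assumption: only the weights are counted; KV cache, activations,
# and runtime overhead are ignored, so real requirements are higher.

# Assumed storage widths in bytes per parameter (not from the article).
BYTES_PER_PARAM = {"FP16": 2.0, "FP8": 1.0, "FP4": 0.5}


def weight_footprint_gb(params: float, bytes_per_param: float) -> float:
    """Return the weight storage in decimal gigabytes (1 GB = 1e9 bytes)."""
    return params * bytes_per_param / 1e9


def fits(params: float, bytes_per_param: float, memory_gb: float) -> bool:
    """Return True if the weights alone fit in the given memory."""
    return weight_footprint_gb(params, bytes_per_param) <= memory_gb


if __name__ == "__main__":
    params = 200e9      # 200-billion-parameter model
    memory_gb = 128     # GX10 memory capacity
    for name, width in BYTES_PER_PARAM.items():
        size = weight_footprint_gb(params, width)
        print(f"{name}: {size:.0f} GB, fits in {memory_gb} GB: "
              f"{fits(params, width, memory_gb)}")
```

Under these assumptions, only the 4-bit case (about 100 GB of weights) fits in 128 GB, which suggests the 200-billion-parameter figure presumes a heavily quantized model.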

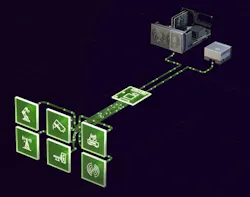

NXP Semiconductors highlighted its physical AI and humanoid robotics support. I was more interested in their support for NVIDIA’s Holoscan Sensor Bridge (HSB) (Fig. 3). HSB is open-source software with a matching API that can stream sensor data directly to GPU memory. This can be useful for many applications from military and avionics to medical and industrial automation.

This Sensor-over-Ethernet and Camera-over-Ethernet architecture is designed to deliver data with sub-millisecond latency. It's able to deliver a 4K60 camera image to an NVIDIA IGX Orin in 17 ms. NXP sensors and micros can fit on either side of the connection. This type of sensor feedback is inherent to physical AI and humanoid robotics.
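
The 17-ms figure lines up with simple frame-time arithmetic. The sketch below is only an estimate. It assumes the 17 ms includes one full frame period at 60 frames/s and that each pixel takes 2 bytes, neither of which is stated by NXP or NVIDIA, and the function names are hypothetical.

```python
# Frame-time and bandwidth arithmetic for a 4K60 camera stream.
# Assumptions (not from the article): 3840 x 2160 resolution,
# 2 bytes per pixel, and a delivery time that includes one frame period.

WIDTH, HEIGHT, FPS = 3840, 2160, 60
BYTES_PER_PIXEL = 2  # assumed pixel format, e.g. 16-bit raw or YUV 4:2:2


def frame_period_ms(fps: float) -> float:
    """Time between consecutive frames in milliseconds."""
    return 1000.0 / fps


def stream_rate_gbps(width: int, height: int, fps: float, bpp: int) -> float:
    """Uncompressed video data rate in gigabits per second."""
    return width * height * bpp * fps * 8 / 1e9


def overhead_ms(delivery_ms: float, fps: float) -> float:
    """Delivery time left over after subtracting one frame period."""
    return delivery_ms - frame_period_ms(fps)


if __name__ == "__main__":
    print(f"Frame period: {frame_period_ms(FPS):.2f} ms")
    print(f"Stream rate: "
          f"{stream_rate_gbps(WIDTH, HEIGHT, FPS, BYTES_PER_PIXEL):.2f} Gb/s")
    print(f"Overhead beyond one frame: {overhead_ms(17.0, FPS):.2f} ms")
```

Under those assumptions, a 4K60 stream needs roughly 8 Gb/s, and the transport adds about a third of a millisecond on top of the 16.7-ms frame period, which would be consistent with the sub-millisecond latency target.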

About the Author

William G. Wong

Senior Content Director - Electronic Design and Microwaves & RF

I am Editor of Electronic Design focusing on embedded, software, and systems. As Senior Content Director, I also manage Microwaves & RF and I work with a great team of editors to provide engineers, programmers, developers and technical managers with interesting and useful articles and videos on a regular basis. Check out our free newsletters to see the latest content.

I earned a Bachelor of Electrical Engineering at the Georgia Institute of Technology and a Master's in Computer Science from Rutgers University. I still do a bit of programming using everything from C and C++ to Rust and Ada/SPARK. I do a bit of PHP programming for Drupal websites. I have posted a few Drupal modules.

I still get hands-on with software and electronic hardware. Some of this can be found on our Kit Close-Up video series. You can also see me on many of our TechXchange Talk videos. I am interested in a range of projects from robotics to artificial intelligence.
