How Bluetooth 5.1, UWB, and Wi-Fi 802.11az Empower the Next Frontier of Micro-Location

What you’ll learn:

- How Bluetooth 5.1, UWB and Wi-Fi 802.11az provide micro-location.

- How these technologies compare in delivering the next generation of micro-location.

Using wireless technologies for positioning isn’t new. However, the accuracy required has increased over the years as new location-based use cases have emerged.

GPS, for example, is typically accurate to roughly 5 to 20 meters, depending on signal conditions. That’s sufficient when driving to locate a particular building, but it can’t meet the needs of finding, say, a specific shelf in a store or pointing to the right painting on a museum tour.

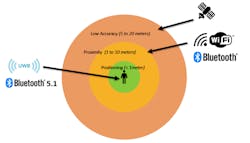

Today’s Bluetooth and Wi-Fi positioning systems based on received signal strength can deliver indoor positioning that is sufficient for applications like detecting the proximity of an object or person within a few meters. The next generation of technologies, though, aims to unlock even higher accuracy, from below one meter down to a few centimeters, otherwise known as micro-location positioning. It enables a new generation of use cases that allow users to interact very precisely with various actors in the environment, from hands-free access control to asset tracking and much more.

Systems based on Bluetooth 5.1 core specifications, ultra-wideband based on IEEE 802.15.4z, and Wi-Fi Next Generation Positioning based on IEEE 802.11az offer the potential to unlock these next-generation positioning applications (Fig. 1).

How Does Bluetooth 5.1 Provide Micro-Location?

In 2019, the Bluetooth SIG released version 5.1 of the Bluetooth core specification, which included enhancements for direction finding. Prior to the 5.1 release, Bluetooth was already used extensively for indoor location tracking, using the received signal strength indicator (RSSI) to estimate the distance between a transmitter and a receiver based on the measured path loss.
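
As a rough illustration of how RSSI maps to distance, the sketch below uses the log-distance path-loss model, a common textbook approximation rather than anything defined by the Bluetooth specification. The function name, the reference RSSI at 1 m (-59 dBm), and the path-loss exponent (2.0, free space) are assumptions that would need calibration for a real site.

```python
def rssi_to_distance(rssi_dbm, rssi_at_1m_dbm=-59.0, path_loss_exponent=2.0):
    """Estimate distance in meters with the log-distance path-loss model.

    rssi_at_1m_dbm and path_loss_exponent are illustrative defaults
    (assumptions); indoor exponents are often higher than 2.
    """
    return 10 ** ((rssi_at_1m_dbm - rssi_dbm) / (10.0 * path_loss_exponent))


if __name__ == "__main__":
    for rssi in (-59, -69, -79):
        print(f"RSSI {rssi} dBm -> about {rssi_to_distance(rssi):.2f} m")
```

Because the mapping is exponential, a few dB of fading or obstruction can shift the estimate by meters, which is one reason RSSI alone tends to be limited to proximity detection.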

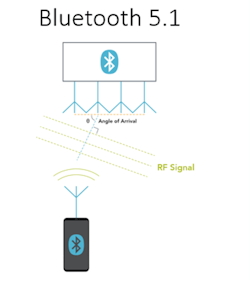

However, with RSSI alone the receiver can only determine that the transmitter lies somewhere on a circle around it; it has no information about the direction of the incoming signal. The Bluetooth 5.1 specification adds directionality by providing angle information. Systems for asset tracking or wayfinding applications can be implemented using the Bluetooth 5.1 angle of arrival (AoA) or angle of departure (AoD) methods (Fig. 2).

The direction is derived from the angle of the incoming signal. For direction finding, Bluetooth 5.1 devices transmit packets appended with a constant tone extension (CTE) field. The CTE is a sequence of unwhitened 1s of variable duration; it produces a constant-frequency tone that simplifies the phase computation on the receiver. In the AoA method, the receiver uses an antenna array with at least two antennas and computes the angle of incidence from the phase difference between the antennas, the signal’s wavelength, and the distance between the antennas.
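
The relationship between phase difference, wavelength, and antenna spacing can be sketched for the simplest case of two antennas and a plane wave. This is a minimal illustration, not a production direction-finding algorithm: the function name, the 2.44-GHz default carrier, and the convention of measuring the angle from the array broadside are assumptions.

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # m/s


def angle_of_arrival_deg(phase_diff_rad, antenna_spacing_m, frequency_hz=2.44e9):
    """Angle of incidence (degrees from broadside) for a two-antenna array.

    Uses sin(theta) = phase_diff * wavelength / (2 * pi * spacing).
    The spacing should not exceed half a wavelength, otherwise the
    phase difference becomes ambiguous.
    """
    wavelength = SPEED_OF_LIGHT / frequency_hz
    sin_theta = phase_diff_rad * wavelength / (2 * math.pi * antenna_spacing_m)
    sin_theta = max(-1.0, min(1.0, sin_theta))  # guard against measurement noise
    return math.degrees(math.asin(sin_theta))


if __name__ == "__main__":
    half_wavelength = SPEED_OF_LIGHT / 2.44e9 / 2
    print(angle_of_arrival_deg(math.pi / 2, half_wavelength))
```

Real locators typically use more than two antennas and more elaborate estimators, which is part of why accuracy varies between implementations.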

Combined with the RSSI measurement, the angle information allows devices to pinpoint their location with better accuracy than the RSSI method alone.
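
To make the combination concrete, a distance estimate and a bearing from a single locator can be turned into a 2D position by a polar-to-Cartesian conversion. The sketch below assumes a flat floor plan and a bearing measured counterclockwise from the x axis of that plan; the function and parameter names are hypothetical.

```python
import math


def position_from_range_and_bearing(locator_xy, distance_m, bearing_deg):
    """Place a device on a 2D floor plan from one locator's measurements.

    locator_xy is the known (x, y) of the locator in meters; bearing_deg is
    assumed to be expressed in the floor plan's frame, not the antenna's.
    """
    x0, y0 = locator_xy
    bearing = math.radians(bearing_deg)
    return (x0 + distance_m * math.cos(bearing),
            y0 + distance_m * math.sin(bearing))


if __name__ == "__main__":
    print(position_from_range_and_bearing((1.0, 1.0), 2.0, 90.0))
```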

The accuracy of Bluetooth 5.1-based systems depends on multiple factors, including the number of antennas in the array, the antenna pattern, and the post-processing algorithm used to derive the angle from the I/Q phase samples. The topology of the site is also important because both RSSI and phase accuracy are degraded by obstacles. However, the measurements can be greatly improved with the deployment of multiple locators for trilateration.
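
One way multiple locators can help is sketched below. Note that it intersects two bearings (triangulation on angles) rather than performing the distance-based trilateration mentioned above, since angles are what AoA locators measure directly. The function name and the convention that bearings are given counterclockwise from the x axis of a shared floor-plan frame are assumptions.

```python
import math


def intersect_bearings(loc_a, bearing_a_deg, loc_b, bearing_b_deg):
    """Return the (x, y) where two bearing lines cross, or None if parallel."""
    a = math.radians(bearing_a_deg)
    b = math.radians(bearing_b_deg)
    denom = math.sin(b - a)  # cross product of the two unit direction vectors
    if abs(denom) < 1e-9:
        return None  # bearings are parallel, so there is no unique crossing
    dx = loc_b[0] - loc_a[0]
    dy = loc_b[1] - loc_a[1]
    # Distance along locator A's bearing to the crossing point.
    t = (dx * math.sin(b) - dy * math.cos(b)) / denom
    return (loc_a[0] + t * math.cos(a), loc_a[1] + t * math.sin(a))


if __name__ == "__main__":
    print(intersect_bearings((0.0, 0.0), 45.0, (4.0, 0.0), 135.0))
```

With more than two locators, a least-squares fit over all bearings would be the more usual choice, because noisy bearing lines rarely cross at a single point.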

Depending on the implementation, Bluetooth 5.1-based systems should be able to achieve a sub-meter level of accuracy down to tens of centimeters. At the time of this writing, support for Bluetooth 5.1 has been added by all major chipset manufacturers.

How Does Ultra-Wideband (UWB) Enable Micro-Location?

UWB isn’t a new technology. As defined in the IEEE standard 802.15.4, it was first deployed in the early 2000s. At the time, it was geared toward high-speed data transmission as a USB replacement, but it never quite achieved wide commercial adoption. In recent years, the MAC and PHY layers were improved for ranging purposes in the IEEE 802.15.4z amendment.

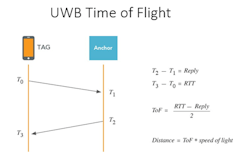

Unlike Bluetooth, UWB doesn’t use signal strength to evaluate distance; instead, it uses time of flight (ToF). ToF measures the signal’s propagation time from the transmitter to the receiver. Since RF signals travel at the speed of light regardless of the environment, distance estimation based on ToF is more robust to the environment than the RSSI method used in Bluetooth (Fig. 3).
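
The ToF-to-distance conversion is a single multiplication, which also shows how tight the timing requirements are. The sketch below is illustrative only; the function name is hypothetical, and it assumes the one-way propagation time is already known.

```python
SPEED_OF_LIGHT = 299_792_458.0  # m/s


def tof_to_distance_m(time_of_flight_s):
    """Convert a one-way propagation time in seconds to a distance in meters."""
    return SPEED_OF_LIGHT * time_of_flight_s


if __name__ == "__main__":
    # A timing error of one nanosecond corresponds to roughly 30 cm.
    print(f"{tof_to_distance_m(1e-9):.3f} m per nanosecond")
```

Centimeter-level ranging therefore needs timing resolution well below a nanosecond, which is where very short pulses become useful.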

UWB differs from Bluetooth and Wi-Fi. It doesn’t use modulated sine waves to transmit information; rather, it utilizes modulated pulse trains. UWB pulses have very short duration, on the order of a nanosecond. The signal’s properties make this technology more resilient to multi-path environments typical in indoor areas because UWB’s short pulses are more immune to impairments from reflected signals than Bluetooth or Wi-Fi.

UWB’s ToF measurement can be supplemented with angle information to provide an even more precise location. Similar to what was described above for Bluetooth 5.1 AoA, the UWB anchor receiver employs an antenna array of two or more antennas. The calculation uses the arrival times on each antenna and the antenna spacing to determine the angle of the incoming signal.
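
For two antennas and a plane wave, the arrival-time version of the angle calculation can be sketched as follows. The function name and the broadside angle convention are assumptions, and practical UWB receivers may derive the angle from phase rather than raw timestamps.

```python
import math

SPEED_OF_LIGHT = 299_792_458.0  # m/s


def angle_from_arrival_times_deg(t_antenna_1_s, t_antenna_2_s, antenna_spacing_m):
    """Angle of the incoming signal, in degrees from the array broadside.

    The extra path to the later antenna is c * dt, and
    sin(theta) = c * dt / spacing.
    """
    extra_path_m = SPEED_OF_LIGHT * (t_antenna_2_s - t_antenna_1_s)
    sin_theta = max(-1.0, min(1.0, extra_path_m / antenna_spacing_m))
    return math.degrees(math.asin(sin_theta))


if __name__ == "__main__":
    dt = 0.01 / SPEED_OF_LIGHT  # 1 cm of extra path
    print(angle_from_arrival_times_deg(0.0, dt, 0.02))
```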

Systems based on UWB technology can achieve an accuracy in the range of 10 cm depending on the environment. At the time of this writing, several major chipset manufacturers offer UWB solutions, and the adoption of this technology by several smartphone manufacturers is proof of the growing momentum.

Micro-Location via Wi-Fi 802.11az

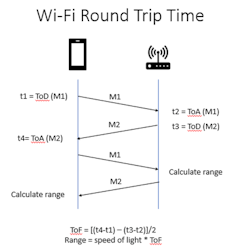

The newest and least well known of the technologies discussed, the Wi-Fi 802.11az Next Generation Positioning (NGP) standard is nearing completion (targeted for 2022). Like Bluetooth, Wi-Fi technology has been used for some time with the RSSI-based method to provide positioning. The NGP standard, however, builds on a Wi-Fi feature called Fine Timing Measurement (FTM).

FTM uses round trip time (RTT) information to estimate the distance between Wi-Fi-enabled stations and access points. The RTT mechanism employs time of departure (ToD) and time of arrival (ToA) timestamps. The 802.11az standard is designed to improve upon the legacy FTM by leveraging the latest features in the 802.11ax (Wi-Fi 6) standard (Fig. 4).
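
The way ToD and ToA timestamps combine into a distance can be sketched as below. The names t1 to t4 follow the convention commonly used to describe FTM exchanges (t1 and t4 captured on one device, t2 and t3 on the other); the function name and the example numbers are assumptions.

```python
SPEED_OF_LIGHT = 299_792_458.0  # m/s


def rtt_distance_m(t1_s, t2_s, t3_s, t4_s):
    """Estimate distance from one timestamped frame/acknowledgment exchange.

    t1: ToD of the measurement frame   (sender's clock)
    t2: ToA of that frame              (peer's clock)
    t3: ToD of the acknowledgment      (peer's clock)
    t4: ToA of the acknowledgment      (sender's clock)
    Subtracting the peer's turnaround time (t3 - t2) removes any fixed
    offset between the two clocks.
    """
    round_trip_s = (t4_s - t1_s) - (t3_s - t2_s)
    return SPEED_OF_LIGHT * round_trip_s / 2.0


if __name__ == "__main__":
    tof = 10.0 / SPEED_OF_LIGHT  # true one-way time for 10 m
    offset = 5e-6                # unknown clock offset of the peer
    turnaround = 16e-6           # time the peer takes to reply
    t1 = 0.0
    t2 = tof + offset
    t3 = t2 + turnaround
    t4 = 2 * tof + turnaround
    print(f"{rtt_distance_m(t1, t2, t3, t4):.3f} m")
```

Only differences taken on the same clock appear in the formula, so the two devices do not need to be time-synchronized.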

For improved accuracy, 802.11az’s enhancements take advantage of the wider channel bandwidth available in the newer generations since Wi-Fi 6 signals support up to 160-MHz channel bandwidth and Wi-Fi 7 up to 320 MHz. Wider bandwidth delivers higher resolution, while MIMO operation provides better resilience to multi-path effects.
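
A common rule of thumb, used here as an assumption rather than a figure from the 802.11az standard, is that timing resolution scales as the inverse of the signal bandwidth, so the raw distance resolution is roughly the speed of light divided by the bandwidth. The sketch below applies that approximation to typical Wi-Fi channel widths; the function name is hypothetical.

```python
SPEED_OF_LIGHT = 299_792_458.0  # m/s


def raw_range_resolution_m(bandwidth_hz):
    """Rule-of-thumb distance resolution: c / bandwidth (an approximation)."""
    return SPEED_OF_LIGHT / bandwidth_hz


if __name__ == "__main__":
    for mhz in (20, 80, 160, 320):
        res = raw_range_resolution_m(mhz * 1e6)
        print(f"{mhz:>3} MHz -> about {res:.2f} m")
```

Practical systems can resolve finer than this raw figure through signal processing and averaging, but the trend explains why wider channels help.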

For improved protocol efficiency, NGP uses the null data packet (NDP) frames that are already defined in the 802.11ax standard for beamforming sounding. The new standard also utilizes the multi-user capabilities of Wi-Fi 6. When using trigger-based ranging with uplink and downlink OFDMA, the access point can effectively get ranging information from multiple stations in a single transmit opportunity. This significantly reduces the overhead needed to exchange ranging information and improves scalability to more stations.

At the time of this writing, there’s limited data available for commercial positioning solutions using 802.11az NGP technology. However, published Wi-Fi ranging test data shows promising performance in both line-of-sight and non-line-of-sight environments, where decimeter-level accuracy can be reached.

Comparing the Technologies for Next-Generation Micro-Location

When comparing the positioning accuracy among the three technologies, UWB can reach the highest level of accuracy with centimeter-level positioning. Bluetooth 5.1-based systems should be capable of reaching sub-meter accuracy, while Wi-Fi deployments based on 802.11az should be able to reach decimeter-level accuracy. Keep in mind that many factors must be considered when discussing the positioning accuracy. The environment, system design, antenna path delay, and other parameters can degrade the nominal accuracy.

Beyond positioning accuracy, numerous factors affect the decision to invest in a new positioning technology, and these criteria are application-dependent. For example, security, power consumption, cost, existing infrastructure, transmission reach, and interoperability may factor in the decision.

Regardless of the technology selected, careful design validation testing is required to ensure the best possible performance and, ultimately, a successful deployment.

References

LitePoint, https://www.litepoint.com/knowledgebase/webinar-bt-5-1-uwb-802-11az

Qualcomm, https://www.qualcomm.com/documents/qualcomm-wifi-ranging-white-paper

About the Author

Eve Danel

Senior Product Marketing Manager, LitePoint

Eve Danel is a Senior Product Marketing Manager at LitePoint responsible for wireless connectivity test systems. Eve has over 15 years of experience working in test and measurement companies. Prior to joining LitePoint, she was a Senior Product Manager at VeEx Inc. responsible for Wi-Fi and Ethernet network test solutions.

Eve holds an MS degree in Digital Signal Processing from San Jose State University.
