100-Gbit Ethernet Is Easy...’Til You Get to Interoperability Testing

How often have we heard that Ethernet scales easily? Maybe it used to, but now we need some compromises, fancy tricks, systems, and techniques to get to where we need to be for the ubiquitous data center.

The math seemed pretty simple. Just multiply by 10 and that would be the next Ethernet speed: 10 Mbits/s, 100 Mbits/s, 1 Gbit/s, 10 Gbits/s, and 100 Gbits/s. Then something funny happened between 10 and 100 Gbits/s. My colleague Bill Wong explains the gory details here in an interview with Kevin Deierling of Mellanox, “Q&A: Ethernet Evolution—25 is the New 10 and 100 is the new 40.”

The gist of it is that the combination of installed data-center cabling and well-grounded pragmatism with respect to cost, performance, and connector footprint tradeoffs led to the formation of a 25-Gbit/s interface by the IEEE 802.3by Ethernet Task Force in June of last year. Now we have 10 GbE, 25 GbE, 40 GbE, 50 GbE (which I forgot to mention), and 100 GbE (with 200 GbE and 400 GbE in the works).
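The "25 is the new 10 and 100 is the new 40" idea is easiest to see as lane arithmetic. The Python sketch below assumes nominal lane layouts that are commonly associated with each speed (for example, four 25-Gbit/s lanes for 100 GbE). These pairings are not spelled out in this article, other layouts exist, and real per-lane signaling rates are slightly higher once line coding is included.

```python
# Nominal (lanes, Gbits/s per lane) layouts often associated with each speed.
# Assumption for illustration only: the article does not list these pairings,
# and other layouts exist (e.g. early 100 GbE used ten 10-Gbit/s lanes).
LANE_LAYOUTS = {
    "10 GbE": (1, 10),
    "25 GbE": (1, 25),
    "40 GbE": (4, 10),
    "50 GbE": (2, 25),
    "100 GbE": (4, 25),
}


def aggregate_gbits(lanes: int, gbits_per_lane: int) -> int:
    """Nominal aggregate rate of a multi-lane link, ignoring coding overhead."""
    return lanes * gbits_per_lane


def summarize(layouts: dict) -> dict:
    """Map each speed name to its nominal aggregate rate in Gbits/s."""
    return {name: aggregate_gbits(*layout) for name, layout in layouts.items()}


if __name__ == "__main__":
    for name, total in summarize(LANE_LAYOUTS).items():
        lanes, per_lane = LANE_LAYOUTS[name]
        print(f"{name}: {lanes} x {per_lane} Gbits/s = {total} Gbits/s")
```

Seen this way, a 25-Gbit/s lane is the building block that a 10-Gbit/s lane used to be: one lane gives 25 GbE, and four of them give 100 GbE in the same four-lane footprint that 40 GbE occupies.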

While the migration path of choice depends on the application and the installed infrastructure, the need for higher-speed interfaces to the data center is clear as the amount of data increases exponentially. The data sources are many, but irrelevant here; we just need faster connections. Also, the use of faster storage in the form of NVMe flash SSDs is eliminating the storage-speed bottleneck, so the network interface itself is becoming an even more important gating factor.

To meet these higher-data-rate needs, chip manufacturers are trying to increase the speed of transmission, and network equipment manufacturers are trying to come up with a system that’s error-free and faster, in and out of the cloud. However, even though getting to 25 Gbits/s seems easier than jumping to 100 Gbits/s, it’s still a very complex endeavor. It’s especially complex when you think about getting all manufacturers of network interface cards (NICs), chips, and optical interfaces to line up and be interoperable while signal transition speeds keep accelerating.

I asked Chris Loberg, senior manager for performance oscilloscopes at Tektronix, about how this move to 25 GbE, 100 GbE, and beyond was going, and it turns out there are indeed some issues, so we sat down (virtually) to discuss them. You can watch the interview here:

While getting to 25 or 100 Gbits/s is fine, the challenge is debugging the link between two Ethernet transceivers from different manufacturers. Sure, the IEEE spec provides guidance on how to build a link negotiation sequence. In practice, though, there always seem to be bugs, and they are hard to detect when traffic is streaming at 25 Gbits/s.

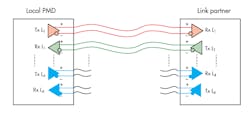

As Loberg explains, the trick is to start by getting deeper visibility into the physical-layer (PHY) link-negotiation sequence, in real time (Fig. 1).

1. Getting 25-GbE devices from different manufacturers to communicate reliably requires the ability to go deep into the PHY-layer link-negotiation sequence. (Source: Tektronix)

The link-training sequence establishes the receiver and transmitter characteristics and capabilities so they can start transmitting the payload. However, it’s not much use if a signal can’t get through at all. The reasons can be many, of course, but if the right voltage levels aren’t being used, for example, the signal will get lost in the noise and the receiver won’t be able to decode it.

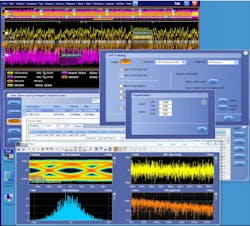

Assuming the PHY layer is working okay, but the devices still aren’t communicating, Loberg has some more suggestions on how to approach the protocol stack. I’ll let him explain it, but the point is that you need to make sure the right messages are being sent in a timely fashion (Fig. 2).

2. High-speed Ethernet signal analysis requires a fast-triggering scope for the PHY layer as well as the ability to look at the protocol messaging and timing. (Source: Tektronix)
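To make "the right messages in a timely fashion" concrete, here is a minimal Python sketch of the kind of check you might run on a decoded capture. Everything in it is hypothetical: the `Frame` record, the `REQUEST` and `STATUS` labels, and the timing limit are placeholders for illustration, not the IEEE message names or limits and not the output format of any Tektronix tool.

```python
from typing import List, NamedTuple, Optional, Tuple


class Frame(NamedTuple):
    """One decoded link-training message (hypothetical export format)."""
    t_us: float   # capture timestamp in microseconds
    sender: str   # which end of the link sent it, e.g. "A" or "B"
    kind: str     # placeholder label: "REQUEST" or "STATUS"


def find_late_responses(
    frames: List[Frame], max_gap_us: float
) -> List[Tuple[Frame, Optional[Frame]]]:
    """Return requests whose reply from the far end was late or never came.

    Each result is (request, reply); reply is None when no reply was seen.
    """
    problems = []
    pending = {}  # sender -> that sender's outstanding request
    for frame in frames:
        if frame.kind == "REQUEST":
            pending[frame.sender] = frame
        elif frame.kind == "STATUS":
            # A STATUS answers the outstanding request from the *other* end.
            for req_sender, request in list(pending.items()):
                if req_sender != frame.sender:
                    if frame.t_us - request.t_us > max_gap_us:
                        problems.append((request, frame))
                    del pending[req_sender]
    # Anything still pending at the end of the capture was never answered.
    problems.extend((request, None) for request in pending.values())
    return problems


if __name__ == "__main__":
    capture = [
        Frame(0.0, "A", "REQUEST"),
        Frame(5.0, "B", "STATUS"),    # answered in time
        Frame(10.0, "A", "REQUEST"),
        Frame(40.0, "B", "STATUS"),   # answered too late
        Frame(50.0, "B", "REQUEST"),  # never answered
    ]
    for request, reply in find_late_responses(capture, max_gap_us=20.0):
        print(request, "->", reply)
```

The point of the sketch is only the shape of the problem: once the scope has decoded the exchange into timestamped messages, an interoperability bug tends to show up as a reply that is missing, out of order, or late.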

It used to be that Ethernet link-training analysis wasn’t as critical, since the data rates were lower and a protocol analyzer was sufficient. “Or maybe there weren’t even interoperability issues at the link training level,” said Loberg. “But now, with these 25-Gbit/s streams, it takes a scope with a fast trigger system and the ability to have segmented memory on that scope, to really get a good look at what's happening on the link and decode it to get both a PHY-layer and protocol-layer version [to get an] understanding [of] what's happening on that link.”

Solving the interoperability issue will accelerate adoption of silicon by different transceiver manufacturers in an open system. Still, the problem is only exacerbated by the move to 100 Gbits/s. To help with this, Tektronix itself contributed to the solution by adding extra functionality to its high-end DPO70000SX series of scopes in the form of the Option HSSLTA (High Speed Serial Link Training Analysis) tool. Downloadable from Tektronix’s site under license, the tool also supports non-return-to-zero (NRZ) and PAM4 signaling (28 and 56 Gbaud) with built-in bit-error-rate (BER) measurement. Note: It’s not cheap.
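For readers who want the arithmetic behind the NRZ and PAM4 figures: the raw bit rate of a lane is the symbol rate multiplied by the bits carried per symbol. NRZ uses two levels (1 bit per symbol) and PAM4 uses four (2 bits per symbol). The Python sketch below works that out for the 28- and 56-Gbaud rates mentioned above. The function names are my own, and the results are raw line rates before any coding or error-correction overhead is subtracted.

```python
def bits_per_symbol(levels: int) -> int:
    """Bits carried by one symbol with the given number of amplitude levels.

    Assumes a power-of-two level count (2 for NRZ, 4 for PAM4).
    """
    if levels < 2 or levels & (levels - 1):
        raise ValueError("levels must be a power of two >= 2")
    return levels.bit_length() - 1


def raw_bit_rate_gbits(symbol_rate_gbaud: float, levels: int) -> float:
    """Raw lane bit rate in Gbits/s, before coding/FEC overhead."""
    return symbol_rate_gbaud * bits_per_symbol(levels)


if __name__ == "__main__":
    for name, levels in (("NRZ", 2), ("PAM4", 4)):
        for gbaud in (28, 56):
            rate = raw_bit_rate_gbits(gbaud, levels)
            print(f"{name} at {gbaud} Gbaud: {rate} Gbits/s raw")
```

This is why PAM4 matters for the move beyond 25 Gbits/s per lane: it doubles the bit rate at the same symbol rate, at the cost of smaller spacing between voltage levels and therefore less noise margin.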

In the meantime, it’s always funny to hear the breathless talk of jumping quickly to 100 GbE and then 400 GbE, only to have us all be brought down to earth quickly by an errant voltage level or mistimed message in a link-training sequence. Oops! Still, it’s good news for everyone that the faster we go, the faster we get more problems to solve.

About the Author

Patrick Mannion

Founder and Managing Director

Patrick Mannion is Founder and Managing Director of ClariTek, LLC, a high-tech editorial services company. After graduating with a National Diploma in Electronic Engineering from the Dundalk Institute of Technology, he worked for three years in the industry before starting a career in B2B media and events. He has been covering engineering, technology, design, and the electronics industry for 25 years. His various roles have included Components and Communications Editor at Electronic Design and, more recently, Brand Director for UBM's Electronics media, including EDN, EETimes, Embedded.com, and TechOnline.