DACs Overcome Signal-Source Hurdles in Quantum Research

Developing viable quantum computers is the focus of numerous public and private research efforts on a global scale. One of the more difficult challenges facing quantum researchers is sourcing the very precise signals needed to understand, trigger, and evaluate the behavior of the quantum bits (qubits). These challenges include scaling, synchronization, reliability and repeatability, and cost.

The types of atomic and subatomic particles used as qubits vary, as do the techniques for manipulating them. The particles can include ions, electron spins, photons, and nuclear/particle spins, which are controlled using lasers, electrical stimulation, magnetism, or ion traps.

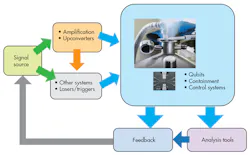

Though the techniques vary, the general setup for signal sourcing starts with a base signal source that generates the in-phase and quadrature (IQ) signal (Fig. 1). This is then passed along to other systems for amplification or attenuation, upconversion, and potentially to the trigger system of choice. The nature of quantum behavior requires that most of these experiments be done at very low temperatures, usually a few kelvins or less, creating signal-source challenges around pre-distortion and pre-compensation to account for various losses and cabling requirements.

1. Typical quantum-computing tests start with a base signal source, but signal sourcing is complicated by the need to perform most tests at only a few kelvins.
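As a rough illustration of what a base IQ source computes, the sketch below builds a Gaussian-envelope pulse as separate I and Q sample lists and scales it up to pre-compensate a known loss. The function name, the Gaussian shape, and the single flat `loss_db` figure are assumptions made for illustration; real pre-distortion is normally frequency dependent and derived from a measured cable response.

```python
import math

def gaussian_iq_pulse(n_samples, sigma_samples, phase_rad, loss_db=0.0):
    """Return (I, Q) sample lists for a Gaussian-envelope baseband pulse.

    loss_db is a hypothetical flat loss (cables, attenuators) that is
    pre-compensated by scaling the amplitude up before output.
    """
    gain = 10 ** (loss_db / 20.0)      # voltage gain that undoes loss_db
    center = (n_samples - 1) / 2.0     # place the envelope peak mid-pulse
    i_samples, q_samples = [], []
    for n in range(n_samples):
        envelope = math.exp(-0.5 * ((n - center) / sigma_samples) ** 2)
        # The pulse phase sets how the envelope splits between I and Q.
        i_samples.append(gain * envelope * math.cos(phase_rad))
        q_samples.append(gain * envelope * math.sin(phase_rad))
    return i_samples, q_samples

# Example: 101-sample pulse, 90-degree phase, 6 dB of assumed loss.
i_wave, q_wave = gaussian_iq_pulse(101, 15, math.pi / 2, loss_db=6.0)
peak = max(math.hypot(i, q) for i, q in zip(i_wave, q_wave))
```

Here `peak` equals the pre-compensation gain, so the pulse would arrive at unit amplitude if the loss really were a flat 6 dB.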

Adding to the complexity is the fact that these aren’t just one-way experiments. Typically, researchers want very fast feedback to the signal source to enable real-time iteration during experiments.

It’s worth noting this type of setup and the challenges faced aren’t unique to quantum-computing research. High-energy physics, molecular, and materials researchers, and biological and chemical researchers, often use similar setups and face similar challenges around sourcing signals. The most significant signal-source challenges include scaling, synchronization, and upconversion.

Scaling Challenges

Developing a single-qubit quantum computer is theoretically interesting, but it doesn’t provide the computing power that useful quantum algorithms require. To be viable, quantum computers must have many qubits working together. Therefore, current experiments must scale up. This is challenging from a research standpoint, since each qubit or experiment can require multiple independent signals, each with its own upconversion, pre-distortion, and conditioning.

Consequently, the various pieces of equipment needed for each qubit can get unwieldy, requiring lots of rack space and expensive instrumentation. Cabling alone can become a major nightmare, particularly if each cable must be precisely characterized to ensure that signals don’t distort or degrade as they go through the experiment. In fact, one of the bigger challenges is the sheer length of the cables needed and the latencies produced as the experiment scales up. It’s not just the signal source in use; it’s the cabling that goes with it as well.
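To put numbers on cable latency, the sketch below estimates one-way propagation delay from cable length. The velocity factor of 0.7 is an assumed value for illustration (it varies by cable type), and the helper names are hypothetical.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0

def cable_delay_ns(length_m, velocity_factor=0.7):
    """One-way propagation delay in nanoseconds.

    velocity_factor is the fraction of the speed of light at which the
    signal travels in the cable; 0.7 is an assumed value.
    """
    return length_m / (velocity_factor * SPEED_OF_LIGHT_M_PER_S) * 1e9

def length_mismatch_skew_ps(length_a_m, length_b_m, velocity_factor=0.7):
    """Timing skew in picoseconds caused by two unequal cable lengths."""
    delta_ns = cable_delay_ns(length_a_m, velocity_factor) - cable_delay_ns(
        length_b_m, velocity_factor
    )
    return abs(delta_ns) * 1e3

delay_per_meter_ns = cable_delay_ns(1.0)             # roughly 4.8 ns
skew_for_1cm_ps = length_mismatch_skew_ps(2.00, 2.01)  # roughly 48 ps
```

Under that assumption, each meter of cable adds close to 5 ns of latency, and a 1-cm length mismatch between two channels already costs tens of picoseconds of skew.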

Synchronization to the Femtosecond

Among all of the cabling and equipment, there also needs to be synchronization to the femtosecond. Signal-source-to-signal-source synchronization is critical to ensure accurate and repeatable results. There must be low channel-to-channel jitter across synchronized channels and markers, and digital channels and mixed signals must all trigger at exactly the intended instant. Being able to start, stop, and trigger with microsecond, picosecond, or even femtosecond accuracy across sources is critical to ensuring that microscale devices behave accurately.

As with cabling, synchronization is one of those considerations that people tend to ignore while they are setting up an experiment. Often this requires an external clock of some sort, and the signal sources themselves must hold the synchronization over long periods of time with only a few picoseconds of skew. In some ways, a good signal-source synchronization solution is more art than science, and invariably takes significant time to implement.
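One way to see why picoseconds matter is to convert timing skew into carrier phase error, using phase = 360° × frequency × skew. The sketch below does that conversion in both directions; the function names are illustrative, and the 5-GHz carrier and 10-ps skew are simply the figures quoted elsewhere in this article.

```python
def phase_error_deg(carrier_hz, skew_s):
    """Phase error in degrees that a timing skew causes at a carrier frequency."""
    return 360.0 * carrier_hz * skew_s

def max_skew_s(carrier_hz, phase_budget_deg):
    """Largest skew in seconds that stays within a phase-error budget."""
    return phase_budget_deg / (360.0 * carrier_hz)

error_at_10ps = phase_error_deg(5e9, 10e-12)   # 10 ps of skew at 5 GHz
error_at_100fs = phase_error_deg(5e9, 100e-15) # 100 fs of skew at 5 GHz
budget_1deg = max_skew_s(5e9, 1.0)             # skew allowed for 1 degree
```

At 5 GHz, 10 ps of skew corresponds to about 18 degrees of phase error, while holding the error to 1 degree requires skew below roughly 0.56 ps.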

Upconversion Challenges

2. A quantum research setup usually requires three pieces of equipment: a baseband generator, IQ modulator, and RF source—which can be costly.

Typically, a baseband generator such as a digital-to-analog converter (DAC) creates a signal that’s then mixed up to an IQ modulator and ultimately passed to an RF signal source (Fig. 2). In most setups, the baseband generator is an arbitrary waveform generator (AWG); the IQ modulator is a vector signal generator (VSG); and the RF source might be a microwave signal source. This means that three different pieces of equipment are required to get the signal of choice.
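The upconversion step can be modeled numerically with the standard quadrature-modulation relation s(t) = I(t)·cos(2πft) − Q(t)·sin(2πft). The sketch below is a sampled-data model of what the analog IQ modulator does, not a description of any particular instrument; the function name and example values are assumptions.

```python
import math

def iq_upconvert(i_samples, q_samples, carrier_hz, sample_rate_hz):
    """Model of IQ upconversion: s[n] = I[n]*cos(theta) - Q[n]*sin(theta)."""
    rf = []
    for n, (i, q) in enumerate(zip(i_samples, q_samples)):
        theta = 2.0 * math.pi * carrier_hz * n / sample_rate_hz
        rf.append(i * math.cos(theta) - q * math.sin(theta))
    return rf

# Constant I/Q values set the amplitude and phase of the carrier.
# Here the carrier sits at one quarter of the sample rate.
rf_wave = iq_upconvert([0.6] * 8, [0.8] * 8, carrier_hz=2.5e9, sample_rate_hz=10e9)
```

With I = 0.6 and Q = 0.8, the output is a unit-amplitude carrier whose phase is set by the I/Q ratio, which is how the baseband generator steers the RF signal.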

Getting Costs Under Control

Although the cost of VSGs or microwave generators isn’t trivial, it’s outweighed by the far more significant cost associated with generating baseband signals reliably and consistently, with sufficient performance. Using an automobile analogy, a DAC, which forms the heart of an AWG, can be thought of as the engine. By itself, the most powerful engine in the world isn’t going anywhere without a transmission, wheels, brakes, doors, and all of the other elements that make up a car. Similarly, a DAC needs input/output, memory, a user interface of some sort, and so on, before it can be used to generate signals.

One approach to obtaining a signal-generation engine is to purchase the complete “car.” A number of test and measurement vendors offer such solutions in the form of arbitrary waveform generators (AWGs), and in fact such signal generators are currently being used in many quantum-computing research efforts. Also, AWGs offer the potential for direct synthesis, which can eliminate the need for VSGs, and can produce sufficiently clean signals.

However, it’s not always an ideal fit. General-purpose signal generators aren’t necessarily optimized for quantum-computer research. The two main challenges are performance of the source and cost-per-channel of the system. With traditional AWG solutions, if 50 channels are suddenly required, the team could be looking at literally spending millions of dollars just on sources.

This expense encourages some quantum-computer researchers to build their own signal-source solutions using DACs and FPGA boards. This “kit car” approach, with an engine and the simplest platform possible, may have the required speed, but it may not handle well and may lack other features that are needed. It’s a science experiment, or one-off, rather than a repeatable, fine-tuned machine.

Another downside to this approach is time. It takes years to work through issues around stability and scalability. Figuring out how to get 20 to 30 DACs to talk to each other is a daunting problem, as is making sure the first source behaves exactly the same as the twentieth source. When building DAC and FPGA boards by hand, getting the same performance every time is frustratingly difficult. Scientists in the throes of research and “rolling their own” sources often overlook the importance of source consistency, which is critical if an experiment is to yield good results and data. Ultimately, the “kit car” approach can work, but is far from ideal.

New DACs, A New Hope

One of the obstacles to direct synthesis of signals for quantum-computing research has been DAC technology itself. Designing a DAC with both a high number of bits and a high sample rate, as well as good dynamic range, is a difficult undertaking. Because of these challenges, DACs were primarily used to generate low-frequency or baseband signals.
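The link between bit count and dynamic range can be estimated with the textbook formula for an ideal converter driven by a full-scale sine wave: SQNR ≈ 6.02·N + 1.76 dB. The sketch below evaluates it; it is a theoretical upper bound only, and real DACs fall short of it because of noise and distortion.

```python
import math

def ideal_sqnr_db(bits):
    """Ideal signal-to-quantization-noise ratio for a full-scale sine wave.

    Equivalent to the familiar 6.02*N + 1.76 dB rule of thumb.
    """
    return 20.0 * math.log10(2 ** bits) + 10.0 * math.log10(1.5)

sqnr_16_bit = ideal_sqnr_db(16)   # about 98 dB
sqnr_8_bit = ideal_sqnr_db(8)     # about 50 dB
```

Each extra bit adds about 6 dB of theoretical dynamic range, which is why pairing high resolution with a high sample rate is so demanding.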

However, DAC technology is now far enough along that it’s possible to generate high-frequency signals with high fidelity directly out of the DAC. There are still some limitations, and an upconverter or mixer may still be needed at times, but modern DACs can cover signal requirements for the ISM band and for LTE and 5G up to 5 GHz, for example.
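Two quick checks help decide whether a frequency is reachable by direct synthesis: the Nyquist limit (half the sample rate) and the sin(x)/x amplitude roll-off of an ideal zero-order-hold output. The sketch below computes both. It assumes a plain zero-order-hold DAC with no inverse-sinc correction or alternative output modes, which real devices may provide, and the function names are illustrative.

```python
import math

def nyquist_hz(sample_rate_hz):
    """Upper edge of the first Nyquist zone."""
    return sample_rate_hz / 2.0

def zoh_rolloff_db(freq_hz, sample_rate_hz):
    """Amplitude roll-off in dB of an ideal zero-order-hold DAC output."""
    x = math.pi * freq_hz / sample_rate_hz
    if x == 0.0:
        return 0.0   # no attenuation at DC
    return 20.0 * math.log10(abs(math.sin(x) / x))

limit = nyquist_hz(10e9)                  # 5 GHz for a 10-Gsample/s DAC
loss_at_edge = zoh_rolloff_db(5e9, 10e9)  # about -3.9 dB at the zone edge
loss_at_1ghz = zoh_rolloff_db(1e9, 10e9)  # about -0.14 dB
```

In this idealized model, a 10-Gsample/s DAC reaches 5 GHz in its first Nyquist zone, with the output amplitude down by roughly 4 dB at the top of the band.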

What’s also changing is that DACs are starting to build in signal processing and modulation, in addition to signal-generation functionalities. This is driven in part by the demand for lower-cost, smaller-sized devices for telecommunication and military systems.

Among the more useful of these digital-signal-processing blocks are complex modulation or IQ modulation, numerically controlled oscillators (NCOs), and conditioning functionalities, such as finite-impulse-response (FIR) filters and digital interpolation. This enables direct generation of complex RF signals in an efficient, compact, and more affordable way. In addition to quantum computing, this is important for many applications that require multiple coherent channels such as configuring phased-array antenna elements.
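An NCO is commonly built around a phase accumulator: on every sample clock a frequency tuning word is added to a fixed-width register, and the register value is mapped to sine and cosine outputs. The sketch below models that idea in software. The 32-bit accumulator width and the function names are assumptions, and `math.cos`/`math.sin` stand in for the lookup table a hardware block would typically use.

```python
import math

ACC_BITS = 32  # assumed accumulator width

def tuning_word(f_out_hz, sample_rate_hz, acc_bits=ACC_BITS):
    """Frequency tuning word that makes the accumulator wrap f_out times per second."""
    modulus = 1 << acc_bits
    return round(f_out_hz / sample_rate_hz * modulus) % modulus

def nco(ftw, n_samples, acc_bits=ACC_BITS):
    """Yield (cos, sin) pairs from a wrapping phase accumulator."""
    modulus = 1 << acc_bits
    phase = 0
    for _ in range(n_samples):
        theta = 2.0 * math.pi * phase / modulus
        yield math.cos(theta), math.sin(theta)
        phase = (phase + ftw) % modulus   # integer wrap, no drift

ftw = tuning_word(2.5e9, 10e9)           # carrier at one quarter of the sample rate
samples = list(nco(ftw, 4))
resolution_hz = 10e9 / (1 << ACC_BITS)   # smallest frequency step, about 2.3 Hz
```

Because the phase is an integer that wraps exactly, every channel loaded with the same tuning word stays phase coherent, which is the property multi-channel applications rely on.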

A New Era in Signal Generation

3. For quantum-computing researchers needing to constrain per-channel cost and save bench space, new signal generators such as the Tektronix AWG5200 take advantage of the latest DAC technology to offer 10-Gsample/s rates, 16-bit resolution, and up to eight channels in a single instrument.

The availability of new high-performance DACs that offer a mix of speed and resolution within a fully integrated product package is enabling the development of AWGs that can directly generate highly detailed RF/EW signals or the complex pulse trains used in advanced research. With 16-bit resolution, these instruments have sample rates of up to 10 Gsamples/s and as many as eight independent channels with better than 10-ps channel-to-channel skew (Fig. 3).
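For planning purposes, the channel counts mentioned in this article translate into instrument counts with simple ceiling division. The sketch below is only a back-of-the-envelope helper; the function name is hypothetical, and it ignores any channels reserved for markers or spares.

```python
import math

def instruments_needed(channels_required, channels_per_instrument=8):
    """Smallest number of instruments that provides the required channels."""
    if channels_required < 0 or channels_per_instrument <= 0:
        raise ValueError("channel counts must be non-negative and positive")
    return math.ceil(channels_required / channels_per_instrument)

units = instruments_needed(50)        # 50 channels on eight-channel units
spare_channels = units * 8 - 50       # channels left over
```

For the 50-channel example, seven eight-channel instruments are needed, leaving six channels spare.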

Signal generation is a significant challenge and major expense in quantum-computing applications when researchers need the ability to send dozens of synchronized signals to quantum-computer cores. New DAC technologies along with AWGs that take advantage of the latest offerings have the potential to address one of the more (but by no means the only) daunting challenges facing quantum researchers.


About the Author

Kip Pettigrew

Product and Marketing Manager, Sources and Analyzer Product Line

Kip Pettigrew, a product and marketing manager for the Tektronix Sources and Analyzer Product Line, is responsible for the Arbitrary Waveform Generator products along with associated software platforms. Before joining Tektronix, he worked at ESI as a Product Marketing Engineer and was a Principal Scientist & Engineer for 10 years specializing in micro (MEMS) fabrication, micro-fluidics, and heat transfer. Kip holds an MBA from the MIT Sloan program, a Master's in Engineering from UC Berkeley, and a Bachelor’s in Engineering from MIT.
