From the late 1980s to the early 2000s, the rule of the microwave instrumentation market was simple: “He who makes the best microwave transistors wins.” During this era, test vendors released instrumentation that pushed the envelope of characteristics like frequency range, noise floor, and linearity performance. In fact, some of the critical innovations during this era included advances in hybrid microcircuit technology, synthesizer tuning time, and phase noise.

As we have seen over the past decade—and will continue to see—the key area of improvement for RF signal generator and analyzer technology is wider instantaneous bandwidth. Not surprisingly, the evolution of the wireless industry had a substantial effect on the market requirements and hence the capabilities of modern RF signal generators and analyzers.

From 1G to 5G

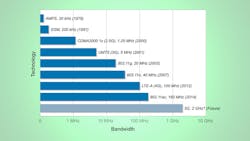

How has the wireless communications industry helped drive improvements in signal-analyzer technology? Perhaps that’s best answered by observing the rapid increase in channel bandwidth of modern wireless standards over time. For example, an AMPS communication channel (1G) consumed around 30 kHz of bandwidth for one-way communication (60 kHz for full duplex) (Fig. 1). Later, GSM (2G) would consume 200 kHz and UMTS channels (3G) would consume 5 MHz.

The need for increased channel bandwidth should not come as a surprise. The well-known Shannon-Hartley theorem shows that, at a fixed signal-to-noise ratio (SNR), channel capacity is a linear function of bandwidth (not accounting for multiple-antenna technology):

Capacity = Bandwidth × log2(1 + SNR)
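To make the linear relationship concrete, here is a minimal Python sketch of the formula above. The 20-dB SNR is an arbitrary assumption chosen only for illustration, and the function name is hypothetical. Real channels fall short of this theoretical ceiling.

```python
import math


def shannon_capacity_bps(bandwidth_hz: float, snr_db: float) -> float:
    """Theoretical capacity in bit/s: Bandwidth x log2(1 + SNR)."""
    snr_linear = 10 ** (snr_db / 10)  # convert dB to a linear power ratio
    return bandwidth_hz * math.log2(1 + snr_linear)


# Assumption for illustration only: the same 20-dB SNR on every channel.
ASSUMED_SNR_DB = 20.0

# One-way channel bandwidths quoted in the article.
channels_hz = {"AMPS (1G)": 30e3, "GSM (2G)": 200e3}

capacities = {
    name: shannon_capacity_bps(bw, ASSUMED_SNR_DB)
    for name, bw in channels_hz.items()
}

for name, capacity in capacities.items():
    print(f"{name}: {capacity / 1e3:.1f} kbit/s upper bound")
```

Because the SNR term is held constant, the ratio of the two results equals the ratio of the bandwidths (200 kHz / 30 kHz), which is the linear scaling the formula describes.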

Personally, I found one of the most interesting evolutions to be the widespread development of 802.11ac devices several years ago. For the first time in my memory, the wireless industry created a widely used standard, with channels up to 160 MHz wide, that was ahead of the capabilities of RF signal generators and analyzers. In response, many test and measurement vendors accelerated the development of wider-bandwidth instruments to support the bandwidth requirements of 802.11ac in a timely manner.

Looking forward, the next major milestone for RF test equipment will be the ability to test the 5th generation of cellular devices (5G). Researchers are actively prototyping 5G candidate technologies such as massive MIMO, GFDM, and millimeter-wave communications today (with NI products). Given how drastically these technologies will change the requirements of test equipment, a 2020 deployment date is not so far away. In fact, RF test equipment will likely require 2 GHz of bandwidth by 2017 or 2018 to support a 2020 deployment.

By any standard, achieving 2 GHz of instantaneous bandwidth would be a major milestone in the test and measurement industry. Although we at NI claim to have the widest-bandwidth (more than 750 MHz) high-performance VSA, there is obviously a need for bandwidth improvements in the near future.

Making it All Possible

You might be wondering how our industry will achieve 2 GHz of bandwidth. I think it’s important to keep Moore’s law in mind. As you know, Gordon E. Moore famously predicted in a 1965 paper that transistor density on an integrated circuit would double every year, a forecast he revised in 1975 to a doubling roughly every two years. If you have followed the computing industry for any length of time, you have undoubtedly heard many references to how Moore’s law describes the ever-increasing capability of modern computing technology. As an example, consider the performance improvements of FPGAs and CPUs over the last 15 years (Fig. 2).

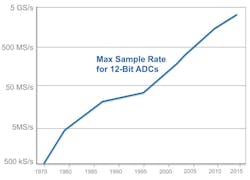
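As a rough illustration of what a two-year doubling period implies over a 15-year span, the following Python sketch computes the idealized growth factor. It is a back-of-the-envelope model, not a fit to the data in Fig. 2, and the function name is hypothetical.

```python
def doubling_growth_factor(years: float, doubling_period_years: float = 2.0) -> float:
    """Idealized growth factor after `years` of steady doubling."""
    return 2 ** (years / doubling_period_years)


# Assumption: a perfectly steady two-year doubling period over 15 years.
factor_15_years = doubling_growth_factor(15)
print(f"Idealized growth over 15 years: about {factor_15_years:.0f}x")
```

Under that assumption, the density grows by roughly two orders of magnitude over the period.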

However, CPU and FPGA performance aren’t the only beneficiaries of exponential improvements in transistor density on an IC. In fact, we have observed a similar trend in analog-to-digital converter (ADC) sample rate. Consider, for example, the maximum available sample rate of 12-bit ADC technology versus time (Fig. 3).

In 1975, a 12-bit ADC with 2-µs settling time (approximately 500 ksamples/s, though settling time and sample rate are not exactly equivalent) was considered state of the art. Today, the fastest-sampling 12-bit ADCs hit rates greater than 2 Gsamples/s, a feat that’s powering some of the widest-bandwidth signal analyzers in the industry.
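The arithmetic behind those figures can be sketched in a few lines of Python. This sketch assumes one conversion per settling interval (the approximation noted above) and applies the Nyquist limit, under which a real-sampled signal can occupy at most half the sample rate. The function names are hypothetical, and a practical analyzer's usable bandwidth is lower still once filter roll-off is accounted for.

```python
import math


def sample_rate_from_settling_time(settling_time_s: float) -> float:
    """Approximate sample rate, assuming one conversion per settling interval."""
    return 1.0 / settling_time_s


def nyquist_bandwidth_hz(sample_rate_sps: float) -> float:
    """Upper bound on alias-free bandwidth for real (non-I/Q) sampling."""
    return sample_rate_sps / 2.0


rate_1975 = sample_rate_from_settling_time(2e-6)  # 2-us settling time
rate_modern = 2e9                                 # 2 Gsamples/s figure from the article

print(f"1975 ADC:   ~{rate_1975 / 1e3:.0f} ksamples/s, "
      f"Nyquist limit {nyquist_bandwidth_hz(rate_1975) / 1e3:.0f} kHz")
print(f"Modern ADC: {rate_modern / 1e9:.0f} Gsamples/s, "
      f"Nyquist limit {nyquist_bandwidth_hz(rate_modern) / 1e9:.0f} GHz")

# Number of doublings needed to go from the 1975 rate to the modern rate.
doublings = math.log2(rate_modern / rate_1975)
print(f"Sample rate doublings: {doublings:.1f}")
```

The 4000x increase in sample rate corresponds to about 12 doublings, which is the exponential trend the comparison with Moore's law points to.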

Next-Generation RF Instruments

Designing the next generation of RF instruments will not be easy, and it certainly requires much more than a faster ADC. Although off-the-shelf ADCs (and digital-to-analog converters or DACs) help today’s RF instruments achieve wider bandwidths than ever before, they are only part of the puzzle. Beyond the ADC, engineers still have to solve challenges such as flatness and linearity. The solution will require continued innovation in analog design and signal processing.

Finally, a remaining challenge is the signal-processing horsepower required to convert intermediate frequency samples into something meaningful. Fortunately, innovations such as multicore CPUs and high-performance FPGAs make these challenges solvable. Here at NI, our belief is that the software powering tomorrow’s RF instrumentation is the most important innovation—and one we will continue to invest in far into the future.

About the Author

David Hall

Head of Semiconductor Marketing

David A. Hall is the head of semiconductor marketing at NI and is responsible for developing and executing go-to-market plans for the semiconductor industry. His job functions include managing the semiconductor test business, identifying industry trends, and educating customers on best semiconductor test practices. Hall’s areas of expertise include ATE architectures, RF measurement techniques, digital signal processing, and best measurement practices for mobile and wireless connectivity devices.

With nearly 15 years of experience at NI, Hall has served in multiple roles throughout his career including applications engineering, product management, and product marketing for automated test and RF instruments. He has also held management positions in product marketing, which focused on employee development and meeting business results across products and application areas. Hall is a known expert on subjects such as 5G, the Internet of Things (IoT), autonomous vehicles, and software-defined instrumentation. He holds a Bachelor’s with honors in computer engineering from Penn State University.
