NI Week 2015: Turning Big Data to Useful Data

The problems and opportunities brought about by exponentially increasing amounts of data were the themes of this year’s NI Week, specifically the acquisition, storage, access, and analysis of that data to produce actionable intelligence.

This year, National Instruments highlighted some solutions to the problems of what it has called and trademarked “Big Analog Data” as they relate to wireless, Industry 4.0, automotive test, the power grid, and the omnipresent, interwoven Internet of Things (IoT).

The theme was supported by hardware and software announcements and demonstrations in the areas of wireless production test, CompactDAQ hardware, DIAdem 2015 and DataFinder Server Edition 2015 data-management software, CompactRIO, FlexRIO and Single-Board RIO controllers, and, of course, updates to LabVIEW for 2015.

The keynotes also included a demonstration by Nokia of the first 5G millimeter-wave proof-of-concept system capable of 10-Gbit/s data rates. It was first demonstrated at the 5G Summit in Brooklyn in April, but was showcased at NIWeek because it used LabVIEW, LabVIEW FPGA, and PXI, which allowed the team to reuse much of the work it had done for earlier prototypes, the last of which had 1 GHz of bandwidth.

The current system has a latency of less than 1 ms, operates in the 73-GHz band with a bandwidth of 2 GHz, and will be shared with the 5G standards bodies.

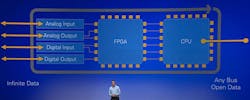

In the meantime, NI used the event to highlight trends and how it’s enabling designers to address the problems those trends present so they can be turned into opportunities. At the heart of its ability to do so is the core architecture of a flexible and reconfigurable I/O front end enabled by an FPGA and followed by a compute engine, or CPU (Fig. 1).

This architecture is foundational to the company’s hardware approach and has withstood the years. Its effectiveness was affirmed and underscored recently when Intel agreed to buy Altera for $16.7 billion.

The architecture is then wrapped in LabVIEW, an environment for creating custom applications that interact with real-world data. The essence of LabVIEW is that it simplifies the acquisition, analysis, and presentation of data, making it popular with anyone who doesn’t want, or need, to know the nitty-gritty of designing an embedded or data-acquisition system.

So what kinds of data are we talking about? NI calls it Big Analog Data, which NI president and co-founder James Truchard (“Dr. T”) defined in his keynote as “real time, in motion, at rest, and archived” (Fig. 2).

With connected test and measurement, monitoring systems and sensors proliferating, we’re at a point where the amount of incoming data is heading toward infinity, especially with the IoT taking off.

Eric Starkloff, executive vice president of global sales and marketing at NI, describes this connected data gathering and control as “instrumenting the world,” and says it puts us in a scenario similar to the Cambrian explosion of life diversity 500 million years ago.

The difference now is that it’s 22 exabytes of unstructured data running around, gathered over the past two decades using legacy NI systems and boards. Now, with connectivity and the prevalence of sensing and IoT, that data is rising exponentially.

“The opportunity is to instrument the entire world,” said Starkloff, “but when we connect to the physical world, it’s an infinite source of data [such as] temperature, audio signals, electromagnetic waves: we’re limited only by the number of channels and sample rates we choose to apply.”

The trick is to be able to manage and make use of all the data collected by these connected measurement systems and embedded devices. This effort starts at the point of data acquisition with smarter measurement systems that allow more processing to capture only the most critical data needed. This makes sense, as it will reduce the amount of data to be transmitted, stored and searched. The second part relates to smarter data management all the way through to the enterprise to get the most critical data in front of the right people to make data-driven decisions faster.
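One simple way to picture "capturing only the most critical data" at the point of acquisition is a deadband filter, which keeps a sample only when the value has changed meaningfully since the last sample that was kept. The Python sketch below is purely illustrative: the function name, the threshold, and the temperature readings are hypothetical and are not drawn from any NI product or API.

```python
from typing import Iterable, List, Tuple

Sample = Tuple[float, float]  # (time in seconds, measured value)


def deadband_filter(samples: Iterable[Sample], threshold: float) -> List[Sample]:
    """Keep a sample only if its value differs from the last kept
    sample by more than `threshold`. The first sample is always kept."""
    kept: List[Sample] = []
    for t, value in samples:
        if not kept or abs(value - kept[-1][1]) > threshold:
            kept.append((t, value))
    return kept


# Hypothetical temperature readings, one per second: (seconds, degrees C)
readings = [(0, 20.0), (1, 20.1), (2, 20.05), (3, 21.5), (4, 21.6), (5, 25.0)]

# Only changes larger than 1 degree are worth transmitting and storing.
critical = deadband_filter(readings, threshold=1.0)
print(critical)  # [(0, 20.0), (3, 21.5), (5, 25.0)]
```

Here six readings shrink to three, so there is less to transmit, store, and search. A real system would likely use richer triggers, but the principle of deciding at the edge what is worth keeping is the same.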

LabVIEW is inherently a programming language for data flow and helps at the point of acquisition, but there’s more to the solution of Big Analog Data.

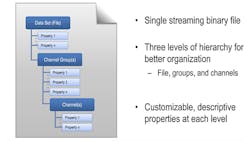

NI’s Technical Data Management System (TDMS), with DIAdem and DataFinder Server Edition, came to the show with updates for 2015 (Fig. 3). Jaguar/Range Rover gave a solid demonstration of how good data management can speed test, save money, and improve the final product and customer satisfaction.

During the demo, the company described how it was generating over 500 Gbytes of data per day in research and development, but it was repeating tests because it couldn’t find the test results. Data management was a manual process with multiple tools with specific scripting. The end result was that it was really only able to analyze 10% of the data.

To quantify the impact of this “lost data” problem, NI polled its 3500 conference attendees using the NIWeek App. It turned out that the time spent by EEs just looking for measurement data was worth an estimated $17 million, so it’s a very real problem.

As part of NI’s TDMS, DIAdem is software specifically designed to help quickly locate, inspect, analyze, and report on measurement data. For its part, DataFinder crawls through data files and finds descriptive information and ultimately builds a database solution out of it, with no interaction required (Fig. 3).

The DataFinder index contains descriptive properties to find files quickly. These descriptive properties, or metadata, are customizable at setup and are entered by the user. It’s this metadata attached to the files, combined with the TDMS binary open file format, which makes data queries faster—with more-useful results.

A year ago, Jaguar/Range Rover turned to DIAdem and DataFinder to automate the test process, and now it’s analyzing 95% of the data and doing tests 20 times faster.

For example, files for the 2016 Range Rover Evoque were searched for test data associated with vehicle speed tests at 155 km/h (95 mph). Up to 400,000 files were searched and the results presented in one second. Further queries and batch processing of thousands of files are possible.
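To see why an index of descriptive properties makes this kind of search fast, consider the rough Python sketch below. It is not DataFinder's implementation or API. The file names, property names, and helper functions are all hypothetical, chosen only to show the general idea of an inverted index, where each property/value pair points directly at the files that carry it.

```python
from collections import defaultdict
from typing import Dict, List, Set, Tuple

# Hypothetical file records: file name -> descriptive properties (metadata).
FILES: Dict[str, Dict[str, object]] = {
    "run_001.tdms": {"vehicle": "Evoque", "test": "speed", "speed_kph": 155},
    "run_002.tdms": {"vehicle": "Evoque", "test": "braking", "speed_kph": 100},
    "run_003.tdms": {"vehicle": "Evoque", "test": "speed", "speed_kph": 120},
}

Index = Dict[Tuple[str, object], Set[str]]


def build_index(files: Dict[str, Dict[str, object]]) -> Index:
    """Map each (property, value) pair to the set of files that carry it."""
    index: Index = defaultdict(set)
    for name, props in files.items():
        for key, value in props.items():
            index[(key, value)].add(name)
    return index


def query(index: Index, **criteria: object) -> List[str]:
    """Return the files that match every property=value criterion."""
    matches = [index.get((key, value), set()) for key, value in criteria.items()]
    return sorted(set.intersection(*matches)) if matches else []


index = build_index(FILES)  # built once, ahead of time
print(query(index, test="speed", speed_kph=155))  # ['run_001.tdms']
```

The index is built once, so each query is a handful of dictionary lookups and a set intersection rather than a scan through the contents of every file.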

While the speed of search was a key benefit, according to the presenter, the system’s benefits also extended to lowering the number of tests (as data could now be found), thereby lowering cost. In addition, the final product ended up being better because the team was detecting more issues up front, before passing the final product to the customer, so customer satisfaction also went up.

CompactDAQ was the NI hardware used for the Range Rover Evoque test system, and NI used the demo to announce its latest additions to the family with new 4- and 8-slot controllers featuring quad-core processing, and a new 14-slot USB 3.0 CompactDAQ chassis, along with 2015 editions of DIAdem and DataFinder Server Edition.

The controllers are intended to address the point-of-acquisition intelligence required to capture the most necessary data. They feature the 1.91-GHz quad-core Intel Atom E3845, more than 60 sensor-specific I/O modules with integrated signal conditioning, and 32 Gbytes of nonvolatile storage and removable SD storage to create smarter data logging and embedded monitoring applications.

The 14 slots allow more of those I/O modules to be integrated into a single system, while USB 3.0 means it can stream measurement and sensor data at up to 250 Mbytes/s to meet current and future needs.

DIAdem 2015 is being released as 64-bit software, so users can load and analyze more data, and NI added improved search capabilities to globally find specific data sets for analysis, as well as new data visualization and analysis functions. DataFinder Server Edition 2015 adds multistep querying that can be sent out to a global federation of servers to find the data users need to analyze in seconds.

3D and a Consumer Market of 1

The Range Rover team was making data-driven decisions, faster, which played into Dr. T’s opening remarks about the consumer-first paradigm, where data can be reused over time to predict reliability and product quality for everything from phones to bicycles to improve the user experience.

In the case of bikes, Truchard points to the combination of stored and analyzed data with 3D printing and automated manufacturing putting control in the hands of consumers, essentially creating a “market of ‘1.’” For example, Truchard envisions a scenario with 3D-manufactured bicycles where each consumer can specify their own design and manufacturers need to have sufficient modeling and test data to ensure the final product will work, before it’s made. “So you have to use previous test data to predict how new designs will work.”

IoT and Wireless Test

When it comes to IoT, there’s lots of speculation about whether or not it’s hype, but as Starkloff said, it doesn’t really matter: “it is self-fulfilling,” much like Moore’s Law. We’re making it happen every day by adding intelligence and connectivity into everything. “Billions of dollars are being invested to make that goal possible,” he said, with the investment spanning wireless, processors, FPGAs, sensors, and software. The question now is how to take advantage of it.

From NI’s point of view, it has spent $250,000 of research and development on LabVIEW, PXI and associated software, as a platform to help you do so. The first Vector Signal Transceiver (VST) emerged in 2012 and became the highest-selling PXI instrument of all time.

However, as Starkloff said, “Test hardware must adapt at the speed of software just to be able to keep up.” In testing smarter devices for IoT, it must also be more robust and ready to deploy to the factory floor.

Last year, NI announced the Semiconductor Test System (STS) to lower the cost of semiconductor test on the production line. This year it did likewise for wireless, with the introduction of the Wireless Test System (WTS) for rapid device-level test (Fig. 4).

Based on the same PXI hardware and LabVIEW and TestStand software as STS, WTS adds a rugged enclosure to the VST and PXI chassis, while combining the wireless test system instrument software with advanced software for testing cellular and connectivity protocols. Supported protocols range from LTE Advanced to 802.11ac to Bluetooth Low Energy.

So armed, WTS is designed for manufacturing test of wireless LAN (WLAN) access points, cellular handsets, infotainment systems and other multi-standard devices that include cellular, wireless connectivity and navigation standards. Advanced switching topologies make it ready for multisite and MIMO test, and its software supports chipsets from top vendors such as Qualcomm and Broadcom.

A demo at NIWeek by HARMAN/Becker Automotive Systems GmbH showed how a user could write a test sequence for four WLAN devices (802.11a/g/n/ac) in under one minute using the WTS’s drag-and-drop features.

The fact that it was HARMAN’s automotive group doing the demo indicates that the WTS is already enabling the connected car with Wi-Fi-cellular connectivity and eCall, an emergency call feature that transmits coordinates for a faster response time.

According to HARMAN, the WTS not only reduced test time by 30% versus a single-device approach, but also takes up less space.

The IoT benefit was emphasized by Starkloff, who pointed out that the system can address wireless test needs for 80% of IoT devices. “There’s no better platform on the planet for testing smarter IoT.”

Industry 4.0

The criticality and usefulness of IoT is nowhere as clear as it is on the factory floor, where designers are adding intelligence to an existing system or making a measurement system more connected.

Consumer applications, such as the wave of home-automation devices now flooding the market, are very much a “see what sticks” approach to innovation, whereas IoT applied to the factory floor can provide real return on investment in terms of reduced downtime, increased reliability, and greater efficiency.

Jeff Kodosky, NI co-founder and NI Business and Technology Fellow (and Father of LabVIEW), is a strong believer in the “tangible economic benefits” of IoT in industry. He believes it will have greater socio-economic impact and will dwarf the consumer side.

However, Kodosky sees a qualitative and quantitative change coming, with more data acquired at higher sampling rates, with higher precision, and with more precise time synchronization. Using cosmic microwave background analysis as an example, Kodosky explained how higher spatial resolution translates to more data and more understanding, so there’s always pressure to measure faster and at higher resolution for more insight.

Another example he gave involved analyzing the vibration of rotating machinery. Where such vibration can indicate out-of-balance conditions, by sampling at higher precision and higher rates, bearing and gear-mesh issues can also be detected.
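The sampling-rate side of this argument can be illustrated with a small, self-contained Python sketch. Everything in it is an assumption made for illustration: a hypothetical machine with a strong 30-Hz imbalance tone and a weaker 870-Hz gear-mesh tone, sampled for one second at 200 samples/s and again at 4000 samples/s. The `amplitude_at` helper is a plain single-frequency DFT, not a method attributed to Kodosky or NI.

```python
import math
from typing import List


def amplitude_at(signal: List[float], sample_rate: float, freq: float) -> float:
    """Estimate the amplitude of the `freq` component by correlating the
    signal with a cosine/sine pair (a single-bin discrete Fourier transform)."""
    n = len(signal)
    re = sum(x * math.cos(2 * math.pi * freq * i / sample_rate) for i, x in enumerate(signal))
    im = sum(x * math.sin(2 * math.pi * freq * i / sample_rate) for i, x in enumerate(signal))
    return 2 * math.hypot(re, im) / n


def vibration(t: float) -> float:
    """Hypothetical machine: 30-Hz imbalance plus a weaker 870-Hz gear-mesh tone."""
    return 1.0 * math.sin(2 * math.pi * 30 * t) + 0.2 * math.sin(2 * math.pi * 870 * t)


def sample(rate: int, seconds: float = 1.0) -> List[float]:
    """Sample the vibration signal at `rate` samples per second."""
    return [vibration(i / rate) for i in range(int(rate * seconds))]


slow = sample(200)   # resolves frequencies only below 100 Hz
fast = sample(4000)  # resolves frequencies below 2000 Hz

print(round(amplitude_at(slow, 200, 30), 3))    # imbalance is visible at the low rate
print(round(amplitude_at(slow, 200, 70), 3))    # gear-mesh tone aliases to a false 70 Hz
print(round(amplitude_at(fast, 4000, 870), 3))  # gear-mesh tone resolved at its true frequency
print(round(amplitude_at(fast, 4000, 70), 3))   # no real 70-Hz content exists
```

At the low rate the imbalance shows up correctly, but the gear-mesh tone folds down to a misleading 70-Hz reading. Only the faster acquisition places it at its true frequency, which is the kind of extra insight higher sampling rates buy.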

Kodosky’s point was that this oncoming tsunami of data needs to be handled in a hierarchical fashion, first at the edge, with sensor fusion and the closing of high-speed control loops. “Using FPGAs with LabVIEW is ideal for defining behavior at this level,” he said.

Moving on up the hierarchy, LabVIEW Real-Time can perform supervisory control and concentrate data. As it progresses, it becomes a system of nodes, then a system of systems, and then moves to IT for global analysis and trend identification. “LabVIEW, DIAdem, and InsightCM are all valuable tools here,” said Kodosky.

To enable Industry 4.0, NI used the event to announce its next-generation control systems, this time optimized for the IoT. These new systems include a quad-core Atom-based CompactRIO controller with a Kintex-7 FPGA, a FlexRIO controller with a Kintex-7 FPGA and dual-core ARM processor, and a single-board RIO controller (see photo at top of page).

IoT Enables Power Steering—for Grid

On Day 3 of the event, Mario Paolone, associate professor at the Swiss Federal Institute of Technology of Lausanne, elaborated on the use of IoT-based monitoring to enable a form of “power steering” on a national power grid whereby “packets of energy” can be distributed as needed.

To enable this, the true state of a grid needs to be known at frame rates or windows of 50 or 60 Hz. It currently takes at least a minute to get a good status view. This type of monitoring would require optimal control planning, involving “terabytes of data in quasi-real time,” according to Paolone.

However, if accomplished, and you know the system state, “it’s possible to steer energy in an optimal fashion using a TCP/IP-like protocol that can route energy packets like we do data packets,” he said. “It lets you use power as a single system.” Interesting prospect.

About the Author

Patrick Mannion

Founder and Managing Director

Patrick Mannion is Founder and Managing Director of ClariTek, LLC, a high-tech editorial services company. After graduating with a National Diploma in Electronic Engineering from the Dundalk Institute of Technology, he worked for three years in the industry before starting a career in b2b media and events. He has been covering the engineering, technology, design, and the electronics industry for 25 years. His various roles included Components and Communications Editor at Electronic Design and more recently Brand Director for UBM's Electronics media, including EDN, EETimes, Embedded.com, and TechOnline.