Get Ready for a Wealth of Embedded Design Hardware and Software Options for 2018

There has never been a more exciting, confusing, and challenging time to develop embedded products. The Internet of Things (IoT) is a given, but tools ranging from machine learning (ML) and persistent storage (PS) to mesh networking are changing how developers look at a problem. Approaches that were impractical a few years ago are becoming readily available. That said, these paths remain fraught with peril for the uneducated. Likewise, adopting the latest hardware and software should not mean ignoring other issues like privacy and security. Insecure systems can render the best-intentioned device or service untenable.

Changing User Interfaces

Science and engineering fact continues to chase science fiction. Conversing with computers is no longer the exclusive domain of movies like “2001: A Space Odyssey.”

A number of unrelated technologies have come together to make this possible, including the internet, the IoT, artificial intelligence (AI), and machine learning, plus improvements in audio processing, MEMS microphones, and so on. The result is Amazon Alexa and its competitors like Google Home, Apple HomePod, and even Microsoft’s Cortana.

Amazon has taken the lead, working with partners to deliver hardware development kits that work with Alexa (Fig. 1). Like most IoT solutions, these kits require collaboration among a number of partners. The noise-reduction hardware and software are differentiating factors. Machine learning is used at this level, in addition to the natural language processing done in the cloud.

1. Need to create an Amazon Echo Dot (a)? Development kits like those from Cirrus Logic (b) and XMOS (c) can make that happen.

The challenge for companies is deciding which walled garden to work with. It is possible to build products that support multiple IoT frameworks, or multiple voice control systems like Alexa, but products will normally support only one.

Voice interaction is not the only user interface that has benefitted from the use of artificial intelligence and other technology advancements. Stylus or pen-based input has improved on the hardware side and is now paired with tablets and giant smartphones. Editing of handwritten script, versus typed text, is possible using tools like MyScript’s Interactive Ink and its Nebo app. The Digital Stationery Consortium (DSC) is also working on a universal digital ink interchange format. DSC’s format is based on Wacom’s WILL technology.

Voice and script are affecting two other user interface technologies: augmented reality (AR) and virtual reality (VR). Advances on the hardware side will improve the user experience, but it is the software frameworks, integration, and advancements that will make AR and VR stand out this year. I expect AR to lead in non-gaming applications.

Security, Accelerators, and Embedded FPGAs

“Essentially, every electronic application now reaches out to the internet, and there are so many people with nothing better to do than to hack into someone else’s good work to be mean or for profit, that it’s imperative that designers build an adequate level of security into their products today,” notes Tom Starnes, an analyst at Objective Analysis. “Unfortunately, security is a utility, the quality of which is hard to quantify, and isn’t as fun as pimping up a User Interface. However, as engineers it is our duty to protect users of our creations from unseen danger.”

Not a day goes by without a security breach being brought to light. PCs provided billions of targets to hackers, and smartphones easily surpassed that number. IoT devices will exceed smartphone deployments by orders of magnitude.

On the plus side, hardware vendors are focusing on including security hardware and firmware in standard products. Encryption hardware and secure key storage are more common now and are starting to be standard options within a microprocessor family. Look to dual, asymmetric core approaches to provide both improved security and better power management.

Cortex-M23 and -M33 microcontrollers based on Arm’s Armv8-M architecture will be available this year. Arm’s Platform Security Architecture (PSA) is a software complement to this. Unfortunately, Intel’s Management Engine (ME) woes highlight the problems that can occur with embedded hardware and firmware.

Higher-end embedded systems will continue to see growth in the use of hypervisors and virtual machines. Embedded development tends to follow advances pushed by the enterprise where this technology has been used for decades.

Hardware acceleration to support neural networks, the backbone of the machine learning trend, will move from evaluation to production for many more developers. GPGPUs are being used in this space, as is hardware specifically designed for AI acceleration. Even DSPs, like Cadence’s Vision C5 DSP, are being tuned for AI.

Intel’s Movidius Myriad 2 VPU (vision processing unit) is already being used in DJI drones to handle collision avoidance and recognize user control gestures (Fig. 2). It even works with the Raspberry Pi.

2. The Intel Movidius Myriad 2 VPU (vision processing unit) is available on a USB 3.0 stick that works with a range of systems including the Raspberry Pi.

Developers will need to become more familiar with AI to determine the hardware and software requirements for training and for deployment, since training typically requires more computation. There are many types of neural networks and different models. Some applications can benefit by simply using libraries or runtimes that take advantage of neural networks, while others may require much more complex programming, configuration, and training.

Also keep an eye out for embedded FPGAs and RISC-V. Embedded FPGAs (eFPGAs) are available from a number of vendors, and silicon foundries are supporting them. The eFPGAs have significantly reduced overhead compared to adding an FPGA chip to a design and provide a flexible way to incorporate proprietary designs.

RISC-V remains a minute fraction of deployments compared to Arm, which dominates the space, but its use is growing substantially. There are now a number of companies providing RISC-V IP, and it runs on most FPGA platforms, including those supported by Microsemi’s Mi-V environment. There are advantages to keeping it simple, stupid (KISS).

Persistent Storage

A change is in the wind for non-volatile memory (NVM) technologies as silicon foundries add support for storage technologies like Resistive RAM (ReRAM), Conductive Bridging RAM, and MRAM. This means microprocessors and system-on-chip (SoC) solutions can utilize non-flash NVM technologies that offer advantages such as higher speed and, in some cases, essentially unlimited write lifetimes. Texas Instruments’ MSP430 microcontroller family already has FRAM versions that offer significant advantages over flash-based solutions.

The Storage Networking Industry Association’s (SNIA) NVM Programming Model (NPM) will start having more impact as support for the persistent memory model becomes more widely available in operating systems like Linux and Windows. Of course, having non-volatile DIMMs (NVDIMMs) will be key to its success.

“In 2018 Intel is due to introduce the Optane DIMM based on the company’s 3D XPoint Memory,” says Jim Handy, an analyst at Objective Analysis. “This should be a game-changer for any system based on DIMMs, since it will support much bigger memory sizes than DRAM at a lower price. It’s nonvolatile, too, but there is no off-the-shelf software that supports that today, so this attribute will only be useful in systems built around proprietary software. Even so, we will see big changes in computer memory configurations starting next year.”

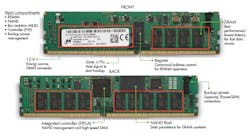

A number of vendors provide a range of NVDIMM solutions, such as Micron’s NVDIMM (Fig. 3). It combines DRAM and flash memory with an FPGA controller and an external supercap. Diablo Technologies pioneered an all-flash NVDIMM device.

3. Micron’s NVDIMM includes an FPGA controller, DRAM, and flash memory with a link to an external supercap. The controller copies the DRAM contents to flash when power is lost and copies them back when power is restored.

The biggest challenge is on the software side. SNIA’s NPM includes support for direct application access of non-volatile, in-memory storage (Fig. 4), unlike the conventional block-oriented disk storage or NVMe storage located on the PCI Express bus.

4. Storage Networking Industry Association’s (SNIA) programming model includes support for direct application access to in-memory NVM storage.
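
The direct-access idea can be roughly sketched in a few lines of Python. This is only an illustration, not SNIA's API: an ordinary memory-mapped file stands in for a persistent-memory region (on a real system the file would typically sit on a DAX-mounted filesystem backed by an NVDIMM), and the names `open_region`, `bump_counter`, `run_once`, and `REGION_SIZE` are hypothetical. The point is that the application updates a value in place with loads and stores rather than reading and writing whole blocks.

```python
import mmap
import os
import struct
import tempfile

REGION_SIZE = 4096  # hypothetical region size for this sketch (one page)
COUNTER_FMT = "<Q"  # 64-bit little-endian counter kept at offset 0


def open_region(path):
    """Map a file into the address space and return (file, mapping).

    Assumption: an ordinary file stands in for persistent memory here.
    """
    new = not os.path.exists(path)
    f = open(path, "w+b" if new else "r+b")
    if new:
        f.truncate(REGION_SIZE)  # a new region starts out zero-filled
    return f, mmap.mmap(f.fileno(), REGION_SIZE)


def bump_counter(region):
    """Increment the counter in place using byte-addressable access."""
    (value,) = struct.unpack_from(COUNTER_FMT, region, 0)
    struct.pack_into(COUNTER_FMT, region, 0, value + 1)
    region.flush()  # push the update to the backing store
    return value + 1


def run_once(path):
    """Simulate one program run: map the region, update it, unmap it."""
    f, region = open_region(path)
    try:
        return bump_counter(region)
    finally:
        region.close()
        f.close()


if __name__ == "__main__":
    demo_path = os.path.join(tempfile.mkdtemp(), "pmem_region.bin")
    print(run_once(demo_path))  # first run
    print(run_once(demo_path))  # second run sees the value left by the first
```

Each call to `run_once` behaves like a separate program run, and the counter survives between them without any explicit read or write of a block. With real persistent memory, the flush step would map to whatever durability mechanism the platform provides.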

Also keep an eye out for the ruler form factor designed to handle NVMe storage (Fig. 5). It is complementary to the M.2 and U.2 form factors being used for NVMe storage. The system is designed to pack as much storage as possible into a 1U form factor.

5. The ruler form factor is a hot-swappable, non-volatile memory system with an NVMe interface.

Wild West Wireless

Wireless connectivity has never been simple, and the number of options continues to grow. Even the venerable 802.11 standard comes in a/b/g/n/ac variations. 802.11n/ac brought wider use of the 5-GHz band, and multiple-input, multiple-output (MIMO) has now become ubiquitous. The latest trend is 802.11s mesh networking, which continues to grow in importance.

Of course, wireless requires security and it is not immune from being compromised. The 2017 KRACK problem with WPA2 is only the tip of the iceberg. On the plus side, most attacks and problems can be fixed by a software upgrade, assuming the vendor provides it and the users install it.

Mesh networks continue to garner support throughout the wireless spectrum. The Bluetooth mesh standard has been established and is becoming more common as stacks become available. The Bluetooth mesh standard is independent of the new Bluetooth 5.0 standard, so this functionality may find its way into older devices through software updates. Bluetooth 5.0 employs a random frequency-hopping scheme that decreases the chance of conflicts with a neighboring BLE device. It also works on longer-range connections with a tradeoff in speed.

The IoT craze has fueled interest in a range of wireless solutions with long-range, lower-power, point-to-point technologies like LoRaWAN, SigFox, and NB-IoT. They have a range on the order of a kilometer and can penetrate barriers (such as walls) with a tradeoff in speed. Of the three, SigFox has been around the longest, but LoRaWAN and NB-IoT are just taking off and have significant support. One advantage over mesh technologies is the lower software overhead allowing these protocols to be used on very lightweight microcontrollers. Each approach offers advantages and disadvantages that developers will need to take into account when choosing a technology.

These long-range, low-speed technologies complement mesh technologies like ZigBee, Z-Wave, and Bluetooth mesh that offer higher speeds but more limited range. Mesh technologies target applications with a large number of local devices, providing coverage over an area larger than any individual device can address.

About the Author

William G. Wong

Senior Content Director - Electronic Design and Microwaves & RF

I am Editor of Electronic Design focusing on embedded, software, and systems. As Senior Content Director, I also manage Microwaves & RF and I work with a great team of editors to provide engineers, programmers, developers and technical managers with interesting and useful articles and videos on a regular basis. Check out our free newsletters to see the latest content.

I earned a Bachelor of Electrical Engineering at the Georgia Institute of Technology and a Masters in Computer Science from Rutgers University. I still do a bit of programming using everything from C and C++ to Rust and Ada/SPARK. I do a bit of PHP programming for Drupal websites. I have posted a few Drupal modules.

I still get hands-on time with software and electronic hardware. Some of this can be found in our Kit Close-Up video series. You can also see me on many of our TechXchange Talk videos. I am interested in a range of projects from robotics to artificial intelligence.