Intel’s Latest Products Zero in on Data-Centric Computing

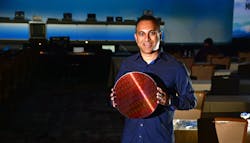

Navin Shenoy, Intel executive vice president and general manager of the Data Center Group, displayed a wafer containing Intel Xeon processors during the keynote at Intel’s data-centric product announcement. The latest crop of Xeons was one of many new products and technologies revealed at the event, which also included new networking and storage announcements (Fig. 1).

1. Intel’s new Xeon processors incorporate network and AI acceleration, as well as support for Optane DC (foreground).

The Xeon Cascade Lake family ranges from the top-end Xeon Platinum 9200 processor, with 56 cores and 12 DDR4 memory channels, down to the 8-core Xeon D-1600 system-on-chip (SoC) designed for edge computing and compact servers. The top-end chip has a whopping 400-W TDP, but the 32-core Xeon 9221 is only 250 W. The latest processors target the cloud, allowing up to eight chips to be connected together in a glueless configuration. In addition, network-optimized Xeon SKUs target network-function-virtualization (NFV) infrastructures.

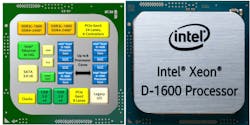

A dual-core D-1600 SoC requires only 27 W (Fig. 2). This family incorporates 10G Ethernet, USB, SATA, and PCI Express (PCIe) support. Incorporating two DDR4 memory controllers with ECC support, the SoC targets embedded systems, base stations, and network devices such as firewalls.

2. The Xeon D-1600 integrates peripherals like 10G Ethernet, SATA, and USB on-chip.

The Xeon systems have been augmented to handle networking and artificial-intelligence (AI) chores with Intel Deep Learning Boost (Intel DL Boost) technology. The AVX-512 Vector Neural Network Instructions (VNNI) are designed to accelerate machine-learning (ML) applications that employ deep neural networks. Intel intends to challenge GPGPUs like those from NVIDIA when it comes to inference jobs that have smaller batch sizes.
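To make the VNNI idea concrete, here is a minimal scalar sketch in Python of the kind of operation these instructions fuse: in each 32-bit lane, four unsigned 8-bit values are multiplied by four signed 8-bit values, and the four products are added to a 32-bit accumulator. This is an emulation for illustration only, not Intel's implementation. The function names (`dot4_accumulate`, `quantized_dot`) and the zero-padding scheme are assumptions made for this example, and the wrap-around behavior models the non-saturating form of the instruction.

```python
LANES = 16  # a 512-bit register holds sixteen 32-bit accumulators


def wrap_int32(value):
    """Wrap an arbitrary Python int into the signed 32-bit range."""
    return (value + 2**31) % 2**32 - 2**31


def dot4_accumulate(acc, u8_quad, s8_quad):
    """Emulate one 32-bit lane: acc += sum of four u8 * s8 products."""
    if len(u8_quad) != 4 or len(s8_quad) != 4:
        raise ValueError("each lane consumes exactly four byte pairs")
    if any(not 0 <= a <= 255 for a in u8_quad):
        raise ValueError("first operand must hold unsigned 8-bit values")
    if any(not -128 <= w <= 127 for w in s8_quad):
        raise ValueError("second operand must hold signed 8-bit values")
    return wrap_int32(acc + sum(a * w for a, w in zip(u8_quad, s8_quad)))


def quantized_dot(activations, weights):
    """Dot product of u8 activations and s8 weights, 64 byte pairs per step.

    Hypothetical helper: inputs are zero-padded to a whole number of
    64-element blocks, which does not change the result.
    """
    if len(activations) != len(weights):
        raise ValueError("inputs must have the same length")
    pad = (-len(activations)) % (4 * LANES)
    acts = list(activations) + [0] * pad
    wts = list(weights) + [0] * pad

    accumulators = [0] * LANES
    for base in range(0, len(acts), 4 * LANES):
        for lane in range(LANES):
            start = base + 4 * lane
            accumulators[lane] = dot4_accumulate(
                accumulators[lane],
                acts[start:start + 4],
                wts[start:start + 4],
            )
    # Horizontal reduction of the sixteen lane accumulators.
    return wrap_int32(sum(accumulators))
```

In hardware, the inner loop over lanes is what a single vector instruction would perform at once. That is why 8-bit quantized inference is a natural fit for this style of acceleration.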

Intel DL Boost is optimized to accelerate AI inference workloads like image recognition, object detection, and image segmentation within data-center, enterprise, and intelligent edge-computing environments. Intel’s OpenVINO framework can take advantage of DL Boost in addition to other Intel platforms like Movidius and Nervana, as well as conventional CPUs. The framework supports models developed using popular platforms like TensorFlow, PyTorch, Caffe, MXNet, and PaddlePaddle.

The new chips provide additional hardware-based protections against Spectre and Meltdown attacks. They also have a unique Enhanced Privacy ID (EPID) that can be used by Intel’s Secure Device Onboard (SDO) for deployment of IoT devices.

Another key data-centric announcement was the Optane DC. The new DIMMs plug into the memory channel and provide persistent memory, which major operating systems like Linux and Windows Server now support. Optane DC provides higher-capacity, non-volatile memory that retains data when a system is powered down, which also allows for faster boot-up.
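As a rough illustration of the persistent-memory programming model, the Python sketch below maps a file into the address space, updates a counter in place, and flushes the change so that it survives a restart. It assumes the file would live on a persistent-memory-aware (DAX-mounted) filesystem; on an ordinary disk the same code runs but goes through the page cache. The function name `bump_persistent_counter`, the 4-kB region size, and the counter layout are hypothetical choices for this example. Production code would more likely use a dedicated library such as PMDK.

```python
import mmap
import os
import struct

REGION_SIZE = 4096              # size of the mapped region (assumed for this sketch)
COUNTER = struct.Struct("<Q")   # one little-endian 64-bit counter at offset 0


def bump_persistent_counter(path):
    """Increment a counter stored in a memory-mapped file; return the new value."""
    fd = os.open(path, os.O_RDWR | os.O_CREAT, 0o600)
    try:
        # Grow a new (or too-small) file so the whole region can be mapped.
        if os.fstat(fd).st_size < REGION_SIZE:
            os.ftruncate(fd, REGION_SIZE)
        with mmap.mmap(fd, REGION_SIZE) as region:
            (value,) = COUNTER.unpack_from(region, 0)
            value += 1
            # The update is an ordinary in-memory store into the mapping.
            COUNTER.pack_into(region, 0, value)
            # Ask the OS to make the store durable before returning.
            region.flush()
            return value
    finally:
        os.close(fd)
```

Calling the function repeatedly with the same path, even from separate runs of the program, returns 1, 2, 3, and so on, because the state lives in the mapped region rather than in the process.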

Optane DC is available in a number of form factors, such as SSDs, in addition to the memory-channel-based DIMMs (Fig. 3).

3. Intel’s Optane DC comes in a variety of form factors from SSDs (shown) to DIMMs.

Intel will also be delivering flash memory in the removable, compact NVMe ruler form factor (Fig. 4). The storage devices employ 32-layer QLC NAND flash. The rulers are available in short EDSFF form factors as well as full-length versions. Intel flash memory also comes in M.2, U.2, and PCIe board form factors.

4. The ruler form factor provides removable, dense QLC NAND flash storage for servers.

On another front, the company introduced the Ethernet 800 Series adapter (Fig. 5), which supports speeds up to 100 Gb/s. The latest innovation includes Application Device Queues (ADQ) technology. ADQ is designed to increase application response-time predictability while reducing application latency and improving throughput.

5. The Intel Ethernet 800 Series adapter supports the new Application Device Queues (ADQ) technology.

Finally, Intel included news about its 10-nm Agilex FPGA, which we covered earlier. The Agilex F-Series will be available first with DDR4 and 56-Gb/s transceiver support and an optional quad-core, Arm Cortex-A53 SoC (Fig. 6). The I-Series and M-Series will be available later with DDR5 support and Compute Express Link (CXL) support. CXL, which will be found in future Xeon processors, provides a low-latency, peer-to-peer, cache-coherent environment for hardware accelerators like FPGAs.

6. Agilex 10-nm FPGAs will kick off with the F-Series, which has DDR4 and 56-Gb/s transceiver support.

Overall, it’s an impressive array of new products designed to support data-centric computing, including ML applications from the cloud to the edge.

About the Author

William G. Wong

Senior Content Director - Electronic Design and Microwaves & RF

I am Editor of Electronic Design focusing on embedded, software, and systems. As Senior Content Director, I also manage Microwaves & RF and I work with a great team of editors to provide engineers, programmers, developers and technical managers with interesting and useful articles and videos on a regular basis. Check out our free newsletters to see the latest content.

You can send press releases for new products for possible coverage on the website. I am also interested in receiving contributed articles for publishing on our website. Use our template and send to me along with a signed release form.

Check out my blog, AltEmbedded on Electronic Design, as well as my latest articles on this site.

I earned a Bachelor of Electrical Engineering at the Georgia Institute of Technology and a Masters in Computer Science from Rutgers University. I still do a bit of programming using everything from C and C++ to Rust and Ada/SPARK. I do a bit of PHP programming for Drupal websites. I have posted a few Drupal modules.

I still get hands-on with software and electronic hardware. Some of this can be found in our Kit Close-Up video series. You can also see me on many of our TechXchange Talk videos. I am interested in a range of projects from robotics to artificial intelligence.
