The Expanding Role of FPGAs in Edge AI

What you'll learn:

- How FPGAs deliver higher performance per watt in physical AI systems through reconfigurable hardware that can be fine-tuned for different AI models.

- How the hardware-level protections in FPGAs — including secure boot, encrypted bitstreams, and root-of-trust architectures — strengthen security.

- How new AI-focused tools are accelerating FPGA development, enabling long-term adaptability to evolving AI models and industry standards.

As AI workloads continue to move out of the cloud, edge hardware must evolve to keep up. Edge AI requires rapid inferencing, robust security, and strict power efficiency, along with the flexibility to adapt to the latest advances in AI models, often within tightly constrained physical and thermal envelopes. In this space, field-programmable gate arrays (FPGAs) are filling gaps that traditional CPUs and GPUs tend to struggle with.

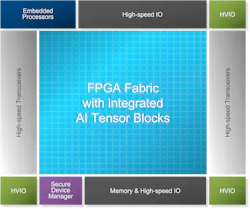

Today’s FPGAs are being designed to address the unique realities of deploying AI in the physical world. The latest chips integrate high-performance DSP blocks, large on-chip memory, AI accelerator engines, security hardware, and high-bandwidth I/O. The result is massive parallelism and low-latency compute tailored to the needs of neural networks. Importantly, FPGAs feature a reconfigurable architecture that can be adapted to the unique needs of a specific AI model, delivering strong performance per watt for AI inference at the edge.

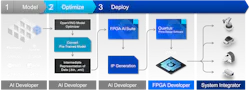

The FPGA AI design flow has evolved, too. Featuring automated quantization, optimized operator libraries, high-level synthesis, and seamless integration with frameworks like TensorFlow and PyTorch, FPGA tool chains dramatically reduce development complexity.

FPGAs Have the Flexibility to Adapt to Fast-Evolving AI

AI models are advancing at an extraordinary pace. Frameworks, operators, and quantization schemes evolve frequently, and fixed-function accelerators can quickly become obsolete when standards and requirements shift.

FPGAs, however, are reconfigurable by design. Their hardware can be programmed to support new data types, emerging network architectures, or proprietary inference engines without the need for new silicon. Numeric formats show how quickly requirements move: DeepSeek, for example, has incorporated FP8 into its native training flows, while companies such as Intel and NVIDIA have more recently extended support to FP4 and INT4 for inference.
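
To make the data-type point concrete, here is a minimal Python sketch of symmetric INT4 quantization, one common way of mapping floating-point weights onto a 4-bit integer grid. It is an illustration only: the function names `quantize_int4` and `dequantize` and the sample weights are hypothetical, and an FPGA toolchain would apply its own quantization scheme.

```python
def quantize_int4(weights):
    """Symmetric per-tensor quantization to signed 4-bit codes in [-8, 7]."""
    max_abs = max(abs(w) for w in weights)
    # Map the largest magnitude onto code 7; guard against an all-zero tensor.
    scale = max_abs / 7 if max_abs > 0 else 1.0
    codes = [max(-8, min(7, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate floating-point values from the integer codes."""
    return [c * scale for c in codes]


# Hypothetical weights, chosen only for illustration.
weights = [0.70, -0.30, 0.12, 0.0]
codes, scale = quantize_int4(weights)
restored = dequantize(codes, scale)
print("codes:", codes)
print("scale:", scale)
print("restored:", restored)
```

Each restored value differs from the original by at most about half a quantization step (`scale / 2`). That bounded error is the accuracy cost paid for narrower arithmetic and smaller on-chip storage.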

This adaptability allows developers to extend product lifecycles while ensuring compatibility with evolving AI workloads. For instance, as neural networks shift to transformer-based architectures, FPGA logic blocks can be re-synthesized to support the required matrix multiplications and attention mechanisms, even in-situ for equipment already deployed in the field.
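
For readers less familiar with transformers, the sketch below shows in plain Python why attention reduces largely to matrix multiplications. It follows the standard scaled dot-product formulation, softmax(QKᵀ/√d_k)V. It is a software reference model, not FPGA code, and the helper names and toy matrices are hypothetical.

```python
import math


def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def transpose(m):
    return [list(col) for col in zip(*m)]


def softmax(row):
    """Numerically stable softmax over one row of scores."""
    peak = max(row)
    exps = [math.exp(v - peak) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def attention(q, k, v):
    """Scaled dot-product attention: softmax(Q K^T / sqrt(d_k)) V."""
    d_k = len(k[0])
    scores = matmul(q, transpose(k))  # first matrix multiplication
    weights = [softmax([s / math.sqrt(d_k) for s in row]) for row in scores]
    return matmul(weights, v), weights  # second matrix multiplication


# Toy 2-token, 2-dimensional example (values are illustrative only).
q = [[1.0, 0.0], [0.0, 1.0]]
k = [[1.0, 0.0], [0.0, 1.0]]
v = [[1.0, 2.0], [3.0, 4.0]]
output, weights = attention(q, k, v)
print("attention weights:", weights)
print("output:", output)
```

The two `matmul` calls dominate the arithmetic, and they are the kind of regular multiply-accumulate work that suits parallel DSP blocks. The softmax step is the less regular part that a hardware design has to handle separately.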

Such hardware reconfigurability reduces the time and cost associated with re-deploying edge devices when standards evolve. This is critical in industrial, automotive, and telecommunications markets, which value long-term reliability and upgradability.

Real-Time Decisions: The Deterministic Latency of FPGAs

Edge AI often operates in time-critical environments such as autonomous vehicles, robotics, medical imaging, and smart manufacturing. In these applications, predictability is as important as raw speed. CPUs and GPUs, while fast at large batch sizes, introduce non-deterministic latency due to complex instruction pipelines, cache hierarchies, and task-scheduling overheads.

FPGAs, in contrast, offer deterministic behavior. Their data paths and control logic are implemented directly in hardware, ensuring consistent, cycle-accurate latency regardless of workload variations. This deterministic performance enables real-time inferencing and control loops that can be trusted in mission-critical systems.

For example, in a robotic arm guided by AI vision, the guarantee of consistent latency ensures that movement and perception remain synchronized, which is key for safety and accuracy.
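
The difference between average and worst-case latency can be sketched with a few lines of Python. For a fixed-depth hardware pipeline, latency is the cycle count divided by the clock frequency. All numbers below (cycle count, clock rate, deadline, and the processor latency samples) are made-up illustration values, not measurements of any device.

```python
def pipeline_latency_us(pipeline_cycles, clock_mhz):
    """Latency of a fixed-depth pipeline: cycles / (cycles per microsecond)."""
    return pipeline_cycles / clock_mhz


def meets_deadline(latency_us, deadline_us):
    """A control loop is safe only if its worst-case latency fits the deadline."""
    return latency_us <= deadline_us


DEADLINE_US = 100.0  # hypothetical control-loop budget

# Hypothetical fixed pipeline: 20,000 cycles at 250 MHz on every inference.
fpga_latency = pipeline_latency_us(20_000, 250)

# Hypothetical processor samples: usually fast, occasionally delayed
# (for example by a cache miss or a scheduler preemption).
cpu_samples_us = [50.0, 55.0, 60.0, 180.0]
cpu_mean = sum(cpu_samples_us) / len(cpu_samples_us)
cpu_worst = max(cpu_samples_us)

print("fixed pipeline latency (us):", fpga_latency)
print("pipeline meets deadline:", meets_deadline(fpga_latency, DEADLINE_US))
print("processor mean meets deadline:", meets_deadline(cpu_mean, DEADLINE_US))
print("processor worst case meets deadline:", meets_deadline(cpu_worst, DEADLINE_US))
```

In this toy scenario the processor's average latency fits the budget while its worst case does not. A real-time system has to be sized for the worst case, which is why a fixed, known latency simplifies the design.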

How FPGAs Enhance Power Efficiency and Edge Scalability

Power consumption is a defining constraint in edge environments, where thermal budgets and battery life limit compute capacity. FPGAs provide an ideal balance of performance and energy efficiency because they execute computations in parallel through dedicated hardware pipelines instead of relying on sequential instruction execution.

By tailoring the hardware fabric to match the workload exactly, FPGAs avoid the wasted energy inherent in general-purpose processors. Moreover, techniques like partial reconfiguration (updating parts of the hardware on demand) and hardware-level pruning (removing unnecessary processing) can further optimize power usage. As a result, FPGA-based accelerators can deliver efficiency on the order of tera-operations per second per watt (TOPS/W), enabling AI inference even in fanless or ruggedized edge systems.
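
The arithmetic behind a performance-per-watt figure is straightforward, and the following Python sketch works through it. It counts one multiply-accumulate (MAC) as two operations, a common convention. The MAC count, clock rate, power draw, and model size are hypothetical, and the pruning estimate assumes the hardware actually skips the pruned operations.

```python
def tops_per_watt(macs_per_cycle, clock_mhz, power_watts):
    """Peak efficiency in tera-operations per second per watt (TOPS/W)."""
    ops_per_second = 2 * macs_per_cycle * clock_mhz * 1e6  # 1 MAC = 2 ops
    return ops_per_second / 1e12 / power_watts


def energy_per_inference_mj(ops_per_inference, tops_w, pruned_fraction=0.0):
    """Energy per inference in millijoules, given efficiency in TOPS/W.

    pruned_fraction is the share of operations removed by pruning; the
    estimate assumes those operations cost no energy at all.
    """
    remaining_ops = ops_per_inference * (1.0 - pruned_fraction)
    joules = remaining_ops / (tops_w * 1e12)
    return joules * 1e3


# Hypothetical accelerator: 4,096 MACs per cycle at 500 MHz, drawing 4 W.
efficiency = tops_per_watt(4096, 500, 4.0)

# Hypothetical model needing 8 billion operations per inference.
dense_mj = energy_per_inference_mj(8e9, efficiency)
pruned_mj = energy_per_inference_mj(8e9, efficiency, pruned_fraction=0.5)

print("efficiency (TOPS/W):", efficiency)
print("energy per inference, dense (mJ):", dense_mj)
print("energy per inference, 50% pruned (mJ):", pruned_mj)
```

These are peak figures. Sustained efficiency depends on how fully the workload keeps the MAC array busy and on memory traffic, which this sketch deliberately ignores.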

Bolstering Security and Trust at the Hardware Level with FPGAs

Security is a growing concern as edge devices process sensitive data outside of centralized, managed data centers. FPGAs provide multiple layers of hardware-level protection. Their configuration bitstreams can be encrypted and authenticated, preventing tampering or reverse engineering. Many FPGAs also integrate secure-boot and hardware root-of-trust (RoT) mechanisms as well as physical unclonable functions (PUFs) that help establish a trusted computing base from power-up.
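
The idea of authenticating a bitstream before loading it can be sketched in Python with the standard-library `hmac` and `hashlib` modules. This is a conceptual illustration only: real devices perform the check in hardened logic using vendor-specific schemes and usually encrypt the bitstream as well. The function names, the demo key, and the byte strings are all hypothetical.

```python
import hashlib
import hmac


def sign_bitstream(bitstream: bytes, key: bytes) -> bytes:
    """Compute an HMAC-SHA256 tag over the configuration image."""
    return hmac.new(key, bitstream, hashlib.sha256).digest()


def verify_bitstream(bitstream: bytes, tag: bytes, key: bytes) -> bool:
    """Return True only if the tag matches; uses a constant-time comparison."""
    expected = hmac.new(key, bitstream, hashlib.sha256).digest()
    return hmac.compare_digest(expected, tag)


# Demo values only; a real key would live in protected on-chip storage.
key = b"demo-key-not-for-production"
bitstream = b"\x00\x01\x02 example configuration data"
tag = sign_bitstream(bitstream, key)

tampered = bitstream + b"\xff"
print("original accepted:", verify_bitstream(bitstream, tag, key))
print("tampered accepted:", verify_bitstream(tampered, tag, key))
```

A loader built this way would refuse to configure the device whenever verification fails, so a modified image never reaches the fabric.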

Furthermore, FPGA fabric can be programmed to perform inline encryption, manage keys securely, or implement cryptographic protocols directly in hardware. This flexibility allows security features to evolve alongside new standards, including post-quantum cryptography (PQC), ensuring long-term protection in dynamic deployment environments such as 5G edge nodes or defense systems.

The Future of FPGA-Driven Edge AI

As edge intelligence scales across sectors, FPGAs are set to play a pivotal role.

Their unique combination of reconfigurability, deterministic latency, power efficiency, and hardware-level security aligns perfectly with the demands of AI workloads. While the programming model for FPGAs has traditionally been complex, modern high-level synthesis tools, AI-optimized IP cores, and frameworks such as Vitis AI and OpenVINO have lowered the barrier for developers. These advances make it easier to deploy machine-learning models on FPGAs with minimal hardware design expertise.

Ultimately, FPGAs bridge the gap between flexibility and performance, empowering developers to deliver adaptable, secure, and efficient edge systems that can keep up with the accelerating evolution of AI.

About the Author

Mike Fitton

Vice President and General Manager of Vertical Markets, Altera Corp.

Mike Fitton is Vice President and General Manager of Vertical Markets in Altera’s Market Development Group, where he leads business development and segment architect teams across cloud & enterprise, telecommunications, test, medical, consumer, and broadcast. In this role, he drives market strategy and segment success by enabling customers and partners across Altera’s served markets in close partnership with the company’s sales and product teams.
